Keeping Medical AI Healthy and Trustworthy: A Review of Detection and Correction Methods for System Degradation

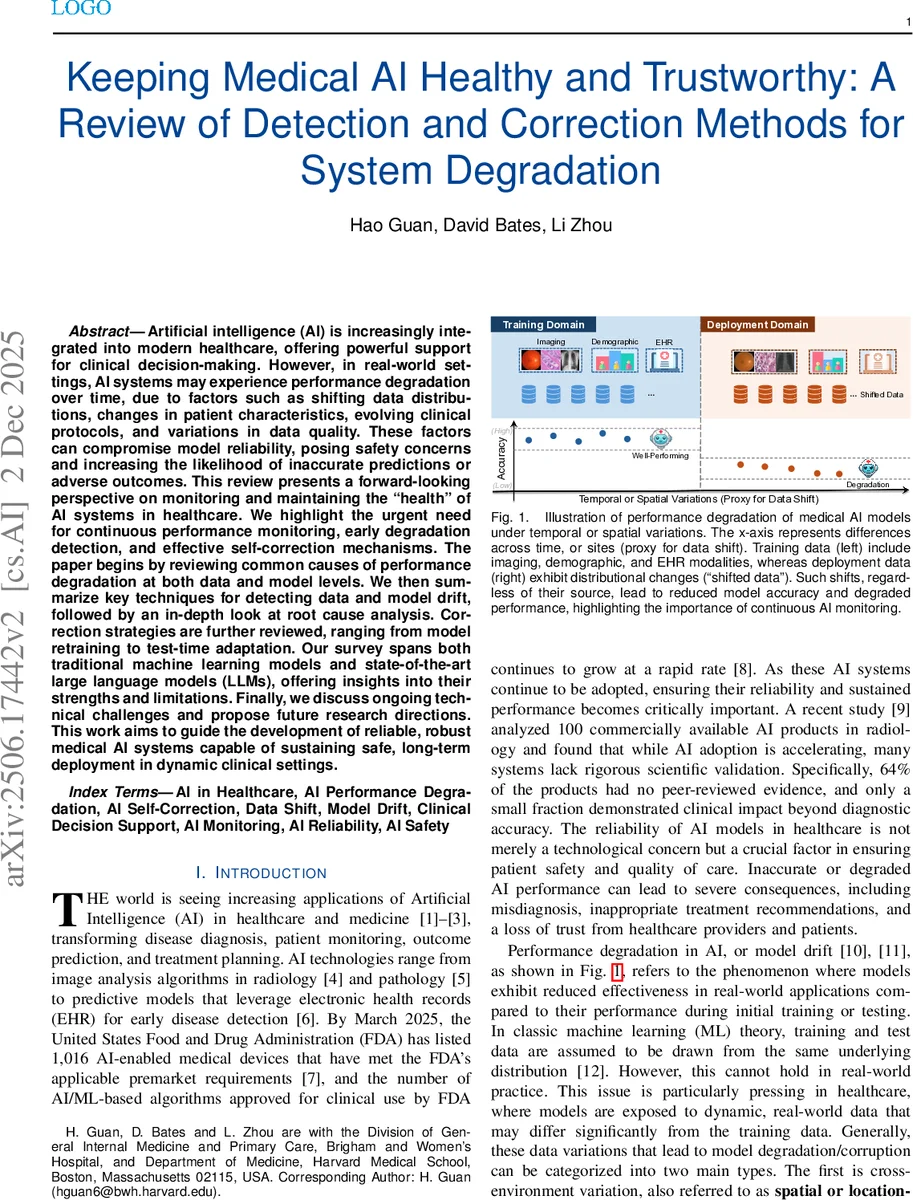

Artificial intelligence (AI) is increasingly integrated into modern healthcare, offering powerful support for clinical decision-making. However, in real-world settings, AI systems may experience performance degradation over time, due to factors such as shifting data distributions, changes in patient characteristics, evolving clinical protocols, and variations in data quality. These factors can compromise model reliability, posing safety concerns and increasing the likelihood of inaccurate predictions or adverse outcomes. This review presents a forward-looking perspective on monitoring and maintaining the “health” of AI systems in healthcare. We highlight the urgent need for continuous performance monitoring, early degradation detection, and effective self-correction mechanisms. The paper begins by reviewing common causes of performance degradation at both data and model levels. We then summarize key techniques for detecting data and model drift, followed by an in-depth look at root cause analysis. Correction strategies are further reviewed, ranging from model retraining to test-time adaptation. Our survey spans both traditional machine learning models and state-of-the-art large language models (LLMs), offering insights into their strengths and limitations. Finally, we discuss ongoing technical challenges and propose future research directions. This work aims to guide the development of reliable, robust medical AI systems capable of sustaining safe, long-term deployment in dynamic clinical settings.

💡 Research Summary

This paper provides a comprehensive review of performance degradation in medical artificial intelligence (AI) systems and proposes a unified Detection‑Diagnosis‑Correction (DDC) framework to keep such systems healthy and trustworthy over long‑term deployment. The authors begin by highlighting the rapid proliferation of AI‑enabled medical devices—over a thousand FDA‑cleared products by early 2025—and the growing evidence that many of these tools lack rigorous post‑deployment validation. They argue that in dynamic clinical environments, AI models inevitably encounter both spatial (cross‑institutional) and temporal (within‑institutional) variations that lead to data drift (changes in input distribution) and model drift (changes in the model’s behavior or parameters).

The paper formally distinguishes reliability (consistent performance across time, populations, and settings) from robustness (stability under perturbations) and defines two complementary monitoring tasks: data monitoring (detecting shifts in the input space) and model monitoring (detecting shifts in performance or output distribution). Mathematical formulations are provided for hypothesis testing of distributional equality, with explicit thresholds (ε) and window sizes (w) that control sensitivity and false‑alarm rates.

A central contribution is the DDC cycle. In the Detection stage, the authors categorize data shift into covariate shift, label shift, and concept shift, and survey a wide range of detection techniques: statistical two‑sample tests (MMD, KL/JS divergence, Wasserstein distance), change‑point detectors (CUSUM, Page‑Hinkley), and unsupervised deep‑learning approaches (autoencoders, variational Bayes, clustering). Both static (between‑site) and streaming (temporal) scenarios are covered.

The Diagnosis stage focuses on root‑cause analysis. The review links drift signals to potential sources—external data changes versus internal model changes—using causal inference frameworks and explainable AI methods such as SHAP, LIME, and Integrated Gradients to track feature importance drift or representation shift.

In the Correction stage, three major families of remediation are discussed. (1) Batch retraining: periodic collection of labeled data and full model re‑training, the traditional approach for handling gradual drift. (2) Test‑time adaptation: domain adaptation techniques (adversarial DA, CORAL), parameter‑efficient fine‑tuning methods (LoRA, adapters), and prompt engineering for large language models (LLMs) that allow rapid, label‑free adjustments. (3) Continuous learning and calibration: online or incremental learning algorithms, memory‑based rehearsal, Bayesian uncertainty estimation (Monte‑Carlo dropout, deep ensembles), and post‑hoc calibration (Platt scaling, isotonic regression) to restore calibrated probabilities. The authors emphasize that LLMs, despite their impressive capabilities, also suffer temporal degradation—e.g., GPT‑4’s answer changes in 25 % of radiology exam questions after a few months—necessitating ongoing evaluation and adaptation.

Regulatory alignment is addressed by mapping the DDC components to the FDA’s Predetermined Change Control Plan (PCCP) and ISO/IEC 42001 audit‑trail requirements. Detection corresponds to “Data Management Practices” and “Performance Evaluation,” Diagnosis to “Impact Assessment” and “Traceability,” and Correction to “Retraining Practices” and “Update Procedures,” thereby providing a compliance‑ready lifecycle management blueprint.

The paper concludes by identifying open challenges: accurate root‑cause identification when ground‑truth labels are scarce, balancing false‑positive and false‑negative alerts in real‑time monitoring, and the computational cost of post‑correction validation. Future research directions include multimodal drift detection, privacy‑preserving federated monitoring, human‑in‑the‑loop decision support for correction actions, and automated regulatory reporting pipelines. Overall, the review offers a detailed taxonomy, practical guidelines, and a regulatory‑aligned roadmap for sustaining safe, reliable, and trustworthy medical AI systems in ever‑changing clinical settings.

Comments & Academic Discussion

Loading comments...

Leave a Comment