a person wearing decorative forehead jewelry, speaking" "a person speaking with burning trees in the background"

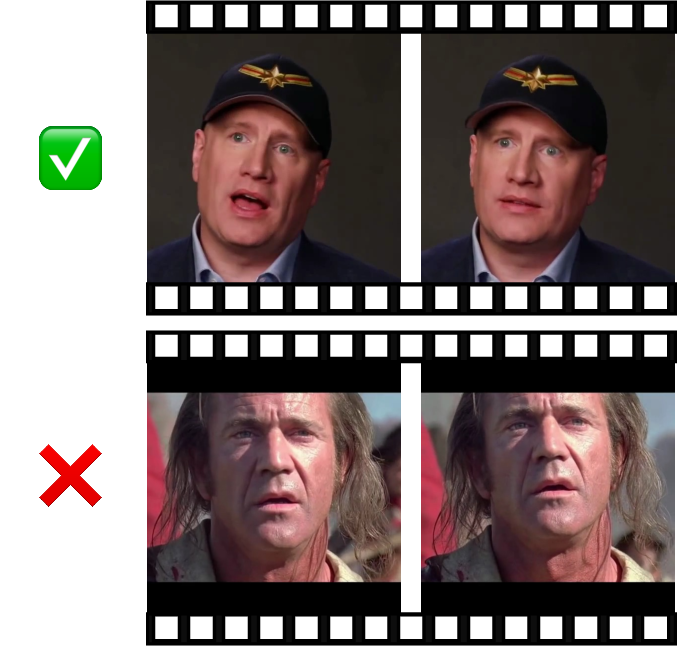

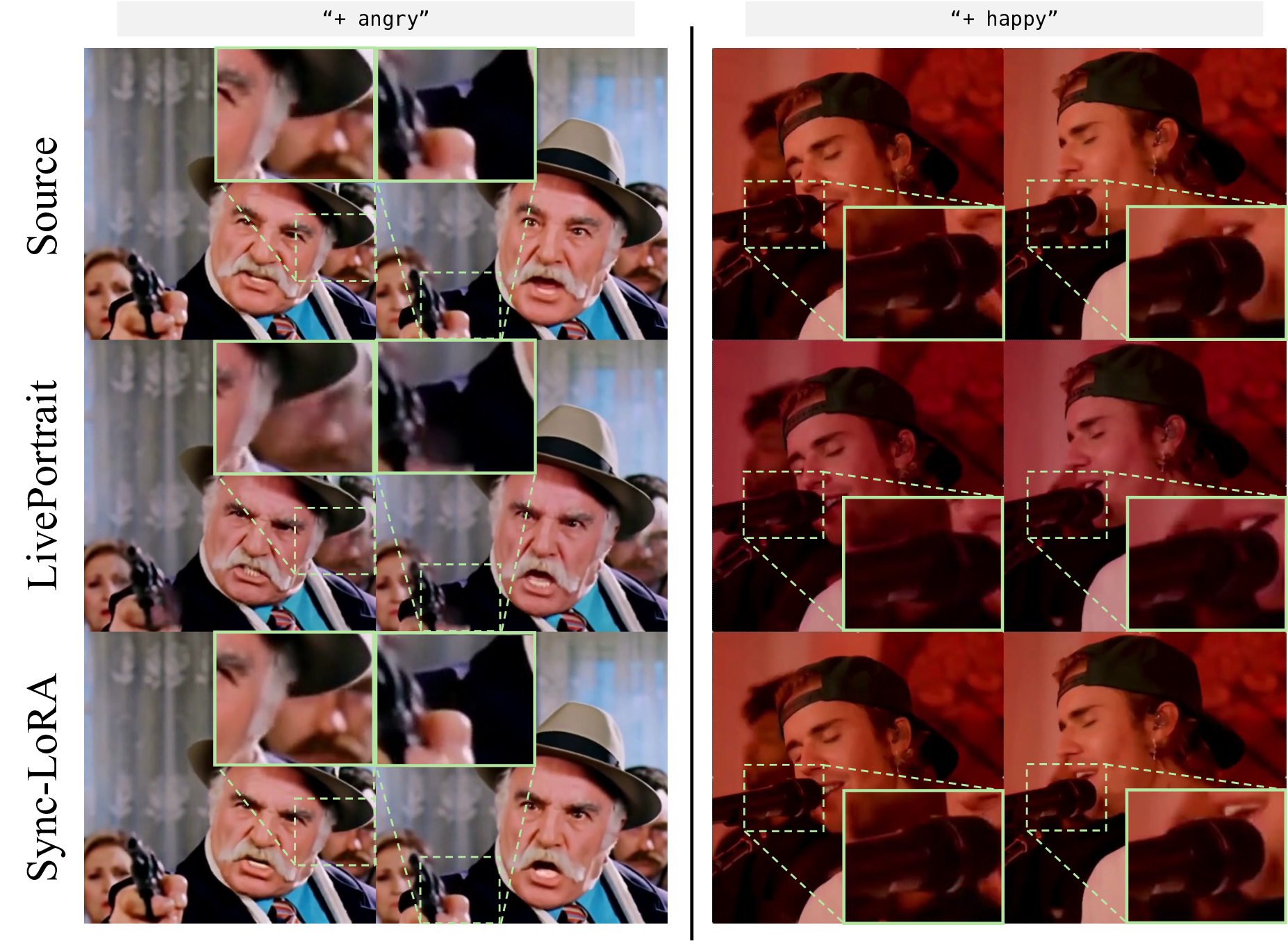

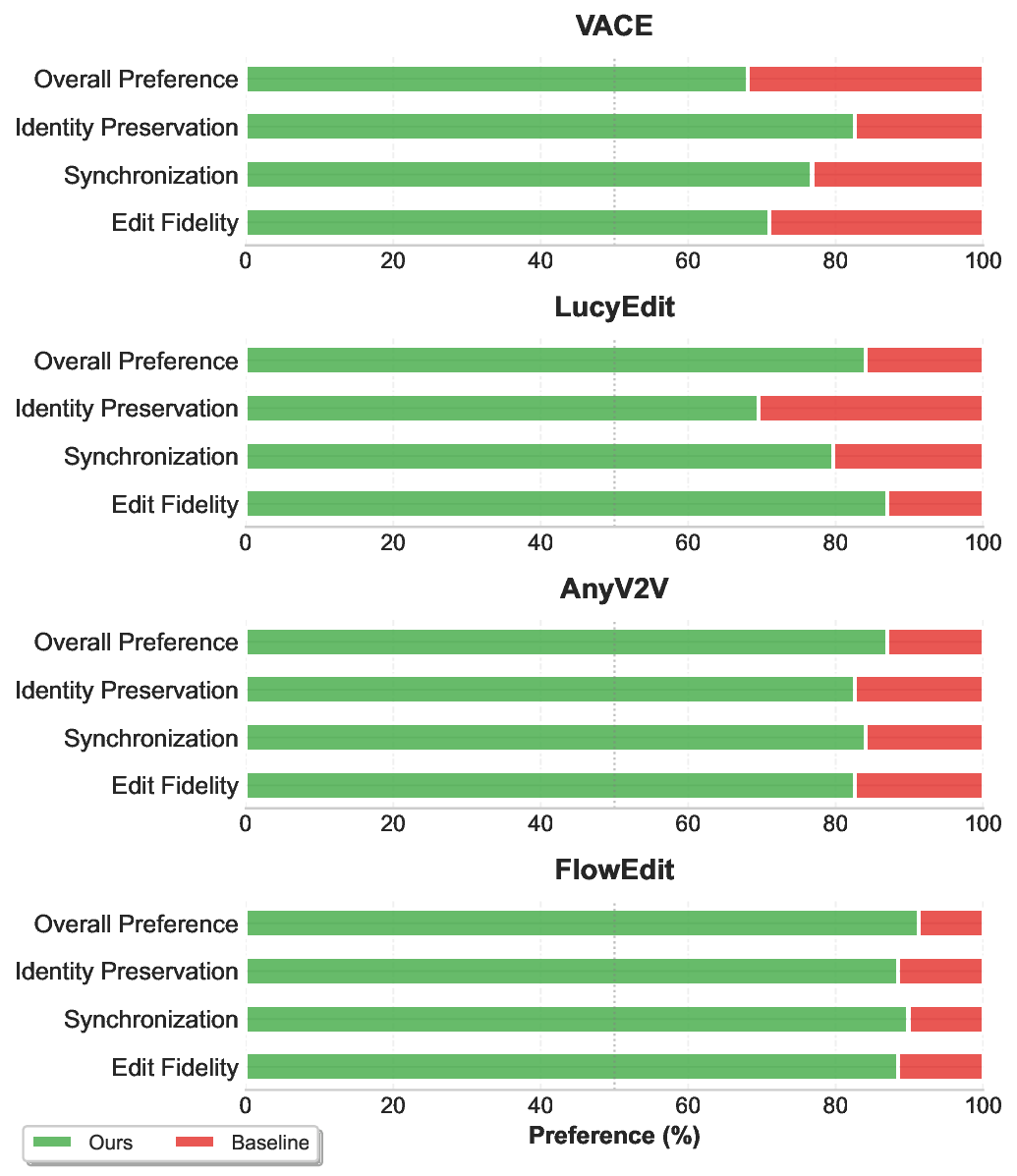

Source Video Edited First Frame "a person wearing a fedora hat, speaking and moving his hand" Figure 1 . Given a source video and an edited first frame, our method propagates the visual edit. The resulting video precisely retains the subject's identity and frame-accurate source motion, while faithfully adopting the new appearance from the edited frame.

Deep Dive into 첫 프레임 편집을 전체 영상에 자연스럽게 전파하는 방법.

a person wearing decorative forehead jewelry, speaking" “a person speaking with burning trees in the background”

Source Video Edited First Frame “a person wearing a fedora hat, speaking and moving his hand” Figure 1 . Given a source video and an edited first frame, our method propagates the visual edit. The resulting video precisely retains the subject’s identity and frame-accurate source motion, while faithfully adopting the new appearance from the edited frame.

In-Context Sync-LoRA for Portrait Video Editing

Sagi Polaczek1

Or Patashnik1

Ali Mahdavi-Amiri2

Daniel Cohen-Or1

1Tel Aviv University

2Simon Fraser University

“a person wearing

decorative

forehead jewelry,

speaking”

”a person

speaking with

burning trees in

the background“

Source Video

Edited First Frame

“a person wearing

a fedora hat,

speaking and

moving his hand”

Figure 1. Given a source video and an edited first frame, our method propagates the visual edit. The resulting video precisely retains the

subject’s identity and frame-accurate source motion, while faithfully adopting the new appearance from the edited frame.

Abstract

Editing portrait videos is a challenging task that requires

flexible yet precise control over a wide range of modi-

fications, such as appearance changes, expression edits,

or the addition of objects. The key difficulty lies in pre-

serving the subject’s original temporal behavior, demand-

ing that every edited frame remains precisely synchronized

with the corresponding source frame.

We present Sync-

LoRA, a method for editing portrait videos that achieves

high-quality visual modifications while maintaining frame-

accurate synchronization and identity consistency. Our ap-

proach uses an image-to-video diffusion model, where the

edit is defined by modifying the first frame and then propa-

gated to the entire sequence. To enable accurate synchro-

nization, we train an in-context LoRA using paired videos

that depict identical motion trajectories but differ in ap-

pearance.

These pairs are automatically generated and

curated through a synchronization-based filtering process

that selects only the most temporally aligned examples for

training. This training setup teaches the model to combine

motion cues from the source video with the visual changes

introduced in the edited first frame.

Trained on a com-

pact, highly curated set of synchronized human portraits,

Sync-LoRA generalizes to unseen identities and diverse ed-

its (e.g., modifying appearance, adding objects, or chang-

ing backgrounds), robustly handling variations in pose and

expression. Our results demonstrate high visual fidelity and

strong temporal coherence, achieving a robust balance be-

tween edit fidelity and precise motion preservation. Project

page: https://sagipolaczek.github.io/Sync-LoRA/ .

1. Introduction

With the rapid advancement of diffusion-based video edit-

ing techniques, text- and image-driven methods have gained

prominence for achieving high-quality, temporally consis-

tent edits [19, 27, 57].

These techniques hold strong

promise for applications in advertising, film production,

game development, and interactive media, where fine-

grained control over visual content is essential.

A particular interest in video editing lies in the editing

of portrait videos, as a large portion of visual media fea-

tures humans [17, 20, 35]. Editing portrait videos presents

a uniquely demanding challenge. On the one hand, the vi-

sual appearance of the subject must be modified according

arXiv:2512.03013v1 [cs.CV] 2 Dec 2025

to a user-defined instruction. On the other hand, the result-

ing video is expected to preserve the exact motion patterns

of the source video. This requirement goes beyond visual

consistency across frames. The edited video should follow

the source on a frame-by-frame basis so that every blink,

gaze shift, or articulation occurs at the same moment as in

the source. This level of temporal synchronization is critical

in talking-head scenarios, where even small misalignment

may render the output unfaithful to the source performance.

At the same time, the desired edit is often localized and spe-

cific, such as adding a mustache, changing an expression, or

inserting a visual accessory. The goal is to alter only what is

strictly required by the instruction, leaving all other visual

content and motion untouched. Achieving this combination

of precise synchronization, a minimal editing footprint, and

temporal identity preservation remains an open challenge

for most existing video editing techniques [17, 27, 29, 37].

Inspired by the In-Context LoRA (IC-LoRA) paradigm

[1, 9, 11, 26, 46, 65], we introduce Sync-LoRA, a method

designed to generate precisely synchronized edited portrait

videos. The edit is guided by modifying only the first frame

of the source video, which, together with a text prompt, de-

fines the visual transformation to be applied. Our key idea

is to fine-tune an image-to-video diffusion model using cu-

rated pairs of videos, one showing the original sequence and

the other its edited counterpart. These paired videos are

constructed to be frame-accurately synchronized, meaning

that corresponding frames exhibit identical motion trajecto-

ries while differing only in the aspects altered by the edit.

To obtain such pairs, we develop a two-stage pipeline:

generation followed by synchronization-based filtering. In

the generation stage, a vision-language model produces

diverse subject prompts and edit

…(Full text truncated)…

This content is AI-processed based on ArXiv data.