
Enhancing Cognitive Robotics with Commonsense through LLM-Generated Preconditions and Subgoals

Ohad Bachner

ohad.bachner@campus.technion.ac.il

Bar Gamliel

bargamliel@campus.technion.ac.il

November, 2025

Abstract

Robots often fail at everyday tasks because instructions skip commonsense details like hidden preconditions and small subgoals. Traditional symbolic planners need these details to be written explicitly, which is time consuming and often incomplete. In this project we combine a Large Language Model with symbolic planning. Given a natural language task, the LLM suggests plausible preconditions and subgoals. We translate these suggestions into a formal planning model and execute the resulting plan in simulation. Compared to a baseline planner without the LLM step, our system produces more valid plans, achieves a higher task success rate, and adapts better when the environment changes. These results suggest that adding LLM commonsense to classical planning can make robot behavior in realistic scenarios more reliable.

Figure 1: Planner flow with LLM-induced subgoals feeding back into the plan. (Diagram labels: Getting task; Goal 1; Goal 2; Sub Goal 1; Sub Goal 2; LLM; heuristic; plan execution; Execution.)

1 Introduction

Autonomous robots are increasingly deployed in dynamic and unstructured environments, where they must plan and execute complex tasks under uncertainty. Classical planning approaches, typically modeled in PDDL and solved with heuristic search, provide a principled foundation for task planning (Edelkamp and Schrödl, 2011; Geffner and Bonet, 2013). However, these methods rely on explicit domain models that enumerate preconditions and effects of actions. In practice, such models often omit implicit commonsense knowledge, for example, that a container must be upright before pouring, or that water must be boiled before making tea. The absence of such knowledge can lead to plans that are logically correct but physically invalid.
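The gap between "logically correct" and "physically invalid" can be illustrated with a minimal STRIPS-style sketch. It is not taken from the paper's implementation: the `Action` class, the `is_valid` helper, and the fact names (`has_water`, `water_boiled`, `tea_made`) are hypothetical and chosen only to mirror the tea example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A STRIPS-style action over a set of true facts (strings)."""
    name: str
    preconditions: frozenset
    add_effects: frozenset
    del_effects: frozenset = frozenset()


def is_valid(plan, initial_state, goal):
    """True if each action is applicable in turn and the goal holds at the end."""
    state = set(initial_state)
    for action in plan:
        if not action.preconditions <= state:
            return False  # a precondition is missing in the current state
        state = (state - action.del_effects) | action.add_effects
    return goal <= state


# Hypothetical facts and actions for the tea example (assumptions, not from the paper).
boil_water = Action("boil_water", frozenset({"has_water"}), frozenset({"water_boiled"}))
make_tea_baseline = Action("make_tea", frozenset({"has_water"}), frozenset({"tea_made"}))
make_tea_enriched = Action(
    "make_tea", frozenset({"has_water", "water_boiled"}), frozenset({"tea_made"})
)

initial = {"has_water"}
goal = frozenset({"tea_made"})

# The under-specified model accepts a one-step plan that skips boiling.
baseline_accepts = is_valid([make_tea_baseline], initial, goal)
# With the commonsense precondition added, the same shortcut is rejected...
enriched_rejects = is_valid([make_tea_enriched], initial, goal)
# ...and only the plan that boils the water first is accepted.
enriched_accepts = is_valid([boil_water, make_tea_enriched], initial, goal)
print(baseline_accepts, enriched_rejects, enriched_accepts)
```

Under the baseline model the shortcut plan passes validation even though it would fail on a real robot; adding the single missing precondition is enough to rule it out.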

Cognitive robotics research seeks to bridge symbolic reasoning with robot perception and control (Ghallab et al., 2004). While significant progress has been made in integrating planning with motion control and execution, robots still lack the ability to autonomously infer commonsense constraints that humans consider obvious. Large Language Models (LLMs), trained on massive corpora of human knowledge, present a promising avenue for addressing this gap. LLMs can generate likely preconditions, subgoals, and contextual constraints from natural language task descriptions, potentially enriching classical planning models.

arXiv:2512.00069v1 [cs.RO] 24 Nov 2025

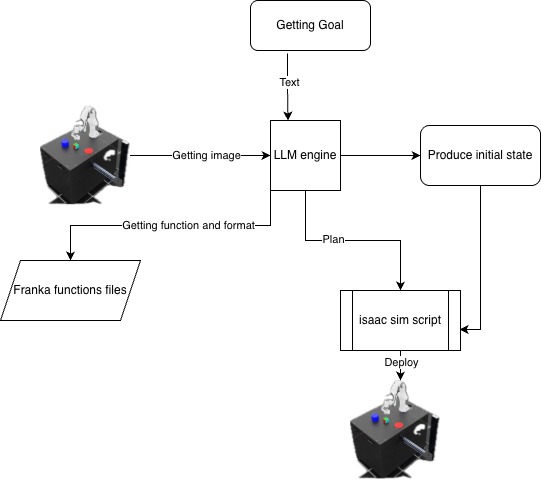

In this project, we investigate how LLMs can complement symbolic planning in cognitive robotics. Specifically, we propose a framework in which an LLM (Claude 4.5 Sonnet) generates candidate preconditions and subgoals. These are mapped into formal representations within the Unified Planning Framework (UPF) and combined with heuristic search. The enriched models are then executed by a robot in simulation, enabling a comparison between baseline planning and commonsense-enhanced planning. Our goal is to evaluate whether this integration improves plan validity, robustness, and task success rates.
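One way to picture the mapping step is the sketch below, which merges LLM suggestions into a symbolic model while discarding anything the domain does not know about. Everything in it is an assumption made for illustration: the JSON schema, the `apply_suggestions` helper, and the fact names are hypothetical, no LLM is actually called, and plain Python dictionaries stand in for the UPF objects the paper uses.

```python
import json


def apply_suggestions(domain, known_facts, llm_json):
    """Merge LLM-suggested preconditions into a copy of the domain.

    domain: dict mapping action name -> set of precondition facts.
    Returns (enriched_domain, subgoals, rejected_suggestions).
    """
    suggestions = json.loads(llm_json)
    enriched = {name: set(pre) for name, pre in domain.items()}
    rejected = []
    for action, facts in suggestions.get("preconditions", {}).items():
        for fact in facts:
            if action in enriched and fact in known_facts:
                enriched[action].add(fact)
            else:
                rejected.append((action, fact))  # unknown action or predicate
    subgoals = [f for f in suggestions.get("subgoals", []) if f in known_facts]
    return enriched, subgoals, rejected


# Hypothetical domain and a hand-written stand-in for an LLM reply.
domain = {"boil_water": {"has_water"}, "make_tea": {"has_water"}}
known_facts = {"has_water", "water_boiled", "tea_made"}
llm_reply = json.dumps({
    "preconditions": {"make_tea": ["water_boiled", "kettle_is_happy"]},
    "subgoals": ["water_boiled"],
})

enriched, subgoals, rejected = apply_suggestions(domain, known_facts, llm_reply)
print(enriched["make_tea"], subgoals, rejected)
```

Filtering against the set of known predicates is a design choice of this sketch: it keeps free-form LLM text from introducing symbols the planner cannot ground, at the cost of silently dropping suggestions that would need new predicates.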

This work aligns with the objectives of courses in AI planning and cognitive robotics, which emphasize algorithmic novelty in planning and autonomous robotic behavior. By combining advances in heuristic search with commonsense reasoning from LLMs, we aim to contribute toward more capable and resilient autonomous robots.

2 Related Work

2.1 Classical Planning and Heuristic Search

Classical planning provides the algorithmic foundation for our approach, with states, action schemas, and goal-directed search typically formalized in PDDL. We rely on heuristic search to scale task planning and to surface useful planning signals (e.g., landmarks/plateaus) that drive our triggers. The standard texts by Ghallab et al. (2004), Edelkamp and Schrödl (2011), and Geffner and Bonet (2013) summarize the formal models, heuristic families, and search strategies that underlie our planner and analysis.
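As a concrete reference point for heuristic search over a classical planning model, the following sketch runs greedy best-first search with a goal-count heuristic (the number of goal facts not yet true). It is a textbook-style illustration, not the planner or heuristic used in the paper; the action tuples and fact names are hypothetical.

```python
import heapq
from itertools import count


def goal_count(state, goal):
    """Heuristic: number of goal facts that are not yet true."""
    return len(goal - state)


def greedy_best_first(initial, goal, actions):
    """Greedy best-first search over sets of facts.

    actions: list of (name, preconditions, add_effects, del_effects) frozensets.
    Returns a list of action names, or None if no plan is found.
    """
    start = frozenset(initial)
    tie = count()  # tie-breaker so the heap never compares states
    frontier = [(goal_count(start, goal), next(tie), start, [])]
    seen = {start}
    while frontier:
        _, _, state, plan = heapq.heappop(frontier)
        if goal <= state:
            return plan
        for name, pre, add, delete in actions:
            if pre <= state:  # action is applicable
                nxt = frozenset((state - delete) | add)
                if nxt not in seen:
                    seen.add(nxt)
                    entry = (goal_count(nxt, goal), next(tie), nxt, plan + [name])
                    heapq.heappush(frontier, entry)
    return None


# Hypothetical tea domain (assumption for illustration).
EMPTY = frozenset()
actions = [
    ("fill_kettle", EMPTY, frozenset({"has_water"}), EMPTY),
    ("boil_water", frozenset({"has_water"}), frozenset({"water_boiled"}), EMPTY),
    ("make_tea", frozenset({"water_boiled"}), frozenset({"tea_made"}), EMPTY),
]
plan = greedy_best_first(set(), frozenset({"tea_made"}), actions)
print(plan)
```

In this small domain the heuristic value stays at 1 until the final action is applied, which is a simple instance of the plateaus mentioned above: the search makes progress in the state space without any visible improvement in the heuristic.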

2.2 LLMs and Symbolic Models

Recent work uses LLMs to construct or repair symbolic planning models from natural language. In particular, Pavel Smirnov and Gienger (2024) generate full PDDL domains and iteratively apply syntax/semantic checks and reachability analysis before planning. By contrast, we keep a fixed hand-written domain and inject runtime deltas (extra preconditions and subgoals) only when our classifier gate is triggered during planning/execution. This shifts from offline model synthesis to online, task-specific constraint augmentation, with lightweight feasibility probes instead of full-domain regeneration.
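The idea of consulting the LLM only when a trigger fires can be sketched with a simple stand-in rule. The text above does not specify how the classifier gate works, so the `PlateauGate` class below is purely hypothetical: it replaces the classifier with a fixed patience threshold on the heuristic value and is meant only to show where such a gate would sit in the loop.

```python
class PlateauGate:
    """Fires when the best heuristic value has not improved for `patience` steps.

    A hypothetical threshold rule used as a stand-in for a learned gate.
    """

    def __init__(self, patience=3):
        self.patience = patience
        self.best = None
        self.stalled = 0

    def observe(self, h):
        """Record a heuristic value; return True when the search looks stalled."""
        if self.best is None or h < self.best:
            self.best = h
            self.stalled = 0
            return False
        self.stalled += 1
        return self.stalled >= self.patience


gate = PlateauGate(patience=3)
heuristic_trace = [5, 4, 4, 4, 4]  # made-up values for illustration
fired = [gate.observe(h) for h in heuristic_trace]
print(fired)
```

In a full system, the step at which the gate fires would be the point to request extra preconditions or subgoals and re-plan; until then the fixed hand-written domain is used unchanged.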

2.3 Generalization and World Models

A critical failure mode in deployed robotics is the inability to generalize beyond pre-defined tasks. Recent work on large-scale world models, such as th

…(Full text truncated)…
