📝 Original Info

- Title: HealthContradict: Evaluating Biomedical Knowledge Conflicts in Language Models

- ArXiv ID: 2512.02299

- Date: 2025-12-02

- Authors: Boya Zhang, Alban Bornet, Rui Yang, Nan Liu, Douglas Teodoro

📝 Abstract

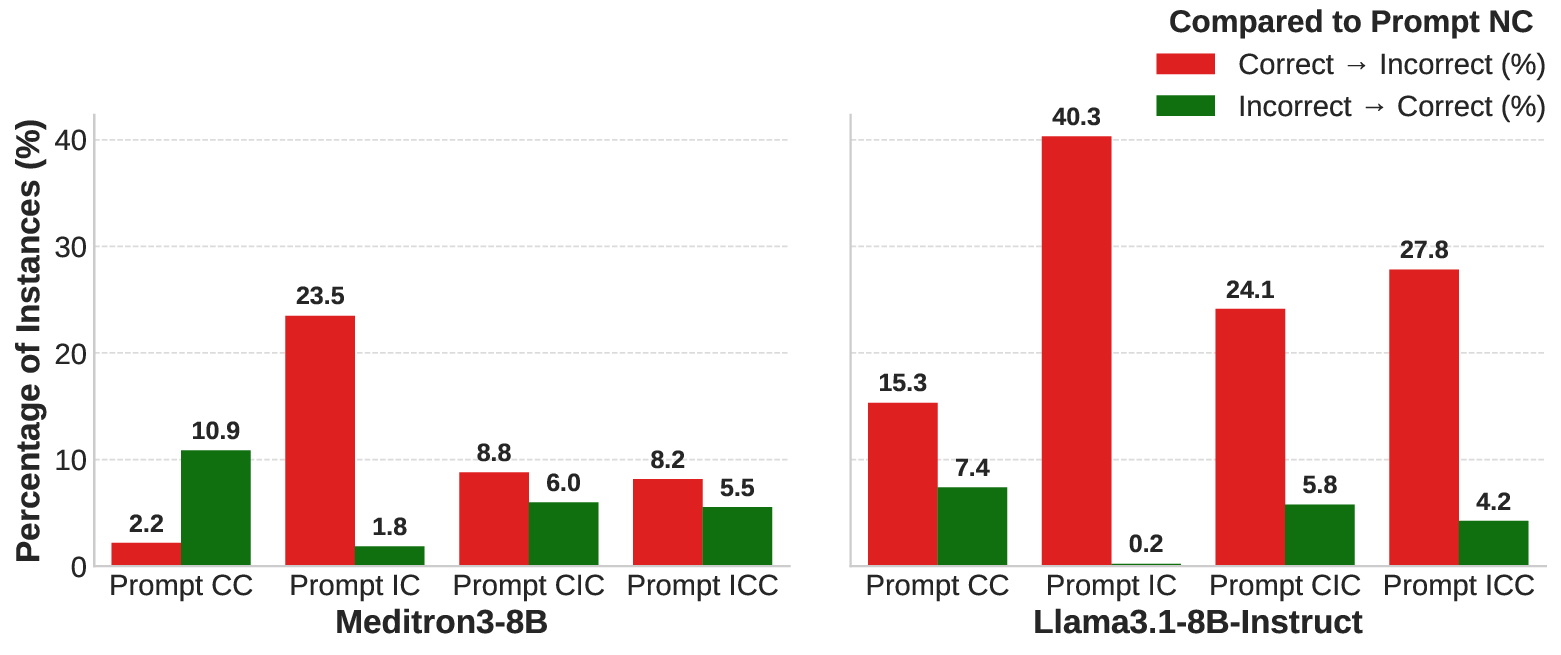

How do language models use contextual information to answer health questions? How are their responses impacted by conflicting contexts? We assess the ability of language models to reason over long, conflicting biomedical contexts using HEALTHCONTRADICT, an expert-verified dataset comprising 920 unique instances, each consisting of a health-related question, a factual answer supported by scientific evidence, and two documents presenting contradictory stances. We consider several prompt settings, including correct, incorrect or contradictory context, and measure their impact on model outputs. Compared to existing medical question-answering evaluation benchmarks, HEALTHCONTRADICT provides greater distinctions of language models' contextual reasoning capabilities. Our experiments show that the strength of fine-tuned biomedical language models lies not only in their parametric knowledge from pretraining, but also in their ability to exploit correct context while resisting incorrect context.

📄 Full Content

Language models are susceptible to generating reasonable yet nonfactual content [1]. This issue raises concerns about the reliability of language models in providing medical advice, as there are significant risks when they generate convincing but incorrect information, which could influence people's health-related decisions [2]. Additionally, knowledge and misinformation in the biomedical domain both evolve rapidly, especially during medical crises [3], with unverified information spreading quickly across the internet [4], impacting pre-training and in-context learning for these models.

[Figure 1: illustration of a knowledge conflict. Text recovered from the image: "… Green coffee can be taken before or after meals and it is also known to be useful for weight loss …"; "Coffee can potentially help with weight loss, but its effects are modest and depend on how it’s consumed and your overall habits."; "I am confused."]

Existing methods to mitigate misinformation use static fact sources for hallucination detection [5][6] or verified evidence to refute false claims [7][8][9][10]. These strategies have often been combined in retrieval-augmented generation (RAG) [11] pipelines, one of the most effective methods for reducing hallucinations in the biomedical domain [12]. Despite some attempts to account for information quality [13], current approaches for biomedical RAG [14][15][16] primarily focus on improving relevance in the retrieval pipeline [17][18][19][20][21]. However, in the real world, contradictory sources could be used to verify the same claim, leading to knowledge conflicts [22] in RAG paradigms. For instance, consider the situation illustrated in Figure 1, where a language model has its own parametric knowledge, i.e., knowledge learned during pre-training, stating that coffee aids in weight loss. However, when utilizing an in-context learning approach, the model is presented with two contradictory passages as contextual knowledge, i.e., information from the external source material, while answering the question. In this case, the conflicts arise from the contradictions between Passage 1 and Passage 2, as well as between the model’s parametric knowledge and Passage 2.
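To make the setup concrete, the following is a minimal sketch of how a single benchmark instance and the context settings named in the abstract (correct, incorrect, contradictory) could be represented. It is not the authors' implementation: the `Instance` field names, the `"none"` no-context baseline, the document ordering, and the prompt template are all assumptions introduced here for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    """One question with two documents of opposite stance (hypothetical field names)."""
    question: str
    answer: str          # factual answer; the label format is an assumption
    supporting_doc: str  # document whose stance agrees with the answer
    opposing_doc: str    # document whose stance contradicts the answer


# "correct", "incorrect" and "contradictory" follow the abstract;
# "none" is an assumed baseline that probes parametric knowledge only.
SETTINGS = ("none", "correct", "incorrect", "contradictory")


def select_context(inst: Instance, setting: str) -> list[str]:
    """Return the documents shown to the model under a given setting."""
    if setting == "none":
        return []
    if setting == "correct":
        return [inst.supporting_doc]
    if setting == "incorrect":
        return [inst.opposing_doc]
    if setting == "contradictory":
        # Fixed order is an arbitrary choice for this sketch.
        return [inst.supporting_doc, inst.opposing_doc]
    raise ValueError(f"unknown setting: {setting!r}")


def build_prompt(inst: Instance, setting: str) -> str:
    """Assemble a plain-text prompt (the template is an assumption)."""
    docs = select_context(inst, setting)
    parts = [f"Document {i}:\n{doc}" for i, doc in enumerate(docs, start=1)]
    parts.append(f"Question: {inst.question}\nAnswer:")
    return "\n\n".join(parts)


if __name__ == "__main__":
    demo = Instance(
        question="<health question>",
        answer="<factual answer>",
        supporting_doc="<document agreeing with the answer>",
        opposing_doc="<document contradicting the answer>",
    )
    for setting in SETTINGS:
        print(f"--- {setting} ---")
        print(build_prompt(demo, setting))
```

Comparing a model's answers across these settings separates what it answers from parametric knowledge alone from how far correct or incorrect context shifts that answer.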

The behavior of language models is influenced by knowledge conflicts [22], which are the contradictions within parametric knowledge learned at training time [23] and contextual knowledge given at inference time [24][25][26][27]. Language models are receptive to coherent contextual knowledge when it conflicts with parametric knowledge [28]. Multi-turn persuasive conversations used as contextual knowledge can even manipulate language models’ factual parametric knowledge [29]. On the other hand, language models are biased toward parametric knowledge when the contextual knowledge is self-contradictory [28][30]. They also have difficulty generating answers that reflect the self-contradiction of the contextual knowledge [31], especially for implicit conflicts that require reasoning [32]. Moreover, language models struggle with self-contradictions in long documents that require more nuance and context [33].

Various context-aware methods have been proposed to overcome language models’ confusion regarding knowledge conflicts. While context-aware decoding overrode a model’s parametric knowledge when it contradicted the contextual knowledge [34], ContextCite traced back the parts of the contextual knowledge that led a model to generate a particular statement, improving the explainability of language models [35]. However, these methods focused on either parametric or contextual knowledge alone. COMBO [36], on the other hand, leveraged both parametric and contextual knowledge by using discriminators trained on silver labels to assess passage compatibility. In addition, DisentQA [37] trained a model that predicts two types of answers for a given question, one based on contextual knowledge and one on parametric knowledge. Contrastive decoding further maximized the difference between logits under knowledge conflicts and calibrated the model’s probability of the correct answer [30]. Solutions were also proposed to mitigate the harmful behavior of language models. At the training phase, counterfactual and irrelevant contexts were injected into standard supervised datasets to perform knowledge-aware finetuning and enhance language models’ robustness [38]. Meanwhile, in-context pretraining enhanced language models’ performance in complex contextual reasoning [?]. At the inference phase, defense strategies of misinformation detection, vigilant prompting, and reader ensembles were proposed to mitigate misinformation generated by language models [39]. In addition, query augmentation was used to search for robust answers to defend against poisoning attacks [40]. Furthermore, fact duration prediction identified which facts are prone to rapid change and helped models avoid reciting outdated information [41]. Current approaches prioritized mitigating either contextual conflicts or harmful behaviors of language models. However, both context-awareness and truthfulness are important in improving th

…(Full text truncated)…
