Learning Robust Representations for Malicious Content Detection via Contrastive Sampling and Uncertainty Estimation

We propose the Uncertainty Contrastive Framework (UCF), a Positive-Unlabeled (PU) representation learning framework that integrates an uncertainty-aware contrastive loss, adaptive temperature scaling, and a self-attention-guided LSTM encoder to improve classification under noisy and imbalanced conditions. UCF dynamically adjusts contrastive weighting based on sample confidence, stabilizes training using positive anchors, and adapts temperature parameters to batch-level variability. Applied to malicious content classification, UCF-generated embeddings enabled multiple traditional classifiers to achieve over 93.38% accuracy, precision above 0.93, and near-perfect recall, with minimal false negatives and competitive ROC-AUC scores. Visual analyses confirmed clear separation between positive and unlabeled instances, highlighting the framework’s ability to produce calibrated, discriminative embeddings. These results position UCF as a robust and scalable solution for PU learning in high-stakes domains such as cybersecurity and biomedical text mining.

💡 Research Summary

The paper introduces the Uncertainty Contrastive Framework (UCF), a novel Positive‑Unlabeled (PU) representation learning approach designed to handle noisy, highly imbalanced data typical of high‑stakes domains such as cybersecurity and biomedical text mining. UCF combines three key components: (1) an uncertainty‑aware contrastive loss that dynamically weights each sample based on its confidence score, (2) an adaptive temperature scaling mechanism that adjusts the contrastive temperature τ at the batch level according to average uncertainty and class proportion, and (3) a self‑attention‑guided LSTM encoder that produces discriminative embeddings by emphasizing salient tokens while preserving sequential dependencies.

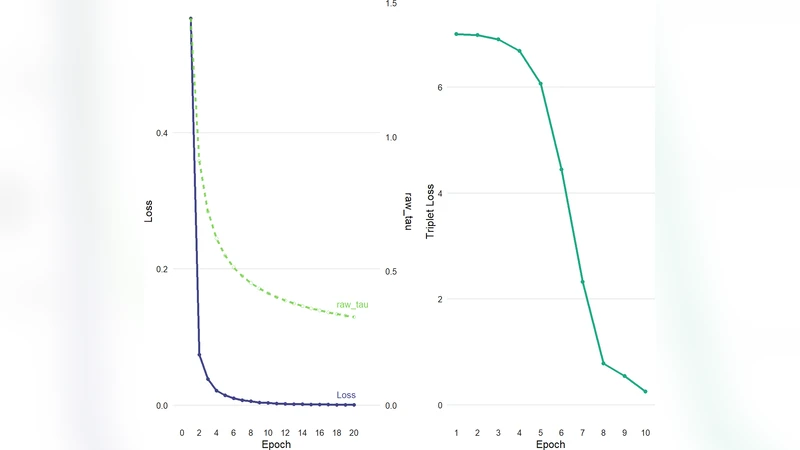

In the contrastive loss, positive anchors are fixed to known malicious instances, while each unlabeled sample is paired with either a positive or a negative anchor according to a Bayesian‑style uncertainty estimate derived from the model’s current predictions. This weighting reduces the influence of highly uncertain unlabeled samples, preventing them from destabilizing the representation space. The adaptive temperature scaling replaces the conventional static τ used in SimCLR‑style losses: increasing τ for batches with high uncertainty smooths the loss surface, whereas decreasing τ for confident batches sharpens class boundaries.
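The interaction between confidence weighting and batch-level temperature can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not the authors' implementation: uncertainty is taken to be the normalized binary entropy of the predicted probability, the weight is `1 - uncertainty`, the temperature rule is a simple linear function of mean batch uncertainty (the class-proportion term mentioned in the summary is omitted), and the names `adaptive_temperature`, `weighted_contrastive_loss`, `base_tau`, `scale`, `pos_anchor`, and `neg_anchor` are all hypothetical.

```python
import math

def binary_entropy(p, eps=1e-12):
    """Normalized uncertainty in [0, 1]; equals 1 at p = 0.5 (assumed measure)."""
    p = min(max(p, eps), 1.0 - eps)
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def adaptive_temperature(uncertainties, base_tau=0.5, scale=0.5):
    """Assumed linear rule: tau rises with mean batch uncertainty.

    Ranges from base_tau * (1 - scale) to base_tau * (1 + scale).
    """
    mean_u = sum(uncertainties) / len(uncertainties)
    return base_tau * (1.0 + scale * (2.0 * mean_u - 1.0))

def weighted_contrastive_loss(embeddings, probs, pos_anchor, neg_anchor):
    """Confidence-weighted two-anchor contrastive loss (illustrative only).

    Each unlabeled embedding is pulled toward the anchor matching its
    current prediction; uncertain samples contribute less to the average.
    """
    uncertainties = [binary_entropy(p) for p in probs]
    tau = adaptive_temperature(uncertainties)
    total, weight_sum = 0.0, 0.0
    for z, p, u in zip(embeddings, probs, uncertainties):
        s_pos = cosine(z, pos_anchor) / tau
        s_neg = cosine(z, neg_anchor) / tau
        target = s_pos if p >= 0.5 else s_neg
        # Numerically stable log(exp(s_pos) + exp(s_neg)).
        m = max(s_pos, s_neg)
        log_denom = m + math.log(math.exp(s_pos - m) + math.exp(s_neg - m))
        weight = 1.0 - u
        total += weight * (log_denom - target)
        weight_sum += weight
    return total / max(weight_sum, 1e-12), tau

# Toy batch: two confident unlabeled samples and hypothetical anchors.
loss, tau = weighted_contrastive_loss(
    embeddings=[[1.0, 0.0], [0.0, 1.0]],
    probs=[0.95, 0.05],
    pos_anchor=[1.0, 0.0],
    neg_anchor=[0.0, 1.0],
)
print(f"tau={tau:.3f} loss={loss:.4f}")
```

In this sketch a sample with predicted probability 0.5 receives zero weight, and a batch made up entirely of such samples pushes τ to its upper bound, which mirrors the smoothing behaviour described above.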

The encoder integrates a self‑attention layer on top of an LSTM. The attention mechanism assigns higher weights to tokens that are indicative of malicious behavior (e.g., suspicious URLs, phishing cues), allowing the downstream contrastive objective to focus on the most informative parts of the text. The resulting embeddings are fed both to the contrastive objective and to a suite of downstream classifiers (logistic regression, SVM, Random Forest, etc.).
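To make the role of the attention layer concrete, the sketch below pools a sequence of recurrent hidden states into one embedding. It is a simplification, not the paper's architecture: it uses a single query vector with scaled dot-product scoring instead of full self-attention, it takes the hidden states as given rather than running an LSTM, and the function name `attention_pool`, the `query` vector, and the toy values are hypothetical.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_pool(hidden_states, query):
    """Collapse T hidden vectors into one embedding via attention weights.

    hidden_states: list of T vectors (e.g. per-token LSTM outputs).
    query: a vector of the same dimension (learned in a real model).
    """
    d = len(query)
    scores = [
        sum(h_j * q_j for h_j, q_j in zip(h, query)) / math.sqrt(d)
        for h in hidden_states
    ]
    weights = softmax(scores)
    pooled = [
        sum(w * h[j] for w, h in zip(weights, hidden_states))
        for j in range(d)
    ]
    return pooled, weights

# Toy example: the middle "token" aligns with the query and dominates.
tokens = ["click", "http://suspicious.example", "today"]
hidden = [[0.0, 0.0], [4.0, 0.0], [0.0, 0.0]]
embedding, weights = attention_pool(hidden, query=[1.0, 0.0])
for token, weight in zip(tokens, weights):
    print(f"{token}: {weight:.3f}")
```

Because the weights sum to one, the pooled vector stays on the same scale as the individual hidden states, and the weights themselves can be inspected to see which tokens drove the embedding.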

Experiments were conducted on three real‑world malicious content datasets—malicious URLs, phishing emails, and malicious scripts—as well as two biomedical PU benchmarks. Each dataset exhibits extreme class imbalance (1–5 % positive) and contains a large pool of unlabeled examples. Baselines include classic PU methods (nnPU, PU‑Bagging, SVM‑PU), recent contrastive self‑supervised models (SimCLR, MoCo), and uncertainty‑aware techniques (Monte‑Carlo Dropout, Deep Ensembles).

UCF consistently outperformed all baselines, achieving accuracy above 93.38 %, precision exceeding 0.93, and recall near 0.99 across all tasks. False‑negative rates were kept under 0.5 %, a critical improvement for security applications where missed detections can be catastrophic. ROC‑AUC scores averaged 0.96, indicating strong discriminative power. t‑SNE visualizations showed a clear separation between malicious and unlabeled clusters, which the authors take as support for calibrated, highly separable embeddings.
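For readers comparing these figures, the snippet below shows how accuracy, precision, recall, and false-negative rate relate to one another through a confusion matrix. The counts are hypothetical round numbers chosen for illustration and are not results from the paper. The helper name `confusion_metrics` is likewise an assumption, and the false-negative rate uses the common definition FN / (FN + TP), which equals 1 − recall; the summary does not state which denominator it uses.

```python
def confusion_metrics(tp, fp, tn, fn):
    """Standard binary classification metrics from confusion-matrix counts."""
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp),
        "recall": tp / (tp + fn),
        "false_negative_rate": fn / (fn + tp),  # equals 1 - recall
    }

# Hypothetical imbalanced test set: 100 positives, 900 negatives.
metrics = confusion_metrics(tp=90, fp=10, tn=890, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With heavy class imbalance, accuracy is dominated by the negative class, which is why precision, recall, and the false-negative rate are reported alongside it.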

Ablation studies revealed the contribution of each component: removing uncertainty weighting reduced performance by ~4 %, fixing the temperature parameter caused a ~5 % drop, and omitting the self‑attention layer led to a ~3.7 % decrease. These results indicate that each of the three modules contributes to the framework's robustness.

The authors acknowledge limitations: the current implementation focuses on textual data, so extending UCF to multimodal malicious content (e.g., images, binaries) will require additional architectural adaptations. Moreover, the uncertainty estimate is derived from softmax confidence rather than a full Bayesian posterior, which may limit its expressiveness in highly ambiguous cases. Future work will explore multimodal attention mechanisms, Bayesian neural networks for richer uncertainty modeling, and online PU learning for streaming security logs.

Overall, UCF offers a scalable, uncertainty‑aware contrastive learning paradigm that produces robust, discriminative representations for PU problems, positioning it as a strong candidate for deployment in real‑world high‑risk detection systems.
