Graph Distance as Surprise: Free Energy Minimization in Knowledge Graph Reasoning

📝 Original Info

- Title: Graph Distance as Surprise: Free Energy Minimization in Knowledge Graph Reasoning

- ArXiv ID: 2512.01878

- Date: 2025-12-01

- Authors: Researchers from original ArXiv paper

📝 Abstract

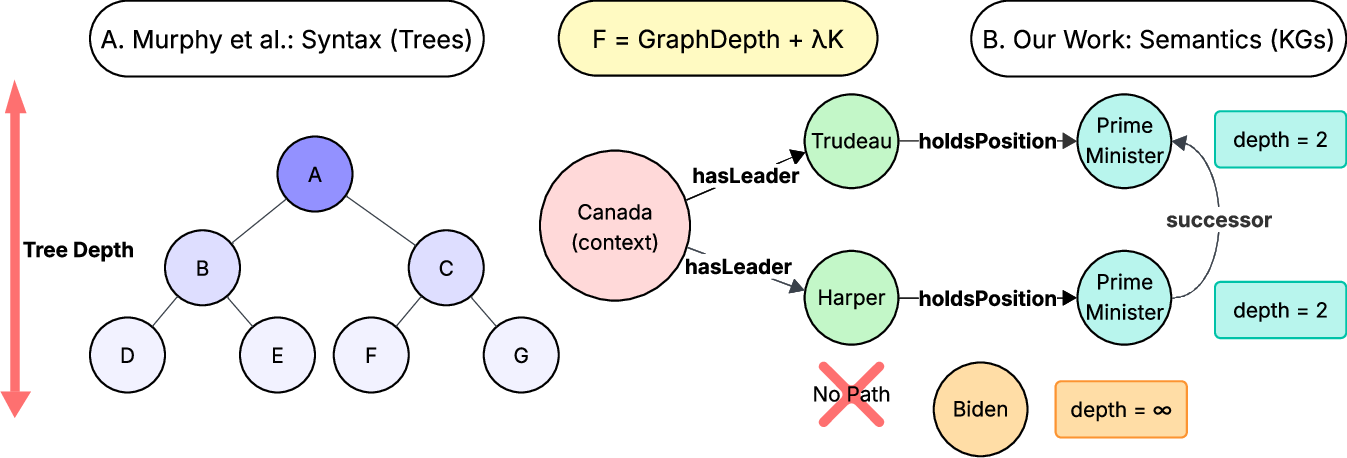

In this work, we propose that reasoning in knowledge graph (KG) networks can be guided by surprise minimization. Entities that are close in graph distance will have lower surprise than those farther apart. This connects the Free Energy Principle (FEP) from neuroscience to KG systems, where the KG serves as the agent's generative model. We formalize surprise using the shortest-path distance in directed graphs and provide a framework for KG-based agents. Graph distance appears in graph neural networks as message passing depth and in model-based reinforcement learning as world model trajectories. This work-in-progress study explores whether distance-based surprise can extend recent work showing that syntax minimizes surprise and free energy via tree structures.

💡 Deep Analysis

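To make the abstract's central idea concrete, the following is a minimal sketch of surprise computed as shortest-path distance in a directed graph. It is an illustration under stated assumptions, not the paper's formalization: the function names `shortest_path_distance` and `surprise`, the toy graph `toy_kg`, the choice to take the minimum distance over a set of context entities, and the use of infinity for unreachable entities are all hypothetical choices made here for illustration.

```python
from collections import deque
import math


def shortest_path_distance(graph, source, target):
    """Hop count of the shortest directed path from source to target.

    `graph` maps each entity to a list of entities it points to.
    Returns math.inf when target cannot be reached (an assumption of
    this sketch, not something the abstract specifies).
    """
    if source == target:
        return 0
    visited = {source}
    queue = deque([(source, 0)])
    while queue:
        node, dist = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor == target:
                return dist + 1
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, dist + 1))
    return math.inf


def surprise(graph, context, entity):
    """Surprise of `entity` given a set of context entities.

    Assumption: surprise is the smallest directed distance from any
    context entity to the candidate, so nearer entities are less surprising.
    """
    return min(
        (shortest_path_distance(graph, c, entity) for c in context),
        default=math.inf,
    )


# Hypothetical toy knowledge graph with directed "is-a" style edges.
toy_kg = {
    "cat": ["mammal"],
    "mammal": ["animal"],
    "animal": [],
    "car": ["vehicle"],
    "vehicle": [],
}

for candidate in ["mammal", "animal", "vehicle"]:
    print(candidate, surprise(toy_kg, ["cat"], candidate))
```

Because the edges are directed, the measure is asymmetric in this sketch: `animal` is two hops from `cat`, but `cat` is unreachable from `animal`. An agent that prefers low-surprise entities would, under these assumptions, rank `mammal` ahead of `animal` and treat `vehicle` as maximally surprising in the context of `cat`.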

📄 Full Content