📝 Original Info

- Title: A Forecasting-Based Offline Reinforcement Learning Framework for Non-Stationary Environments

- ArXiv ID: 2512.01987

- Date: 2025-12-01

- Authors: Suzan Ece Ada, Georg Martius, Emre Ugur, Erhan Oztop

📝 Abstract

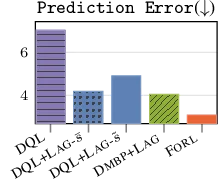

Offline Reinforcement Learning (RL) provides a promising avenue for training policies from pre-collected datasets when gathering additional interaction data is infeasible. However, existing offline RL methods often assume stationarity or only consider synthetic perturbations at test time, assumptions that often fail in real-world scenarios characterized by abrupt, time-varying offsets. These offsets can lead to partial observability, causing agents to misperceive their true state and degrade performance. To overcome this challenge, we introduce Forecasting in Non-stationary Offline RL (FORL), a framework that unifies (i) conditional diffusion-based candidate state generation, trained without presupposing any specific pattern of future non-stationarity, and (ii) zero-shot time-series foundation models. FORL targets environments prone to unexpected, potentially non-Markovian offsets, requiring robust agent performance from the onset of each episode. Empirical evaluations on offline RL benchmarks, augmented with real-world time-series data to simulate realistic non-stationarity, demonstrate that FORL consistently improves performance compared to competitive baselines. By integrating zero-shot forecasting with the agent's experience, we aim to bridge the gap between offline RL and the complexities of real-world, non-stationary environments.

📄 Full Content

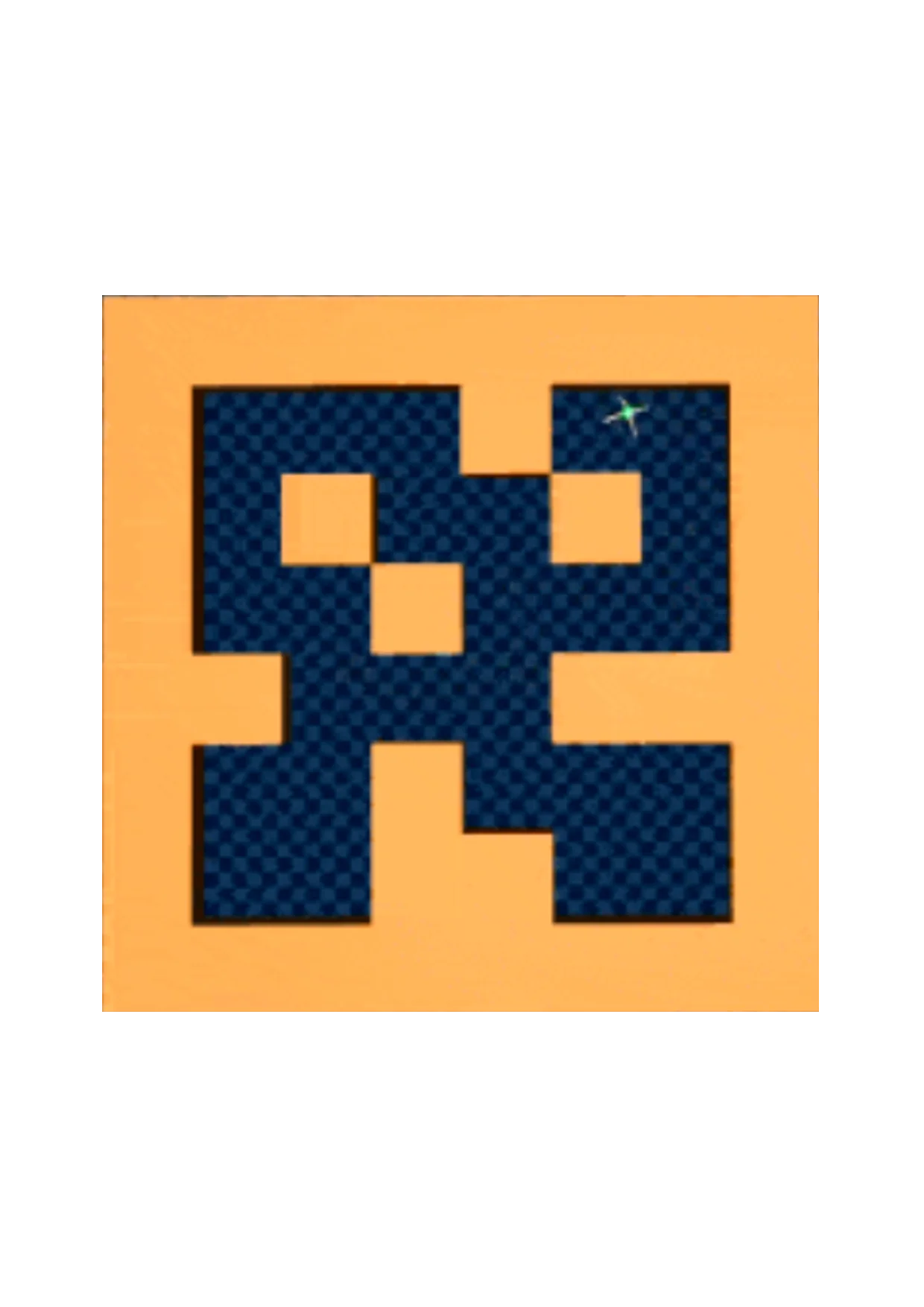

Figure 1: Setting. The agent does not know its location in the environment because its perception is shifted in every episode j by an unknown offset b_j (only vertical offsets are illustrated). FORL leverages historical offset data and offline RL data (from a stationary phase) to forecast and correct for new offsets at test time. Ground-truth offsets are hidden throughout the evaluation episodes.

Offline Reinforcement Learning (RL) leverages static datasets to avoid costly or risky online interactions [1,2]. Yet, agents trained on fully observable states often fail when deployed with noisy or corrupted observations. While robust offline RL methods address test-time perturbations, such as sensor noise or adversarial attacks [3], a critical gap persists in addressing non-stationarity within the observation function, a challenge that fundamentally alters the agent’s perception of the environment over time.

Prior online algorithms that treat the observation function as the source of non-stationarity focus on learning agent morphologies [4] and generalization in Block MDPs [5]. While this form of non-stationarity holds significant potential for real-world applications [6], it remains underexplored. We focus on the episodic evolution of the observation function at test time in offline RL. In our setup, each dimension of an agent’s state is influenced by an unknown, time-dependent additive offset that remains constant within a single operational interval (an “episode”). This leads to a stream of evolving observation functions [7] extending across multiple future episodes, where the offsets remain hidden throughout the prediction window. For instance, industrial robots might apply a daily calibration offset to each joint, while sensors can exhibit a deviation until the next scheduled recalibration. Similarly, in healthcare or finance, data may be partially aggregated or withheld to comply with regulations, effectively obscuring finer-grained variations and leaving a single offset as the dominant factor per episode. By storing only these representative offsets, we circumvent the challenges of continuous interaction buffers in bandwidth-constrained or privacy-sensitive environments. Because the offset can differ across state dimensions (e.g., different sensor or actuation channels), each state dimension can be affected by a different unknown bias that stays constant for that episode but evolves differently across episodes. Approaches that assume predefined perturbations can struggle with these abrupt, episodic shifts because such offsets violate the typical assumption of smoothly varying observation functions.
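The observation model described above can be illustrated with a minimal sketch. It assumes the offset is a plain vector added to the true state and held fixed for one episode; the helper name `make_offset_observer` and all numeric values are hypothetical and chosen only for illustration, not taken from the paper.

```python
import numpy as np


def make_offset_observer(offset):
    """Build an observation function o = s + b for one episode.

    `offset` is the per-dimension additive bias b; it stays fixed for
    every step of the episode this observer is used in.
    """
    offset = np.asarray(offset, dtype=float)

    def observe(state):
        # Each state dimension is shifted by its own constant bias.
        return np.asarray(state, dtype=float) + offset

    return observe


# Hypothetical offsets for three episodes of a 2-D state (illustrative values).
episode_offsets = np.array([[0.0, 0.5], [0.1, -0.3], [0.4, 0.2]])
true_state = np.array([1.0, 2.0])

# The same true state is perceived differently in each episode.
observations = [make_offset_observer(b)(true_state) for b in episode_offsets]
```

Within an episode the bias never changes, so the agent's perception is consistently shifted; across episodes the shift jumps to a new value, which is the abrupt, episodic behaviour the paragraph contrasts with smoothly varying observation functions.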
Frequent retraining, hyperparameter optimization, or extensive online adaptation to new patterns of observation function evolution is costly, risky (due to trial and error in safety-critical settings), and may be infeasible if these patterns no longer reflect assumptions made during training. By separating offset data (episodic calibration values) from the massive replay buffers, a zero-shot forecasting-based approach can anticipate each new offset from the beginning of the episode without requiring policy updates or making assumptions about task evolution at test time [8]. Modeling these multidimensional additive offsets as stable, per-episode constants presents a robust and efficient way to handle time-varying conditions in non-stationary environments where the evolution of tasks follows a non-Markovian, time-series pattern [9], mitigating the risks of online exploration. We consider an offline RL setting in which, during training, we only have access to a standard offline RL dataset collected from a stationary environment [3] with fully observable states. Initial data may be collected under near-ideal conditions and then gradually affected by wear, tear, or other natural shifts, even as the underlying physical laws (dynamics) remain unchanged. At test time, however, we evaluate in a non-stationary environment where both the observation function and the observation space change due to time-dependent external factors. This setup can be interpreted as environments shifting along observation space dimensions while the initial state of the agent is sampled from a uniform distribution over the state space. A simplified version of this setup, with an offset affecting only one dimension of the state, is illustrated in Figure 1. Here, the agent “knows” it is in a maze but does not know where it is in the maze.
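The forecast-then-correct idea described above can be sketched roughly as follows. This is an illustration under stated assumptions, not FORL itself: a simple moving average (`naive_forecast`) stands in for the zero-shot time-series foundation model, the diffusion-based candidate state generation is not shown, and all function names and numbers are hypothetical.

```python
import numpy as np


def naive_forecast(offset_history, window=3):
    """Stand-in forecaster (assumption): mean of the last `window` offsets.

    A real system would query a zero-shot time-series model here.
    """
    history = np.asarray(offset_history, dtype=float)
    return history[-window:].mean(axis=0)


def correct_observation(observation, predicted_offset):
    """Estimate the true state by subtracting the predicted additive offset."""
    return np.asarray(observation, dtype=float) - predicted_offset


# Offsets recorded in past episodes (illustrative values, one row per episode).
offset_history = np.array([[0.1, 0.0], [0.2, 0.1], [0.3, 0.2]])
predicted = naive_forecast(offset_history)

# New episode: the true offset is hidden from the agent.
true_state = np.array([1.0, 2.0])
hidden_offset = np.array([0.4, 0.3])
observation = true_state + hidden_offset

# The policy stays frozen; only its input is corrected.
estimate = correct_observation(observation, predicted)

raw_error = np.linalg.norm(observation - true_state)
corrected_error = np.linalg.norm(estimate - true_state)
```

Because the forecast is available before the episode starts, the correction applies from the first step and requires no policy update; how much it helps depends entirely on the quality of the forecaster.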
Furthermore, the agent will remain uncertain of its location across all episodes at test time, because in every episode a new offset leads to a systematic misalignment between perceived and actual positions. Importantly, these offsets may not conform to Gaussian or Markovian assumptions; instead, they may stem directly from complex, real-world time-series data [9] and remain constant throughout each episode. As a result, standard noise-driven or parametric state-estimation techniques, which typically rely on smoothly varyi

…(Full text truncated)…

Reference

This content is AI-processed based on ArXiv data.