📝 Original Info

- Title: STRIDE: A Systematic Framework for Selecting AI Modalities – Agentic AI, AI Assistants, or LLM Calls

- ArXiv ID: 2512.02228

- Date: 2025-12-01

- Authors: Shubhi Asthana, Bing Zhang, Chad DeLuca, Ruchi Mahindru, Hima Patel

📝 Abstract

The rapid shift from stateless large language models (LLMs) to autonomous, goal-driven agents raises a central question: When is agentic AI truly necessary? While agents enable multi-step reasoning, persistent memory, and tool orchestration, deploying them indiscriminately leads to higher cost, complexity, and risk. We present STRIDE (Systematic Task Reasoning Intelligence Deployment Evaluator), a framework that provides principled recommendations for selecting between three modalities: (i) direct LLM calls, (ii) guided AI assistants, and (iii) fully autonomous agentic AI. STRIDE integrates structured task decomposition, dynamism attribution, and self-reflection requirement analysis to produce an Agentic Suitability Score, ensuring that full agentic autonomy is reserved for tasks with inherent dynamism or evolving context. Evaluated across 30 real-world tasks spanning SRE, compliance, and enterprise automation, STRIDE achieved 92% accuracy in modality selection, reduced unnecessary agent deployments by 45%, and cut resource costs by 37%. Expert validation over six months in SRE and compliance domains confirmed its practical utility, with domain specialists agreeing that STRIDE effectively distinguishes between tasks requiring simple LLM calls, guided assistants, or full agentic autonomy. This work reframes agent adoption as a necessity-driven design decision, ensuring autonomy is applied only when its benefits justify the costs.


📄 Full Content

STRIDE: A Systematic Framework for Selecting AI Modalities – Agentic AI, AI Assistants, or LLM Calls

Shubhi Asthana¹, Bing Zhang¹, Chad DeLuca¹, Ruchi Mahindru², Hima Patel³

¹ IBM Research – Almaden, CA, USA

² IBM Research – Yorktown, NY, USA

³ IBM Research – India

{sasthan, delucac}@us.ibm.com, bing.zhang@ibm.com, rmahindr@us.ibm.com, himapatel@in.ibm.com

Abstract

The rapid shift from stateless large language models (LLMs) to autonomous, goal-driven agents raises a central question: When is agentic AI truly necessary? While agents enable multi-step reasoning, persistent memory, and tool orchestration, deploying them indiscriminately leads to higher cost, complexity, and risk.

We present STRIDE (Systematic Task Reasoning Intelligence Deployment Evaluator), a framework that provides principled recommendations for selecting between three modalities: (i) direct LLM calls, (ii) guided AI assistants, and (iii) fully autonomous agentic AI. STRIDE integrates structured task decomposition, dynamism attribution, and self-reflection requirement analysis to produce an Agentic Suitability Score, ensuring that full agentic autonomy is reserved for tasks with inherent dynamism or evolving context.

Evaluated across 30 real-world tasks spanning SRE, compliance, and enterprise automation, STRIDE achieved 92% accuracy in modality selection, reduced unnecessary agent deployments by 45%, and cut resource costs by 37%. Expert validation over six months in SRE and compliance domains confirmed its practical utility, with domain specialists agreeing that STRIDE effectively distinguishes between tasks requiring simple LLM calls, guided assistants, or full agentic autonomy. This work reframes agent adoption as a necessity-driven design decision, ensuring autonomy is applied only when its benefits justify the costs.

1 Introduction

Recent advances have transformed AI from simple stateless LLM calls to sophisticated autonomous agents, enabling richer reasoning, tool use, and adaptive workflows. While this progression unlocks significant value in domains such as site reliability engineering (SRE), compliance, and automation, it also introduces substantial trade-offs in cost, complexity, and risk. A central design challenge emerges: when are agents truly necessary, and when are simpler alternatives sufficient?

We distinguish three modalities: (i) LLM calls, which provide single-turn inference without memory or tools and are ideal for straightforward query-response scenarios; (ii) AI assistants, which handle guided multi-step workflows with short-term context and limited tool access and are suited to structured processes requiring human oversight; and (iii) agentic AI, which autonomously decomposes tasks, orchestrates tools, and adapts with minimal oversight, as is necessary in complex, dynamic environments requiring independent decision-making. Table 1 contrasts these modalities.
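As a compact reference, the three modalities can be written down as a small lookup structure. The sketch below only transcribes the attribute values listed in Table 1; the names `Modality`, `ModalityProfile`, `PROFILES`, and `modalities_with_state` are illustrative assumptions and do not come from the paper.

```python
from dataclasses import dataclass
from enum import Enum


class Modality(Enum):
    LLM_CALL = "LLM call"
    AI_ASSISTANT = "AI assistant"
    AGENTIC_AI = "Agentic AI"


@dataclass(frozen=True)
class ModalityProfile:
    """One column of Table 1 (field names are illustrative)."""
    reasoning_depth: str
    tool_needs: str
    state_needs: str
    risk_profile: str
    example: str


# Values transcribed from Table 1 of the paper.
PROFILES = {
    Modality.LLM_CALL: ModalityProfile(
        "Shallow", "Single", "None", "Low", "Exchange rate lookup"),
    Modality.AI_ASSISTANT: ModalityProfile(
        "Medium", "Single/Multiple", "Ephemeral", "Medium", "Summarize meeting notes"),
    Modality.AGENTIC_AI: ModalityProfile(
        "Deep", "Multiple", "Persistent", "High", "Plan 5-day travel itinerary"),
}


def modalities_with_state(state_needs: str) -> list[Modality]:
    """Return the modalities whose state needs match the given label."""
    return [m for m, p in PROFILES.items() if p.state_needs == state_needs]
```

For instance, `modalities_with_state("Persistent")` returns only `Modality.AGENTIC_AI`, reflecting that persistent state is the attribute unique to the agentic column.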

Table 1: Comparison of AI Modalities

| Attribute | LLM Call | AI Assistant | Agentic AI |
| --- | --- | --- | --- |
| Reasoning Depth | Shallow | Medium | Deep |
| Tool Needs | Single | Single/Multiple | Multiple |
| State Needs | None | Ephemeral | Persistent |
| Risk Profile | Low | Medium | High |
| Use Case Example | Exchange rate lookup | Summarize meeting notes | Plan 5-day travel itinerary |

39th Conference on Neural Information Processing Systems (NeurIPS 2025) Workshop: LAW - Bridging Language, Agent, and World Models.

arXiv:2512.02228v1 [cs.AI] 1 Dec 2025

Current practice often overuses agentic AI, deploying autonomous systems even when simpler modalities would suffice. This tendency leads to unnecessary cost, complexity, and risk, particularly in enterprise contexts where reliability and governance are critical. A principled framework for deciding when agents are truly necessary has been missing, leaving design-time choices largely intuition-driven rather than evidence-based. While agentic AI unlocks transformative value in domains like SRE, compliance verification, and complex automation, deploying it indiscriminately carries risks:

• Overengineering: using agents for simple queries wastes compute and developer effort.

• Security & compliance risks: uncontrolled tool use and API calls may leak sensitive data.

• System instability: recursive loops and unbounded workflows degrade reliability.

We propose STRIDE, a novel framework for necessity assessment at design time: systematically deciding whether a given task should be solved with an LLM call, an AI assistant, or agentic AI. STRIDE analyzes task descriptions across four integrated analytical dimensions:

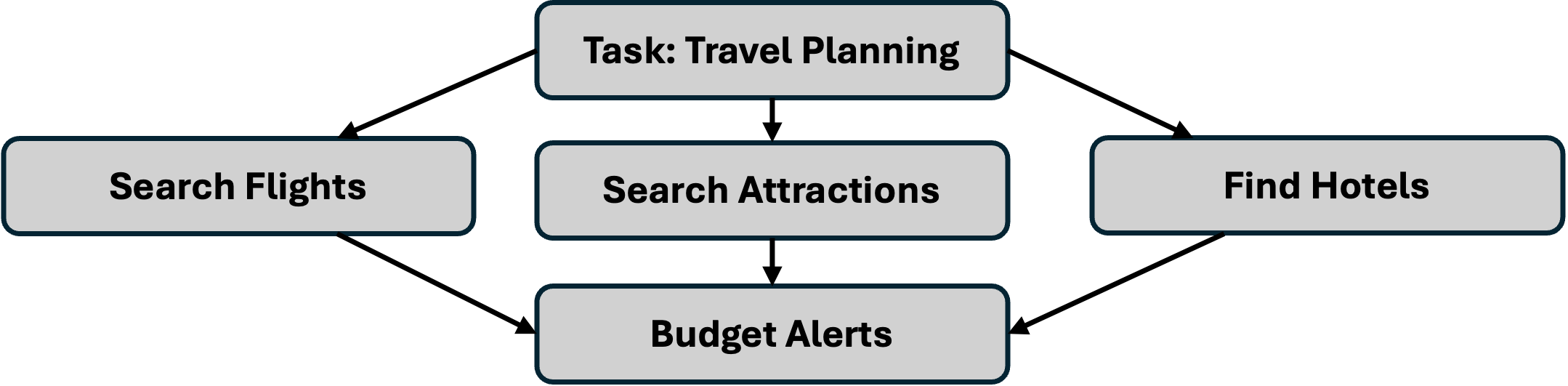

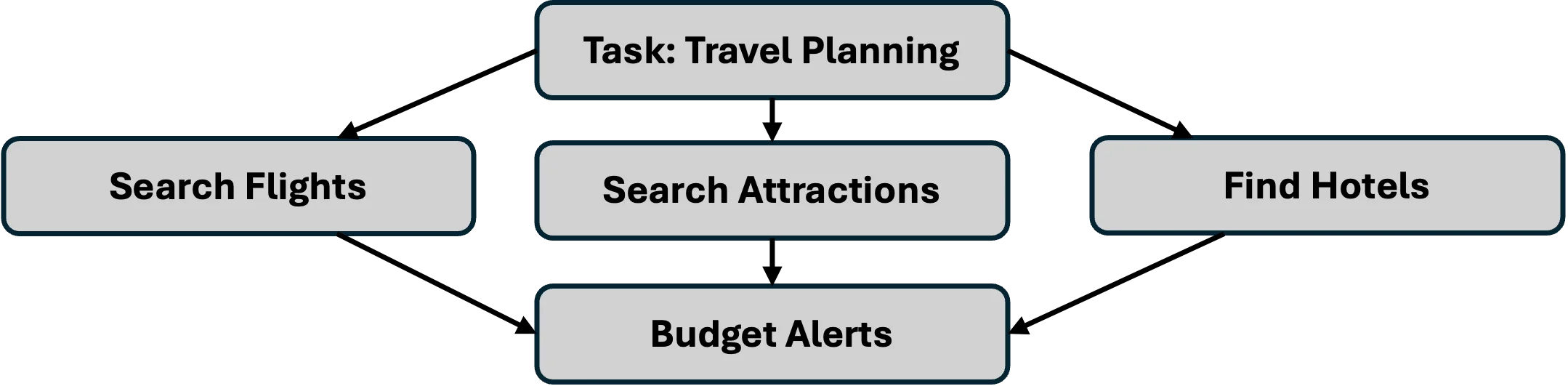

• Structured Task Decomposition: Tasks are decomposed into a directed acyclic graph (DAG) of subtasks, systematically breaking down objectives to reveal inherent complexity, interdependencies, and sequential reasoning requirements that distinguish simple queries from multi-step challenges.

• Dynamic Reasoning and Tool-Interaction Scoring: STRIDE quantifies reasoning depth together with tool dependencies, external data access, and API requirements, identifying when sophisti

…(Full text truncated)…
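The Structured Task Decomposition dimension described above can be illustrated with a small sketch. The excerpt does not show how STRIDE builds or scores its DAG, so everything here is an assumption made for illustration: the subtask names are hypothetical, and the length of the longest dependency chain is used only as one plausible proxy for "sequential reasoning requirements". It is not the paper's Agentic Suitability Score. The sketch relies on `graphlib.TopologicalSorter` from the Python standard library (Python 3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical decomposition of an incident-triage task.
# Each key is a subtask; each value is the set of subtasks it depends on.
subtasks = {
    "fetch_alerts": set(),
    "fetch_logs": set(),
    "correlate": {"fetch_alerts", "fetch_logs"},
    "diagnose": {"correlate"},
    "draft_report": {"diagnose"},
}


def execution_order(dag):
    """Return one valid order in which every dependency precedes its dependents."""
    # Raises graphlib.CycleError if the graph is not acyclic.
    return list(TopologicalSorter(dag).static_order())


def sequential_depth(dag):
    """Number of subtasks on the longest dependency chain (illustrative metric)."""
    depth = {}
    for node in TopologicalSorter(dag).static_order():
        # Dependencies are visited before the node, so their depths are known.
        deps = dag.get(node, ())
        depth[node] = 1 + max((depth[d] for d in deps), default=0)
    return max(depth.values(), default=0)


order = execution_order(subtasks)
chain_length = sequential_depth(subtasks)
```

In this toy graph the longest chain has four subtasks (a fetch step, then `correlate`, `diagnose`, and `draft_report`), whereas a single-node graph would have depth 1, which is the kind of contrast between a simple query and a multi-step task that the decomposition is meant to expose.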


Reference

This content is AI-processed based on ArXiv data.