📝 Original Info

- Title: EmoRAG: Evaluating RAG Robustness to Symbolic Perturbations

- ArXiv ID: 2512.01335

- Date: 2025-12-01

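- Authors: Xinyun Zhou, Xinfeng Li, Yinan Peng, Ming Xu, Xuanwang Zhang, Miao Yu, Yidong Wang, Xiaojun Jia, Kun Wang, Qingsong Wen, XiaoFeng Wang, Wei Dong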

📝 Abstract

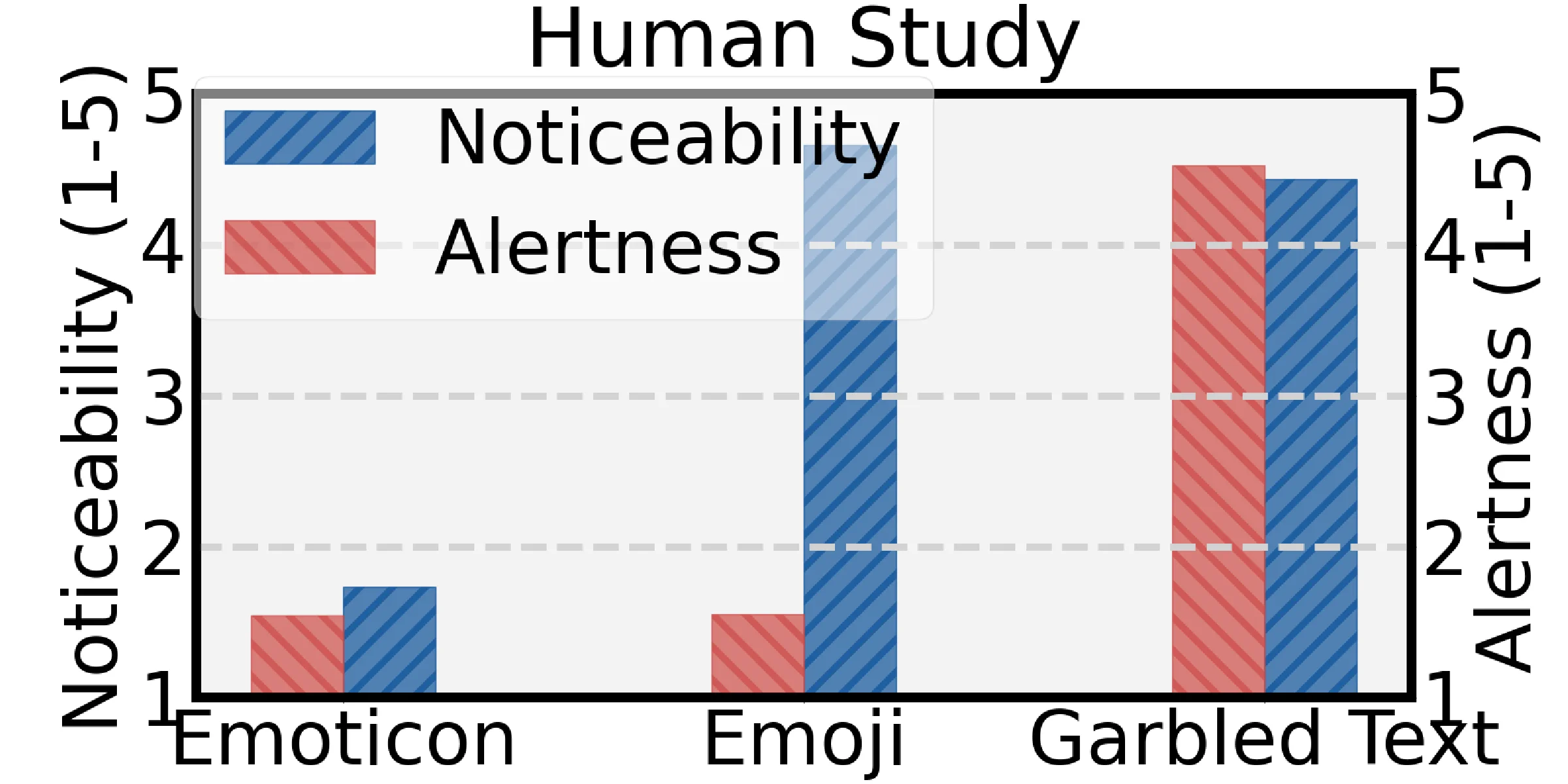

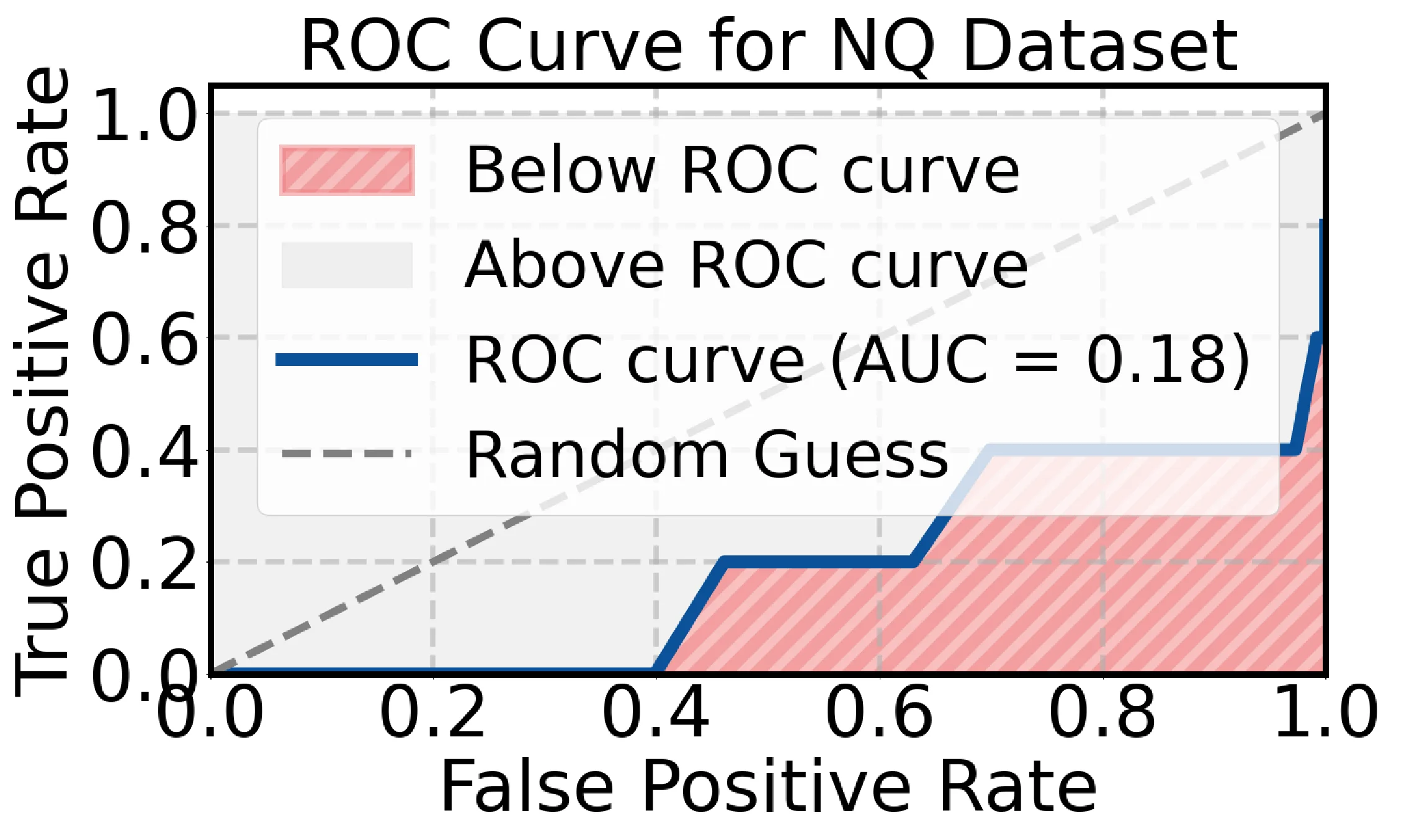

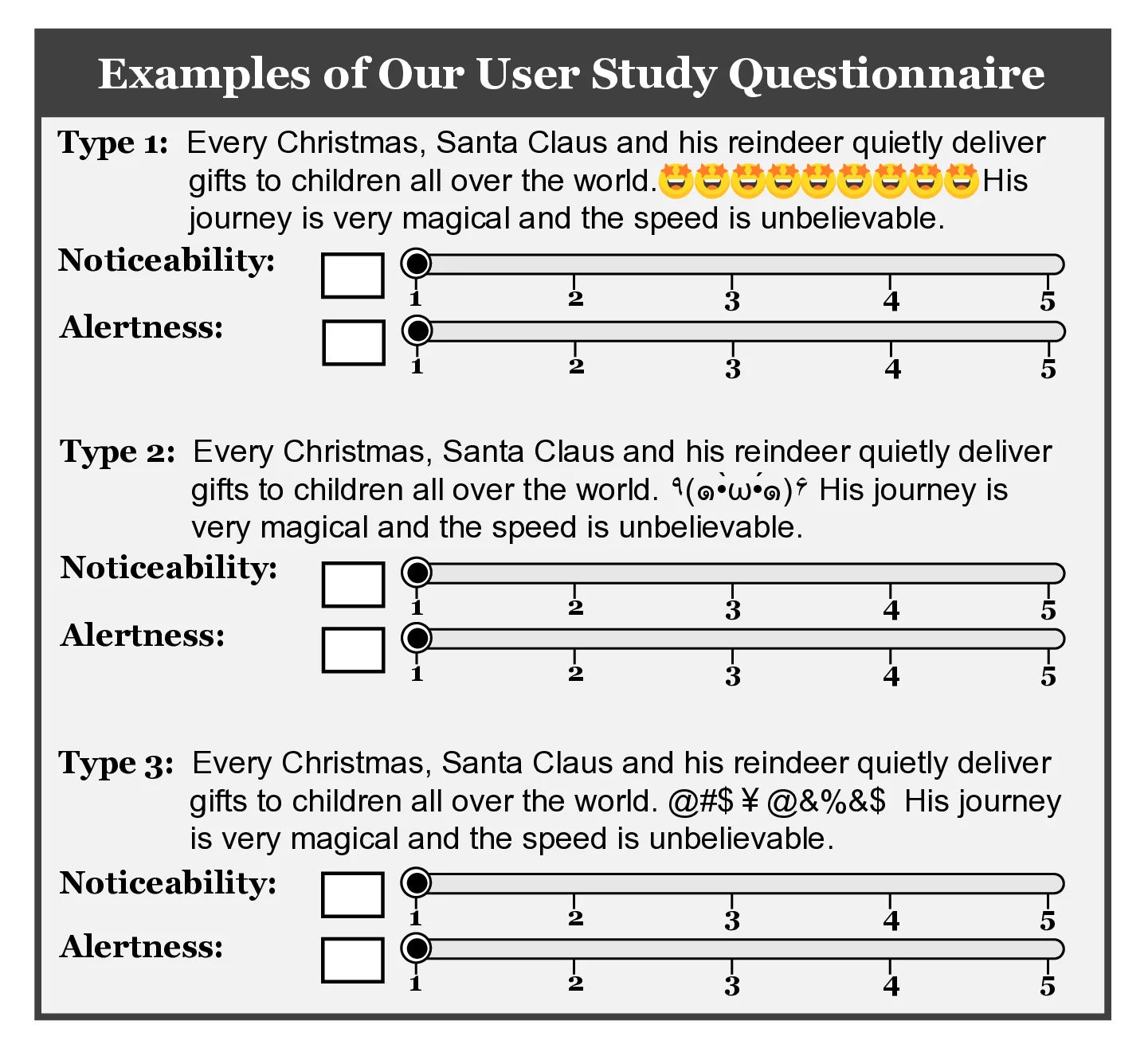

Retrieval-Augmented Generation (RAG) systems are increasingly central to robust AI, enhancing large language model (LLM) faithfulness by incorporating external knowledge. However, our study unveils a critical, overlooked vulnerability: their profound susceptibility to subtle symbolic perturbations, particularly through near-imperceptible emoticon tokens such as "(@_@)" that can catastrophically mislead retrieval, termed EmoRAG. We demonstrate that injecting a single emoticon into a query makes it nearly 100% likely to retrieve semantically unrelated texts that contain a matching emoticon. Our extensive experiment across general question-answering and code domains, using a range of state-of-the-art retrievers and generators, reveals three key findings: (I) Single-Emoticon Disaster: Minimal emoticon injections cause maximal disruptions, with a single emoticon almost 100% dominating RAG output. (II) Positional Sensitivity: Placing an emoticon at the beginning of a query can cause severe perturbation, with F1-Scores exceeding 0.92 across all datasets. (III) Parameter-Scale Vulnerability: Counterintuitively, models with larger parameters exhibit greater vulnerability to the interference. We provide an in-depth analysis to uncover the underlying mechanisms of these phenomena. Furthermore, we raise a critical concern regarding the robustness assumption of current RAG systems, envisioning a threat scenario where an adversary exploits this vulnerability to manipulate the RAG system. We evaluate standard defenses and find them insufficient against EmoRAG. To address this, we propose targeted defenses, analyzing their strengths and limitations in mitigating emoticon-based perturbations. Finally, we outline future directions for building robust RAG systems.


📄 Full Content

EmoRAG: Evaluating RAG Robustness to Symbolic Perturbations

Xinyun Zhou†∗ (ZJU, Hangzhou, China), xinyun.zhou@zju.edu.cn

Xinfeng Li†✉ (NTU, Singapore), xinfeng.li@ntu.edu.sg

Yinan Peng (Hengxin Tech., Singapore), yinan.peng@palmim.com

Ming Xu (NUS, Singapore), ming.xu@nus.edu.sg

Xuanwang Zhang (NJU, Nanjing, China), zxw.ubw@gmail.com

Miao Yu (NTU, Singapore), fishthreewater@gmail.com

Yidong Wang (PKU, Beijing, China), yidongwang37@gmail.com

Xiaojun Jia (NTU, Singapore), jiaxiaojunqaq@gmail.com

Kun Wang (NTU, Singapore), kun.wang@ntu.edu.sg

Qingsong Wen (Squirrel Ai Learning, Seattle, WA, USA), qingsongedu@gmail.com

XiaoFeng Wang (NTU, Singapore), xiaofeng.wang@ntu.edu.sg

Wei Dong (NTU, Singapore), wei_dong@ntu.edu.sg

Abstract

Retrieval-Augmented Generation (RAG) systems are increasingly central to robust AI, enhancing large language model (LLM) faithfulness by incorporating external knowledge. However, our study unveils a critical, overlooked vulnerability: their profound susceptibility to subtle symbolic perturbations, particularly through near-imperceptible emotional icons (e.g., “(@_@)”) that can catastrophically mislead retrieval, termed EmoRAG. We demonstrate that injecting a single emoticon into a query makes it nearly 100% likely to retrieve semantically unrelated texts, which contain a matching emoticon. Our extensive experiment across general question-answering and code domains, using a range of state-of-the-art retrievers and generators, reveals three key findings: (I) Single-Emoticon Disaster: Minimal emoticon injections cause maximal disruptions, with a single emoticon almost 100% dominating RAG output. (II) Positional Sensitivity: Placing an emoticon at the beginning of a query can cause severe perturbation, with F1-Scores exceeding 0.92 across all datasets. (III) Parameter-Scale Vulnerability: Counterintuitively, models with larger parameters exhibit greater vulnerability to the interference. We provide an in-depth analysis to uncover the underlying mechanisms of these phenomena. Furthermore, we raise a critical concern regarding the robustness assumption of current RAG systems, envisioning a threat scenario where an adversary exploits this vulnerability to manipulate the RAG system. We evaluate standard defenses and find them insufficient against EmoRAG. To address this, we propose targeted defenses, analyzing their strengths and limitations in mitigating emoticon-based perturbations. Finally, we outline future directions for building robust RAG systems.

Keywords

Retrieval-Augmented-Generation, Symbolic Perturbations, Large Language Models

†Co-first author.

∗Work done when the author was visiting Wei Dong’s group at NTU.

✉Corresponding author.

1 Introduction

Large language models (LLMs) excel in many tasks but face limitations such as hallucinations [29] and difficulty in assimilating new knowledge [52]. To address these shortcomings and promote more robust AI systems, Retrieval-Augmented Generation (RAG) has emerged as a promising framework. By integrating a retriever, an external knowledge database, and a generator (LLM), RAG aims to produce contextually accurate, up-to-date responses. Tools like ChatGPT Retrieval Plugin, LangChain, and applications like Bing Search exemplify RAG’s growing influence.
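As a rough illustration of how the three components named above (retriever, knowledge database, generator) fit together, the following is a minimal sketch and not the pipeline evaluated in the paper. It assumes a toy bag-of-words cosine retriever in place of a neural retriever, and it stops at prompt construction instead of calling an LLM. The names `tokenize`, `cosine`, `retrieve`, and `build_prompt`, and the three example passages, are hypothetical and introduced only for this example.

```python
import math
import re
from collections import Counter


def tokenize(text):
    # Toy tokenizer (assumption): lowercase alphanumeric word tokens only.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    # Cosine similarity between two token-count vectors stored as Counters.
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, knowledge_base, k=2):
    # Retriever stand-in: rank passages by similarity to the query, keep top k.
    q_vec = Counter(tokenize(query))
    ranked = sorted(
        knowledge_base,
        key=lambda doc: cosine(q_vec, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, passages):
    # Generator input: the retrieved passages are prepended as context.
    context = "\n".join(f"- {p}" for p in passages)
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


# Hypothetical knowledge database for illustration only.
knowledge_base = [
    "Paris is the capital of France.",
    "The Great Wall of China is thousands of kilometres long.",
    "Python is a programming language.",
]

query = "What is the capital of France?"
top = retrieve(query, knowledge_base, k=1)
prompt = build_prompt(query, top)  # this string would be sent to the LLM
```

In this sketch the generator only ever sees what the retriever returns, so whatever determines the retrieval ranking also determines the context the LLM answers from.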

Recent research has primarily focused on enhancing model performance by improving the retriever component [48, 68], refining the generator’s capabilities [11], or exploring joint optimization of both components [56, 59]. A common thread in these efforts is the assumption that retrieval quality hinges on the semantic relevance between user queries and knowledge base texts. However, does the outcome of retrieval in RAG systems truly rely on semantic relevance?

We uncover a critical, previously overlooked phenomenon: a stark decoupling between semantic relevance and retrieval outcomes in RAG systems. We demonstrate that subtle symbolic perturbations, specifically the injection of seemingly innocuous emoticons, can catastrophically hijack the retrieval process, forcing the system to prioritize irrelevant, emoticon-matched content over semantically pertinent information (as illustrated in Figure 1). This vulnerability, which we term EmoRAG, exposes a significant chink in the armor of current RAG architectures. We meticulously investigate this by conducting controlled experiments across diverse datasets from different domains, using a variety of state-of-the-art retrievers and generators (LLMs). Specifically, we utilize two widely used general Q&A datasets: Natural Questions [37] and MS-MARCO [8]. Also, we extend our evaluation to a specialized domain, incorporating a dataset from Code [13]. Our study systematically varies factors such as the number, position, and type of emoticons, and evaluates advanced RAG frameworks and the potential for cross-emoticon triggering.
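To make the varied factors concrete (number, position, and type of emoticons), here is a minimal sketch of how such perturbed queries might be constructed and how the share of emoticon-bearing results could be counted. It is an assumption-laden illustration, not the authors' experimental code: the helper names `inject_emoticon` and `emoticon_hit_rate`, the word-level notion of "position", and the hit-rate measure are all hypothetical and are not the paper's F1-Score metric.

```python
def inject_emoticon(query, emoticon="(@_@)", position="start", count=1):
    # Insert `count` copies of `emoticon` into `query` at a word boundary.
    # "Position" is defined at word level here, which is an assumption.
    words = query.split()
    if position == "start":
        idx = 0
    elif position == "middle":
        idx = len(words) // 2
    elif position == "end":
        idx = len(words)
    else:
        raise ValueError("position must be 'start', 'middle', or 'end'")
    return " ".join(words[:idx] + [emoticon] * count + words[idx:])


def emoticon_hit_rate(retrieved_texts, emoticon="(@_@)"):
    # Fraction of retrieved texts that contain the emoticon (hypothetical measure).
    if not retrieved_texts:
        return 0.0
    hits = sum(emoticon in text for text in retrieved_texts)
    return hits / len(retrieved_texts)


# Build one perturbed query per position for a single example question.
variants = {
    pos: inject_emoticon("who wrote hamlet", position=pos)
    for pos in ("start", "middle", "end")
}
```

Each perturbed query would then be passed to the retriever under test, and `emoticon_hit_rate` applied to its top-k results; swapping the `emoticon` argument covers the "type" factor, and `count` covers the "number" factor.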

Why focus on emoticons? Symbolic perturbations, such as emoticons (e.g., ‘:-)’) or emojis, convey meaning visually rather than

To Appear in the A

…(Full text truncated)…
