📝 Original Info

- Title: Multi-Path Collaborative Reasoning via Reinforcement Learning

- ArXiv ID: 2512.01485

- Date: 2025-12-01

- Authors: Jindi Lv (1,2), Yuhao Zhou (1), Zheng Zhu (2), Xiaofeng Wang (2,3), Guan Huang (2), Jiancheng Lv (1)

- Affiliations: (1) Sichuan University, (2) GigaAI, (3) Tsinghua University

📝 Abstract

Chain-of-Thought (CoT) reasoning has significantly advanced the problem-solving capabilities of Large Language Models (LLMs), yet conventional CoT often exhibits internal determinism during decoding, limiting exploration of plausible alternatives. Recent methods attempt to address this by generating soft abstract tokens to enable reasoning in a continuous semantic space. However, we find that such approaches remain constrained by the greedy nature of autoregressive decoding, which fundamentally isolates the model from alternative reasoning possibilities. In this work, we propose Multi-Path Perception Policy Optimization (M3PO), a novel reinforcement learning framework that explicitly injects collective insights into the reasoning process. M3PO leverages parallel policy rollouts as naturally diverse reasoning sources and integrates cross-path interactions into policy updates through a lightweight collaborative mechanism. This design allows each trajectory to refine its reasoning with peer feedback, thereby cultivating more reliable multi-step reasoning patterns. Empirical results show that M3PO achieves state-of-the-art performance on both knowledge- and reasoning-intensive benchmarks. Models trained with M3PO maintain interpretability and inference efficiency, underscoring the promise of multi-path collaborative learning for robust reasoning.


📄 Full Content

Multi-Path Collaborative Reasoning via Reinforcement Learning

Jindi Lv (1,2), Yuhao Zhou (1), Zheng Zhu (2), Xiaofeng Wang (2,3), Guan Huang (2), Jiancheng Lv (1)

(1) Sichuan University, (2) GigaAI, (3) Tsinghua University

Project page: https://multi-path-collaborative-reasoning.github.io/

[Figure 1: four bar-chart panels, each with x-axis groups Qwen2.5-1.5B and Qwen2.5-3B]

(a) Average scores across five knowledge datasets: GRPO vs. M3PO. Y-axis: Exact Match (%). Bar labels as extracted: 26.1, 36.7, 35.6, 40.2.

(b) Average accuracy across five STEM datasets: GRPO vs. M3PO. Y-axis: Accuracy (%). Bar labels as extracted: 57.9, 69.1, 60.3, 70.5.

(c) Training-free accuracy on MATH-500: Original vs. Soft Thinking. Y-axis: Accuracy (%). Bar labels as extracted: 34.2, 58.0, 30.6, 56.0.

(d) Training-free accuracy on GSM8k: Original vs. Soft Thinking. Y-axis: Accuracy (%). Bar labels as extracted: 60.7, 76.4, 55.6, 76.7.

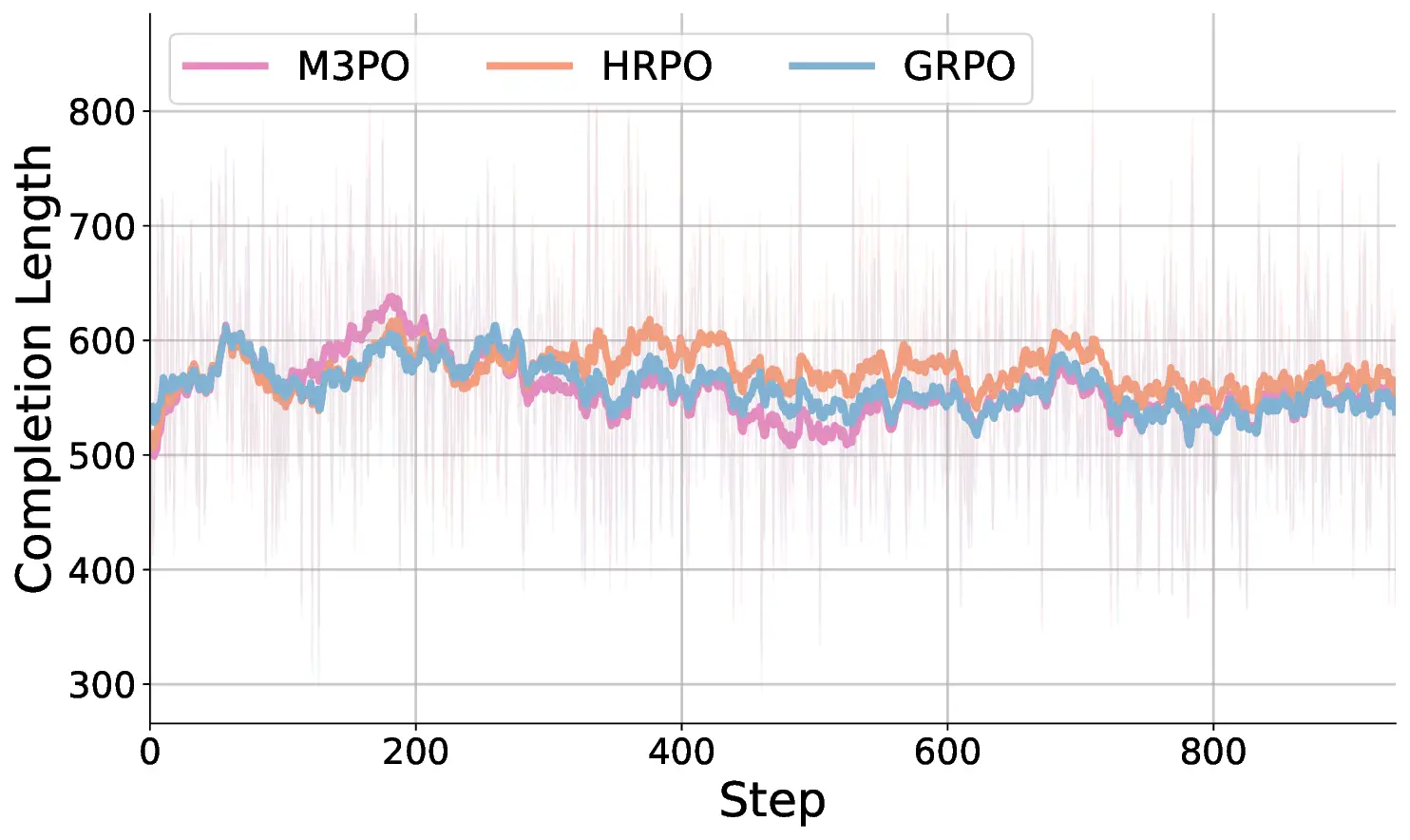

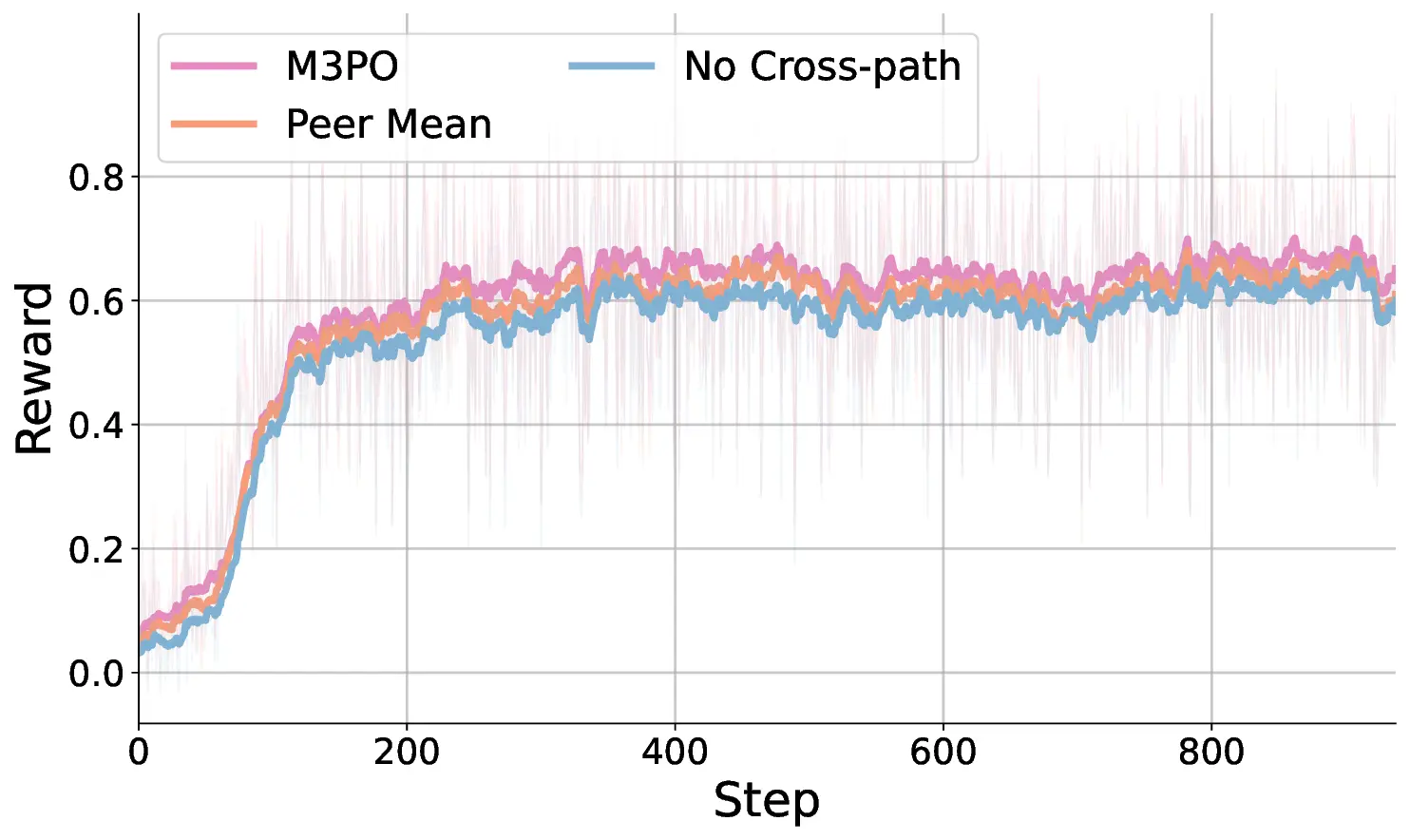

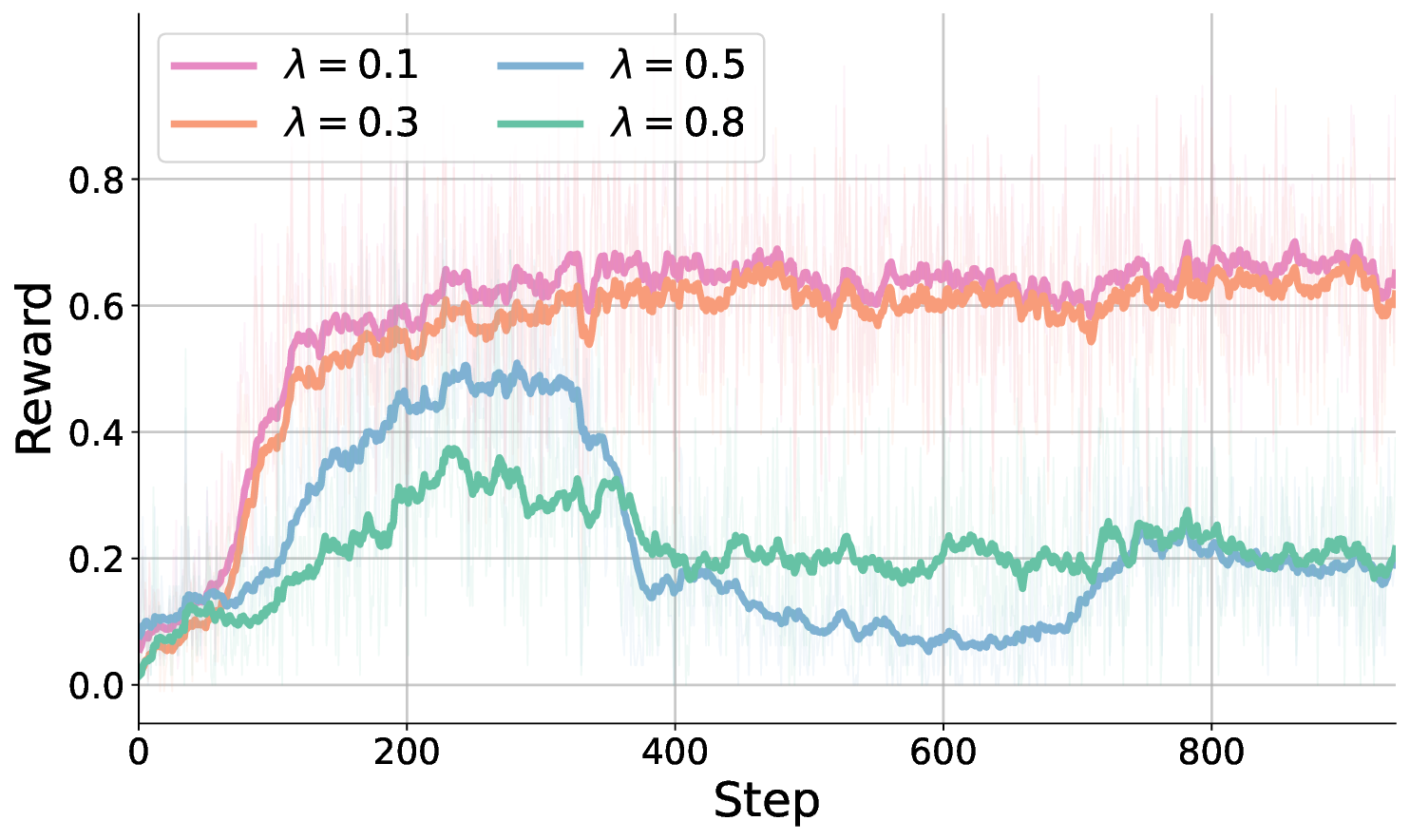

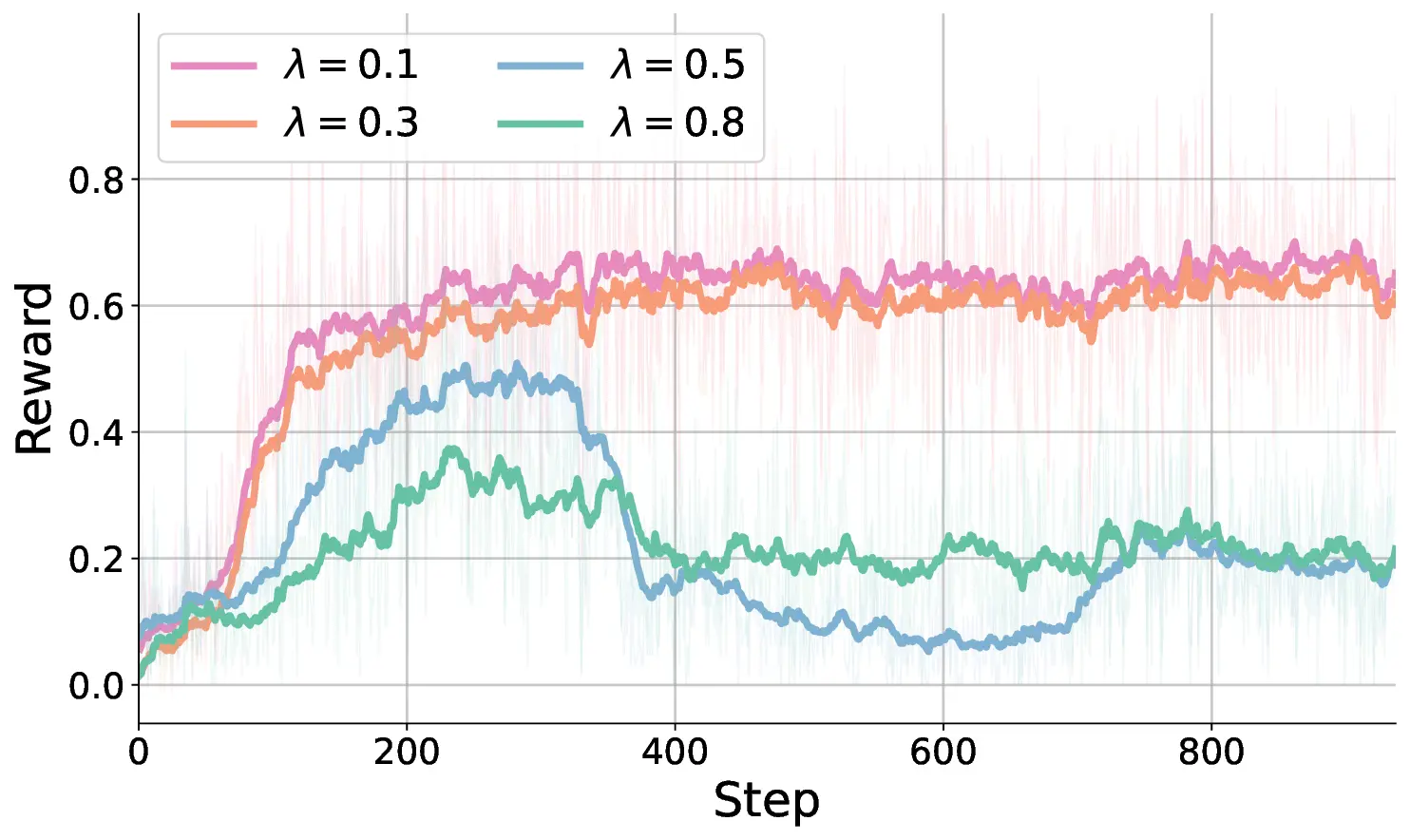

Figure 1. Comparative analysis of reasoning paradigms. Figures (a) and (b) present results on knowledge and STEM benchmarks, while Figures (c) and (d) evaluate Soft Thinking without training on MATH-500 and GSM8k. Soft Thinking shows degraded performance, indicating extraneous inference noise. In contrast, M3PO consistently improves performance on knowledge- and reasoning-intensive benchmarks (with up to a 9.5% average gain on five knowledge datasets), demonstrating the superiority of the multi-path reasoning paradigm.
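The "up to 9.5%" figure in the caption can be checked against the bar labels of panels (a) and (b). The sketch below assumes the labels are listed series by series (GRPO for both models, then M3PO for both models); that assignment is an inference from the extraction order, not something the caption states. Under this assumption the gain is an absolute difference in percentage points rather than a relative improvement.

```python
# Bar labels from Figure 1, panels (a) and (b).
# ASSUMPTION: values are ordered GRPO (1.5B, 3B), then M3PO (1.5B, 3B).
FIGURE_1 = {
    "knowledge (Exact Match %)": {
        "Qwen2.5-1.5B": {"GRPO": 26.1, "M3PO": 35.6},
        "Qwen2.5-3B": {"GRPO": 36.7, "M3PO": 40.2},
    },
    "STEM (Accuracy %)": {
        "Qwen2.5-1.5B": {"GRPO": 57.9, "M3PO": 60.3},
        "Qwen2.5-3B": {"GRPO": 69.1, "M3PO": 70.5},
    },
}


def point_gains(panel):
    """Absolute gain of M3PO over GRPO, in percentage points, per model."""
    return {
        model: round(scores["M3PO"] - scores["GRPO"], 1)
        for model, scores in panel.items()
    }


gains = {name: point_gains(panel) for name, panel in FIGURE_1.items()}

for name, per_model in gains.items():
    for model, gain in per_model.items():
        print(f"{name:28s} {model:14s} +{gain:.1f} points")
```

With this reading, the largest gain is on the knowledge benchmarks with Qwen2.5-1.5B (35.6 versus 26.1), which matches the 9.5 quoted in the caption.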

Abstract

Chain-of-Thought (CoT) reasoning has significantly advanced the problem-solving capabilities of Large Language Models (LLMs), yet conventional CoT often exhibits internal determinism during decoding, limiting exploration of plausible alternatives. Recent methods attempt to address this by generating soft abstract tokens to enable reasoning in a continuous semantic space. However, we find that such approaches remain constrained by the greedy nature of autoregressive decoding, which fundamentally isolates the model from alternative reasoning possibilities. In this work, we propose Multi-Path Perception Policy Optimization (M3PO), a novel reinforcement learning framework that explicitly injects collective insights into the reasoning process. M3PO leverages parallel policy rollouts as naturally diverse reasoning sources and integrates cross-path interactions into policy updates through a lightweight collaborative mechanism. This design allows each trajectory to refine its reasoning with peer feedback, thereby cultivating more reliable multi-step reasoning patterns. Empirical results show that M3PO achieves state-of-the-art performance on both knowledge- and reasoning-intensive benchmarks. Models trained with M3PO maintain interpretability and inference efficiency, underscoring the promise of multi-path collaborative learning for robust reasoning.

1. Introduction

The advent of Chain-of-Thought (CoT) [14, 21, 51] has markedly improved the reasoning capabilities of Large Language Models (LLMs) [29, 43, 50] on complex tasks. By generating explicit intermediate steps in natural language, CoT decomposes problems and guides models step-by-step toward the answer [4]. However, the discrete token decoding underlying standard CoT induces internal determinism, which limits the exploration of plausible alternatives [57].

Recent efforts seek to transcend discrete token decoding by introducing continuity into the reasoning process. Latent reasoning [15, 39, 41] propagates hidden states instead of tokens to enable differentiable reasoning, but such representations often deviate from the pretrained embedding space, requiring costly alignment and limiting compatibility [38]. To address this, Soft Thinking [52, 61] performs soft aggregation within the input embedding space, preserving model compatibility while simulating a smooth semantic flow.
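To make "soft aggregation within the input embedding space" concrete, here is a minimal sketch that contrasts a standard discrete step (feed back the embedding of the most likely token) with a soft step (feed back the probability-weighted average of all token embeddings). The three-token vocabulary, the 2-dimensional embedding table, and the function names are hypothetical and chosen only for illustration; the actual Soft Thinking procedure in [52, 61] may differ in details such as top-k filtering or temperature.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert raw logits into a probability distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def hard_token_embedding(probs, embedding_table):
    """Discrete decoding: embedding of the single most likely token."""
    best = max(range(len(probs)), key=probs.__getitem__)
    return list(embedding_table[best])


def soft_token_embedding(probs, embedding_table):
    """Soft aggregation: probability-weighted average of token embeddings."""
    dim = len(embedding_table[0])
    return [
        sum(p * row[d] for p, row in zip(probs, embedding_table))
        for d in range(dim)
    ]


# Hypothetical toy setup: 3 tokens with 2-dimensional embeddings.
EMBEDDINGS = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
logits = [2.0, 1.0, 0.0]

probs = softmax(logits)
hard = hard_token_embedding(probs, EMBEDDINGS)
soft = soft_token_embedding(probs, EMBEDDINGS)

print("probs:", [round(p, 3) for p in probs])
print("hard :", hard)
print("soft :", [round(v, 3) for v in soft])
```

Because the soft vector is a convex combination of rows of the existing embedding table, it stays inside the space the model was pretrained on, which is the compatibility property the paragraph above attributes to Soft Thinking.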

Nevertheless, we reveal that Soft Thinking inherently lacks the ability to represent diverse semantic trajectories in parallel [52]. As shown in Figure 2, during consecutive decoding, each subsequent distribution consistently follows the dominant semantic token from the previous step, resulting in a coherent but exclusive progression along a single trajectory. Although soft aggregation improves information capacity, it primarily reinforces the dominant path while introducing extraneous noise over time, as evidenced in Figures 1c and 1d.

arXiv:2512.01485v2 [cs.AI] 8 Dec 2025

This phenomenon stems from the greedy nature of LLMs in autoregressive generation, where hidden states evolve at each step toward the most confident semantic direction [52]. Such locally optimal decisions are amplified over long reasoning chains, resulting in a cumulative bias toward dominant paths and exclusion of alternatives. Thus, when a reasoning trajectory begins with a flawed premise, the lack of timely corrective feedback allows erroneous logic to propagate unimpeded through subsequent steps [53].
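The effect of locally optimal choices can be illustrated with a toy example. The sketch below uses a hypothetical two-step next-token table (the tokens and probabilities are invented for illustration and do not come from the paper) and compares greedy decoding with an exhaustive search over all two-token sequences.

```python
# Hypothetical next-token probabilities, keyed by the prefix generated so far.
STEP_PROBS = {
    (): {"A": 0.6, "B": 0.4},
    ("A",): {"x": 0.55, "y": 0.45},
    ("B",): {"x": 0.9, "y": 0.1},
}


def greedy_path(step_probs, length):
    """Pick the most likely token at every step, never revisiting a choice."""
    prefix, prob = (), 1.0
    for _ in range(length):
        dist = step_probs[prefix]
        token = max(dist, key=dist.get)
        prob *= dist[token]
        prefix += (token,)
    return prefix, prob


def best_path(step_probs, length):
    """Enumerate every sequence and return the one with the highest probability."""
    candidates = [((), 1.0)]
    for _ in range(length):
        candidates = [
            (prefix + (token,), prob * p)
            for prefix, prob in candidates
            for token, p in step_probs[prefix].items()
        ]
    return max(candidates, key=lambda item: item[1])


greedy_seq, greedy_prob = greedy_path(STEP_PROBS, length=2)
best_seq, best_prob = best_path(STEP_PROBS, length=2)

print("greedy:", greedy_seq, round(greedy_prob, 2))
print("best  :", best_seq, round(best_prob, 2))
```

In this toy table the greedy decoder commits to "A" because it is the most confident first step, and it never sees that the path starting with "B" has a higher overall probability. This is only an analogy for the cumulative bias described above; it does not model the hidden-state dynamics the paper analyzes.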

In this work, we propose M3PO, a novel Multi-Path Perception Policy O

…(Full text truncated)…
