This paper introduces a unified framework for constructing multi-view diffusion geometries through intertwined multi-view diffusion trajectories (MDTs), a class of inhomogeneous diffusion processes that iteratively combine the random walk operators of multiple data views. Each MDT defines a trajectory-dependent diffusion operator with a clear probabilistic and geometric interpretation, capturing over time the interplay between data views. Our formulation encompasses existing multi-view diffusion models, while providing new degrees of freedom for view interaction and fusion. We establish theoretical properties under mild assumptions, including ergodicity of both the point-wise operator and the process itself. We also derive MDT-based diffusion distances and associated embeddings via singular value decompositions. Finally, we propose various strategies for learning MDT operators within the defined operator space, guided by internal quality measures. Beyond enabling flexible model design, MDTs also offer a neutral baseline for evaluating diffusion-based approaches through comparison with randomly selected MDTs. Experiments show the practical impact of the MDT operators in a manifold learning and data clustering context.

Deep Dive into Multi-view diffusion geometry using intertwined diffusion trajectories.

Progress in data acquisition and distributed computing architectures has allowed widespread access to multi-view data, where each view provides a different representation of the same data objects. In such contexts, views may correspond to multiple data modalities (e.g. text, images, sensor data) or multiple measuring instruments, or may be produced via transformations of an original input data view (e.g. projections, texture-edge-frequency decompositions and related constructions). Two approaches for combining multi-view representations exist: representation fusion, which seeks a single common representation, and representation alignment, which aims to produce complementary representations informed by the multiple views. This work focuses on representation fusion, for which several approaches have been developed and surveyed.

Random walk-based representation learning relies on pairwise affinity measures to form graphs over point-cloud data, followed by a random walk defined via the random walk Laplacian. This operator is tightly connected to the graph-diffusion operator that models heat flow over the discrete domain encoded by the graph's vertices and edges. Powers of the transition matrix reveal relationships between points at different time scales and effectively denoise the data by pushing representations toward prominent directions captured by low-frequency eigenvectors [7,34]. Approaches such as Diffusion Maps (DM) [3] belong to the kernel eigenmap family of manifold learning methods, which use spectral decompositions to reveal geometric structure. In the single-view setting, it is known that under mild conditions the diffusion operator converges to the heat kernel on a continuous underlying manifold [3]. Since the heat kernel encodes rich geometric information [1,11], this observation is key for the diffusion-based framework. Diffusion operators have been employed in manifold learning [3], clustering [33,34], denoising, and visualization [43,13].
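The single-view pipeline described above (affinity graph, random walk operator, powers of the transition matrix, spectral embedding) can be illustrated with a minimal NumPy sketch. The Gaussian kernel, the bandwidth `sigma`, the diffusion time `t`, and the function names are illustrative assumptions, not choices taken from the paper.

```python
import numpy as np

def gaussian_kernel(X, sigma=1.0):
    """Symmetric Gaussian affinity matrix (assumed kernel choice)."""
    sq_norms = np.sum(X**2, axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * X @ X.T
    sq_dists = np.maximum(sq_dists, 0.0)  # clip tiny negatives from round-off
    return np.exp(-sq_dists / (2.0 * sigma**2))

def diffusion_map(K, t=3, n_components=2):
    """Embed points using the leading non-trivial eigenpairs of P = D^{-1} K."""
    d = K.sum(axis=1)
    # P is similar to the symmetric matrix S = D^{-1/2} K D^{-1/2},
    # so its spectrum can be obtained with a symmetric eigensolver.
    S = K / np.sqrt(np.outer(d, d))
    eigvals, eigvecs = np.linalg.eigh(S)
    order = np.argsort(-eigvals)  # sort eigenvalues in descending order
    eigvals = eigvals[order]
    psi = eigvecs[:, order] / np.sqrt(d)[:, None]  # right eigenvectors of P
    # Skip the trivial constant eigenvector; scale by eigenvalue**t.
    return psi[:, 1:n_components + 1] * eigvals[1:n_components + 1] ** t

# Toy data: two well-separated Gaussian blobs in the plane.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 0.3, (20, 2)), rng.normal(3.0, 0.3, (20, 2))])

K = gaussian_kernel(X)
P = K / K.sum(axis=1, keepdims=True)   # row-stochastic random walk operator
P_t = np.linalg.matrix_power(P, 3)     # transition probabilities after 3 steps
embedding = diffusion_map(K, t=3)
```

In this toy setting the first embedding coordinate takes opposite signs on the two blobs, which is the kind of coarse structure that low-frequency eigenvectors are expected to capture.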

The reliability and interpretability of single-view diffusion methods have motivated their extension to the multi-view setting. A central challenge in this context is to design operators that can meaningfully integrate heterogeneous information from multiple views. Existing approaches typically rely on fixed rules for coupling view-specific diffusion operators into a single composite operator. Multi-view Diffusion Maps (MVD) [24] use products of view-specific kernels to promote cross-view transitions, following multi-view spectral clustering ideas [5] that encode interactions through block structures built from cross-kernel terms. Alternating Diffusion (AD) [19] constructs a composite operator by alternating between view-specific transition matrices at each step, effectively forcing the random walk to switch views iteratively. Integrated Diffusion (ID) [20] first applies diffusion within each view independently to denoise the data, and then combines the resulting operators to capture inter-view relationships. Other methods, such as Cross-Diffusion (CR-DIFF) [40] and Composite Diffusion (COM-DIFF) [35], use both forward and backward diffusion operators to model complex interactions between views. Finally, diffusion-inspired intuition has also been applied in the multi-view setting through a sequence of graph shift operators interpreted as convolutional filters [2].
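To make the fixed coupling rules concrete, the following sketch composes two view-specific transition matrices in the alternating style and in the integrated style. It is a simplified reading of the descriptions above: the Gaussian kernel, the use of a plain matrix product to combine the denoised operators, and all function and parameter names are assumptions rather than the cited methods' exact definitions.

```python
import numpy as np

def gaussian_walk(X, sigma=1.0):
    """Row-stochastic random walk operator from a Gaussian kernel (assumed)."""
    diff = X[:, None, :] - X[None, :, :]
    K = np.exp(-np.sum(diff**2, axis=-1) / (2.0 * sigma**2))
    return K / K.sum(axis=1, keepdims=True)

def alternating_operator(P_a, P_b, n_rounds):
    """Alternating style: one step in view A, one in view B, repeated."""
    return np.linalg.matrix_power(P_a @ P_b, n_rounds)

def integrated_operator(P_a, P_b, t_a, t_b):
    """Integrated style: diffuse within each view first, then combine.

    Combining by a matrix product is an assumption made for this sketch.
    """
    return np.linalg.matrix_power(P_a, t_a) @ np.linalg.matrix_power(P_b, t_b)

# Two synthetic views of the same 30 objects, with different feature dimensions.
rng = np.random.default_rng(1)
n = 30
view_a = rng.normal(size=(n, 3))
view_b = rng.normal(size=(n, 5))
P_a, P_b = gaussian_walk(view_a), gaussian_walk(view_b)

W_alt = alternating_operator(P_a, P_b, n_rounds=2)   # P_a P_b P_a P_b
W_int = integrated_operator(P_a, P_b, t_a=2, t_b=2)  # P_a P_a P_b P_b
```

Both composites remain row-stochastic because products of row-stochastic matrices are row-stochastic; they differ only in the order in which the view operators are applied.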

While the proposed work focuses on operator-based techniques that estimate the global behavior of the random walk through iterated transition matrices, a separate family of methods operates by sampling local random walks (i.e. explicit vertex sequences), such as node2vec [12] and its variants. Extensions to multi-graph settings have also been explored [29,16,37].

Our main contributions are summarized below:

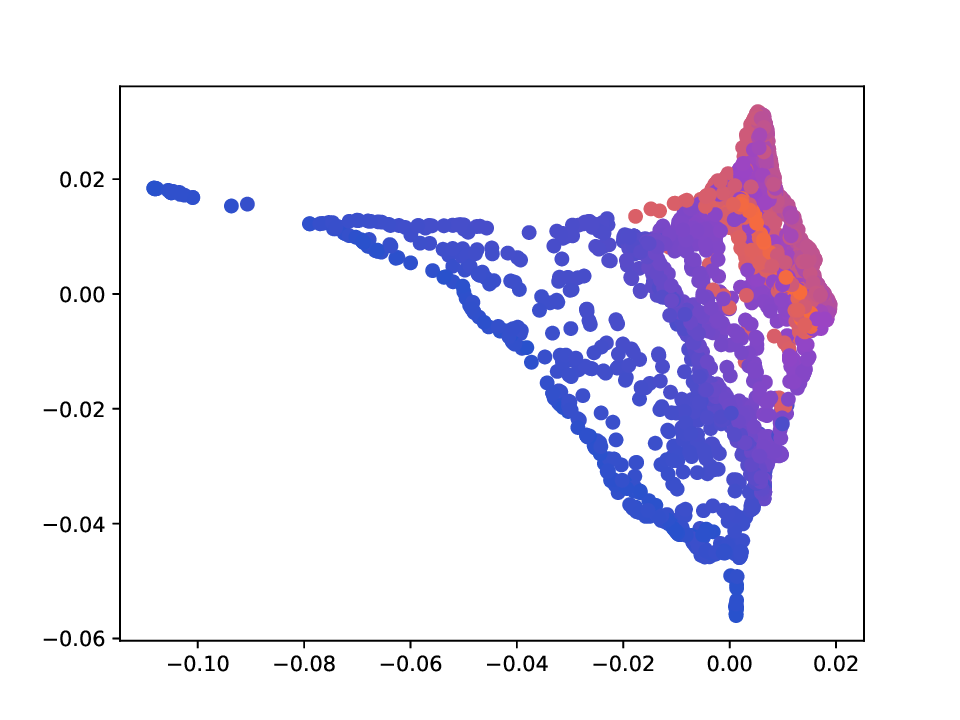

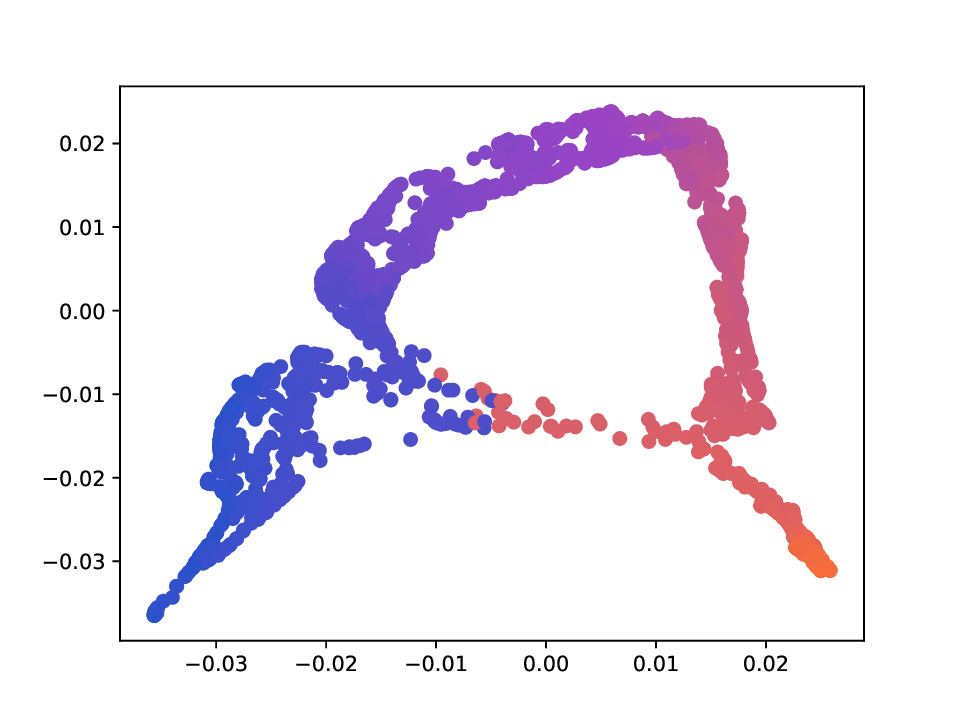

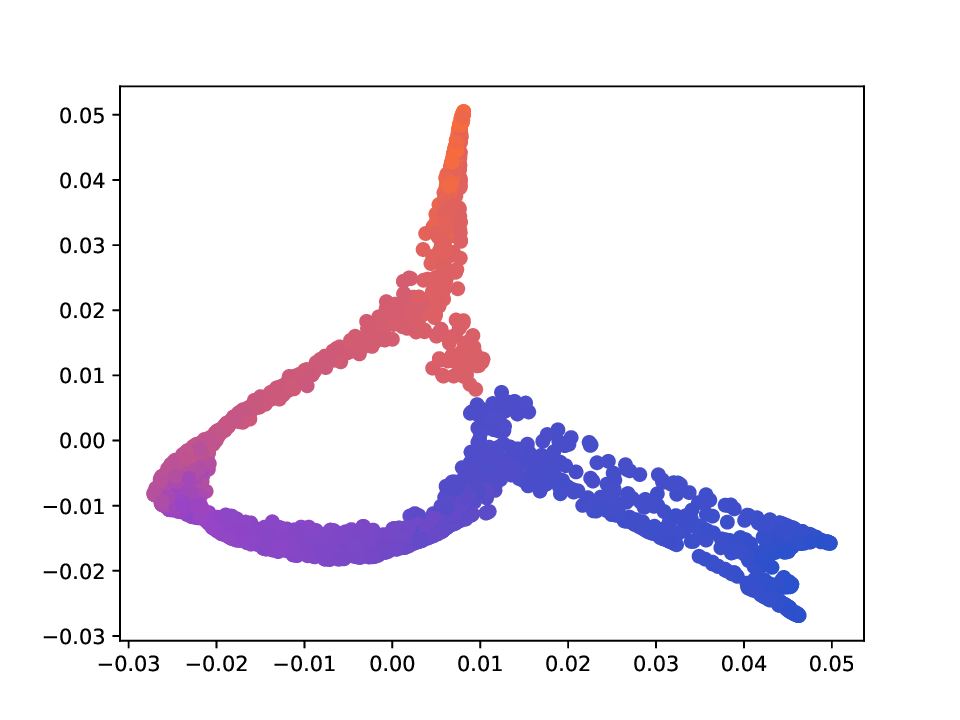

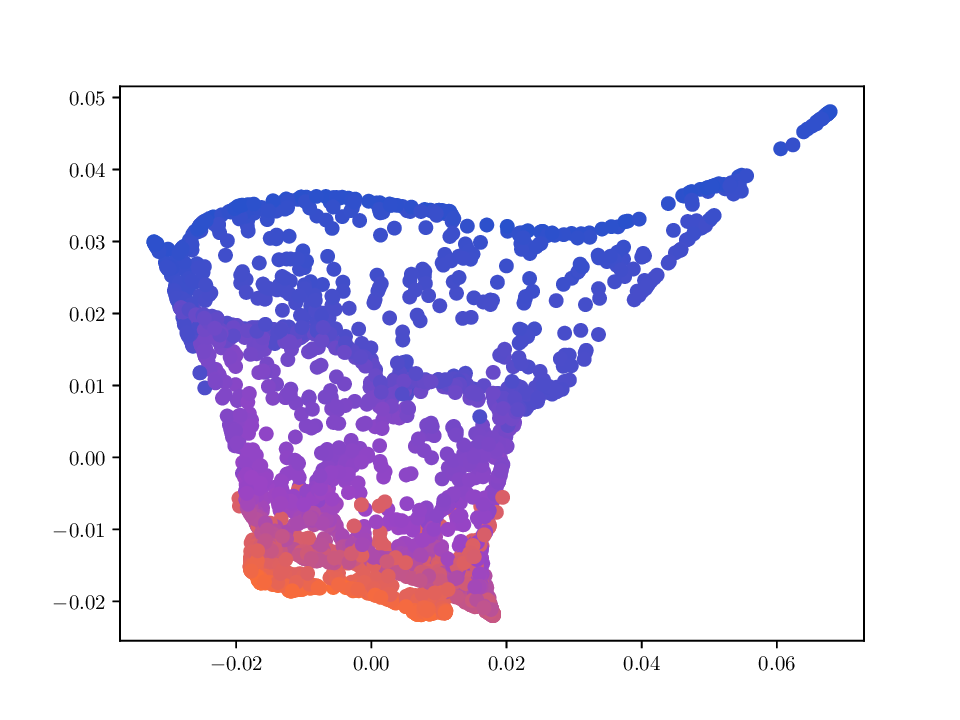

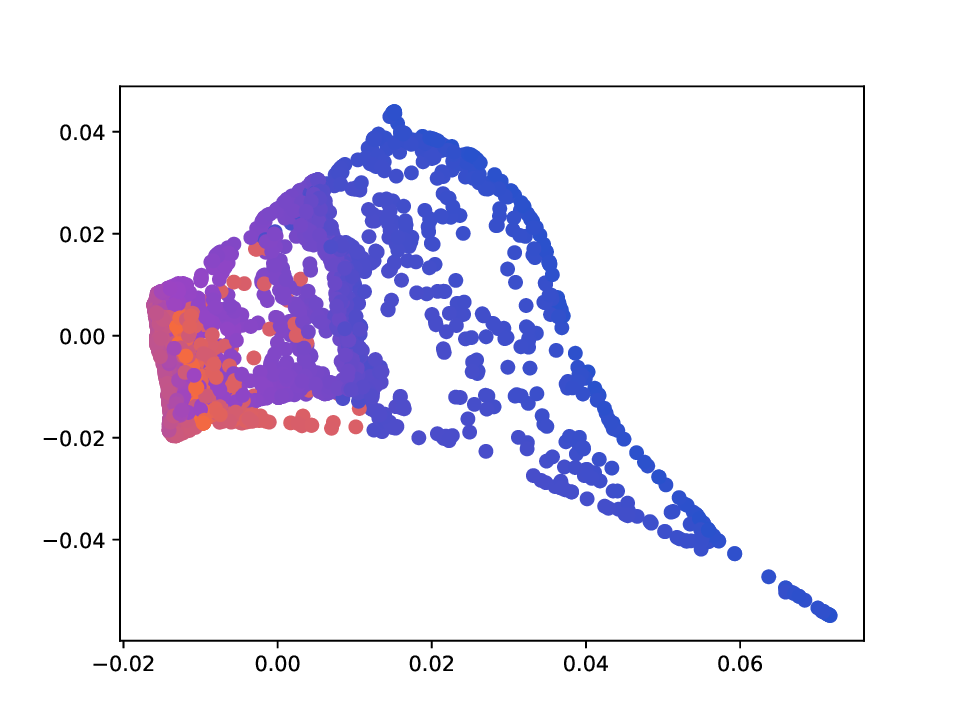

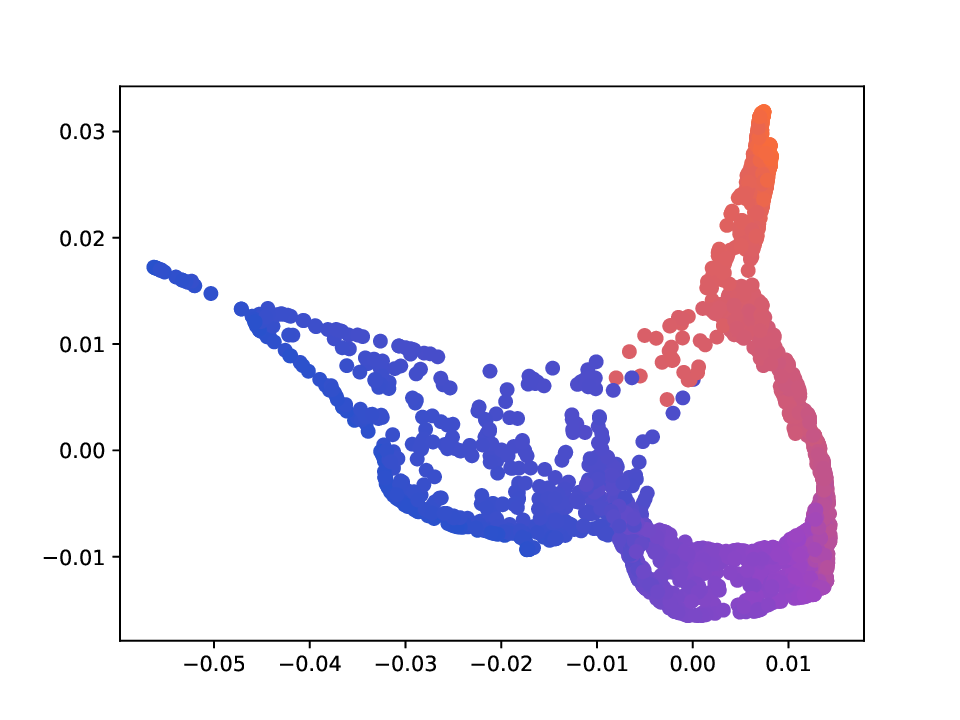

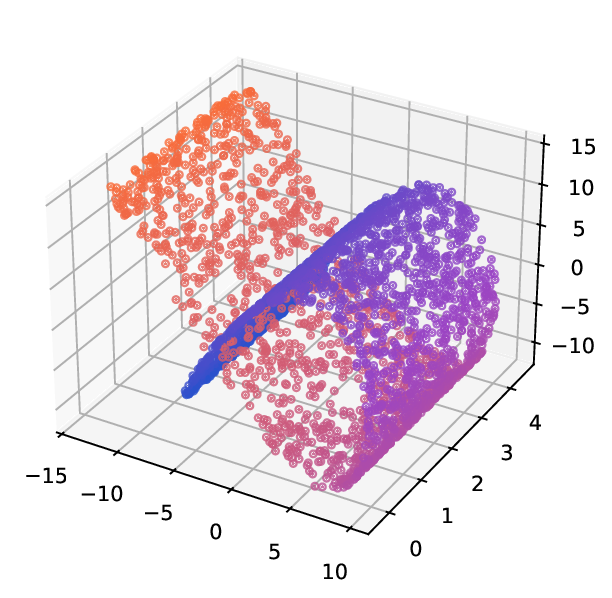

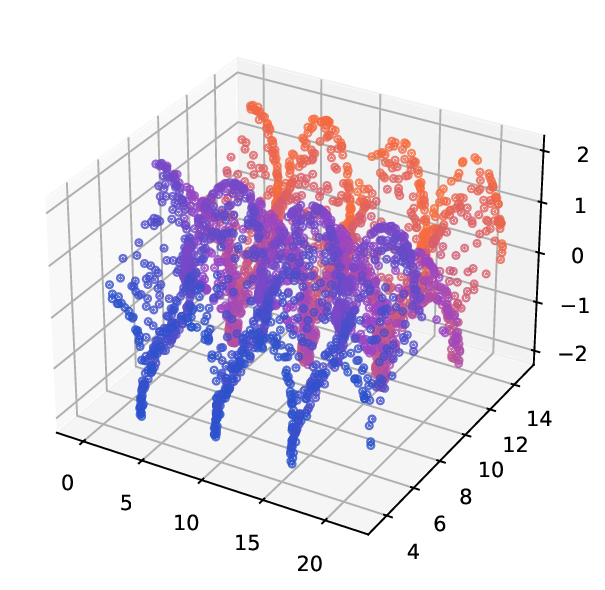

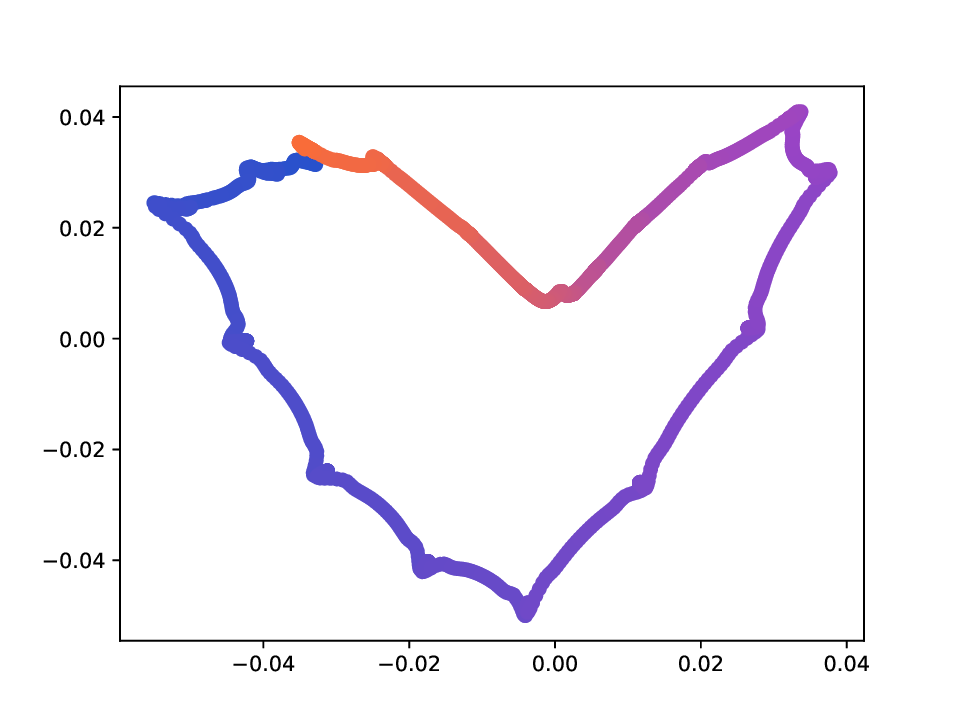

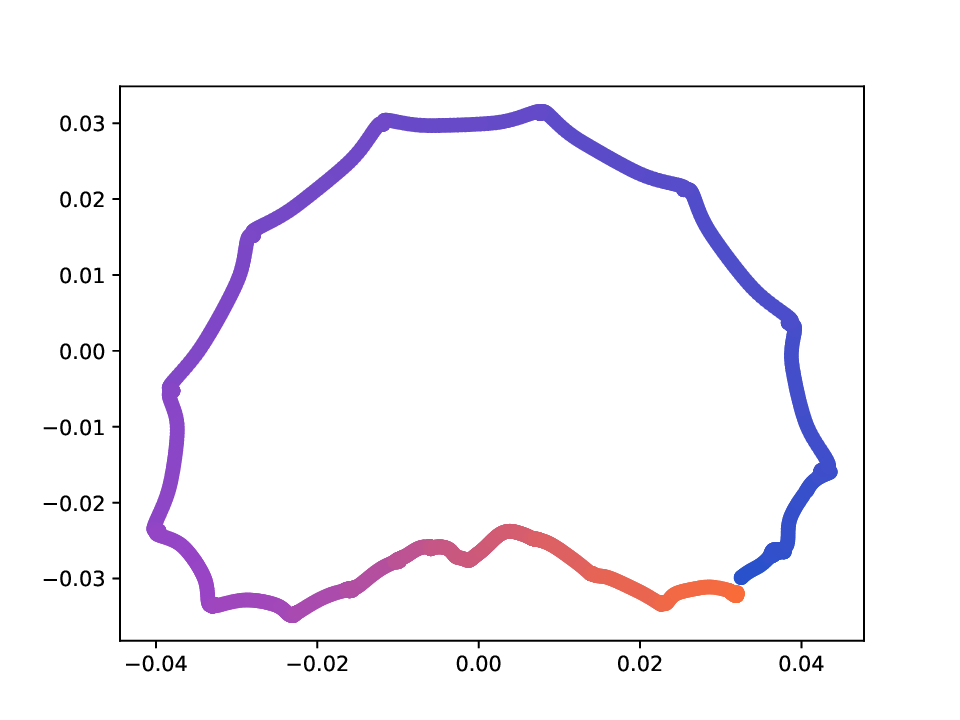

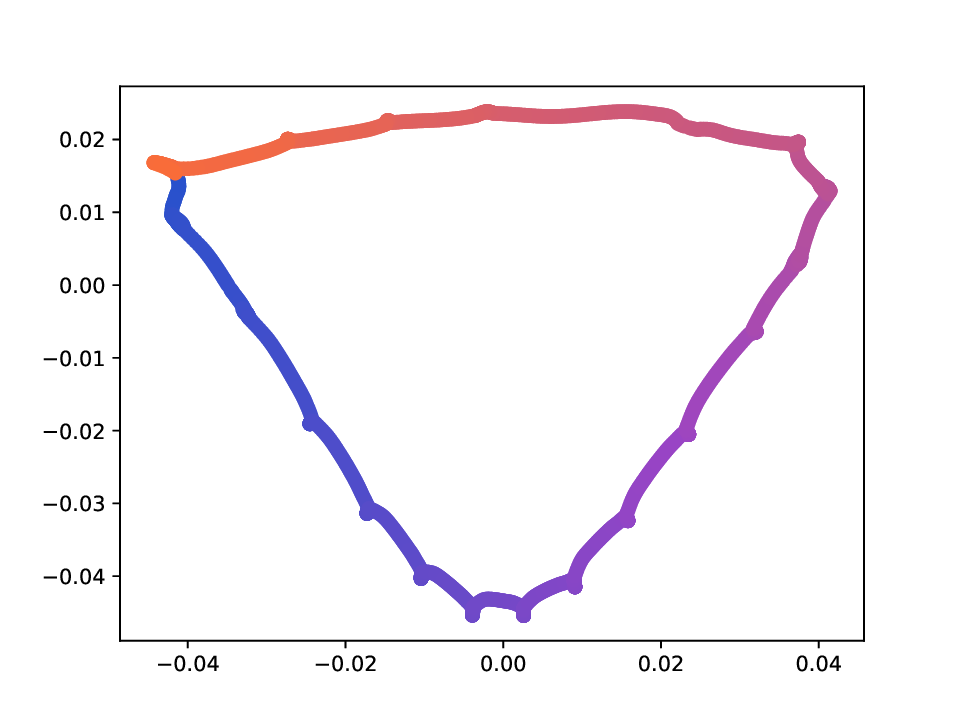

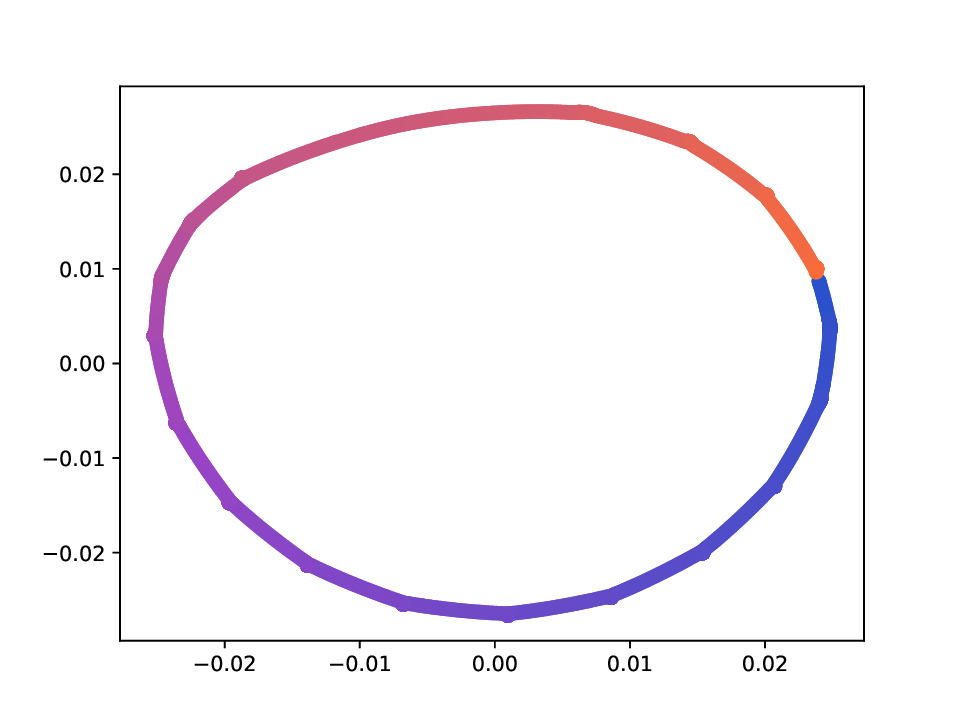

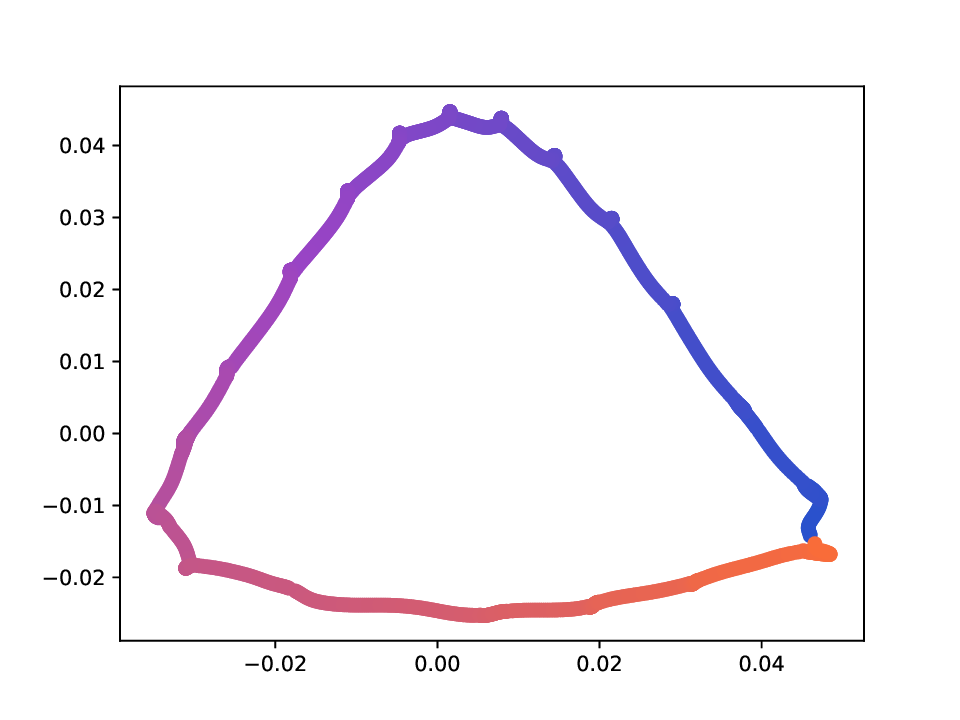

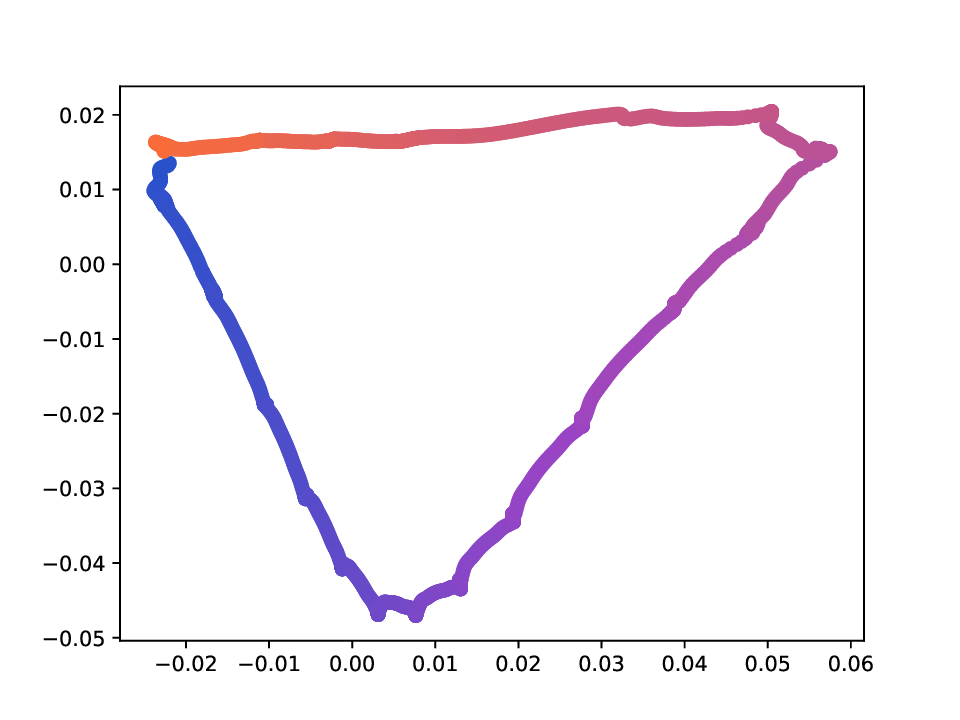

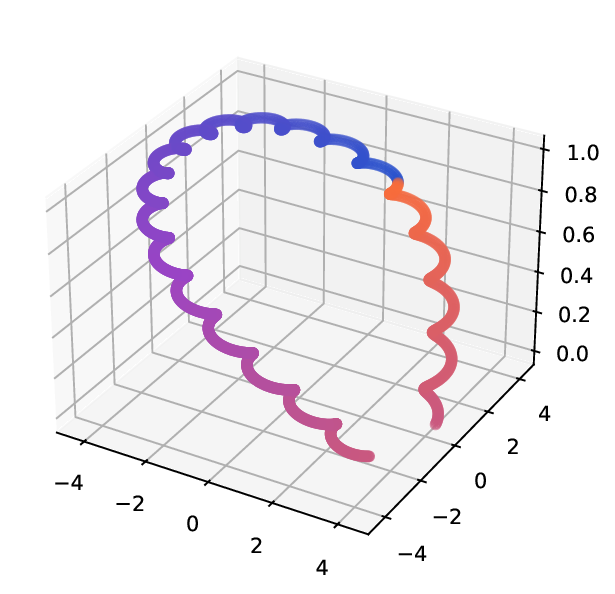

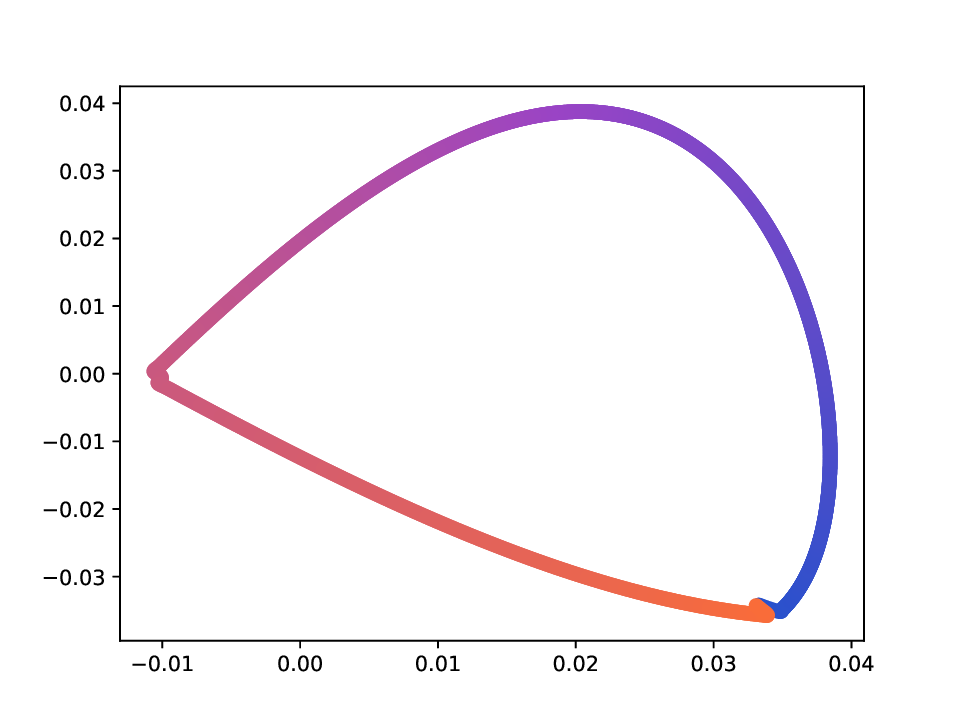

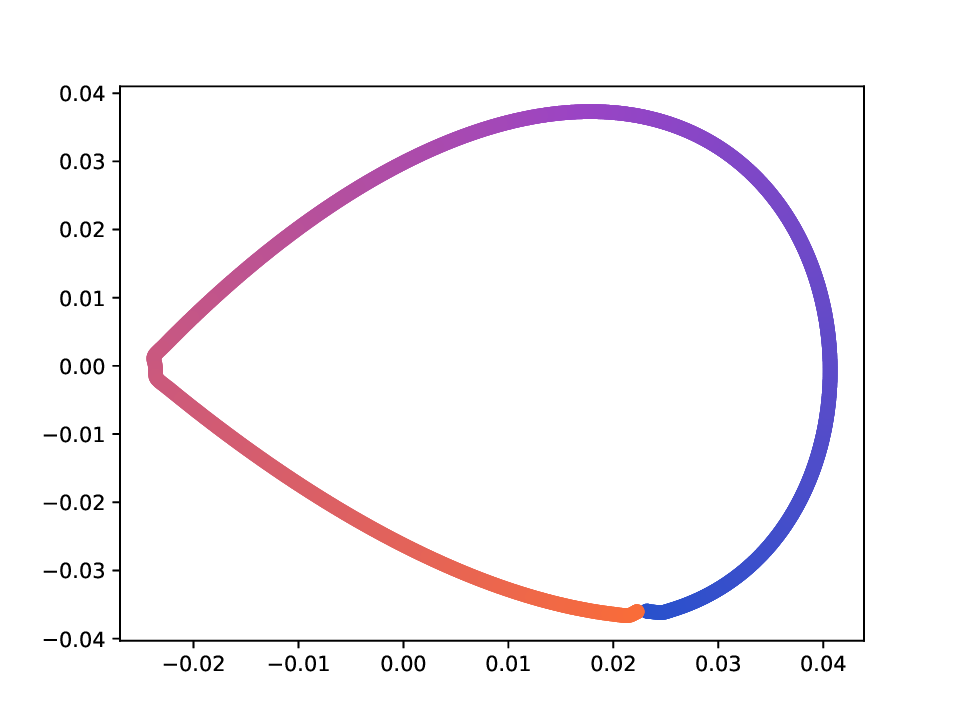

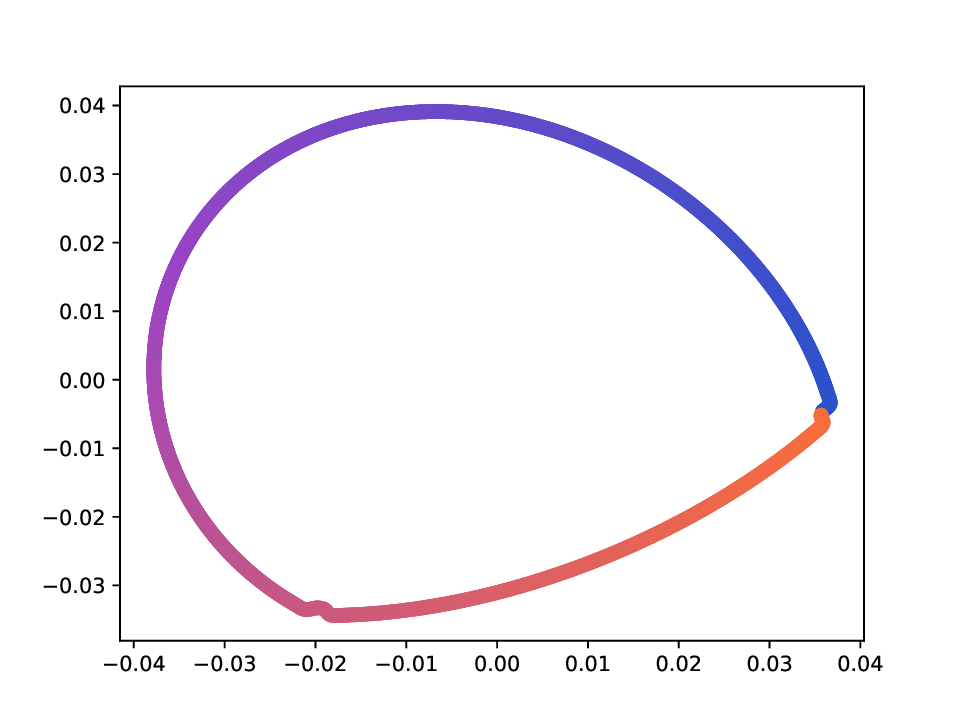

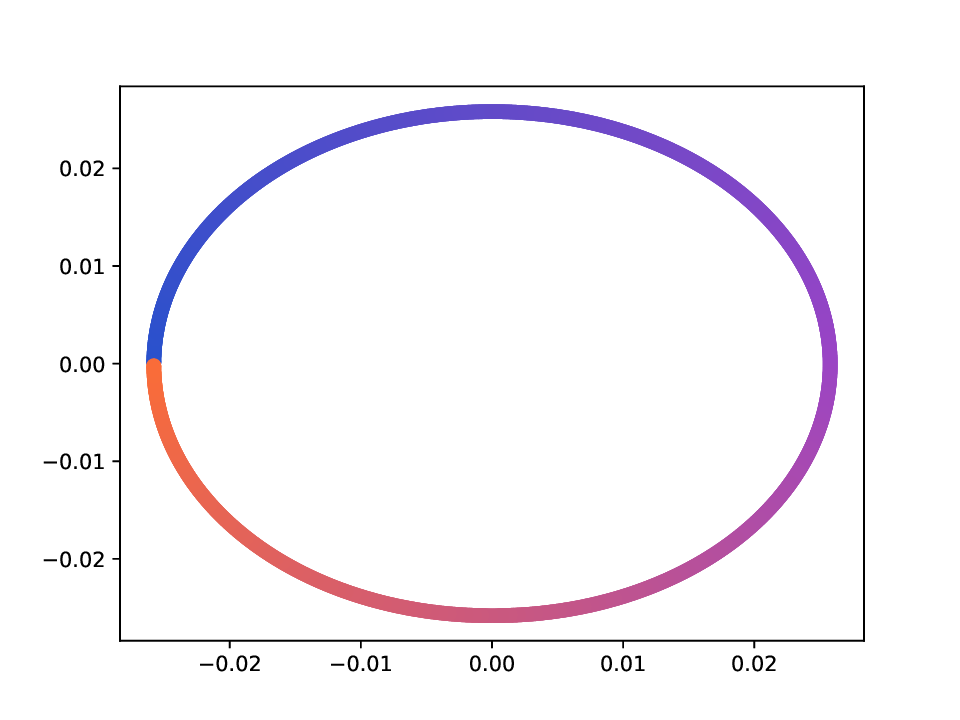

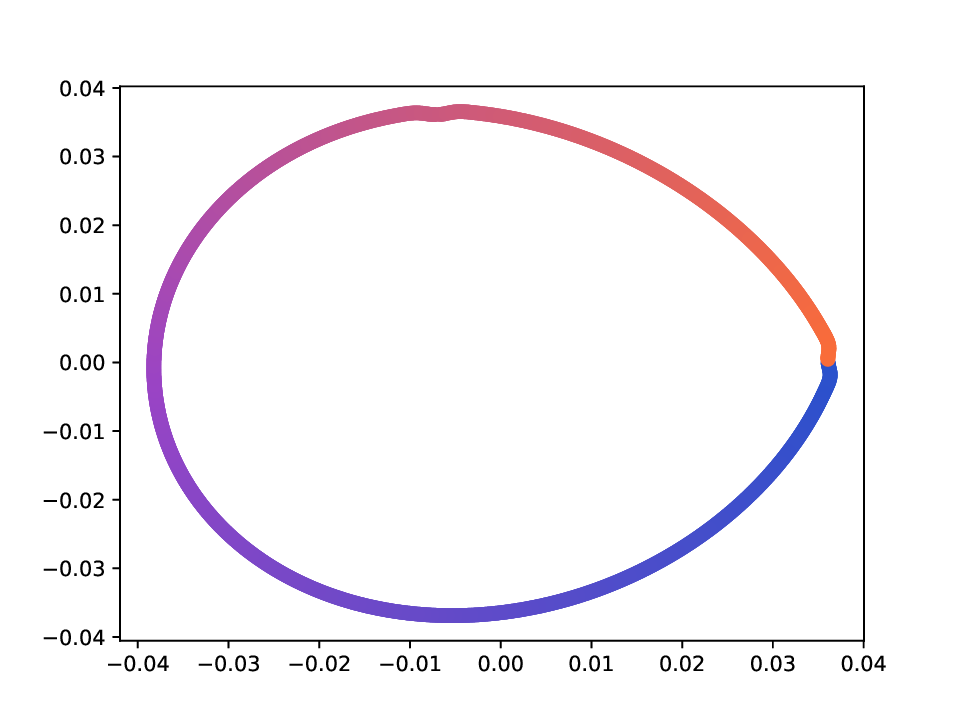

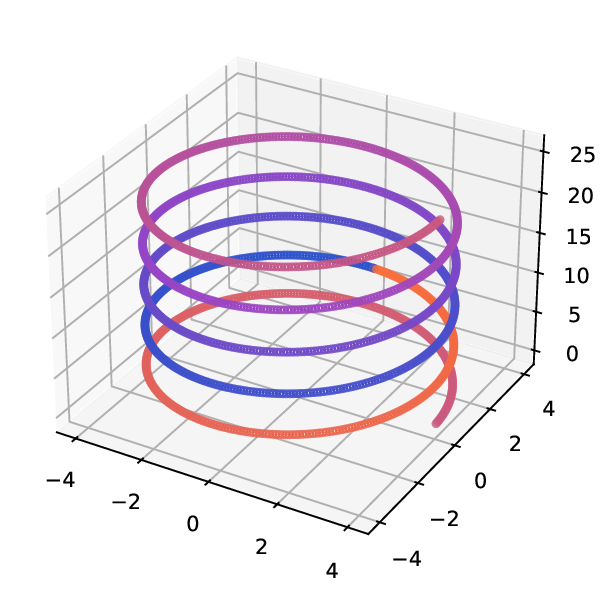

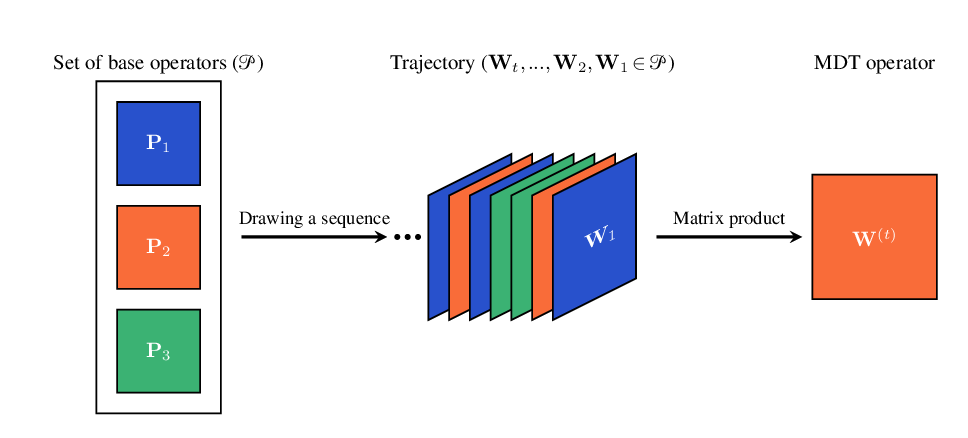

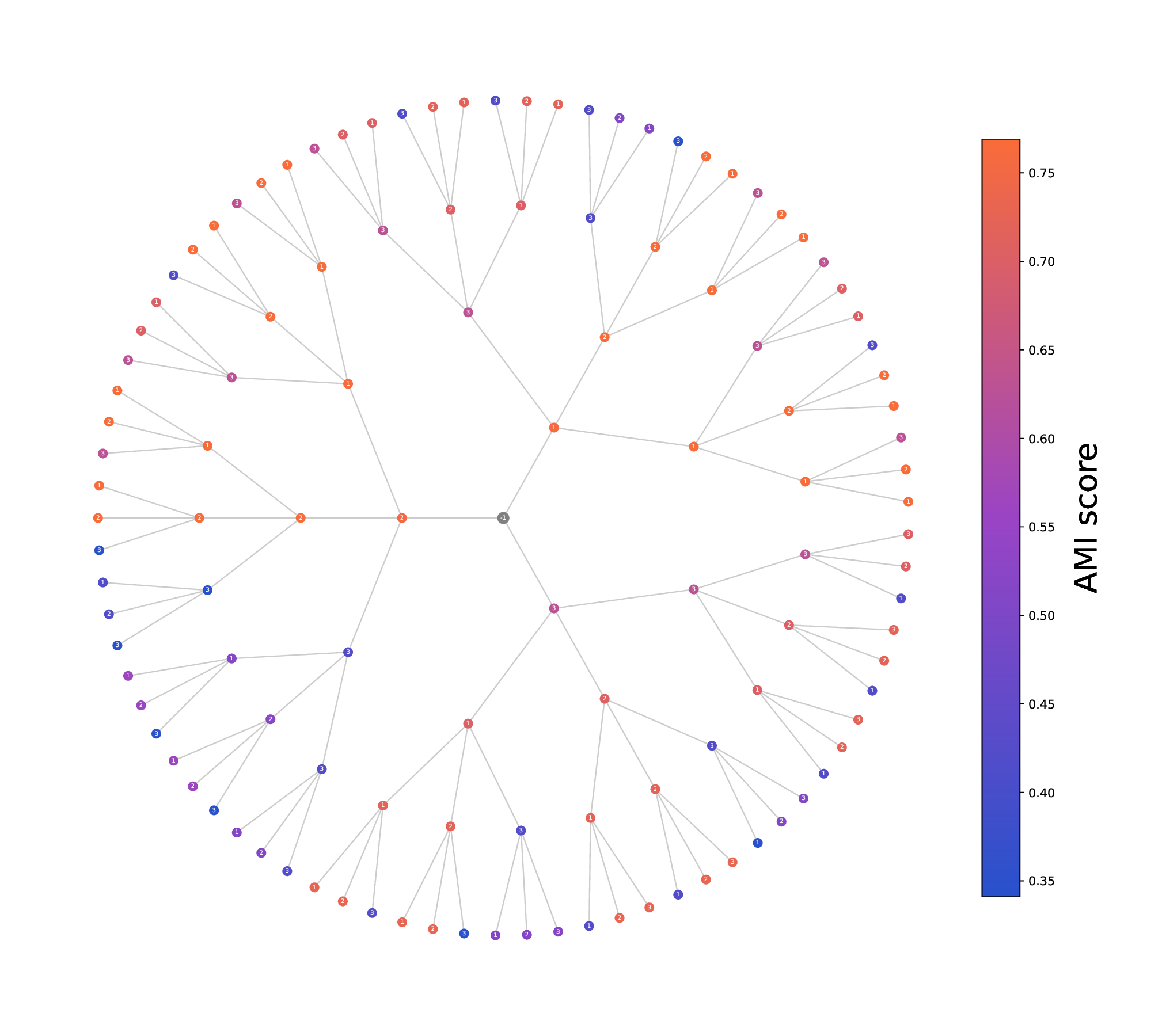

• Multi-view Diffusion Trajectories (MDTs). We introduce a flexible framework for defining diffusion geometry in multi-view settings based on time-inhomogeneous diffusion processes that intertwine the random walk operators of different views. The construction relies on an operator space derived from the input data. Each MDT defines a diffusion operator that admits a clear probabilistic and geometric interpretation. Our framework is detailed in Sec. 3; an illustrative example is given in Fig. 1.

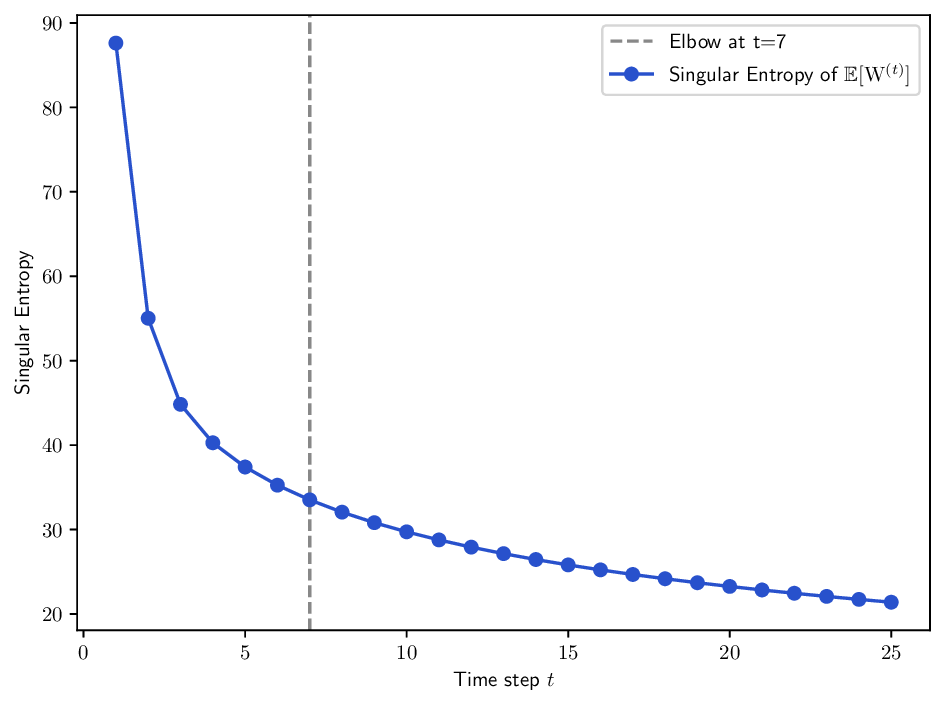

• Unified theoretical foundation. We establish theoretical properties under mild conditions: ergodicity of point-wise operators, ergodicity of the MDT process, and the existence of trajectory-dependent diffusion distances and embeddings obtained via singular value decompositions. Several existing multi-view diffusion schemes (e.g. Alternating Diffusion [19], Integrated Diffusion [20]) arise naturally as special cases.

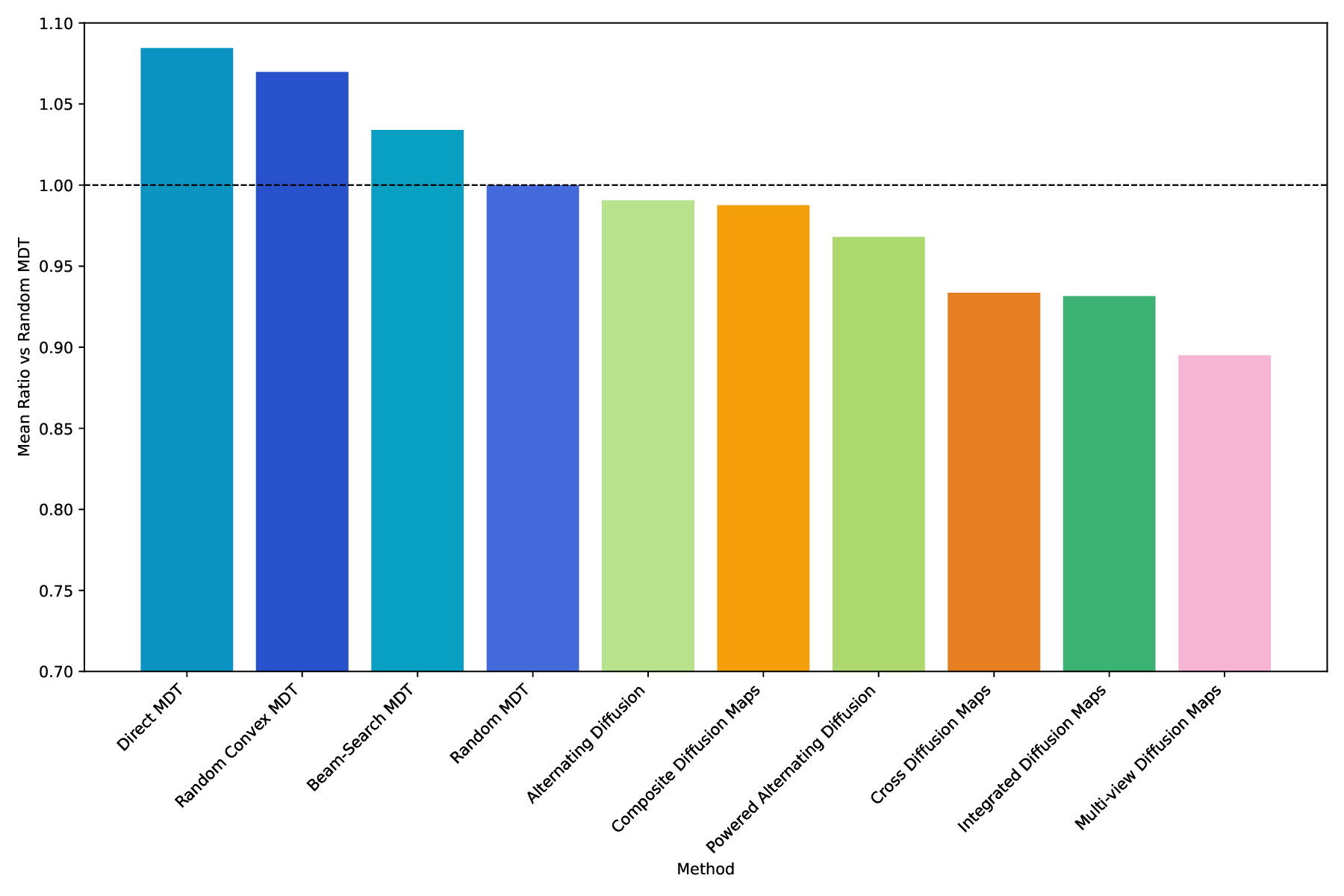

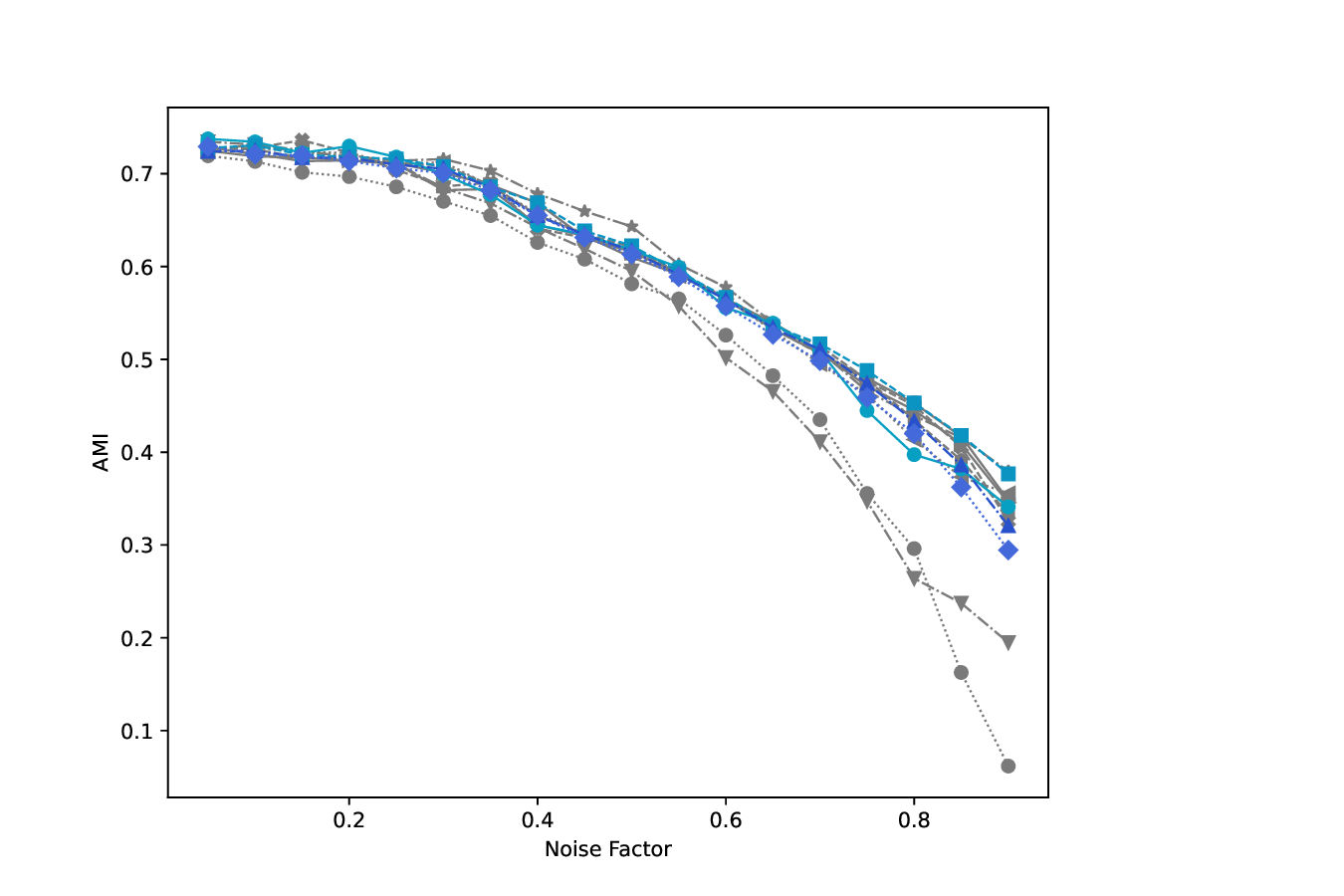

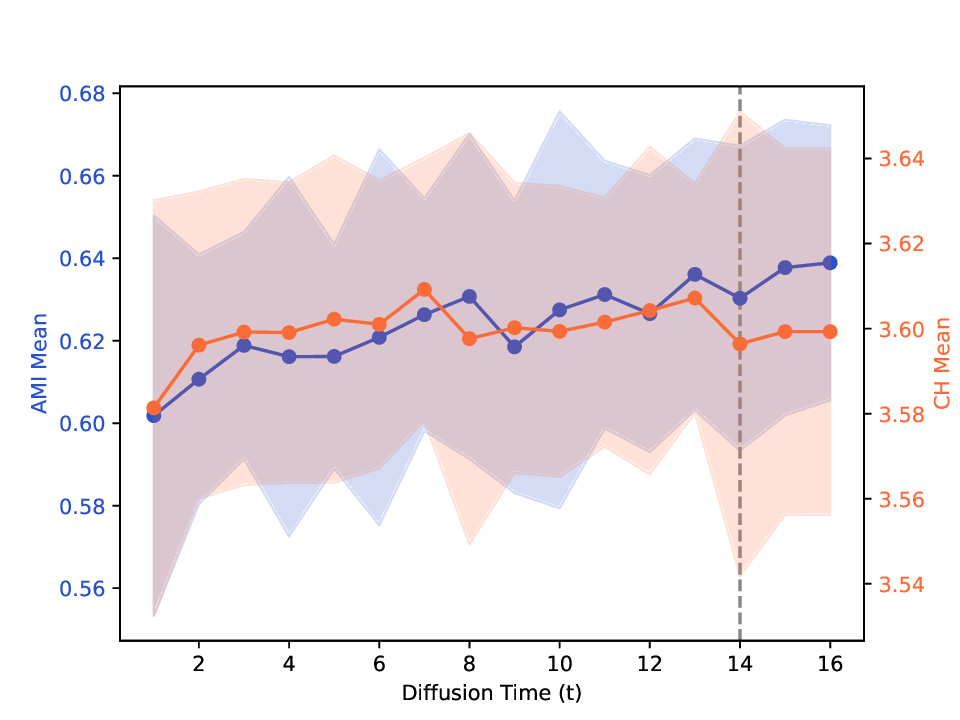

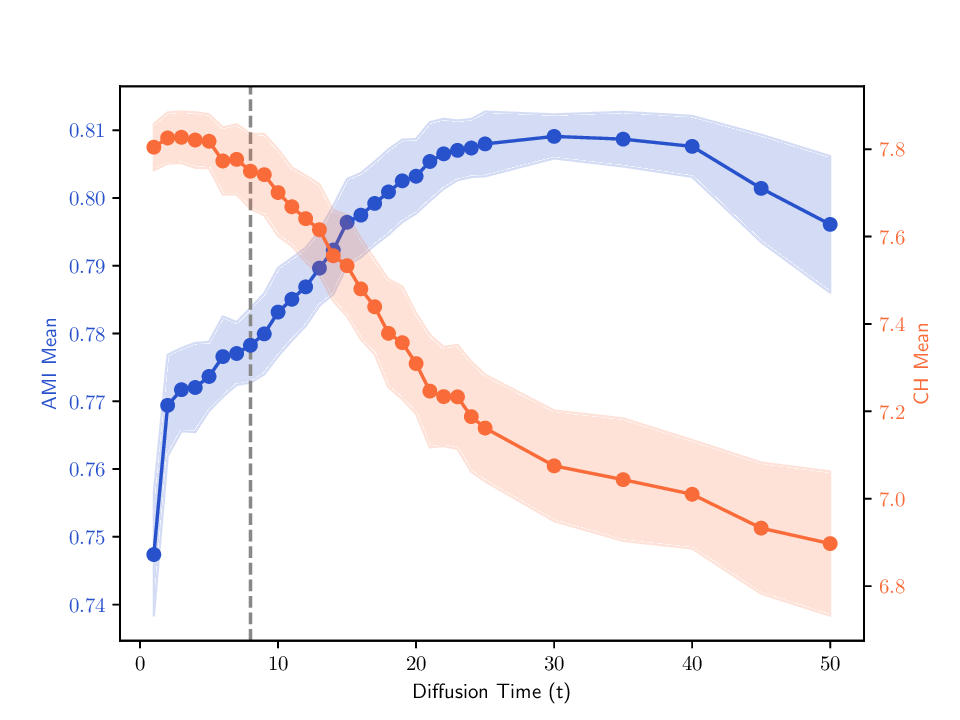

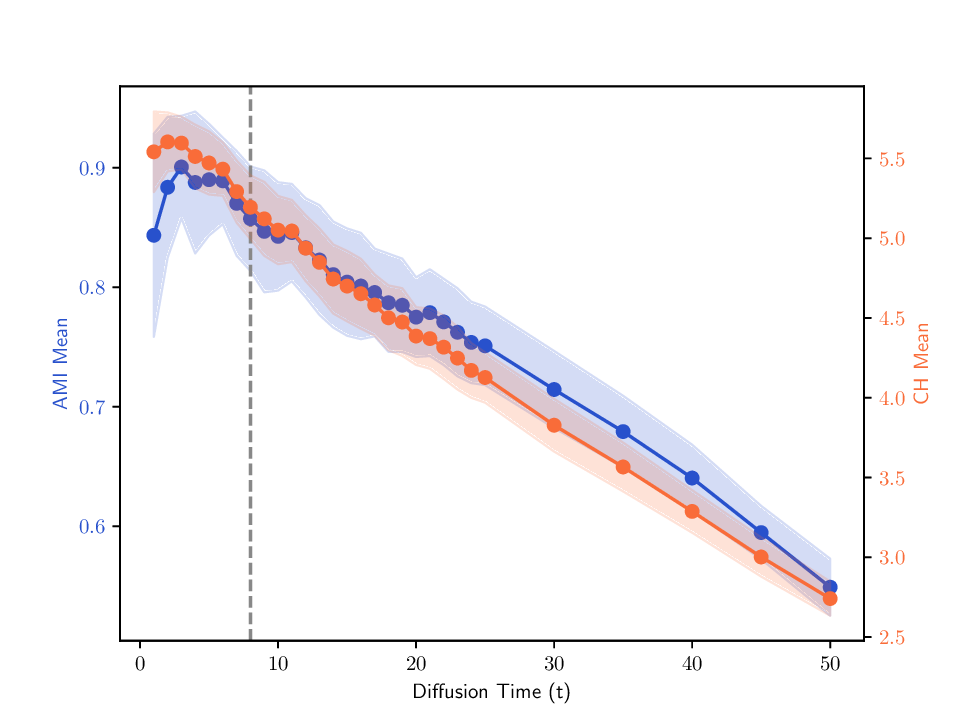

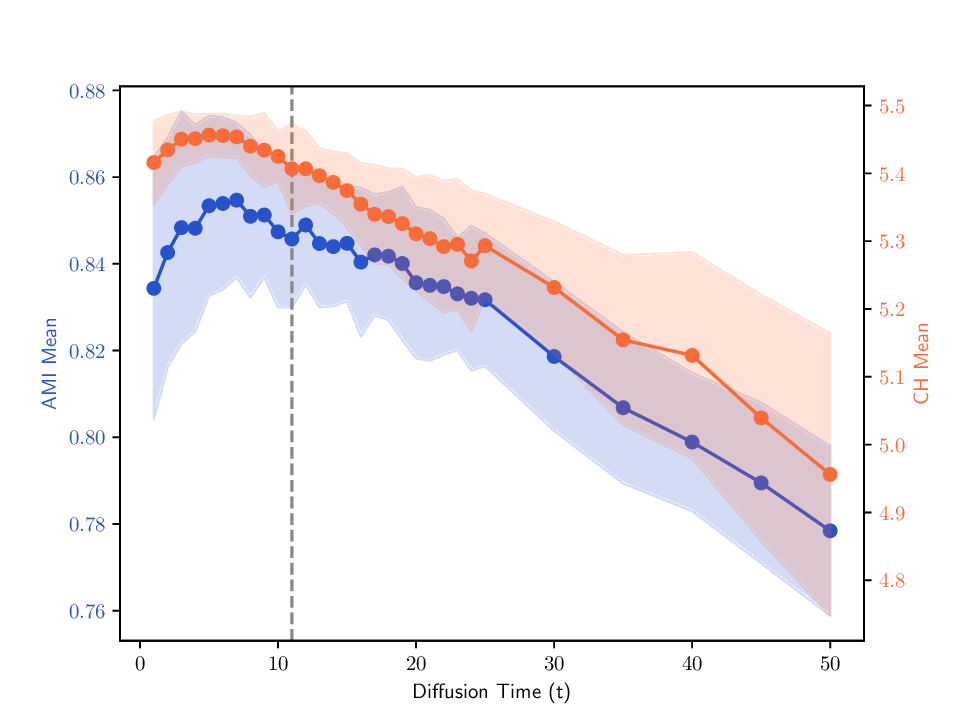

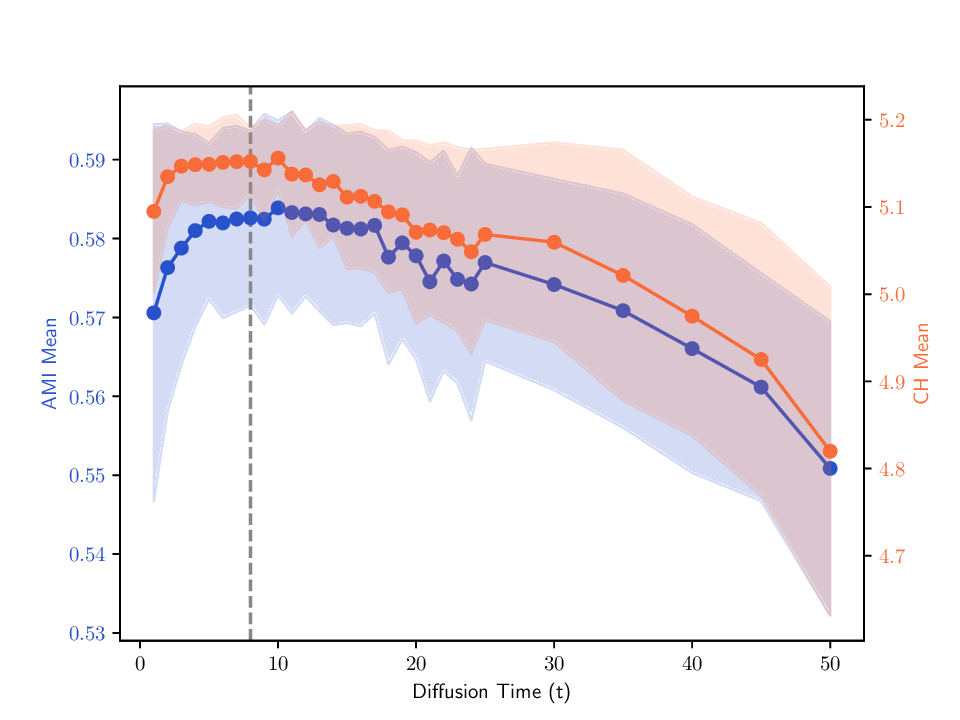

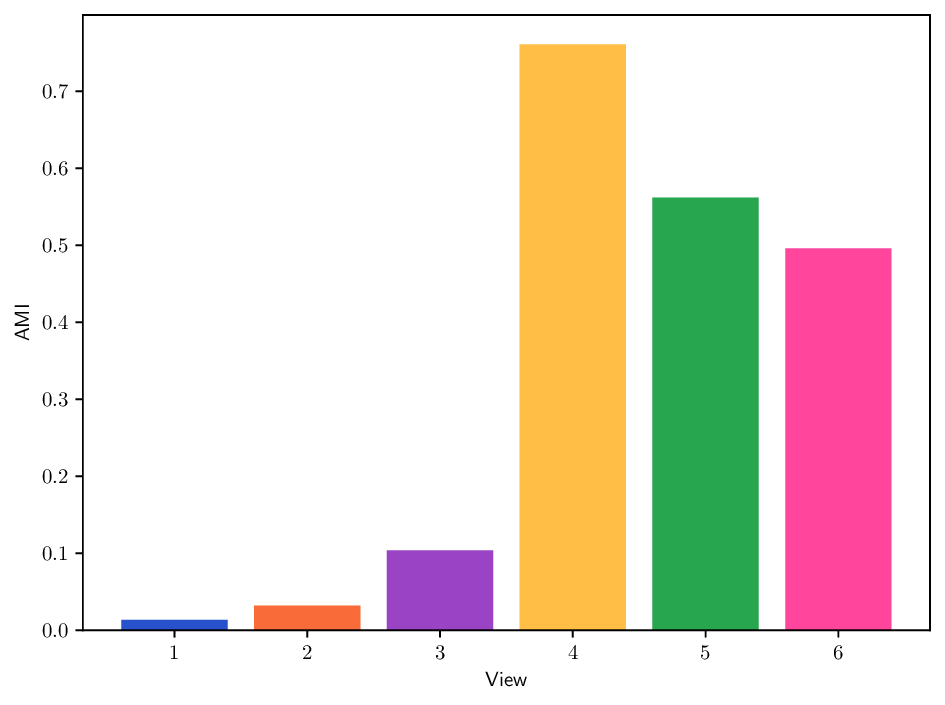

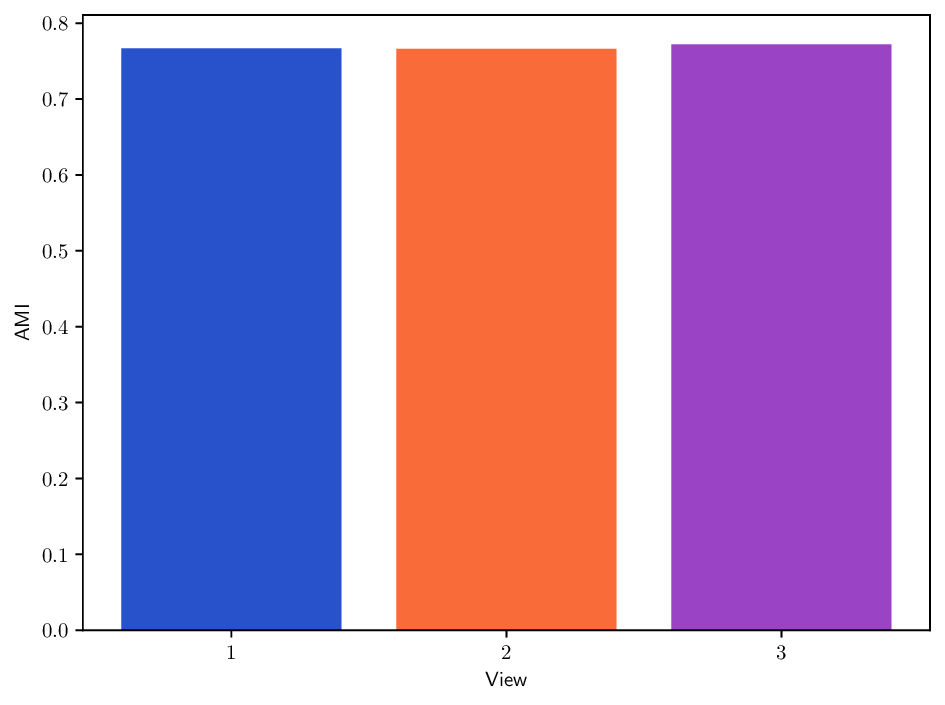

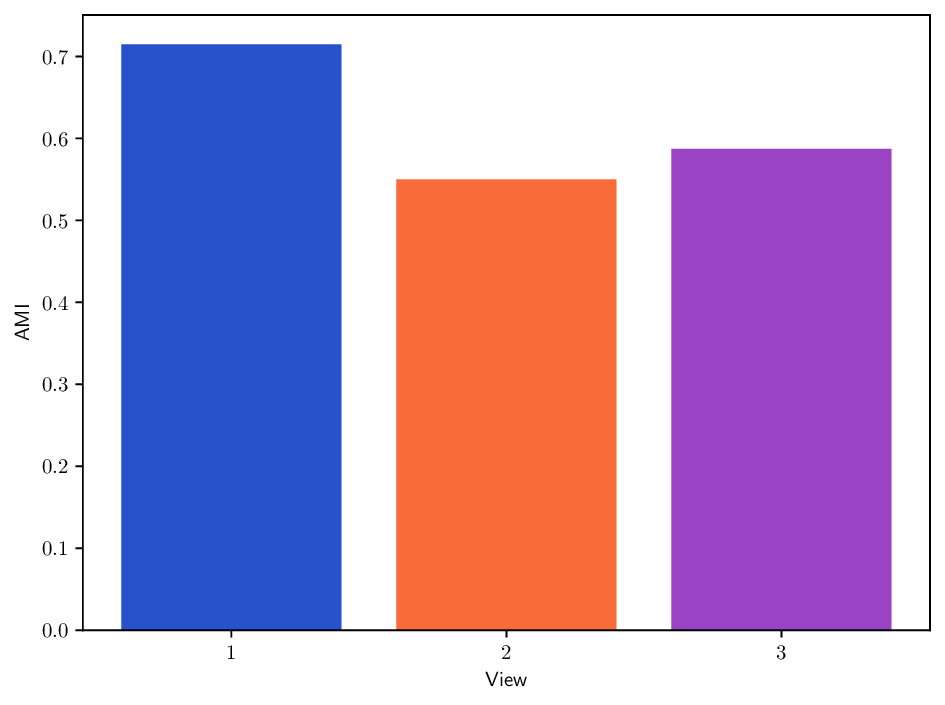

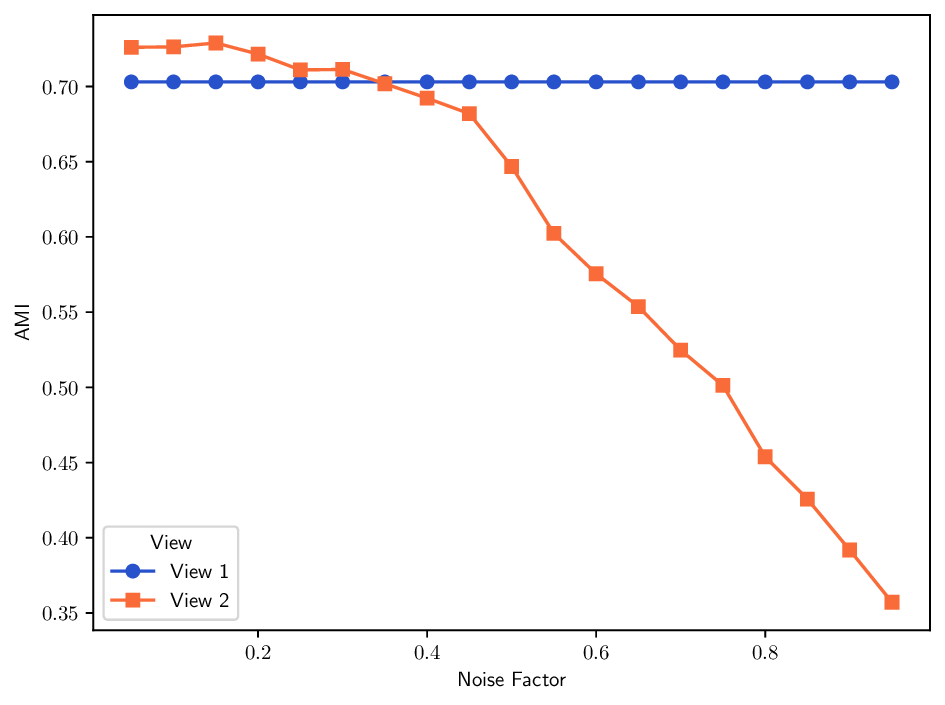

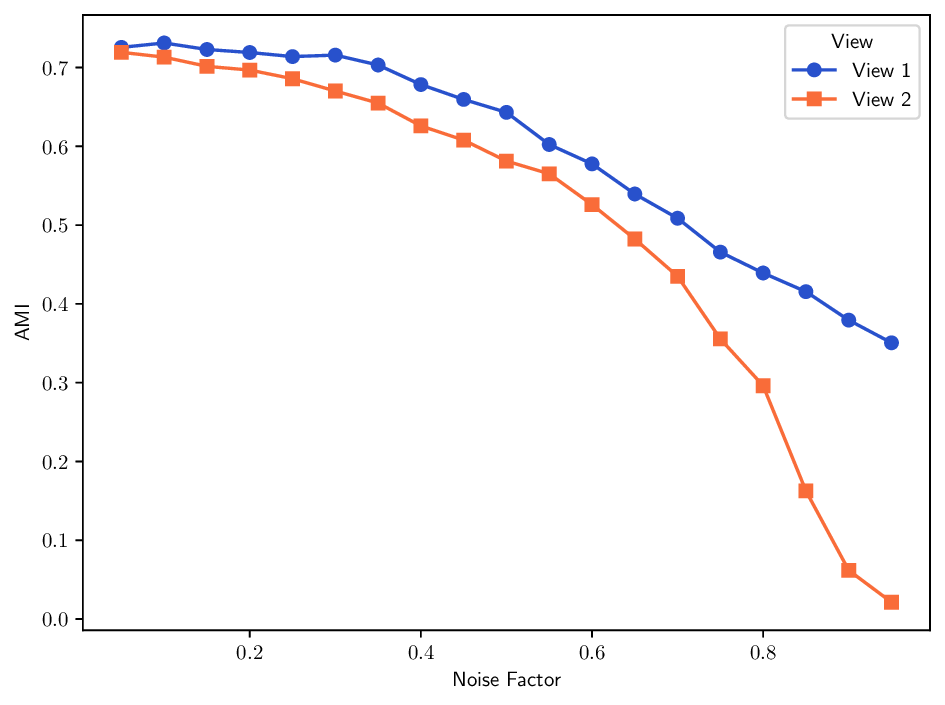

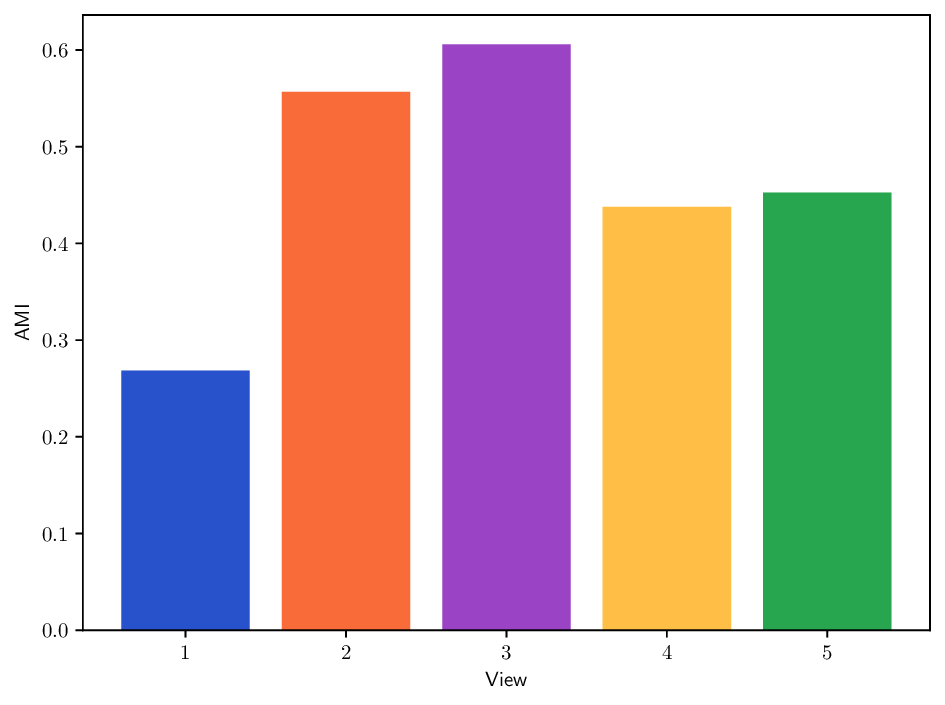

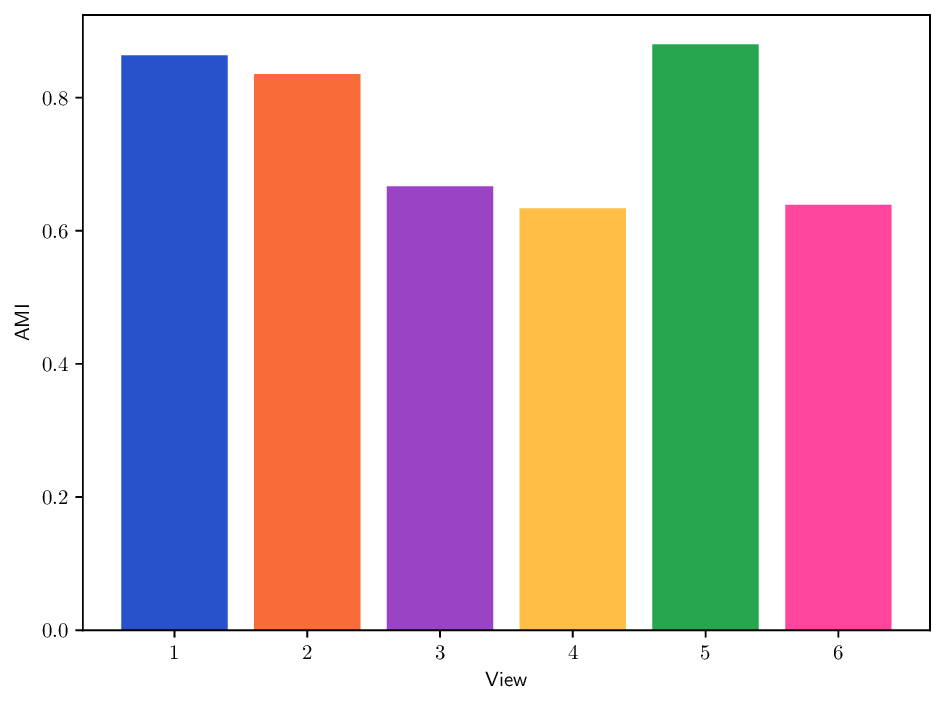

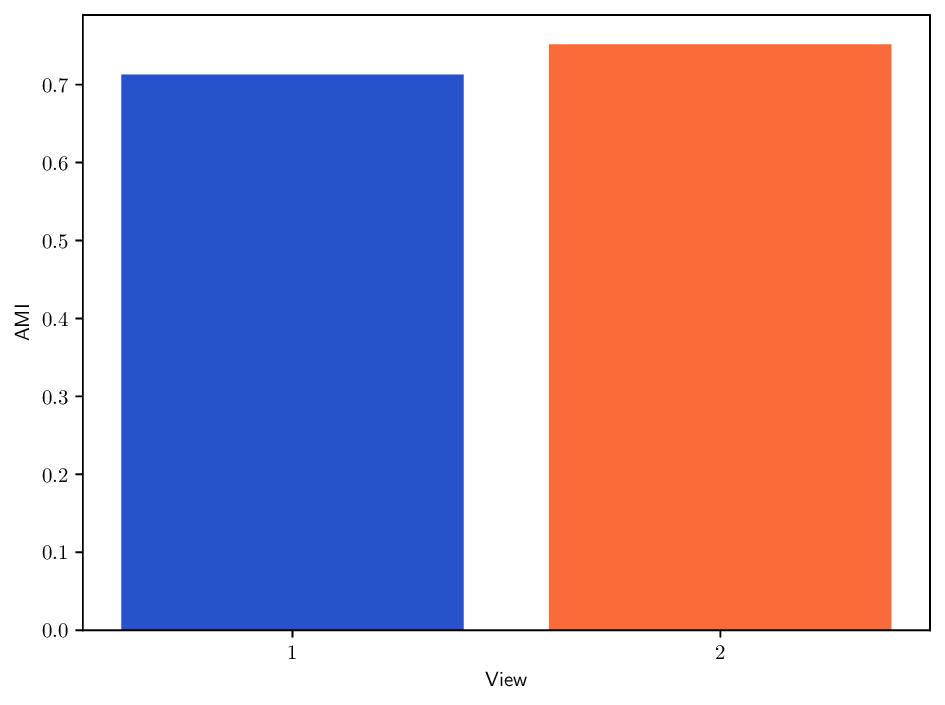

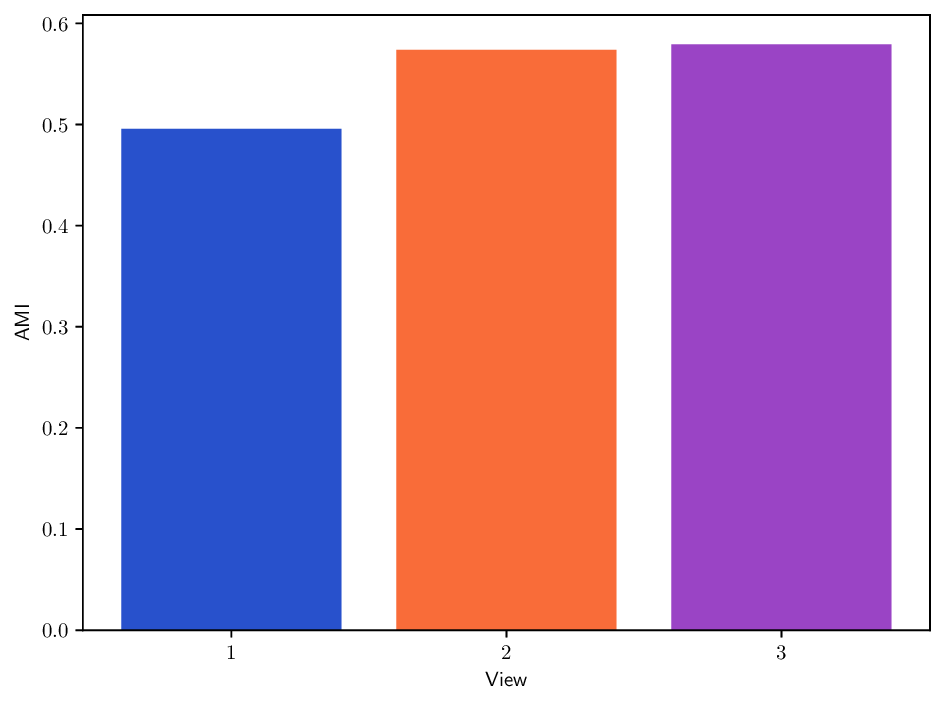

• Learning MDT operators. The proposed formulation enables learning diffusion operators within the admissible operator space. We develop unsupervised strategies guided by internal quality measures and examine configurations relevant for clustering and manifold learning. Experiments on synthetic and real-w

…(Full text truncated)…
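The contributions listed above can be illustrated with a minimal sketch of a diffusion trajectory that intertwines view-specific random walk operators, followed by an SVD-based embedding. This is a simplified reading under stated assumptions: the operator space is taken to be just the set of view-specific transition matrices, the trajectory is a sequence of view indices, and the embedding uses an unweighted SVD of the resulting operator. The names `mdt_operator` and `svd_embedding` are hypothetical, and the paper's actual definitions may differ.

```python
import numpy as np

def gaussian_walk(X, sigma=1.0):
    """Row-stochastic random walk operator from a Gaussian kernel (assumed)."""
    diff = X[:, None, :] - X[None, :, :]
    K = np.exp(-np.sum(diff**2, axis=-1) / (2.0 * sigma**2))
    return K / K.sum(axis=1, keepdims=True)

def mdt_operator(operators, trajectory):
    """Product of the view operators visited along the trajectory, in order."""
    W = np.eye(operators[0].shape[0])
    for view_index in trajectory:
        W = W @ operators[view_index]
    return W

def svd_embedding(W, n_components=2):
    """Left singular vectors scaled by singular values (unweighted variant)."""
    U, s, _ = np.linalg.svd(W)
    return U[:, :n_components] * s[:n_components]

# Three synthetic views of the same 25 objects.
rng = np.random.default_rng(2)
n = 25
views = [rng.normal(size=(n, 2)), rng.normal(size=(n, 4)), rng.normal(size=(n, 3))]
operators = [gaussian_walk(V) for V in views]

# A fixed trajectory alternating between views 0 and 1.
W_fixed = mdt_operator(operators, [0, 1, 0, 1])

# A randomly selected trajectory of length 6, usable as a neutral baseline.
random_trajectory = rng.integers(0, len(operators), size=6)
W_random = mdt_operator(operators, random_trajectory)

embedding_2d = svd_embedding(W_random, n_components=2)
full_embedding = svd_embedding(W_random, n_components=n)
```

With all `n` components retained, Euclidean distances between rows of `full_embedding` coincide with Euclidean distances between the corresponding rows of `W_random`, since the right singular vectors form an orthogonal matrix. Truncating to fewer components gives a low-dimensional approximation of those distances.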
