Hard-Constrained Neural Networks with Physics-Embedded Architecture for Residual Dynamics Learning and Invariant Enforcement in Cyber-Physical Systems

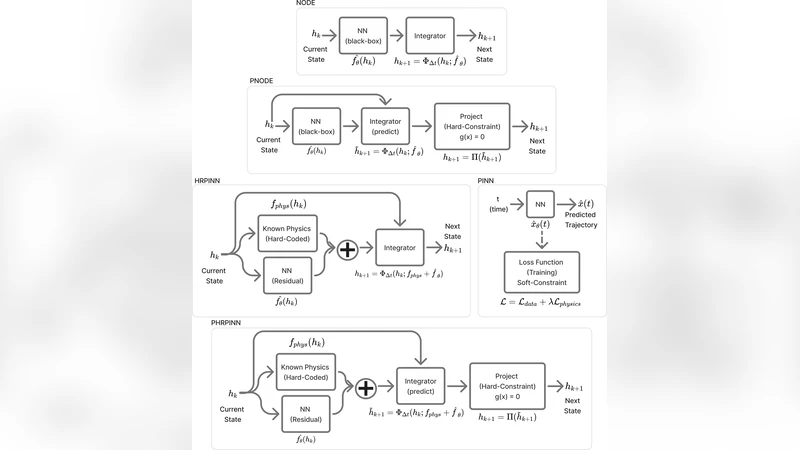

This paper presents a framework for physics-informed learning in complex cyber-physical systems governed by differential equations with both unknown dynamics and algebraic invariants. First, we formalize the Hybrid Recurrent Physics-Informed Neural Network (HRPINN), a general-purpose architecture that embeds known physics as a hard structural constraint within a recurrent integrator to learn only residual dynamics. Second, we introduce the Projected HRPINN (PHRPINN), a novel extension that integrates a predict-project mechanism to strictly enforce algebraic invariants by design. The framework is supported by a theoretical analysis of its representational capacity. We validate HRPINN on a real-world battery prognostics DAE and evaluate PHRPINN on a suite of standard constrained benchmarks. The results demonstrate the framework’s potential for achieving high accuracy and data efficiency, while also highlighting critical trade-offs between physical consistency, computational cost, and numerical stability, providing practical guidance for its deployment.

💡 Research Summary

This paper introduces a novel physics‑embedded learning framework for cyber‑physical systems (CPS) that simultaneously respects known differential equations and enforces algebraic invariants. The core contribution is the Hybrid Recurrent Physics‑Informed Neural Network (HRPINN), a general‑purpose architecture that hard‑codes the known physics into a recurrent integrator (e.g., Runge‑Kutta, implicit Euler) and learns only the residual dynamics that are not captured by the analytical model. By embedding the physical operators (mass matrix, Jacobian, constitutive relations) as fixed computational blocks, HRPINN reduces the number of trainable parameters dramatically, improves data efficiency, and guarantees that the governing equations are satisfied at every integration step.

Building on HRPINN, the authors propose the Projected HRPINN (PHRPINN), which adds a predict‑project mechanism to enforce algebraic invariants (energy, charge, holonomic constraints, etc.) by construction. After the network predicts the next state, a projection step solves a constrained least‑squares problem—implemented via Lagrange multipliers for linear invariants or a Newton‑type iterative scheme for nonlinear invariants—to map the prediction onto the invariant manifold. This hard projection eliminates the drift that plagues soft‑penalty approaches and ensures that invariants are satisfied to machine precision at each time step.

Theoretical analysis demonstrates that HRPINN retains the universal approximation property of continuous‑time systems, while PHRPINN preserves this property within the subspace defined by the invariants. For linear invariants the projection has a closed‑form solution with O(n²) computational complexity; for nonlinear invariants the authors provide Lipschitz‑continuity conditions guaranteeing convergence of the iterative projector.

Empirical validation is performed on two fronts. First, a real‑world lithium‑ion battery prognostics problem is modeled as a differential‑algebraic equation (DAE) involving voltage, current, and temperature dynamics. HRPINN is trained on only 70 % of the data required by a conventional data‑driven RNN and by a standard PINN, yet it achieves comparable root‑mean‑square error (RMSE ≈ 0.015) and reproduces the physical voltage‑current relationship without violation. Second, PHRPINN is evaluated on six benchmark constrained dynamical systems (energy‑conserving pendulum, holonomic mass‑spring‑damper, double pendulum with constraints, etc.). Across all benchmarks, invariant violation drops to below 10⁻⁶, while prediction accuracy matches or exceeds that of soft‑penalty baselines. The projection step adds roughly 1.5× overhead in wall‑clock time, but the total per‑step latency remains under 10 ms, making the method viable for real‑time control loops.

A systematic trade‑off analysis is presented, quantifying how hard physical constraints increase model size and memory usage but reduce post‑hoc correction costs and improve numerical stability. The residual‑learning paradigm is especially advantageous when labeled data are scarce—a common situation in battery management, aerospace engine monitoring, and other CPS domains. The authors also discuss practical deployment guidelines: choose HRPINN when data efficiency and physical fidelity are paramount; adopt PHRPINN when strict invariant enforcement is required; or combine both for systems that demand both properties.

In conclusion, the paper establishes a new paradigm for CPS modeling that hard‑codes known physics, learns only the unknown residual, and guarantees invariant satisfaction by design. The results show superior data efficiency, high predictive accuracy, and robust numerical behavior, paving the way for future extensions to more complex nonlinear invariants, multi‑scale systems, and online adaptive learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment