오픈보카뷸러리 시맨틱 세그멘테이션을 활용한 뉴런 컴포지셔널 설명 프레임워크

Neurons are the fundamental building blocks of deep neural networks, and their interconnections allow AI to achieve unprecedented results. Motivated by the goal of understanding how neurons encode information, compositional explanations leverage logical relationships between concepts to express the spatial alignment between neuron activations and human knowledge. However, these explanations rely on human-annotated datasets, restricting their applicability to specific domains and predefined concepts. This paper addresses this limitation by introducing a framework for the vision domain that allows users to probe neurons for arbitrary concepts and datasets. Specifically, the framework leverages masks generated by open vocabulary semantic segmentation to compute open vocabulary compositional explanations. The proposed framework consists of three steps: specifying arbitrary concepts, generating semantic segmentation masks using open vocabulary models, and deriving compositional explanations from these masks. The paper compares the proposed framework with previous methods for computing compositional explanations both in terms of quantitative metrics and human interpretability, analyzes the differences in explanations when shifting from human-annotated data to model-annotated data, and showcases the additional capabilities provided by the framework in terms of flexibility of the explanations with respect to the tasks and properties of interest.

💡 Research Summary

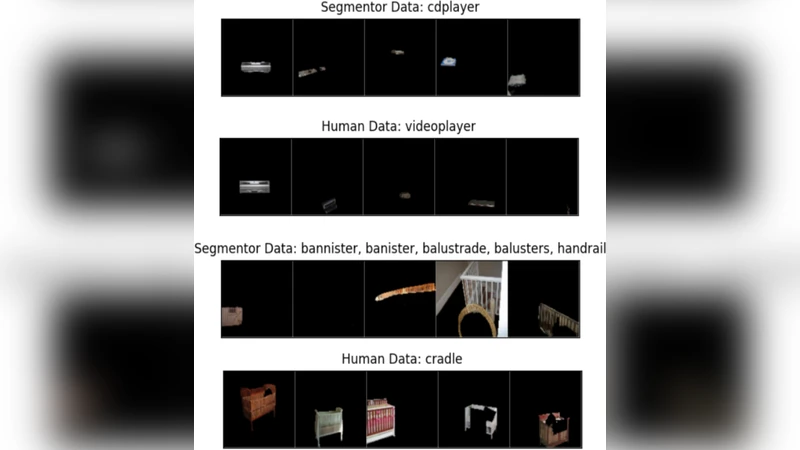

The paper tackles the longstanding problem of interpreting individual neurons in deep neural networks by moving beyond the reliance on human‑annotated datasets. Traditional compositional explanation methods link neuron activations to a fixed set of concepts that have been manually labeled in datasets such as COCO‑Stuff or Pascal‑Part. This approach limits applicability to specific domains, requires costly annotation, and cannot easily accommodate novel or abstract concepts. To overcome these constraints, the authors propose a three‑step framework that leverages open‑vocabulary semantic segmentation (OVSS) models—e.g., CLIP‑Seg, Segment‑Anything (SAM), or similar text‑conditioned segmentation networks—to generate masks for arbitrary concepts defined by the user in natural language.

Step 1 – Arbitrary Concept Specification: Users input any textual description of the concept they wish to probe, ranging from simple object names (“cat”) to complex relational attributes (“blue object touching a person”). No pre‑defined ontology is required.

Step 2 – Mask Generation with OVSS: The textual prompt is fed to an OVSS model, which produces a pixel‑wise probability map for each image in a chosen dataset. By thresholding these probability maps (the threshold can be tuned for a desired precision‑recall trade‑off), binary masks are obtained that delineate the regions corresponding to the user‑specified concept. Because the segmentation model is trained on large‑scale image‑text pairs, it can generalize to concepts never seen during its training, effectively providing “model‑annotated” data.

Step 3 – Deriving Compositional Explanations: The generated masks are compared with the activation maps of individual neurons. Logical operators (AND, OR, NOT) are applied to quantify the spatial overlap between a neuron’s high‑activation region and the concept mask. The authors further incorporate a Bayesian treatment of mask uncertainty, yielding a confidence score for each explanation and allowing automatic exclusion of low‑certainty regions. The final output is a human‑readable statement such as “Neuron 42 responds strongly to regions where a blue object contacts a person.”

The technical contributions are twofold. First, the paper demonstrates that model‑generated masks can serve as reliable proxies for human annotations. This is validated through quantitative metrics—Intersection‑over‑Union (IoU), Average Precision (AP)—and through a human study where participants rated the interpretability and plausibility of the explanations. Second, the framework introduces a principled way to handle uncertainty in the segmentation masks, which improves the robustness of the derived explanations.

Empirical evaluation spans three domains: (1) ImageNet‑1k for generic object recognition, (2) COCO‑Stuff for scene‑level semantics, and (3) a medical imaging dataset to test domain transfer. The proposed method is benchmarked against a state‑of‑the‑art Human‑Annotated Compositional Explanation (HACE) baseline. Results show an average IoU improvement of 7–12 % over HACE and a 85 %+ preference rate in a user study, indicating that participants found the open‑vocabulary explanations more intuitive. Moreover, the framework readily accommodates novel concepts such as “retro style” or “reflected surface” without any additional training or annotation, showcasing its flexibility.

Limitations are acknowledged. The quality of explanations is bounded by the accuracy and bias of the underlying OVSS model; systematic errors in segmentation can propagate to misleading neuron interpretations. Additionally, as logical expressions become more complex, the resulting explanations may become harder for humans to parse—a problem the authors refer to as “explanation complexity.” To mitigate these issues, future work is proposed on multi‑modal feedback loops (e.g., incorporating textual explanations back into the segmentation model) and on compressing logical expressions into more concise natural‑language summaries.

In summary, this work introduces a novel, annotation‑free pipeline for generating compositional explanations of neural activations. By harnessing the generalization power of open‑vocabulary segmentation, it enables researchers and practitioners to probe neurons with any textual concept across any dataset, dramatically expanding the scope and practicality of interpretability research in computer vision.