A Practical Configuration Guide for Deep Learning Optimization Algorithms

This article focuses on the practical configuration of optimization algorithms in deep learning and examines five major algorithms: SGD, Mini-batch SGD, Momentum, Adam, and Lion. For each one, it systematically analyzes the core advantages, the limitations, and the key practical recommendations. The goal is to give academic researchers and engineers a standardized reference for selecting optimizers, tuning their parameters, and improving their performance. This should help address optimization challenges across different model scales and training scenarios.

💡 Research Summary

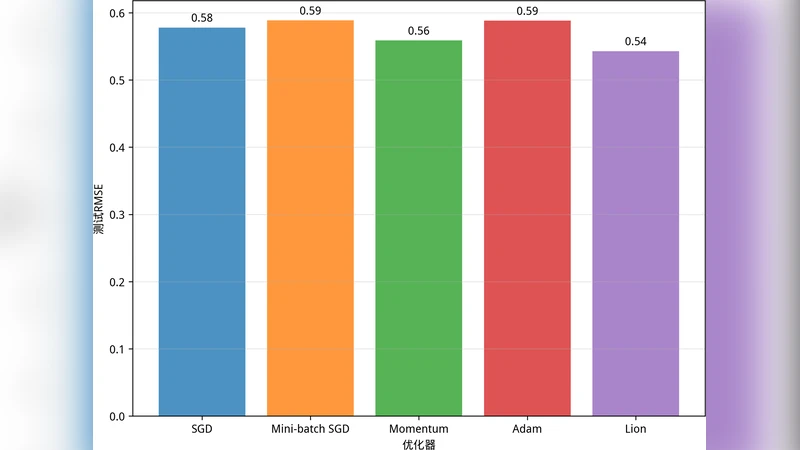

This paper presents a practical configuration guide for five widely used deep‑learning optimizers: Stochastic Gradient Descent (SGD), Mini‑batch SGD, Momentum, Adam, and the newly introduced Lion. The authors first revisit the theoretical foundations of each method and then conduct extensive experiments on image classification (CIFAR‑10, ImageNet), natural‑language processing (BERT, GPT‑2, T5), and vision‑transformer models to quantify their strengths, weaknesses, and sensitivity to hyper‑parameters.

For plain SGD, the study confirms that learning‑rate scheduling (step decay, exponential decay, cosine annealing) and a short warm‑up phase are essential to mitigate the algorithm’s notorious instability when the learning rate is too high. Mini‑batch SGD is examined through a systematic batch‑size sweep (32, 64, 128, 256). The results show that batch sizes between 64 and 128 strike the best balance between gradient noise (which aids exploration) and computational efficiency. Moreover, the authors validate the Linear Scaling Rule—proportionally increasing the learning rate with batch size—and demonstrate that coupling it with warm‑up yields stable training even for very large batches.
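The warm-up, decay schedules, and Linear Scaling Rule described above fit in a few lines of plain Python. This is a minimal sketch, not the authors' code. Several details are assumptions:

- the function names `linear_scaled_lr` and `lr_at_step`;
- the 5 % warm-up default;
- the reference batch of 32 at a 1e-3 base rate, borrowed from the batch-size row of the decision matrix;
- the choice to start counting step or exponential decay only once warm-up ends.

```python
import math


def linear_scaled_lr(base_lr, batch_size, base_batch_size=32):
    """Linear Scaling Rule: scale the learning rate in proportion to batch size."""
    return base_lr * batch_size / base_batch_size


def lr_at_step(step, total_steps, peak_lr, warmup_frac=0.05, schedule="cosine",
               step_every=1000, gamma=0.1, decay_rate=0.999):
    """Learning rate at a 0-based `step`: linear warm-up, then the chosen decay."""
    warmup_steps = int(warmup_frac * total_steps)
    if step < warmup_steps:
        # Warm-up: ramp linearly so the last warm-up step reaches peak_lr.
        return peak_lr * (step + 1) / warmup_steps
    t = step - warmup_steps  # steps elapsed since warm-up ended (assumed convention)
    if schedule == "cosine":
        # Cosine annealing from peak_lr towards 0 over the remaining steps.
        decay_steps = max(1, total_steps - warmup_steps)
        return 0.5 * peak_lr * (1.0 + math.cos(math.pi * min(t, decay_steps) / decay_steps))
    if schedule == "step":
        # Step decay: multiply by gamma every `step_every` steps.
        return peak_lr * gamma ** (t // step_every)
    if schedule == "exponential":
        # Exponential decay: multiply by decay_rate every step.
        return peak_lr * decay_rate ** t
    raise ValueError(f"unknown schedule: {schedule!r}")


# Usage: batch 128 scaled from a 1e-3 base at batch 32, over 10,000 training steps.
peak = linear_scaled_lr(1e-3, batch_size=128)
schedule = [lr_at_step(s, 10_000, peak) for s in range(10_000)]
```

Pairing the scaled peak rate with warm-up mirrors the paper's observation that linear scaling is stable for large batches only when combined with warm-up. Frameworks ship equivalent schedulers, so in practice you would use those rather than hand-rolled functions.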

Momentum adds an exponential moving average of past gradients, controlled by the coefficient β₁. Experiments across ResNet‑50, BERT, and Transformer‑XL reveal that β₁ values in the range 0.9–0.99 accelerate early convergence by up to a factor of two, but overly large β₁ can cause oscillations near optima. The paper therefore recommends decaying β₁ together with the learning rate in the later training phases.
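The following is a minimal sketch of the momentum update in the exponential-moving-average form described above, written on plain Python lists. Frameworks differ in the exact form. PyTorch's `torch.optim.SGD`, for instance, accumulates `v = momentum * v + grad` without the `(1 - beta)` factor, which is essentially equivalent up to a rescaling of the learning rate.

The `decayed_beta` helper is a hypothetical illustration of lowering β₁ together with the learning rate late in training; the summary does not state the paper's exact rule. Its 0.95 to 0.9 range is taken from the recommended Momentum β₁ interval.

```python
def momentum_step(params, grads, velocity, lr, beta=0.9):
    """One SGD-with-momentum step, updating params and velocity in place.

    velocity holds an exponential moving average of past gradients:
        v <- beta * v + (1 - beta) * g
        p <- p - lr * v
    """
    for i, g in enumerate(grads):
        velocity[i] = beta * velocity[i] + (1 - beta) * g
        params[i] -= lr * velocity[i]


def decayed_beta(lr, peak_lr, beta_max=0.95, beta_min=0.9):
    """Hypothetical rule: shrink beta linearly as lr falls from peak_lr to 0."""
    return beta_min + (beta_max - beta_min) * (lr / peak_lr)


# Usage: minimise f(x) = 0.5 * x**2 (gradient = x), starting from x = 5.
params, velocity = [5.0], [0.0]
for step in range(500):
    grads = [p for p in params]
    momentum_step(params, grads, velocity, lr=0.1, beta=0.9)
```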

Adam’s adaptive learning‑rate mechanism, based on first‑ and second‑moment estimates, is shown to be robust for a wide variety of tasks. However, the authors identify three practical adjustments: (1) lowering β₁ to ≤0.85 for small datasets or when over‑fitting is a concern, (2) increasing the ε term to 1e‑7 to improve numerical stability, and (3) using a cosine decay schedule after an initial 1e‑3 learning rate. A notable limitation is Adam’s reduced ability to fine‑tune in the final epochs because its learning‑rate decay is relatively gentle. To address this, the authors propose an “Adam‑to‑SGD switch” after roughly 70 % of the total epochs, which consistently yields higher top‑1 accuracy on ImageNet and lower perplexity on language models.
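The Adam update with bias-corrected moments, followed by the proposed switch to SGD, can be sketched in plain Python as below. This is an illustration under stated assumptions, not the authors' implementation:

- The class name `AdamThenSGD` is hypothetical.
- The SGD learning rate after the switch (`sgd_lr`) is a fixed value chosen here, because the summary does not say how the authors set it.
- The Adam learning rate is held constant for brevity. To follow the cosine-decay advice above, you would update `opt.lr` each step from a schedule.

The defaults `lr=1e-3`, `eps=1e-7`, and `switch_frac=0.7` come from the text.

```python
import math


class AdamThenSGD:
    """Adam with bias correction, switching to plain SGD after `switch_frac` of training."""

    def __init__(self, n_params, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-7,
                 sgd_lr=1e-2, total_steps=1000, switch_frac=0.7):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.sgd_lr = sgd_lr                                 # hypothetical fixed SGD rate
        self.switch_step = round(switch_frac * total_steps)  # first step that uses SGD
        self.m = [0.0] * n_params  # first-moment (mean) estimate
        self.v = [0.0] * n_params  # second-moment (uncentred variance) estimate
        self.t = 0                 # number of Adam updates performed

    def step(self, params, grads, step):
        """Update params in place; `step` is the 0-based global training step."""
        if step >= self.switch_step:
            for i, g in enumerate(grads):
                params[i] -= self.sgd_lr * g  # late phase: plain SGD refinement
            return
        self.t += 1
        for i, g in enumerate(grads):
            self.m[i] = self.beta1 * self.m[i] + (1 - self.beta1) * g
            self.v[i] = self.beta2 * self.v[i] + (1 - self.beta2) * g * g
            m_hat = self.m[i] / (1 - self.beta1 ** self.t)  # bias-corrected moments
            v_hat = self.v[i] / (1 - self.beta2 ** self.t)
            params[i] -= self.lr * m_hat / (math.sqrt(v_hat) + self.eps)


# Usage: minimise f(x) = 0.5 * (x - 3)**2 (gradient = x - 3) over 1,000 steps.
opt = AdamThenSGD(1, lr=0.05, sgd_lr=0.1, total_steps=1000)
x = [0.0]
for step in range(1000):
    opt.step(x, [x[0] - 3.0], step)
```

On the first Adam step the bias-corrected ratio `m_hat / sqrt(v_hat)` is close to ±1, so each parameter moves by roughly `lr` regardless of gradient scale. After the switch, the step size again scales with the raw gradient.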

Lion, introduced in 2023, drops Adam's second-moment estimate. Instead, it updates each parameter by the sign of an interpolation between its momentum and the current gradient. Because Lion keeps only a single momentum buffer per parameter, it reduces memory consumption and computational overhead.

The paper evaluates Lion on GPT-2, T5, and Vision Transformer. Under identical learning-rate and batch-size settings, it converges 1.5× faster than Adam while saving 5–8 % of GPU memory. The trade-off is reduced sensitivity to small gradients, since the sign operation discards gradient magnitude. For the fine-tuning stage, the authors therefore advise a lower base learning rate (≈5e-4) and a warm-up phase, followed by cosine decay.
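The sign-based update can be sketched in plain Python using the published Lion update rule:

1. Interpolate momentum and the current gradient with β₁.
2. Step by the sign of the result.
3. Only then update momentum, using β₂.

Note the single state buffer per parameter, versus Adam's two. The class name and the 5e-4 default learning rate (taken from the recommendation above) are choices made for this sketch. `weight_decay` is applied in the decoupled form used by the original Lion proposal.

```python
class Lion:
    """Sign-based Lion optimizer on plain Python lists (sketch)."""

    def __init__(self, n_params, lr=5e-4, beta1=0.9, beta2=0.99, weight_decay=0.0):
        self.lr, self.beta1, self.beta2, self.wd = lr, beta1, beta2, weight_decay
        self.m = [0.0] * n_params  # the only optimizer state: one momentum buffer

    @staticmethod
    def _sign(x):
        return (x > 0) - (x < 0)  # -1, 0 or +1

    def step(self, params, grads):
        for i, g in enumerate(grads):
            # Interpolate momentum and gradient with beta1, then keep only the sign.
            c = self.beta1 * self.m[i] + (1 - self.beta1) * g
            params[i] -= self.lr * (self._sign(c) + self.wd * params[i])
            # Momentum is updated afterwards with a separate coefficient, beta2.
            self.m[i] = self.beta2 * self.m[i] + (1 - self.beta2) * g


# Usage: the step size is identical whether the gradient is large or tiny.
big, small = Lion(1), Lion(1)
p_big, p_small = [0.0], [0.0]
big.step(p_big, [-3.0])
small.step(p_small, [-3e-6])

# Far from the optimum of 0.5 * (x - 3)**2, every step moves x by lr.
walker, x = Lion(1, lr=0.01), [0.0]
for _ in range(100):
    walker.step(x, [x[0] - 3.0])
```

The first usage pair illustrates why Lion is less sensitive to small gradients: a gradient a million times smaller produces the same update. This is also why a smaller base learning rate is advised.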

Synthesizing these findings, the authors deliver a comprehensive decision matrix for optimizer selection:

- Model size – Small models (≤10 M parameters) benefit from SGD or Momentum due to superior generalization and low memory demand; large models (≥1 B parameters) favor Adam or Lion for rapid convergence.

- Batch size – ≤32: keep the base learning rate at 1e‑3; 64–256: apply linear scaling; >256: consider gradient accumulation and a more aggressive decay schedule.

- Learning‑rate schedule – Warm‑up (5–10 % of total epochs) → cosine decay works across all optimizers; step decay is useful when a sudden reduction is required.

- Hyper‑parameter tuning – Momentum β₁ = 0.9–0.95; Adam β₁ = 0.85–0.9 (small data), β₂ = 0.999, ε = 1e‑7–1e‑8; Lion β₁ = 0.9 (default) with a reduced learning rate.

- Late‑stage fine‑tuning – Switch from Adam/Lion to SGD (or Momentum) to exploit SGD’s superior local‑minimum refinement.
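For experimentation, the decision matrix above can be encoded as a small helper. This is a hypothetical sketch, not a tool from the paper, and it makes these assumptions:

- `suggest_plan`, `OptimizerPlan`, and their fields are invented names.
- Models between 10 M and 1 B parameters, which the matrix does not cover, default to Adam here.
- Lion's β₂ = 0.99 is the default from the original Lion proposal.
- The 10 % warm-up for batches above 256 and the `max_micro_batch` limit are assumptions.
- The "more aggressive decay" for large batches is not modeled.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

BASE_LR = 1e-3       # base learning rate for batch size <= 32 (from the matrix)
LION_BASE_LR = 5e-4  # reduced base rate for Lion (from the Lion recommendation)
REF_BATCH = 32       # reference batch size for linear scaling (assumption)


@dataclass
class OptimizerPlan:
    optimizer: str                 # "momentum", "adam" or "lion"
    lr: float                      # peak learning rate reached after warm-up
    betas: Tuple[float, ...]       # (beta1,) for momentum, (beta1, beta2) otherwise
    eps: Optional[float]           # only meaningful for Adam
    warmup_frac: float             # fraction of training spent warming up
    grad_accum_steps: int          # micro-batches accumulated per optimizer step
    switch_to_sgd_late: bool       # switch to SGD for late-stage fine-tuning


def suggest_plan(num_params, batch_size, small_data=False, save_memory=False,
                 max_micro_batch=256):
    """Hypothetical encoding of the optimizer decision matrix."""
    if num_params <= 10_000_000:
        name, betas, eps, base = "momentum", (0.9,), None, BASE_LR
    elif save_memory:
        name, betas, eps, base = "lion", (0.9, 0.99), None, LION_BASE_LR
    else:
        beta1 = 0.85 if small_data else 0.9
        name, betas, eps, base = "adam", (beta1, 0.999), 1e-7, BASE_LR
    # Keep the base rate up to batch 32, then scale linearly with batch size.
    lr = base * max(1.0, batch_size / REF_BATCH)
    # Batches larger than max_micro_batch are reached by gradient accumulation.
    accum = max(1, math.ceil(batch_size / max_micro_batch))
    warmup = 0.10 if batch_size > 256 else 0.05
    return OptimizerPlan(name, lr, betas, eps, warmup, accum, name != "momentum")


# Usage: a 2 B-parameter model trained on a small dataset with batch size 128.
plan = suggest_plan(2_000_000_000, 128, small_data=True)
```

Keeping the rules in one function makes the matrix easy to audit and adjust. Each threshold should still be validated on the target workload.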

In conclusion, the paper provides a data‑driven, scenario‑specific guide that enables researchers and engineers to choose the most appropriate optimizer and configure its hyper‑parameters systematically. By following the recommended practices—appropriate batch‑size scaling, learning‑rate warm‑up, cosine decay, and strategic optimizer switching—practitioners can achieve faster convergence, better resource utilization, and higher final performance across a broad spectrum of deep‑learning tasks.
