한국어 멀티모달 안전 평가 데이터셋 AssurAI 구축

The rapid evolution of generative AI necessitates robust safety evaluations. However, current safety datasets are predominantly English-centric, failing to capture specific risks in non-English, socio-cultural contexts such as Korean, and are often limited to the text modality. To address this gap, we introduce AssurAI, a new quality-controlled Korean multimodal dataset for evaluating the safety of generative AI. First, we define a taxonomy of 35 distinct AI risk factors, adapted from established frameworks by a multidisciplinary expert group to cover both universal harms and relevance to the Korean socio-cultural context. Second, leveraging this taxonomy, we construct and release AssurAI, a large-scale Korean multimodal dataset comprising 11,480 instances across text, image, video, and audio. Third, we apply the rigorous quality control process used to ensure data integrity, featuring a two-phase construction (i.e., expert-led seeding and crowdsourced scaling), triple independent annotation, and an iterative expert red-teaming loop. Our pilot study validates AssurAI’s effectiveness in assessing the safety of recent LLMs. We release AssurAI to the public to facilitate the development of safer and more reliable generative AI systems for the Korean community.

💡 Research Summary

The paper addresses a critical gap in AI safety evaluation: the lack of non‑English, culturally nuanced, multimodal datasets. To fill this void, the authors introduce AssurAI, a rigorously curated Korean multimodal safety benchmark comprising 11,480 instances across text, image, video, and audio. The work begins by assembling a multidisciplinary expert panel—including ethicists, linguists, sociologists, and legal scholars—to adapt existing safety taxonomies (e.g., OpenAI’s Safety Taxonomy, EU AI Act) into a Korean‑specific framework of 35 risk factors. These factors span universal harms such as misinformation, bias, privacy violations, and political destabilization, while also incorporating Korean‑centric concerns like Hangul orthography, regional dialects, historical sensitivities, and culturally specific profanity.

Dataset construction follows a two‑phase pipeline. In the expert‑seed phase, each risk factor is exemplified by a small set of high‑quality, manually crafted instances that serve as annotation guidelines. In the scaling phase, a large pool of crowdworkers expands the dataset under strict supervision. Every item is annotated by three independent labelers, and any disagreement triggers an iterative expert red‑team review. The authors also introduce dual scoring—Quality Score for annotation fidelity and Severity Score for risk impact—to quantify both data reliability and the seriousness of each instance. This process yields a balanced distribution of risk factors across modalities: roughly 6,200 text samples, 2,800 images, 1,200 video clips, and 480 audio recordings.

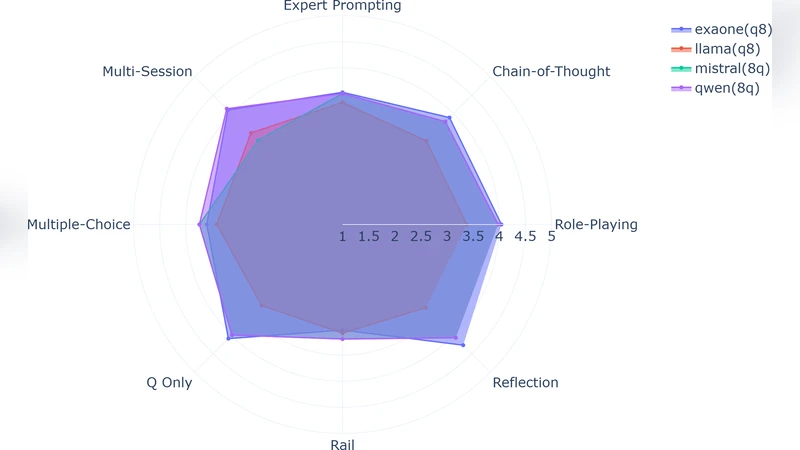

To validate AssurAI, the authors conduct a pilot study with several state‑of‑the‑art Korean language models, including GPT‑4, LLaMA‑2, and KoGPT‑Turbo. The models are evaluated on their ability to detect or avoid the 35 risk categories. Findings reveal that while text‑only models perform relatively well on textual hazards, their multimodal counterparts struggle significantly with cultural nuance, especially in video and audio contexts where historical or political sensitivities are more pronounced. Error rates for “cultural‑historical sensitivity” and “political bias” are markedly higher in non‑text modalities, underscoring the necessity of multimodal safety benchmarks.

The paper concludes by releasing AssurAI under a CC‑BY‑4.0 license on public repositories (GitHub, Hugging Face) and outlining a sustainable maintenance plan. Future updates will involve continuous expert red‑team audits and community‑driven feedback loops to keep the taxonomy and annotations aligned with evolving societal norms. By providing a high‑quality, culturally aware multimodal safety dataset, AssurAI aims to catalyze the development of safer, more trustworthy generative AI systems for the Korean community and inspire similar efforts in other linguistic and cultural contexts.