📝 Original Info

- Title: RecToM: A Benchmark for Evaluating Machine Theory of Mind in LLM-based Conversational Recommender Systems

- ArXiv ID: 2511.22275

- Date: 2025-11-27

- Authors: Researchers from original ArXiv paper

📝 Abstract

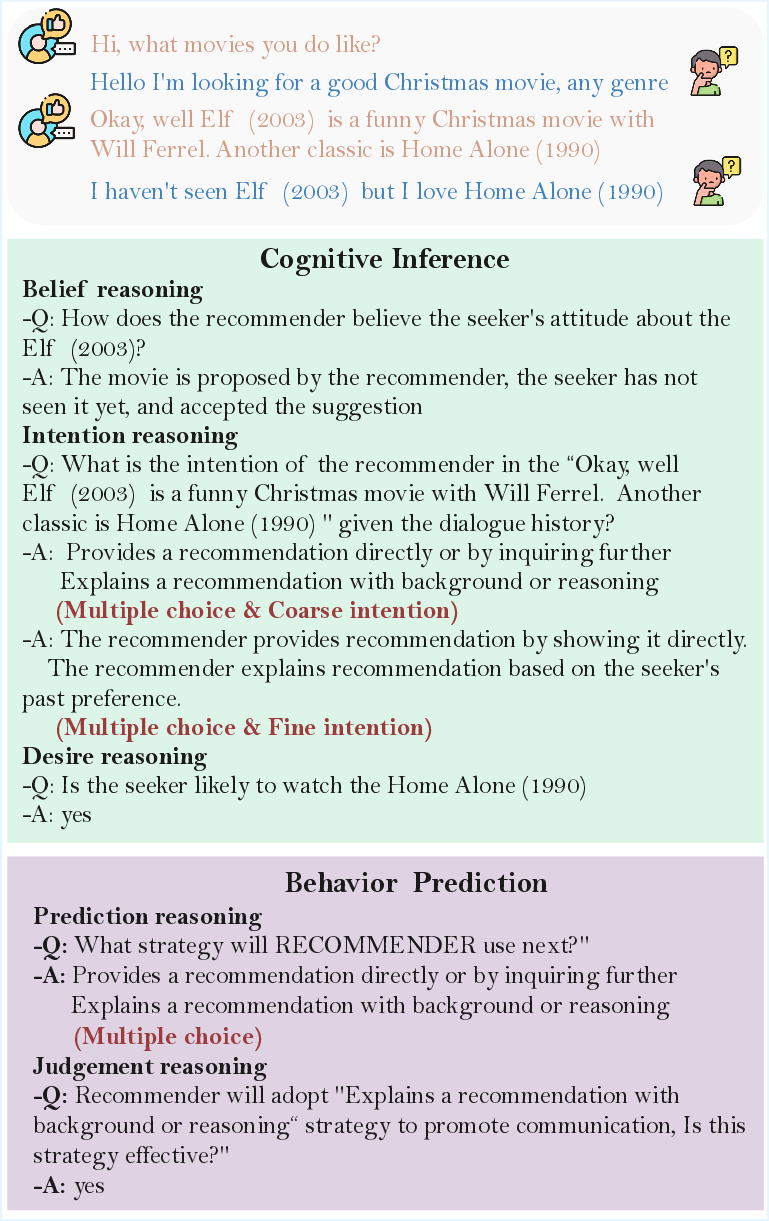

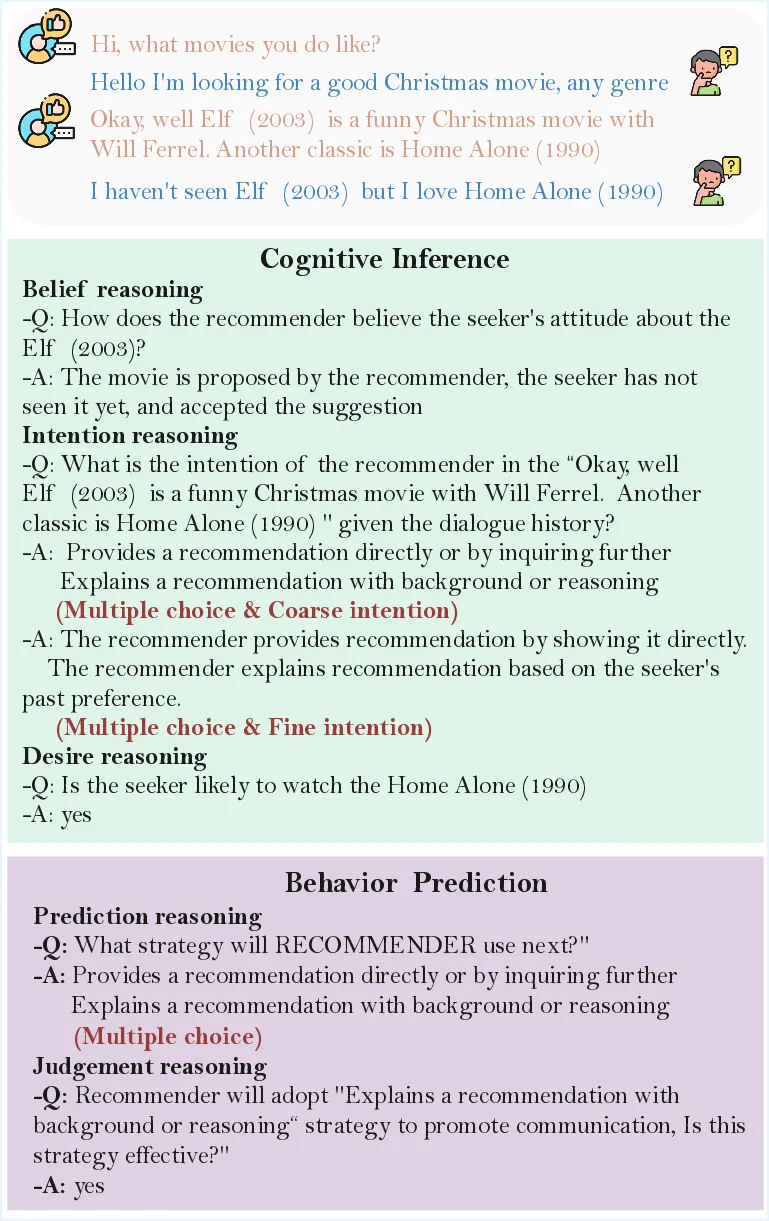

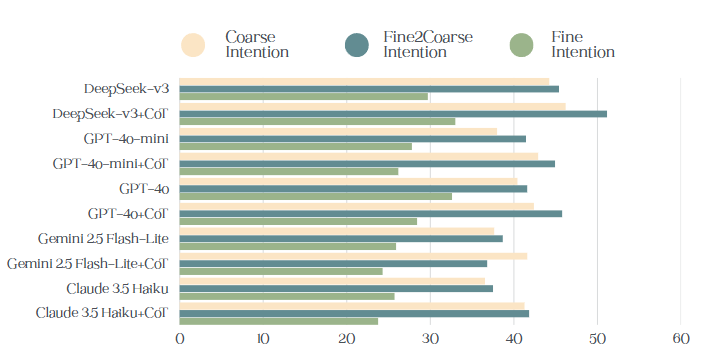

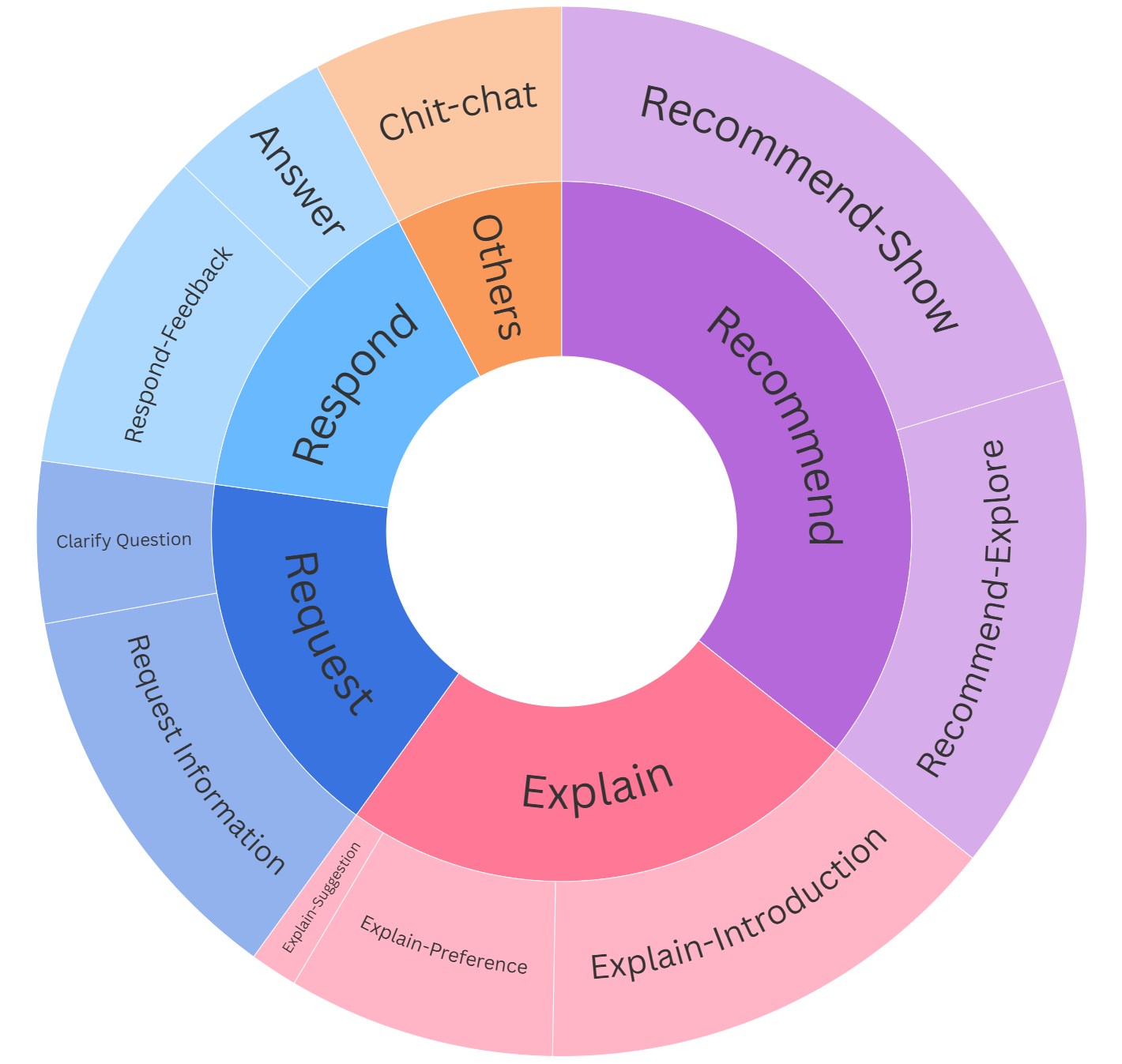

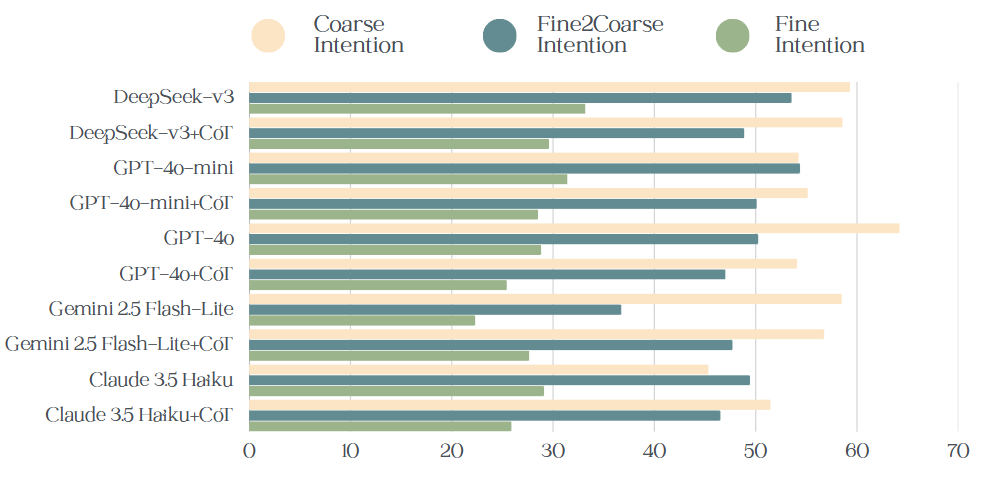

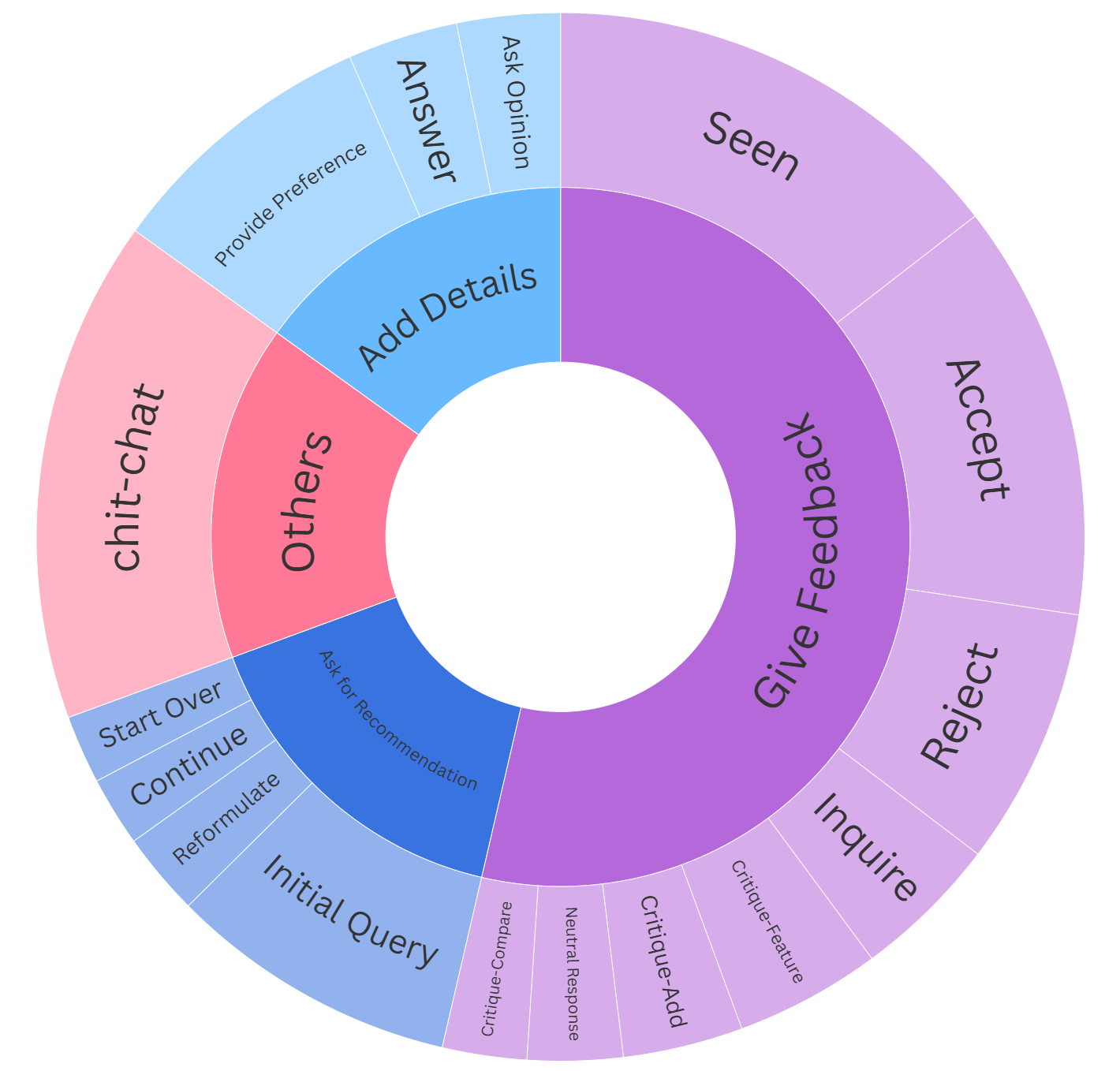

Large Language models (LLMs) are revolutionizing the conversational recommender systems (CRS) through their impressive capabilities in instruction comprehension, reasoning, and human interaction. A core factor underlying effective recommendation dialogue is the ability to infer and reason about users' mental states (such as desire, intention, and belief), a cognitive capacity commonly referred to as Theory of Mind (ToM). Despite growing interest in evaluating ToM in LLMs, current benchmarks predominantly rely on synthetic narratives inspired by Sally-Anne test, which emphasize physical perception and fail to capture the complexity of mental state inference in realistic conversational settings. Moreover, existing benchmarks often overlook a critical component of human ToM: behavioral prediction, the ability to use inferred mental states to guide strategic decision-making and select appropriate conversational actions for future interactions. To better align LLM-based ToM evaluation with human-like social reasoning, we propose RECTOM, a novel benchmark for evaluating ToM abilities in recommendation dialogues. RECTOM focuses on two complementary dimensions: Cognitive Inference and Behavioral Prediction. The former focus on understanding what has been communicated by inferring the underlying mental states. The latter emphasizes what should be done next, evaluating whether LLMs can leverage these inferred mental states to predict, select, and assess appropriate dialogue strategies. Together, these dimensions enable a comprehensive assessment of ToM reasoning in CRS. Extensive experiments on state-of-the-art LLMs demonstrate that RECTOM poses a significant challenge. While the models exhibit partial competence in recognizing mental states, they struggle to maintain coherent, strategic ToM reasoning throughout dynamic recommendation dialogues, particularly in tracking evolving intentions and aligning conversational strategies with inferred mental states.

💡 Deep Analysis

Deep Dive into RecToM: A Benchmark for Evaluating Machine Theory of Mind in LLM-based Conversational Recommender Systems.

Large Language models (LLMs) are revolutionizing the conversational recommender systems (CRS) through their impressive capabilities in instruction comprehension, reasoning, and human interaction. A core factor underlying effective recommendation dialogue is the ability to infer and reason about users’ mental states (such as desire, intention, and belief), a cognitive capacity commonly referred to as Theory of Mind (ToM). Despite growing interest in evaluating ToM in LLMs, current benchmarks predominantly rely on synthetic narratives inspired by Sally-Anne test, which emphasize physical perception and fail to capture the complexity of mental state inference in realistic conversational settings. Moreover, existing benchmarks often overlook a critical component of human ToM: behavioral prediction, the ability to use inferred mental states to guide strategic decision-making and select appropriate conversational actions for future interactions. To better align LLM-based ToM evaluation with

📄 Full Content

RecToM: A Benchmark for Evaluating Machine Theory of Mind in LLM-based

Conversational Recommender Systems

Mengfan Li1*, Xuanhua Shi1†, Yang Deng2

1National Engineering Research Center for Big Data Technology and System,

Services Computing Technology and System Lab, Cluster and Grid Computing Lab,

Huazhong University of Science and Technology

2Singapore Management University

{limf, xhshi}@hust.edu.cn, ydeng@smu.edu.sg

Abstract

Large Language models (LLMs) are revolutionizing the con-

versational recommender systems (CRS) through their im-

pressive capabilities in instruction comprehension, reasoning,

and human interaction. A core factor underlying effective rec-

ommendation dialogue is the ability to infer and reason about

users’ mental states (such as desire, intention, and belief), a

cognitive capacity commonly referred to as Theory of Mind

(ToM). Despite growing interest in evaluating ToM in LLMs,

current benchmarks predominantly rely on synthetic narra-

tives inspired by Sally-Anne test, which emphasize physical

perception and fail to capture the complexity of mental state

inference in realistic conversational settings. Moreover, ex-

isting benchmarks often overlook a critical component of hu-

man ToM: behavioral prediction, the ability to use inferred

mental states to guide strategic decision-making and select

appropriate conversational actions for future interactions. To

better align LLM-based ToM evaluation with human-like so-

cial reasoning, we propose RECTOM, a novel benchmark

for evaluating ToM abilities in recommendation dialogues.

RECTOM focuses on two complementary dimensions: Cog-

nitive Inference and Behavioral Prediction. The former fo-

cus on understanding what has been communicated by infer-

ring the underlying mental states. The latter emphasizes what

should be done next, evaluating whether LLMs can leverage

these inferred mental states to predict, select, and assess ap-

propriate dialogue strategies. Together, these dimensions en-

able a comprehensive assessment of ToM reasoning in CRS.

Extensive experiments on state-of-the-art LLMs demonstrate

that RECTOM poses a significant challenge. While the mod-

els exhibit partial competence in recognizing mental states,

they struggle to maintain coherent, strategic ToM reasoning

throughout dynamic recommendation dialogues, particularly

in tracking evolving intentions and aligning conversational

strategies with inferred mental states.

Datasets — https://github.com/CGCL-codes/RecToM

Introduction

Large Language Models (LLMs) have significantly ad-

vanced conversational recommender systems (An et al.

*Work was done during a visit at SMU.

†Corresponding author.

Copyright © 2026, Association for the Advancement of Artificial

Intelligence (www.aaai.org). All rights reserved.

2025; He et al. 2025; Huang et al. 2025; Qin et al. 2024;

Li et al. 2025), enabling significant proficiency in response

generation that closely resembles human dialogue. A key

capability that supports effective conversational recommen-

dations is the ability to understand and anticipate others’

thoughts, desires, and intentions, which is an ability widely

recognized in cognitive science as the “Theory of Mind”

(ToM) (Kosinski 2023; Zhang et al. 2025). Investigating

ToM in LLM-based conversational recommenders enables

a nuanced evaluation of the models’ competence in compre-

hending user preferences, predicting subsequent behaviors,

and strategically adapting interactions, thereby improving

user satisfaction and engagement in recommendation dia-

logues. This not only foster a deeper understanding of which

specific aspects of LLMs drive effectiveness in conversa-

tional recommendation, but also identifiers critical gaps that

require targeted improvements to align with human ToM, fa-

cilitating more engaging and satisfying user experiences.

Recent advancements in LLMs have fueled growing inter-

est in evaluating their capacity for ToM reasoning (de Car-

valho et al. 2025; Friedman et al. 2023). While several

benchmarks (Gandhi et al. 2023; Xu et al. 2024; Wu et al.

2023; Jin et al. 2024) have been proposed to evaluate ToM

in LLMs, they exhibit significant limitations for assess-

ing conversational recommender systems. One limitation

is that many existing works (Jin et al. 2024; Xu et al.

2024; Shi et al. 2025) rely on the Sally-Anne test and simi-

lar paradigms, which typically involve simplified scenarios,

such as individuals entering a space, moving objects, and

others arriving afterward. These setups lack engaging and

naturalistic interactions, rendering them ill-suited for com-

plex conversational recommendation systems. A further lim-

itation lies in the predominant focus of current benchmarks

(Chan et al. 2024; Jung et al. 2024) on retrospective rea-

soning about mental states (e.g., beliefs, intentions, desires),

based on dialogues that have already transpired. Such bench-

marks fail to capture a core aspect of human ToM: the abil-

ity to use inferred mental states to guide strat

…(Full text truncated)…

📸 Image Gallery

Reference

This content is AI-processed based on ArXiv data.