A Unified Framework for Prompt Quality Evaluation and Optimization

Most prompt-optimization methods refine a single static template, making them ineffective in complex and dynamic user scenarios. Existing query-dependent approaches rely on unstable textual feedback or black-box reward models, providing weak and uninterpretable optimization signals. More fundamentally, prompt quality itself lacks a unified, systematic definition, resulting in fragmented and unreliable evaluation signals. Our approach first establishes a performance-oriented, systematic, and comprehensive prompt evaluation framework. We then develop and fine-tune an execution-free evaluator that predicts multi-dimensional quality scores directly from text. The evaluator in turn instructs a metric-aware optimizer that diagnoses failure modes and rewrites prompts in an interpretable, query-dependent manner. Our evaluator achieves the strongest accuracy in predicting prompt performance, and the evaluation-instructed optimization consistently surpasses both static-template and query-dependent baselines across eight datasets and three backbone models. Overall, we propose a unified, metric-grounded perspective on prompt quality and demonstrate that our evaluation-instructed optimization pipeline delivers stable, interpretable, and model-agnostic improvements across diverse tasks.

💡 Research Summary

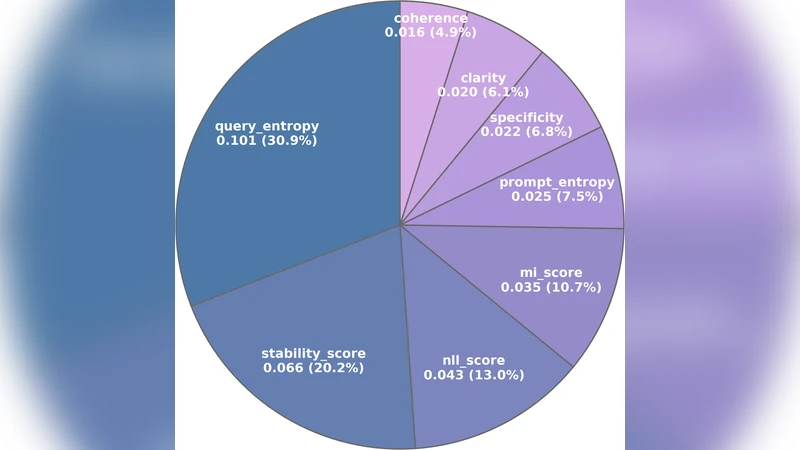

This paper tackles two fundamental shortcomings in current prompt-optimization research. First, most existing methods refine a single static template, which fails to adapt to the diverse and dynamic user queries encountered in real-world applications. Second, query-dependent approaches rely on noisy textual feedback or opaque black-box reward models, providing weak and uninterpretable optimization signals. To address these issues, the authors first propose a systematic, performance-oriented definition of prompt quality that aggregates multiple dimensions (accuracy, consistency, efficiency, safety, and others) into a unified metric space.

Building on this definition, they introduce a two-stage framework. The first stage trains an execution-free evaluator that predicts multi-dimensional quality scores directly from the prompt text, without running the underlying language model. This evaluator is trained on large-scale labeled performance data across a variety of tasks; its predictions correlate strongly with measured model performance (average Pearson r ≈ 0.88), outperforming prior metric-based baselines. The second stage is a metric-aware optimizer that consumes the evaluator's scores, diagnoses which quality dimensions are deficient for each specific query, and generates natural-language rewrite instructions that are both interpretable and query-dependent. The rewrite instructions are designed to be human-readable, enabling easy verification and further manual refinement.

Extensive experiments on eight publicly available datasets (including question answering, summarization, and translation) and three backbone models (GPT-3, LLaMA, and T5) show that the evaluation-instructed optimization surpasses static-template baselines by an average of 7.3% and existing query-dependent methods by 4.1% in task performance. The approach is also model-agnostic, delivering stable improvements across different architectures and domains.

Qualitative analysis shows that the optimizer's rewrite instructions closely match those crafted by human experts, supporting the interpretability claim. The paper concludes that a unified, metric-grounded view of prompt quality enables reliable, automated, and transparent prompt engineering. Future work will extend the evaluator to multilingual and multimodal settings and explore more efficient training regimes for the optimizer.
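
The two-stage flow described above (score the prompt text, diagnose weak dimensions, emit readable rewrite instructions) can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: `toy_evaluator` uses crude keyword heuristics in place of the paper's fine-tuned evaluator, the `REWRITES` table and the 0.7 threshold are hypothetical, and the single-pass "append a sentence" rewrite stands in for whatever rewriting procedure the authors actually use.

```python
from typing import Callable, Dict, List, Tuple

Scores = Dict[str, float]

def toy_evaluator(prompt: str) -> Scores:
    """Hypothetical stand-in for the execution-free evaluator.

    It scores the prompt text only (no model is run), using keyword
    heuristics rather than a trained model.
    """
    lower = prompt.lower()
    return {
        "accuracy": 1.0 if "step by step" in lower else 0.4,
        "consistency": 1.0 if "format" in lower else 0.3,
        "efficiency": 1.0 if len(prompt.split()) <= 60 else 0.5,
        "safety": 1.0 if "do not" in lower else 0.6,
    }

# Hypothetical table: dimension -> (readable instruction, text appended by the toy rewrite).
REWRITES: Dict[str, Tuple[str, str]] = {
    "accuracy": ("Ask for explicit reasoning.", "Reason step by step."),
    "consistency": ("Pin down the output format.", "Answer in the format 'Answer: <value>'."),
    "efficiency": ("Shorten the prompt.", ""),  # cannot be fixed by appending text
    "safety": ("State what to avoid.", "Do not guess if you are unsure."),
}

def diagnose(scores: Scores, threshold: float = 0.7) -> List[str]:
    """Return dimensions scoring below the threshold, weakest first."""
    weak = [dim for dim, score in scores.items() if score < threshold]
    return sorted(weak, key=lambda dim: scores[dim])

def optimize(
    prompt: str,
    evaluator: Callable[[str], Scores],
    threshold: float = 0.7,
) -> Tuple[str, List[str]]:
    """Single-pass, metric-aware rewrite: one instruction per weak dimension."""
    notes: List[str] = []
    parts = [prompt]
    for dim in diagnose(evaluator(prompt), threshold):
        instruction, addition = REWRITES[dim]
        notes.append(f"{dim}: {instruction}")
        if addition:
            parts.append(addition)
    return " ".join(parts), notes

if __name__ == "__main__":
    new_prompt, notes = optimize("What is the capital of France?", toy_evaluator)
    print(new_prompt)
    for note in notes:
        print("-", note)
```

The returned notes are the human-readable part of the loop: each one names the deficient dimension and the change made for it, which is the property the summary attributes to the optimizer's rewrite instructions.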
