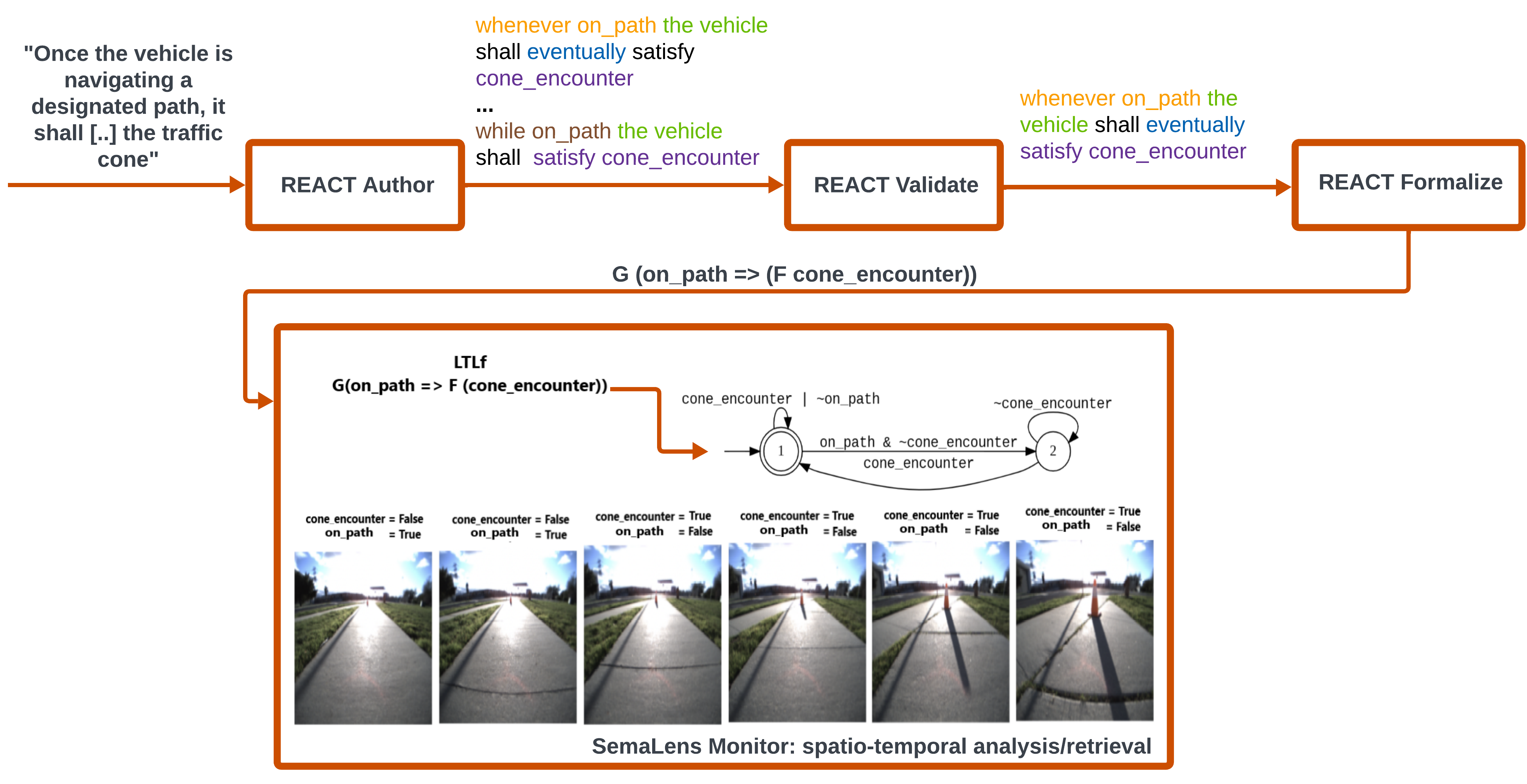

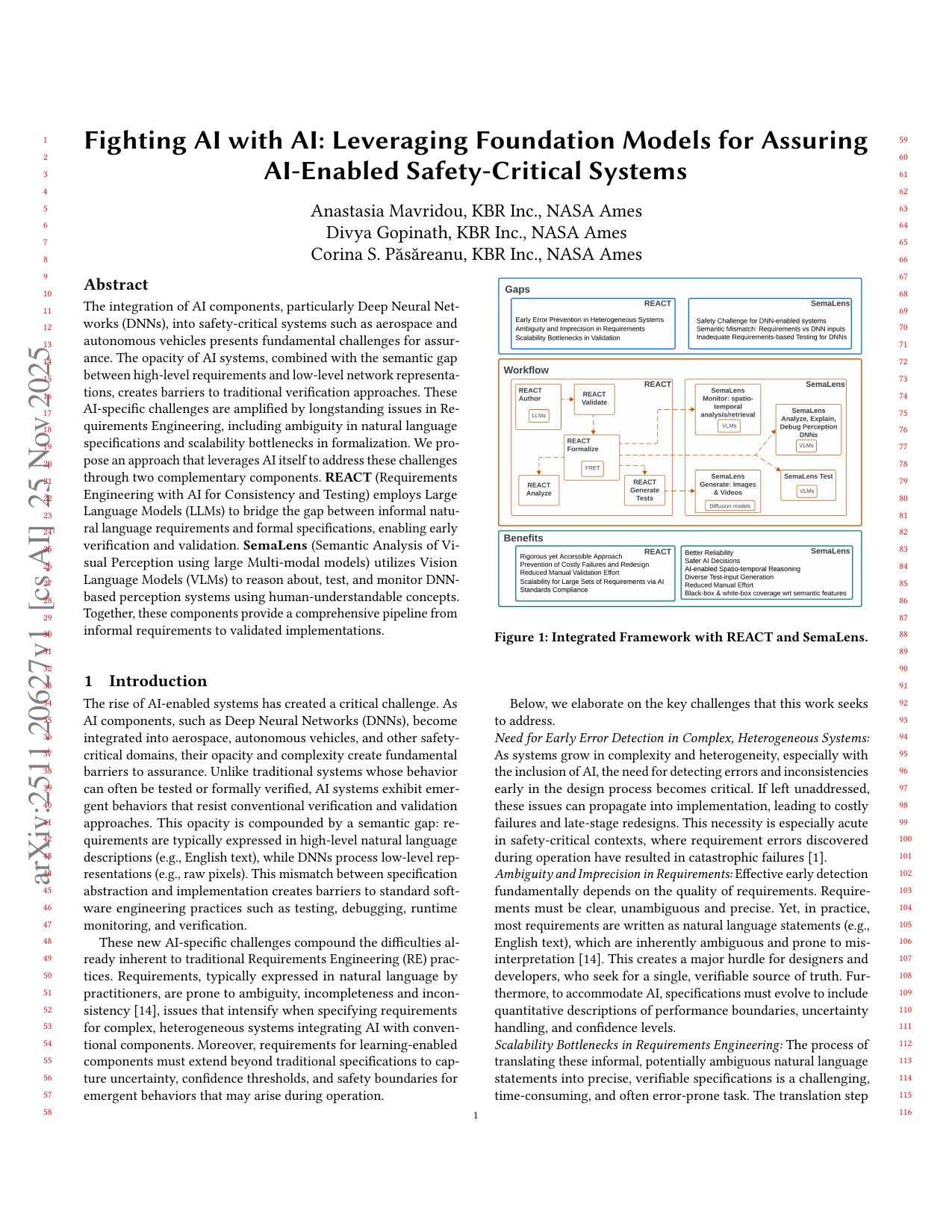

The integration of AI components, particularly Deep Neural Networks (DNNs), into safety-critical systems such as aerospace and autonomous vehicles presents fundamental challenges for assurance. The opacity of AI systems, combined with the semantic gap between high-level requirements and low-level network representations, creates barriers to traditional verification approaches. These AI-specific challenges are amplified by longstanding issues in Requirements Engineering, including ambiguity in natural language specifications and scalability bottlenecks in formalization. We propose an approach that leverages AI itself to address these challenges through two complementary components. REACT (Requirements Engineering with AI for Consistency and Testing) employs Large Language Models (LLMs) to bridge the gap between informal natural language requirements and formal specifications, enabling early verification and validation. SemaLens (Semantic Analysis of Visual Perception using large Multi-modal models) utilizes Vision Language Models (VLMs) to reason about, test, and monitor DNN-based perception systems using human-understandable concepts. Together, these components provide a comprehensive pipeline from informal requirements to validated implementations.

Deep Dive into an Integrated Requirements Engineering and Visual Perception Verification Framework for AI-Based Safety-Critical Systems.

arXiv:2511.20627v1 [cs.AI] 25 Nov 2025

Fighting AI with AI: Leveraging Foundation Models for Assuring AI-Enabled Safety-Critical Systems

Anastasia Mavridou, KBR Inc., NASA Ames

Divya Gopinath, KBR Inc., NASA Ames

Corina S. Păsăreanu, KBR Inc., NASA Ames

Abstract

The integration of AI components, particularly Deep Neural Networks (DNNs), into safety-critical systems such as aerospace and autonomous vehicles presents fundamental challenges for assurance. The opacity of AI systems, combined with the semantic gap between high-level requirements and low-level network representations, creates barriers to traditional verification approaches. These AI-specific challenges are amplified by longstanding issues in Requirements Engineering, including ambiguity in natural language specifications and scalability bottlenecks in formalization. We propose an approach that leverages AI itself to address these challenges through two complementary components. REACT (Requirements Engineering with AI for Consistency and Testing) employs Large Language Models (LLMs) to bridge the gap between informal natural language requirements and formal specifications, enabling early verification and validation. SemaLens (Semantic Analysis of Visual Perception using large Multi-modal models) utilizes Vision Language Models (VLMs) to reason about, test, and monitor DNN-based perception systems using human-understandable concepts. Together, these components provide a comprehensive pipeline from informal requirements to validated implementations.

1 Introduction

The rise of AI-enabled systems has created a critical challenge. As AI components, such as Deep Neural Networks (DNNs), become integrated into aerospace, autonomous vehicles, and other safety-critical domains, their opacity and complexity create fundamental barriers to assurance. Unlike traditional systems whose behavior can often be tested or formally verified, AI systems exhibit emergent behaviors that resist conventional verification and validation approaches. This opacity is compounded by a semantic gap: requirements are typically expressed in high-level natural language descriptions (e.g., English text), while DNNs process low-level representations (e.g., raw pixels). This mismatch between specification abstraction and implementation creates barriers to standard software engineering practices such as testing, debugging, runtime monitoring, and verification.

These new AI-specific challenges compound the difficulties already inherent to traditional Requirements Engineering (RE) practices. Requirements, typically expressed in natural language by practitioners, are prone to ambiguity, incompleteness, and inconsistency [14], issues that intensify when specifying requirements for complex, heterogeneous systems integrating AI with conventional components. Moreover, requirements for learning-enabled components must extend beyond traditional specifications to capture uncertainty, confidence thresholds, and safety boundaries for emergent behaviors that may arise during operation.
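To make the idea of a confidence threshold in a requirement concrete, the following is a minimal sketch, not taken from the paper. The names `Detection` and `ConfidenceRequirement`, the label `"runway"`, and the threshold 0.9 are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Detection:
    """One output of a (hypothetical) perception component."""
    label: str
    confidence: float


@dataclass(frozen=True)
class ConfidenceRequirement:
    """Illustrative requirement: every report of `label` must carry
    at least `min_confidence`. Both fields are assumed, not from the paper."""
    label: str
    min_confidence: float

    def violations(self, detections: Sequence[Detection]) -> List[int]:
        # Return the indices of detections that report the label of
        # interest but fall below the required confidence.
        return [
            i
            for i, d in enumerate(detections)
            if d.label == self.label and d.confidence < self.min_confidence
        ]


detections = [
    Detection("runway", 0.97),
    Detection("runway", 0.62),   # below the threshold
    Detection("taxiway", 0.40),  # different label, not covered by the requirement
]
requirement = ConfidenceRequirement(label="runway", min_confidence=0.9)
low_confidence = requirement.violations(detections)
```

Stating the threshold as data rather than prose means the same record can be checked automatically, which a purely textual requirement does not allow.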

Figure 1: Integrated Framework with REACT and SemaLens.

Below, we elaborate on the key challenges that this work seeks to address.

Need for Early Error Detection in Complex, Heterogeneous Systems: As systems grow in complexity and heterogeneity, especially with the inclusion of AI, the need for detecting errors and inconsistencies early in the design process becomes critical. If left unaddressed, these issues can propagate into implementation, leading to costly failures and late-stage redesigns. This necessity is especially acute in safety-critical contexts, where requirement errors discovered during operation have resulted in catastrophic failures [1].

Ambiguity and Imprecision in Requirements: Effective early detection fundamentally depends on the quality of requirements. Requirements must be clear, unambiguous, and precise. Yet, in practice, most requirements are written as natural language statements (e.g., English text), which are inherently ambiguous and prone to misinterpretation [14]. This creates a major hurdle for designers and developers, who seek a single, verifiable source of truth. Furthermore, to accommodate AI, specifications must evolve to include quantitative descriptions of performance boundaries, uncertainty handling, and confidence levels.
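As an illustration of how one natural language sentence can admit several formal readings, consider a hypothetical requirement (not from the paper): "When an obstacle is detected, the vehicle shall brake within 2 time steps." The sketch below encodes two plausible readings over a finite Boolean trace and shows that they disagree. The helper names, the trace, and the choice to truncate the response window at the end of the trace are all assumptions made for this example.

```python
from typing import List, Sequence


def responds_within(trigger: Sequence[bool],
                    response: Sequence[bool],
                    bound: int) -> bool:
    """True if every step where `trigger` holds is followed by `response`
    at that step or within the next `bound` steps. The window is cut off
    at the end of the trace (an assumed finite-trace convention)."""
    return all(
        any(response[t : t + bound + 1])
        for t in range(len(trigger))
        if trigger[t]
    )


def rising_edges(signal: Sequence[bool]) -> List[bool]:
    """Mark only the steps where `signal` switches from False to True."""
    return [s and (i == 0 or not signal[i - 1]) for i, s in enumerate(signal)]


# Hypothetical trace: the obstacle stays in view for four steps,
# but the vehicle brakes only once.
obstacle = [False, True, True, True, True, False]
brake = [False, False, True, False, False, False]

# Reading 1: braking must follow every step at which the obstacle is seen.
holds_while_detected = responds_within(obstacle, brake, 2)

# Reading 2: braking must follow only the first detection of the obstacle.
holds_on_first_detection = responds_within(rising_edges(obstacle), brake, 2)
```

On this trace the first reading is violated and the second is satisfied, so a designer and a developer who picked different readings would reach opposite verdicts about the same behavior.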

Scalability Bottlenecks in Requirements Engineering: The process of translating these informal, potentially ambiguous natural language statements into precise, verifiable specifications is a challenging, time-consuming, and often error-prone task. The translation step

…(Full text truncated)…
