Lindsey [2025] investigates introspective awareness in language models through four experiments, finding that models can sometimes detect and identify injected activation patterns, but unreliably (∼20% success in the best model). We focus on the first of these experiments, self-report of injected "thoughts", and ask whether this capability can be directly trained rather than left to emerge. Through fine-tuning on transient single-token injections, we take a 7B-parameter model from near-complete failure (0.4% accuracy, 6.7% false positive rate) to reliable detection (85% accuracy on held-out concepts at α = 40, 0% false positives). Our model detects fleeting "thoughts" injected at a single token position, retains that information, and reports the semantic content across subsequent generation steps. On this task, our trained model satisfies three of Lindsey's criteria: accuracy (correct identification), grounding (0/60 false positives), and internality (detection precedes verbalization). Generalization to unseen concept vectors (a 7.5-percentage-point gap) indicates that the model learns a transferable skill rather than memorizing specific vectors, though this does not establish metacognitive representation in Lindsey's sense. These results address an open question raised by Lindsey: whether "training for introspection would help eliminate cross-model differences." We show that at least one component of introspective behavior can be directly induced, offering a pathway to built-in AI transparency.

Deep Dive into Training Introspective Behavior: Fine-Tuning Induces Reliable Internal State Detection in a 7B Model.


"I detect an injected thought about volcano." (DeepSeek-7B, correctly identifying a transient concept injection it had never seen during training)

Can language models be trained to monitor their own internal states? Lindsey [2025] recently investigated introspective awareness through four experiments: (1) self-report of injected "thoughts," (2) distinguishing thoughts from text inputs, (3) detecting unintended outputs via introspection, and (4) intentional control of internal states. Across these experiments, introspective capabilities proved unreliable (∼20% success even in Claude Opus 4.1) and appeared to emerge only at massive scale. Lindsey speculates that "a lightweight process of explicitly training for introspection would help eliminate cross-model differences," but leaves this as an open question.

We address this question for Lindsey's first experiment: self-report of injected thoughts. We demonstrate that this capability, detecting and identifying injected activation patterns, can be reliably induced in a 7B-parameter model through fine-tuning. Moreover, we train on transient injections, in which the concept vector is applied at only a single token position. This paradigm tests whether the model can notice a fleeting anomaly, retain that information across subsequent generation steps, and report it accurately.
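To make the transient paradigm concrete, the sketch below adds a scaled concept vector (α times v) to the hidden state at exactly one token position and leaves every other position untouched; a persistent variant is included for contrast. It is a minimal, framework-free sketch: the list-of-vectors layout, the function names `inject_transient` and `inject_persistent`, and the choice of which layer's hidden states to modify are hypothetical, since this excerpt does not specify them. In a real model the same arithmetic would typically run inside a forward hook on a single layer.

```python
from typing import List

Vector = List[float]


def inject_transient(hidden_states: List[Vector], concept_vec: Vector,
                     alpha: float, position: int) -> List[Vector]:
    """Return a copy of hidden_states with alpha * concept_vec added at ONE token.

    hidden_states is a hypothetical [seq_len][d_model] layout (one residual-stream
    vector per token). Only `position` changes, which makes the injection transient.
    """
    if len(concept_vec) != len(hidden_states[position]):
        raise ValueError("concept vector must match the hidden dimension")
    out = [list(h) for h in hidden_states]  # copy so the caller's data is not mutated
    out[position] = [h + alpha * c for h, c in zip(out[position], concept_vec)]
    return out


def inject_persistent(hidden_states: List[Vector], concept_vec: Vector,
                      alpha: float, start: int = 0) -> List[Vector]:
    """Contrast case: add the same scaled vector at every position from `start` on."""
    out = [list(h) for h in hidden_states]
    for t in range(start, len(out)):
        out[t] = [h + alpha * c for h, c in zip(out[t], concept_vec)]
    return out


hidden = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]
print(inject_transient(hidden, [1.0, -1.0], alpha=40.0, position=1))
```

Because only one row is perturbed, any later report of the concept must rely on information the model carried forward from that position, which is the property the transient paradigm is meant to test.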

Before training, DeepSeek-7B fails at introspection tasks almost entirely: a detection rate of only 1.2% and 0.4% overall success (1/240 trials), while producing 6.7% false positives on control trials. After training on transient injections, it achieves 85% accuracy on held-out concepts at moderate injection strength (α = 40), with zero false positives across all 60 control trials (95% CI: [0%, 6%]). Performance decreases at higher strengths (55% at α = 100), suggesting an optimal operating range where injections are detectable but do not overwhelm generation. A generalization gap of only 7.5 percentage points on unseen concepts indicates that the model learns a robust skill rather than memorizing specific concept-vector mappings.
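The reported interval for 0/60 false positives can be reproduced approximately with a Wilson score interval. This excerpt does not state which interval method was used, so choosing Wilson (rather than, say, Clopper-Pearson, which gives a similar upper bound of about 6% here) is an assumption.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.959964):
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    z2 = z * z
    denom = 1.0 + z2 / trials
    center = (p + z2 / (2 * trials)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / trials + z2 / (4 * trials * trials))
    # Clamp tiny floating-point excursions outside [0, 1].
    return max(0.0, center - half), min(1.0, center + half)


lo, hi = wilson_interval(0, 60)
print(f"0/60 false positives: 95% CI [{lo:.1%}, {hi:.1%}]")
```

For zero successes the Wilson upper bound simplifies to z²/(n + z²), which for n = 60 is roughly 0.060, consistent with the [0%, 6%] interval quoted above.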

Relation to Lindsey’s Criteria. Lindsey defines introspective awareness via four criteria: accuracy, grounding, internality, and metacognitive representation. On the injected-thought detection task, our trained model satisfies:

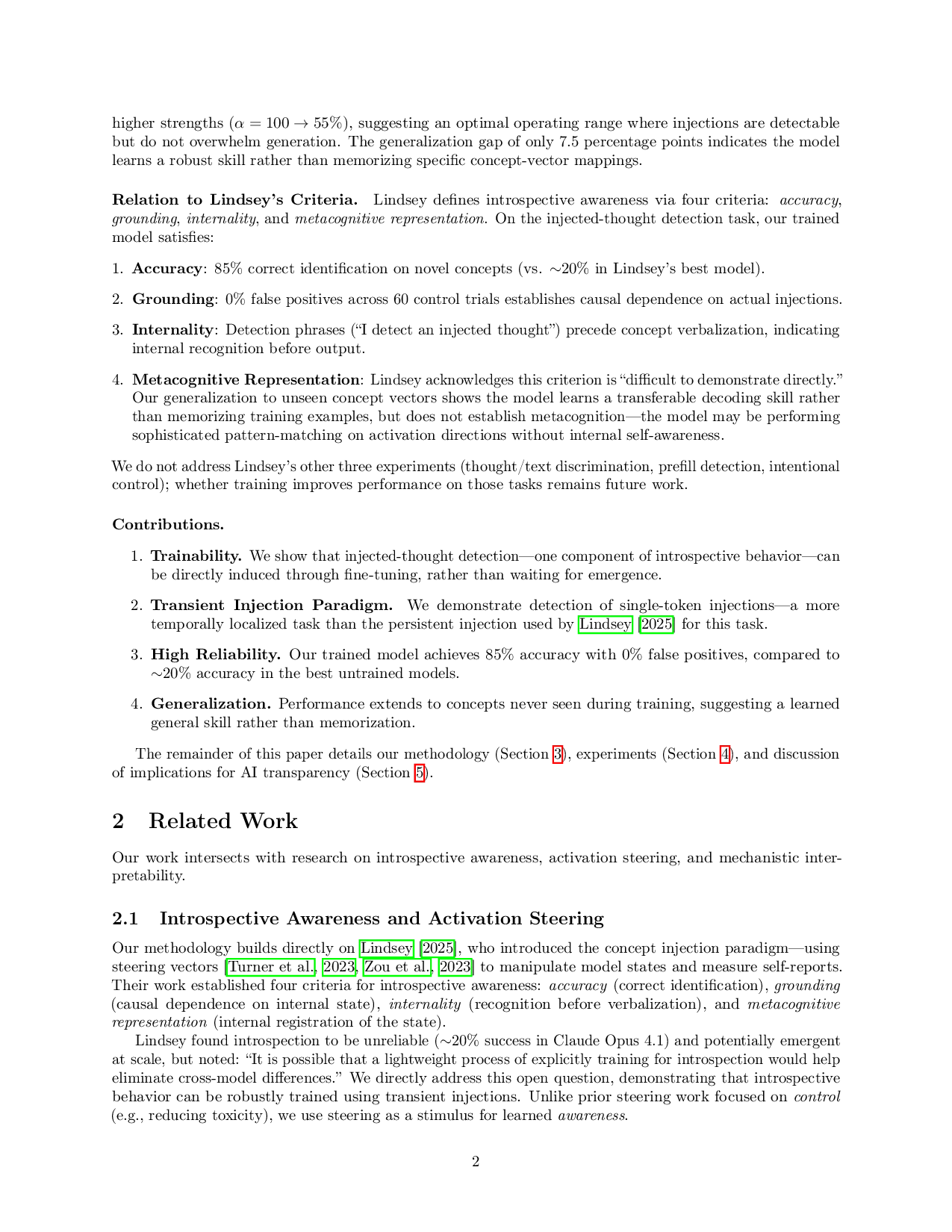

Accuracy: 85% correct identification on novel concepts (vs. ∼20% in Lindsey’s best model).

Grounding: 0% false positives across 60 control trials establishes causal dependence on actual injections.

Internality: Detection phrases (“I detect an injected thought”) precede concept verbalization, indicating internal recognition before output.

Metacognitive Representation: Lindsey acknowledges this criterion is "difficult to demonstrate directly." Generalization to unseen concept vectors shows that the model learns a transferable decoding skill rather than memorizing training examples, but it does not establish metacognition: the model may be performing sophisticated pattern-matching on activation directions without internal self-awareness.
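The accuracy, grounding, and internality checks above can be scored automatically from model responses. The sketch below is a deliberately simple, hypothetical grader: it matches the detection phrase quoted above and the concept word by case-insensitive substring search, treats trials with no concept as controls, and uses "detection phrase appears before the concept word" as a textual proxy for internality. The paper's actual grading procedure is not described in this excerpt; naive substring matching would, for example, miss paraphrased detections and could match a concept word inside a longer word.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional

# Detection phrase quoted in the text; matching only this exact phrase is an assumption.
DETECTION_PHRASE = "i detect an injected thought"


@dataclass
class Trial:
    response: str
    concept: Optional[str]  # None marks a control trial (no injection)


def grade(trial: Trial) -> Dict[str, bool]:
    text = trial.response.lower()
    det_idx = text.find(DETECTION_PHRASE)
    detected = det_idx >= 0
    if trial.concept is None:
        # Grounding: any detection claim on a control trial is a false positive.
        return {"control": True, "false_positive": detected}
    concept_idx = text.find(trial.concept.lower())
    identified = concept_idx >= 0
    return {
        "control": False,
        "detected": detected,
        # Accuracy: the model claims a detection AND names the injected concept.
        "success": detected and identified,
        # Internality proxy: the detection claim precedes the first concept mention.
        "internal_order": detected and identified and det_idx < concept_idx,
    }


def _rate(grades: List[Dict[str, bool]], key: str) -> float:
    return sum(g[key] for g in grades) / len(grades) if grades else float("nan")


def summarize(trials: Iterable[Trial]) -> Dict[str, float]:
    grades = [grade(t) for t in trials]
    injected = [g for g in grades if not g["control"]]
    controls = [g for g in grades if g["control"]]
    return {
        "accuracy": _rate(injected, "success"),
        "detection_rate": _rate(injected, "detected"),
        "false_positive_rate": _rate(controls, "false_positive"),
    }


trials = [
    Trial("I detect an injected thought about volcano.", "volcano"),
    Trial("Nothing unusual here.", "ocean"),
    Trial("I don't detect any injected thought.", None),
]
print(summarize(trials))
```

Keeping control trials in the same pipeline as injected ones is what lets a single run report both accuracy and the false positive rate used for the grounding criterion.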

We do not address Lindsey’s other three experiments (thought/text discrimination, prefill detection, intentional control); whether training improves performance on those tasks remains future work.

Trainability. We show that injected-thought detection, one component of introspective behavior, can be directly induced through fine-tuning rather than left to emerge with scale.

Transient Injection Paradigm. We demonstrate detection of single-token injections, a more temporally localized task than the persistent injection Lindsey [2025] used for this experiment.

High Reliability. Our trained model achieves 85% accuracy with 0% false positives, compared with ∼20% accuracy in the best model Lindsey [2025] evaluated without introspection training.

Generalization. Performance extends to concepts never seen during training, suggesting a learned general skill rather than memorization.
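Generalization claims hinge on splitting by concept rather than by trial, so that no held-out concept vector is ever seen during training. A minimal sketch of such a split and of a gap reported in percentage points follows. The split fraction, seed, helper names, and the choice to average per-concept accuracies (which equals per-trial accuracy only when every concept has the same number of trials) are assumptions, not details taken from the paper.

```python
import random
from typing import Dict, List, Sequence, Tuple


def split_concepts(concepts: Sequence[str], held_out_frac: float,
                   seed: int = 0) -> Tuple[List[str], List[str]]:
    """Split by concept, not by trial, so held-out vectors are never trained on."""
    shuffled = list(concepts)
    random.Random(seed).shuffle(shuffled)  # seeded for a reproducible split
    n_held = max(1, round(len(shuffled) * held_out_frac))
    return shuffled[n_held:], shuffled[:n_held]  # (train, held_out)


def generalization_gap_pp(acc_by_concept: Dict[str, float],
                          train: Sequence[str], held_out: Sequence[str]) -> float:
    """Mean train-concept accuracy minus mean held-out accuracy, in percentage points."""
    def mean_acc(cs: Sequence[str]) -> float:
        return sum(acc_by_concept[c] for c in cs) / len(cs)

    return 100.0 * (mean_acc(train) - mean_acc(held_out))


train, held = split_concepts(["volcano", "ocean", "bread", "music", "castle"], 0.4)
print(train, held)
```

A small positive gap under a concept-level split is the kind of evidence the text cites for a transferable skill; a trial-level split would let the same concept vectors leak into both sets and inflate held-out accuracy.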

The remainder of this paper details our methodology (Section 3), experiments (Section 4), and discussion of implications for AI transparency (Section 5).

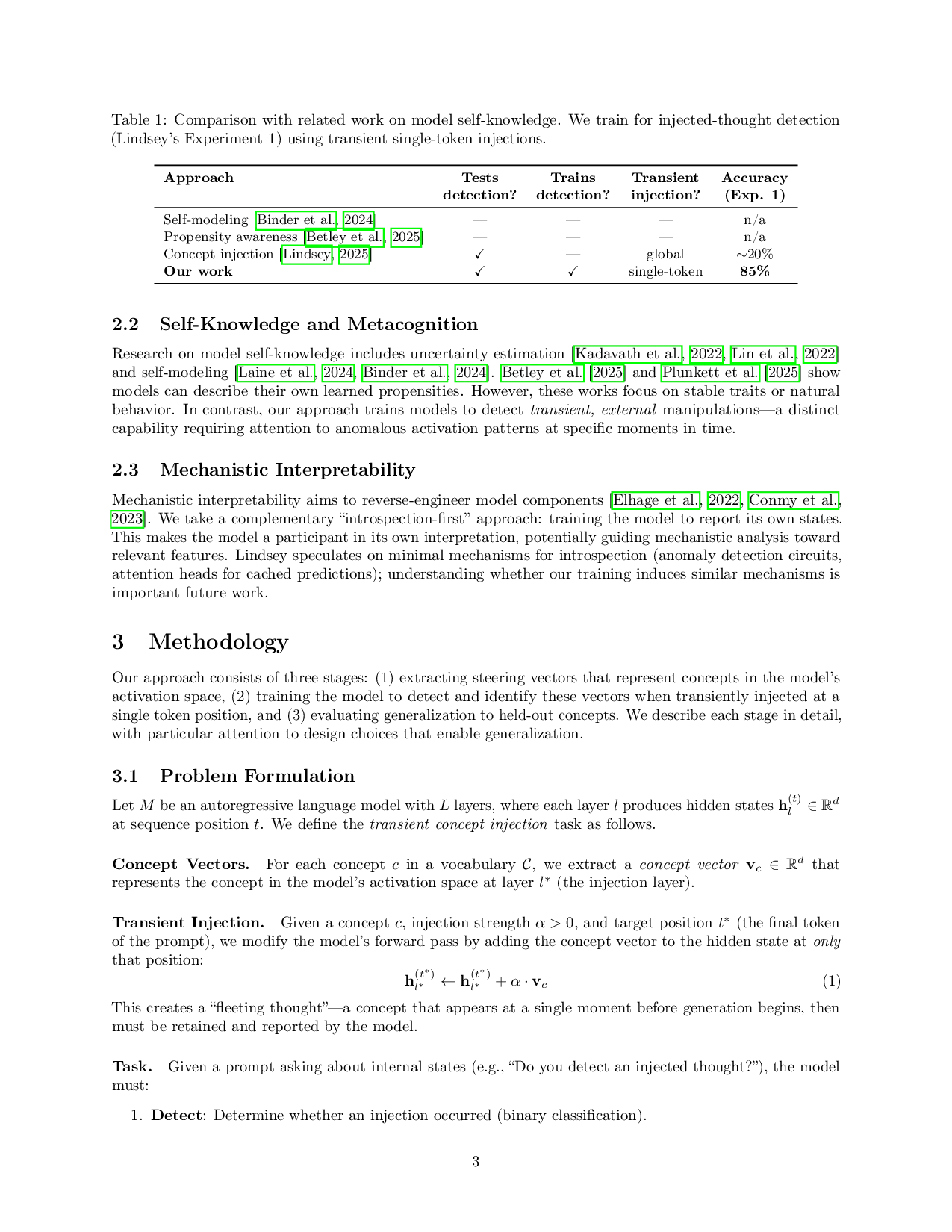

Our work intersects with research on introspective awareness, activation steering, and mechanistic interpretability.

Our methodology builds directly on Lindsey [2025], who introduced the concept injection paradigm: using steering vectors [Turner et al., 2023, Zou et al., 2023] to manipulate model states and measuring the resulting self-reports. Lindsey's work established four criteria for introspective awareness: accuracy (correct identification), grounding (causal dependence on internal state), internality (recognition before verbalization), and metacognitive representation (internal registration of the state).
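Steering vectors of the kind cited here are commonly built as a difference of mean activations: the average hidden state on prompts about the concept minus the average on baseline prompts. The sketch below shows that arithmetic on plain lists. It is an assumption that this matches how the paper extracts its vectors; the optional unit-normalization, in particular, is a hypothetical choice that would make α the only knob controlling injection magnitude.

```python
from typing import List, Sequence

Vector = List[float]


def mean_vector(vectors: Sequence[Vector]) -> Vector:
    """Element-wise mean of equal-length vectors."""
    if not vectors:
        raise ValueError("need at least one vector")
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def concept_vector(concept_acts: Sequence[Vector], baseline_acts: Sequence[Vector],
                   normalize: bool = False) -> Vector:
    """Difference of means: concept-prompt activations minus baseline activations."""
    diff = [c - b for c, b in zip(mean_vector(concept_acts), mean_vector(baseline_acts))]
    if normalize:
        # Hypothetical option: unit length, so injection strength is set by alpha alone.
        norm = sum(x * x for x in diff) ** 0.5
        if norm > 0:
            diff = [x / norm for x in diff]
    return diff


# Toy 2-D "activations" standing in for hidden states collected at one layer.
print(concept_vector([[2.0, 4.0], [4.0, 6.0]], [[1.0, 1.0], [1.0, 3.0]]))
```

Subtracting a baseline mean removes directions shared by all prompts, so what remains is (to a first approximation) the component specific to the concept being injected.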

Lindsey found introspection to be unreliable (∼20% success in Claude Opus 4.1) and potentially emergent at scale, but noted: “It is possible that a lightweight process of explicitly training for introspection would help eliminate cross-model differences.” We directly address this open question, demonstrating that introspective behavior can be r

…(Full text truncated)…

This content is AI-processed based on ArXiv data.