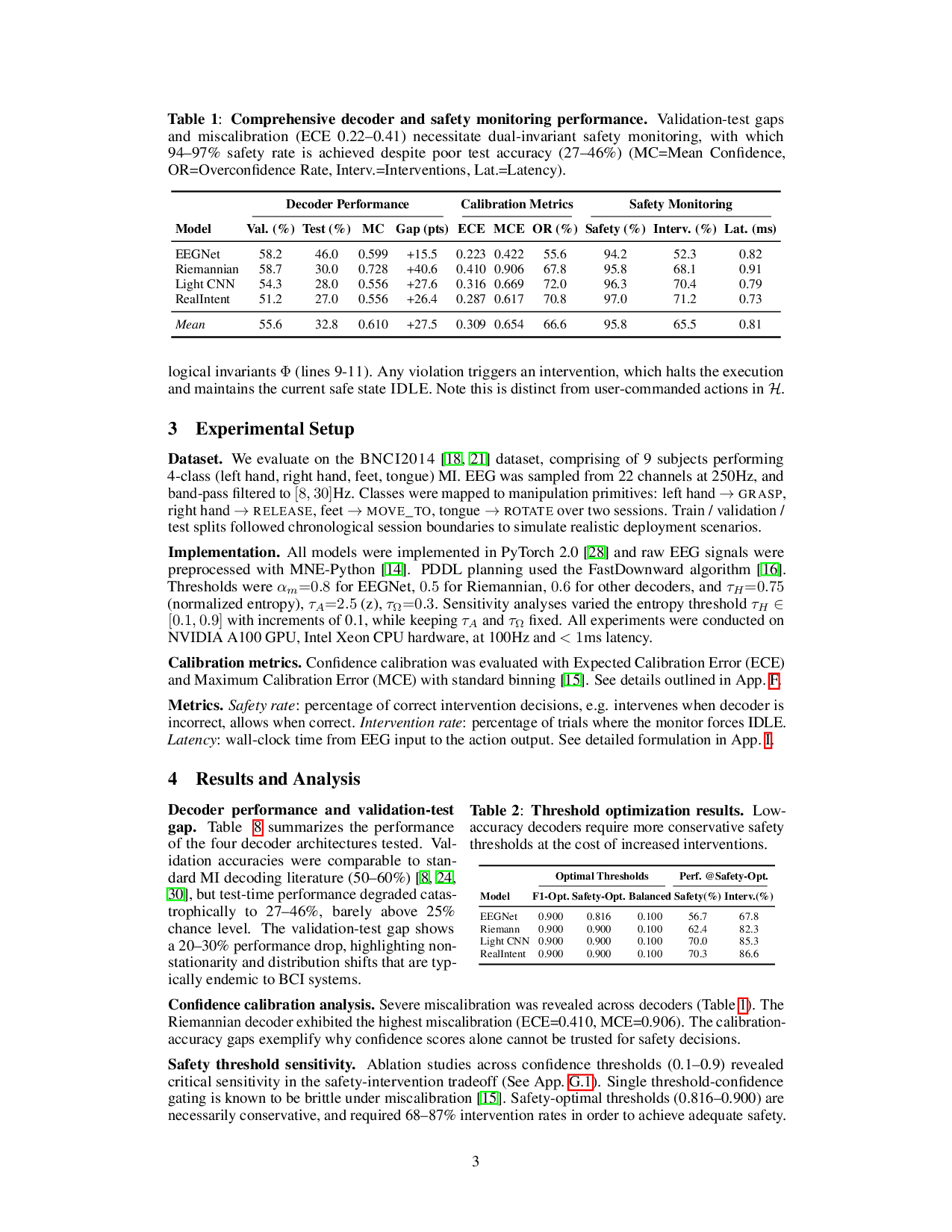


Gated Uncertainty-Aware Runtime Dual Invariants for Neural Signal-Controlled Robotics

Tasha Kim

Oxford Robotics Institute (ORI)

Department of Engineering Science

University of Oxford

tashakim@eng.ox.ac.uk

Oiwi Parker Jones

Oxford Robotics Institute (ORI)

Department of Engineering Science

University of Oxford

oiwi.parkerjones@eng.ox.ac.uk

Abstract

Safety-critical assistive systems that directly decode user intent from neural signals require rigorous guarantees of reliability and trust. We present GUARDIAN (Gated Uncertainty-Aware Runtime Dual Invariants), a framework for real-time neuro-symbolic verification in neural signal-controlled robotics. GUARDIAN enforces both logical safety and physiological trust by coupling confidence-calibrated brain signal decoding with symbolic goal grounding and dual-layer runtime monitoring. On the BNCI2014 motor imagery electroencephalogram (EEG) dataset (9 subjects, 5,184 trials), the system achieves a high safety rate of 94–97%, even with lightweight decoder architectures that have low test accuracies (27–46%) and substantial confidence miscalibration (expected calibration error, ECE, of 0.22–0.41). In simulated noise testing, GUARDIAN makes ≈1.7× as many correct interventions as the baseline. The monitor operates at 100 Hz with sub-millisecond decision latency, making it practical for closed-loop neural signal-based systems. Across 21 ablation results, GUARDIAN exhibits a graduated response to signal degradation and produces auditable traces from intent to plan to action, helping to link neural evidence to verifiable robot action.

1 Introduction

Neural signal-controlled robots have significant potential to improve accessibility for individuals with limited mobility, but they also introduce important safety risks [32]. Maintaining runtime accuracy and implementing reliable intervention mechanisms are paramount in closed-loop systems to ensure user safety [6, 10, 17]. Recent closed-loop EEG-based assistive systems [23, 34] and AI-enabled brain-computer interfaces (BCIs) [25] show encouraging progress, but they remain vulnerable to ambiguous user intent, a known challenge in shared autonomy and teleoperation [12, 19, 20]. They also suffer from signal degradation during long-horizon tasks [22, 27, 29, 31] and lack formal or interpretable safety mechanisms such as runtime assurance [6, 17] or shielding [7]. We propose GUARDIAN, a physiological runtime verification architecture that provides an auditable, explainable layer between neural decoding and execution. GUARDIAN acts as a safety gate that complements neurally controlled systems, providing tracing and intervention tooling without disrupting real-time operation.

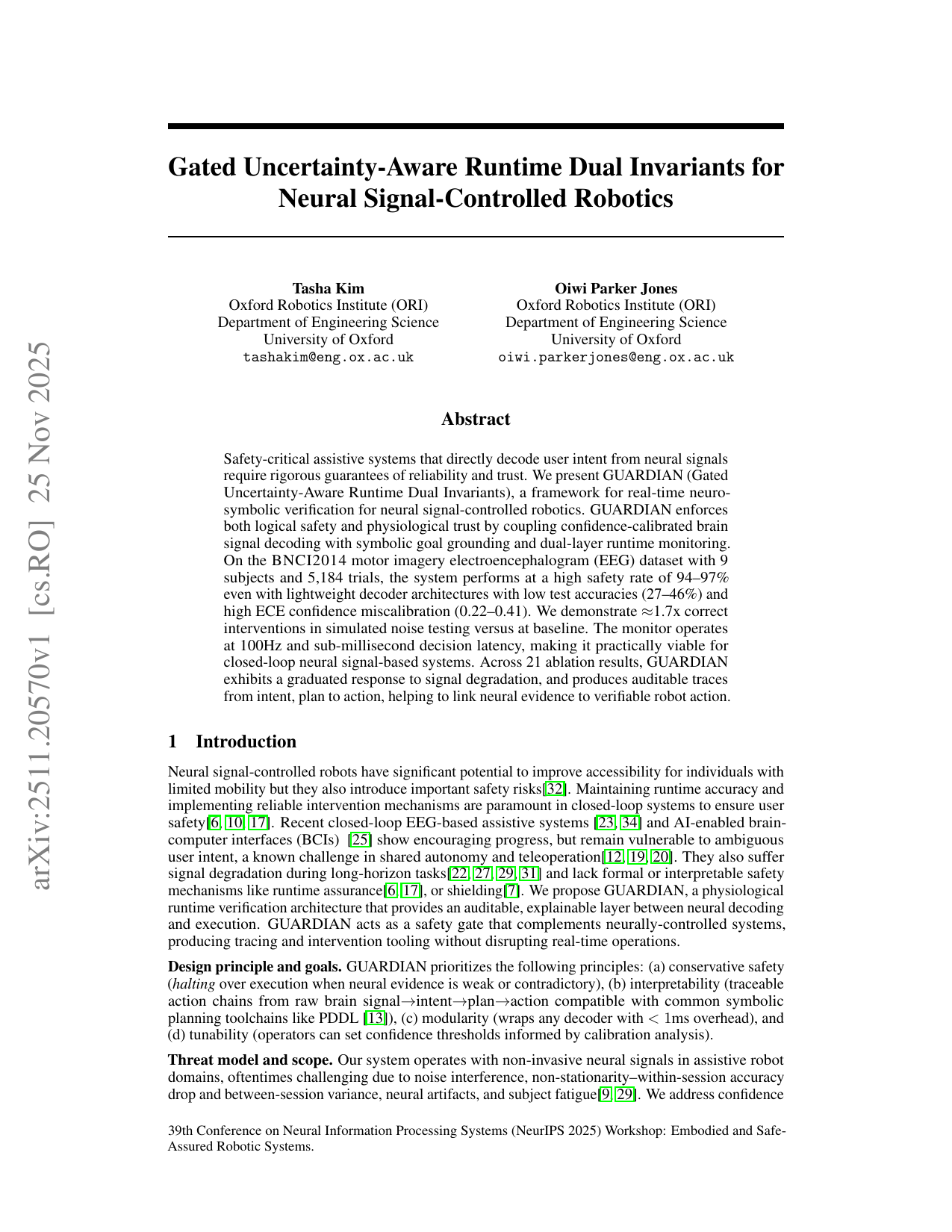

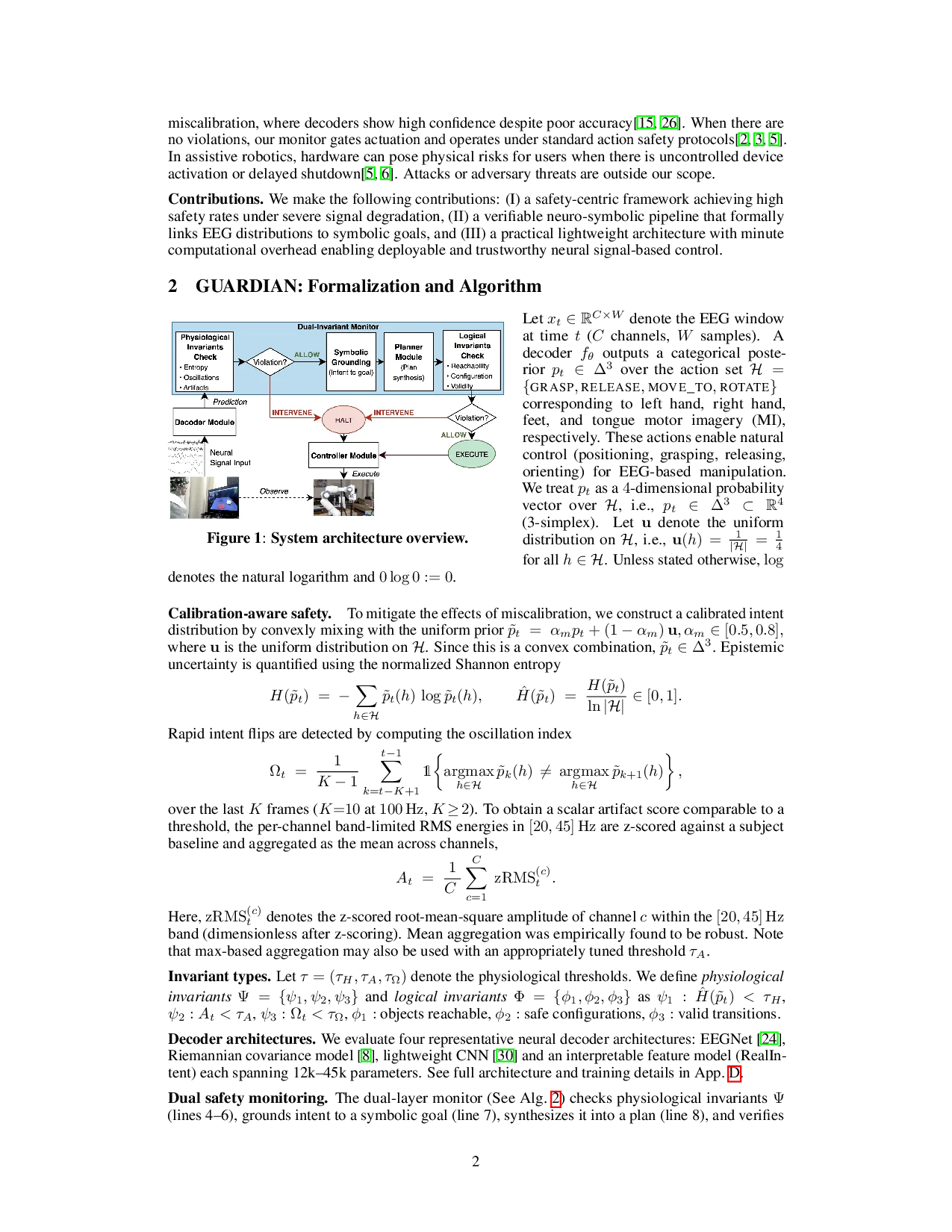

Design principles and goals. GUARDIAN prioritizes the following principles: (a) conservative safety (halting rather than executing when neural evidence is weak or contradictory), (b) interpretability (traceable action chains from raw brain signal → intent → plan → action, compatible with common symbolic planning toolchains such as PDDL [13]), (c) modularity (wrapping any decoder with <1 ms overhead), and (d) tunability (operators can set confidence thresholds informed by calibration analysis).
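Principles (a), (b), and (d) can be made concrete with a small gate. The sketch below is a minimal illustration under stated assumptions, not GUARDIAN's actual monitor: it assumes a single top-1 confidence floor plus a top-2 margin test, and the names `ConfidenceGate`, `TraceEntry`, `threshold`, and `min_margin` (and their default values) are hypothetical. It halts by default and logs every decision so the signal → intent → action chain can be audited.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Sequence, Tuple

# Action set H in MI-class order: left hand, right hand, feet, tongue (Section 2).
ACTIONS = ("GRASP", "RELEASE", "MOVE_TO", "ROTATE")


@dataclass
class TraceEntry:
    """One auditable record linking decoder evidence to the gate decision."""
    step: int
    posterior: Tuple[float, ...]
    intent: str        # most probable action under the posterior
    confidence: float  # probability of that action
    decision: str      # "EXECUTE" or "HALT"


@dataclass
class ConfidenceGate:
    """Hypothetical conservative gate (not the paper's implementation).

    Halts unless the top action is both confident and clearly ahead of the
    runner-up; every call is appended to an auditable trace.
    """
    threshold: float = 0.5   # illustrative operator-tunable confidence floor
    min_margin: float = 0.2  # illustrative: near-ties count as contradictory
    trace: List[TraceEntry] = field(default_factory=list)

    def decide(self, step: int, posterior: Sequence[float]) -> Optional[str]:
        if len(posterior) != len(ACTIONS):
            raise ValueError("posterior must have one entry per action")
        # Rank action indices by posterior probability, highest first.
        ranked = sorted(range(len(posterior)), key=lambda i: posterior[i], reverse=True)
        top, runner_up = ranked[0], ranked[1]
        confidence = posterior[top]
        margin = confidence - posterior[runner_up]
        execute = confidence >= self.threshold and margin >= self.min_margin
        self.trace.append(TraceEntry(step, tuple(posterior), ACTIONS[top],
                                     confidence, "EXECUTE" if execute else "HALT"))
        # Conservative default: return None (halt) rather than a weak action.
        return ACTIONS[top] if execute else None
```

For example, a posterior of [0.55, 0.40, 0.03, 0.02] clears the 0.5 floor but is halted because its margin (0.15) is below 0.2; the margin test is what lets a simple gate treat near-ties as contradictory evidence rather than as a weak vote for the top class.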

39th Conference on Neural Information Processing Systems (NeurIPS 2025) Workshop: Embodied and Safe-Assured Robotic Systems.

arXiv:2511.20570v1 [cs.RO] 25 Nov 2025

Threat model and scope. Our system operates with non-invasive neural signals in assistive robot domains, which are often challenging due to noise interference, non-stationarity (within-session accuracy drop and between-session variance), neural artifacts, and subject fatigue [9, 29]. We address confidence miscalibration, where decoders show high confidence despite poor accuracy [15, 26]. Our monitor gates actuation; when no violations are detected, the system operates under standard action safety protocols [2, 3, 5]. In assistive robotics, hardware can pose physical risks to users through uncontrolled device activation or delayed shutdown [5, 6]. Adversarial attacks are outside our scope.
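Confidence miscalibration is commonly quantified with the expected calibration error reported in the abstract, $\mathrm{ECE} = \sum_b \frac{|B_b|}{n}\,\lvert \mathrm{acc}(B_b) - \mathrm{conf}(B_b) \rvert$, computed over confidence bins $B_b$. The sketch below implements this standard equal-width-bin estimator in plain Python; the bin count of 10 and the function name are assumptions, since this excerpt does not state the binning the authors used.

```python
from typing import List, Sequence, Tuple


def expected_calibration_error(confidences: Sequence[float],
                               predictions: Sequence[int],
                               labels: Sequence[int],
                               n_bins: int = 10) -> float:
    """Equal-width-bin ECE: sample-weighted mean |accuracy - confidence| gap."""
    n = len(confidences)
    if n == 0 or not (n == len(predictions) == len(labels)):
        raise ValueError("inputs must be non-empty and of equal length")
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for c, p, y in zip(confidences, predictions, labels):
        # Map a confidence in [0, 1] to a bin index; c == 1.0 joins the last bin.
        idx = min(int(c * n_bins), n_bins - 1)
        bins[idx].append((c, p == y))
    ece = 0.0
    for b in bins:
        if not b:
            continue  # empty bins carry zero weight
        avg_conf = sum(c for c, _ in b) / len(b)
        acc = sum(1 for _, correct in b if correct) / len(b)
        ece += (len(b) / n) * abs(acc - avg_conf)
    return ece
```

An overconfident decoder that reports 0.9 on four trials but is right on only one has an ECE of about 0.65, whereas a decoder reporting 0.75 and right on three of four has an ECE of 0.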

Contributions. We make the following contributions: (I) a safety-centric framework that achieves high safety rates under severe signal degradation, (II) a verifiable neuro-symbolic pipeline that formally links EEG distributions to symbolic goals, and (III) a practical, lightweight architecture with minimal computational overhead that enables deployable and trustworthy neural signal-based control.

2 GUARDIAN: Formalization and Algorithm

Figure 1: System architecture overview.

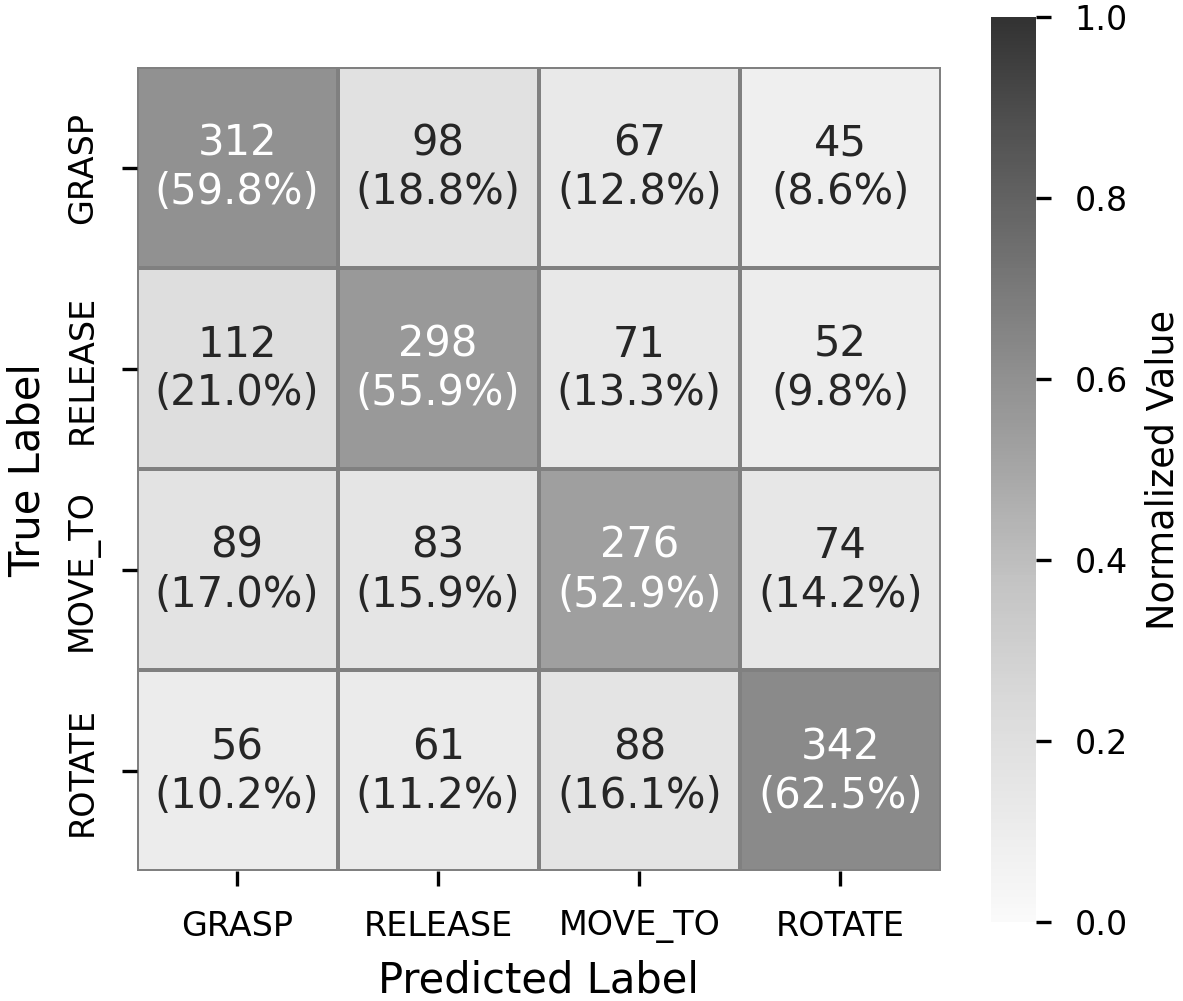

Let $x_t \in \mathbb{R}^{C \times W}$ denote the EEG window at time $t$ ($C$ channels, $W$ samples). A decoder $f_\theta$ outputs a categorical posterior $p_t \in \Delta^3$ over the action set $\mathcal{H} = \{\mathrm{GRASP}, \mathrm{RELEASE}, \mathrm{MOVE\_TO}, \mathrm{ROTATE}\}$, corresponding to left-hand, right-hand, feet, and tongue motor imagery (MI), respectively. These actions enable natural control (positioning, grasping, releasing, orienting) for EEG-based manipulation. We treat $p_t$ as a 4-dimensional probability vector over $\mathcal{H}$, i.e., $p_t \in \Delta^3 \subset \mathbb{R}^4$ (the 3-simplex). Let $u$ denote the uniform distribution on $\mathcal{H}$, i.e., $u(h) = \frac{1}{|\mathcal{H}|} = \frac{1}{4}$ for all

…(Full text truncated)…
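The excerpt is cut off before it states how the uniform reference $u$ enters the monitor. As a hedged illustration only, the sketch below maps hypothetical decoder logits to $p_t$ over $\mathcal{H}$ and computes $\mathrm{KL}(p_t \,\|\, u) = \log|\mathcal{H}|$ minus the Shannon entropy of $p_t$, one standard way to measure how far a posterior sits from "no evidence". Whether GUARDIAN uses this quantity is an assumption, and the helper names `posterior_over_actions` and `kl_to_uniform` are hypothetical.

```python
import math
from typing import Dict, List, Sequence

# Action set H in MI-class order: left hand, right hand, feet, tongue (Section 2).
H = ("GRASP", "RELEASE", "MOVE_TO", "ROTATE")


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax: maps decoder logits to a point on the 3-simplex."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def posterior_over_actions(logits: Sequence[float]) -> Dict[str, float]:
    """Hypothetical helper: label a 4-class decoder output with the actions in H."""
    if len(logits) != len(H):
        raise ValueError("expected one logit per action in H")
    return dict(zip(H, softmax(logits)))


def kl_to_uniform(p: Sequence[float]) -> float:
    """KL(p || u) with u(h) = 1/|H|.

    Equals log|H| minus the Shannon entropy of p: 0 for the uniform
    posterior (no evidence), log 4 for a one-hot posterior.
    """
    k = len(p)
    # Terms with p_i = 0 contribute 0 by the convention 0 log 0 = 0.
    return sum(pi * math.log(pi * k) for pi in p if pi > 0.0)
```

Because this divergence is bounded in $[0, \log 4]$ for four actions, it can be compared against a fixed operator threshold, which fits the tunability principle stated in the introduction.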
