GraphMind: A Dynamic Graph-Based Framework for Multi-Step Reasoning

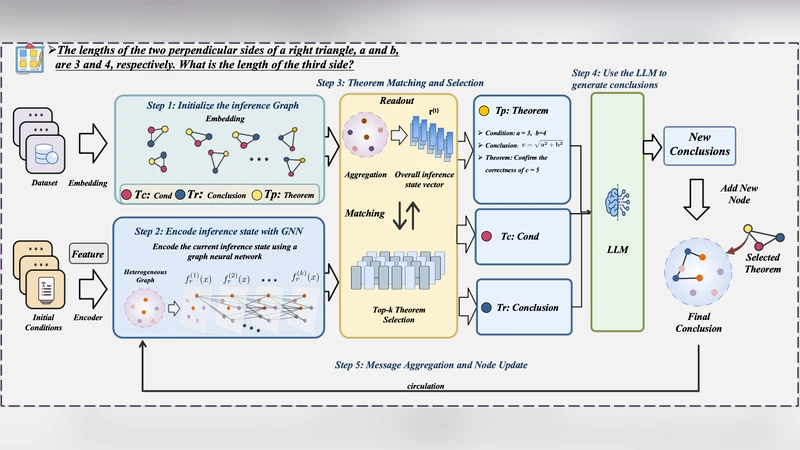

Large language models (LLMs) have demonstrated impressive capabilities in natural language understanding and generation, including multi-step reasoning such as mathematical proof. However, existing approaches often lack an explicit and dynamic mechanism to structurally represent and evolve intermediate reasoning states, which limits their ability to perform context-aware theorem selection and iterative conclusion generation. To address these challenges, we propose GraphMind, a novel dynamic graph-based framework that integrates a graph neural network (GNN) with LLMs to iteratively select theorems and generate intermediate conclusions for multi-step reasoning. Our method models the reasoning process as a heterogeneous evolving graph, where nodes represent conditions, theorems, and conclusions, while edges capture logical dependencies between nodes. By encoding the current reasoning state with a GNN and leveraging semantic matching for theorem selection, our framework enables context-aware, interpretable, and structured reasoning in a closed-loop manner. Experiments on various question-answering (QA) datasets demonstrate that our proposed GraphMind method achieves consistent performance improvements and significantly outperforms existing baselines in multi-step reasoning, validating the effectiveness and generalizability of our approach.

💡 Research Summary

The paper addresses a fundamental limitation of current large language model (LLM)‑based multi‑step reasoning systems: they lack an explicit, dynamic representation of intermediate reasoning states, which hampers context‑aware theorem selection and iterative conclusion generation. To overcome this, the authors introduce GraphMind, a novel framework that tightly couples a heterogeneous dynamic graph with a graph neural network (GNN) and an LLM in a closed‑loop architecture.

Core Idea and Graph Formalism

Reasoning is modeled as an evolving heterogeneous graph G = (V, E). Nodes belong to three types: (1) Condition nodes representing premises or given facts, (2) Theorem nodes representing mathematical theorems, lemmas, or logical rules, and (3) Conclusion nodes representing intermediate or final results. Edges encode logical dependencies such as “premise‑to‑theorem”, “theorem‑to‑conclusion”, and “contradiction”. At each reasoning step t, after the LLM generates a new conclusion Cₜ, a new node is added to V and appropriate edges are created, thereby evolving the graph structure over time.
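
The evolving graph described above can be sketched as a small data structure. This is a minimal illustration, not the authors' implementation: the class name `ReasoningGraph`, the method `apply_step`, and the edge-type strings are hypothetical names chosen here, and node text stands in for whatever representation the paper actually stores.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Node and edge vocabularies taken from the summary; the string spellings are assumptions.
NODE_TYPES = {"condition", "theorem", "conclusion"}
EDGE_TYPES = {"premise_to_theorem", "theorem_to_conclusion", "contradiction"}


@dataclass
class ReasoningGraph:
    # nodes[i] = (node_type, text); edges = (src_id, edge_type, dst_id)
    nodes: List[Tuple[str, str]] = field(default_factory=list)
    edges: List[Tuple[int, str, int]] = field(default_factory=list)

    def add_node(self, node_type: str, text: str) -> int:
        if node_type not in NODE_TYPES:
            raise ValueError(f"unknown node type: {node_type}")
        self.nodes.append((node_type, text))
        return len(self.nodes) - 1  # the new node's id

    def add_edge(self, src: int, edge_type: str, dst: int) -> None:
        if edge_type not in EDGE_TYPES:
            raise ValueError(f"unknown edge type: {edge_type}")
        if not (0 <= src < len(self.nodes) and 0 <= dst < len(self.nodes)):
            raise IndexError("edge endpoint does not exist")
        self.edges.append((src, edge_type, dst))

    def apply_step(self, premise_ids: List[int], theorem_id: int,
                   conclusion_text: str) -> int:
        """One reasoning step: link premises to the theorem, then add the conclusion."""
        for p in premise_ids:
            self.add_edge(p, "premise_to_theorem", theorem_id)
        c = self.add_node("conclusion", conclusion_text)
        self.add_edge(theorem_id, "theorem_to_conclusion", c)
        return c


# Usage: two premises, one theorem, one generated conclusion.
g = ReasoningGraph()
a = g.add_node("condition", "x is even")
b = g.add_node("condition", "y is even")
thm = g.add_node("theorem", "The sum of two even integers is even")
c1 = g.apply_step([a, b], thm, "x + y is even")
```

Each call to `apply_step` grows both V and E, which is the sense in which the graph evolves from one reasoning step to the next.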

Graph Encoding with GNN

A relational GNN (e.g., RGCN or a metapath‑based GNN) processes the heterogeneous graph. Node features combine textual embeddings (from a pretrained encoder) with type‑specific identifiers. The GNN aggregates information across edge types, producing a global graph embedding h_G that succinctly captures the current reasoning context, including which theorems have already been used and which premises remain relevant.
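
To make the encoding step concrete, the following pure-Python sketch runs one simplified relational message-passing layer and pools the result into a graph vector. Several simplifications are assumptions made here, not details from the paper: each relation gets a single scalar weight instead of the learned weight matrix an RGCN would use, and h_G is obtained by mean pooling, since the summary does not state the readout. The function names `relational_layer` and `graph_embedding` are hypothetical.

```python
from typing import Dict, List, Tuple

Vector = List[float]


def relational_layer(features: List[Vector],
                     edges: List[Tuple[int, str, int]],
                     rel_weight: Dict[str, float],
                     self_weight: float = 1.0) -> List[Vector]:
    """One simplified relational layer (scalar weight per relation, an assumption).

    Each node keeps its own scaled features and adds, for every relation,
    the weighted mean of its in-neighbours' features, followed by a ReLU.
    """
    dim = len(features[0])
    out = [[self_weight * x for x in f] for f in features]
    # Group incoming messages by (destination, relation) for mean normalisation.
    inbox: Dict[Tuple[int, str], List[int]] = {}
    for src, rel, dst in edges:
        inbox.setdefault((dst, rel), []).append(src)
    for (dst, rel), sources in inbox.items():
        scale = rel_weight[rel] / len(sources)
        for src in sources:
            for k in range(dim):
                out[dst][k] += scale * features[src][k]
    return [[max(0.0, x) for x in row] for row in out]


def graph_embedding(node_states: List[Vector]) -> Vector:
    """Mean-pool node states into one vector h_G (the pooling choice is an assumption)."""
    n = len(node_states)
    return [sum(col) / n for col in zip(*node_states)]


# Usage: two premise nodes feeding one theorem node.
features = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
edges = [(0, "premise_to_theorem", 2), (1, "premise_to_theorem", 2)]
states = relational_layer(features, edges, {"premise_to_theorem": 1.0})
h_G = graph_embedding(states)
```

In this toy example the theorem node starts with zero features and ends up with the average of its two premises, showing how context flows along typed edges before pooling.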

Theorem Selection Mechanism

Given a pre‑compiled repository of candidate theorems T, GraphMind computes for each candidate t ∈ T a composite score:

s(t) = α·cos(emb(t), h_G) + β·Σ_{v∈RelevantNodes} w_{type(v,t)}·sim(emb(v), emb(t))

The first term measures semantic similarity between the theorem’s textual description and the global graph state; the second term evaluates graph‑based logical compatibility by summing similarity scores over nodes that are directly connected to the theorem via specific edge types, weighted by learned parameters w. The theorem with the highest score is selected and fed to the LLM.
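
The composite score can be sketched directly from the formula. This is an illustrative reading under stated assumptions: `sim` is taken to be cosine similarity, `alpha` and `beta` default to 0.5 for the example only, and the helper names `theorem_score` and `select_theorem` are hypothetical. The summary does not specify these details.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]


def cosine(u: Vector, v: Vector) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return 0.0 if nu == 0.0 or nv == 0.0 else dot / (nu * nv)


def theorem_score(emb_t: Vector, h_G: Vector,
                  relevant: List[Tuple[str, Vector]],
                  type_weight: Dict[str, float],
                  alpha: float = 0.5, beta: float = 0.5) -> float:
    """s(t) = alpha * cos(emb(t), h_G) + beta * sum_v w_type * sim(emb(v), emb(t)).

    `relevant` lists (edge_type, node_embedding) pairs for nodes connected
    to the theorem; sim is assumed to be cosine similarity.
    """
    semantic = cosine(emb_t, h_G)
    structural = sum(type_weight[edge_type] * cosine(emb_v, emb_t)
                     for edge_type, emb_v in relevant)
    return alpha * semantic + beta * structural


def select_theorem(candidates: Dict[str, Tuple[Vector, List[Tuple[str, Vector]]]],
                   h_G: Vector, type_weight: Dict[str, float]) -> str:
    """Return the name of the highest-scoring candidate theorem."""
    return max(candidates,
               key=lambda name: theorem_score(candidates[name][0], h_G,
                                              candidates[name][1], type_weight))


# Usage with toy 2-d embeddings.
h_G = [1.0, 0.0]
weights = {"premise_to_theorem": 1.0}
candidates = {
    "thm_a": ([1.0, 0.0], [("premise_to_theorem", [1.0, 0.0])]),
    "thm_b": ([0.0, 1.0], [("premise_to_theorem", [1.0, 0.0])]),
}
best = select_theorem(candidates, h_G, weights)
```

Here `thm_a` aligns with both the graph state and its connected premise, so it wins on both terms, while `thm_b` is orthogonal to both and scores zero.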

LLM‑Driven Conclusion Generation

The selected theorem, together with the graph context h_G and the original question, is embedded into a prompt of the form:

“Given the current reasoning state: