Given just a few glimpses of a scene, can you imagine the movie playing out as the camera glides through it? That's the lens we take on \emph{sparse-input novel view synthesis}, not only as filling spatial gaps between widely spaced views, but also as \emph{completing a natural video} unfolding through space. We recast the task as \emph{test-time natural video completion}, using powerful priors from \emph{pretrained video diffusion models} to hallucinate plausible in-between views. Our \emph{zero-shot, generation-guided} framework produces pseudo views at novel camera poses, modulated by an \emph{uncertainty-aware mechanism} for spatial coherence. These synthesized frames densify supervision for \emph{3D Gaussian Splatting} (3D-GS) for scene reconstruction, especially in under-observed regions. An iterative feedback loop lets 3D geometry and 2D view synthesis inform each other, improving both the scene reconstruction and the generated views. The result is coherent, high-fidelity renderings from sparse inputs \emph{without any scene-specific training or fine-tuning}. On LLFF, DTU, DL3DV, and MipNeRF-360, our method significantly outperforms strong 3D-GS baselines under extreme sparsity.

Deep Dive into 희소 입력으로부터 자연스러운 비디오를 연상하는 3D 가우시안 스플래팅.

Given just a few glimpses of a scene, can you imagine the movie playing out as the camera glides through it? That’s the lens we take on \emph{sparse-input novel view synthesis}, not only as filling spatial gaps between widely spaced views, but also as \emph{completing a natural video} unfolding through space. We recast the task as \emph{test-time natural video completion}, using powerful priors from \emph{pretrained video diffusion models} to hallucinate plausible in-between views. Our \emph{zero-shot, generation-guided} framework produces pseudo views at novel camera poses, modulated by an \emph{uncertainty-aware mechanism} for spatial coherence. These synthesized frames densify supervision for \emph{3D Gaussian Splatting} (3D-GS) for scene reconstruction, especially in under-observed regions. An iterative feedback loop lets 3D geometry and 2D view synthesis inform each other, improving both the scene reconstruction and the generated views. The result is coherent, high-fidelity re

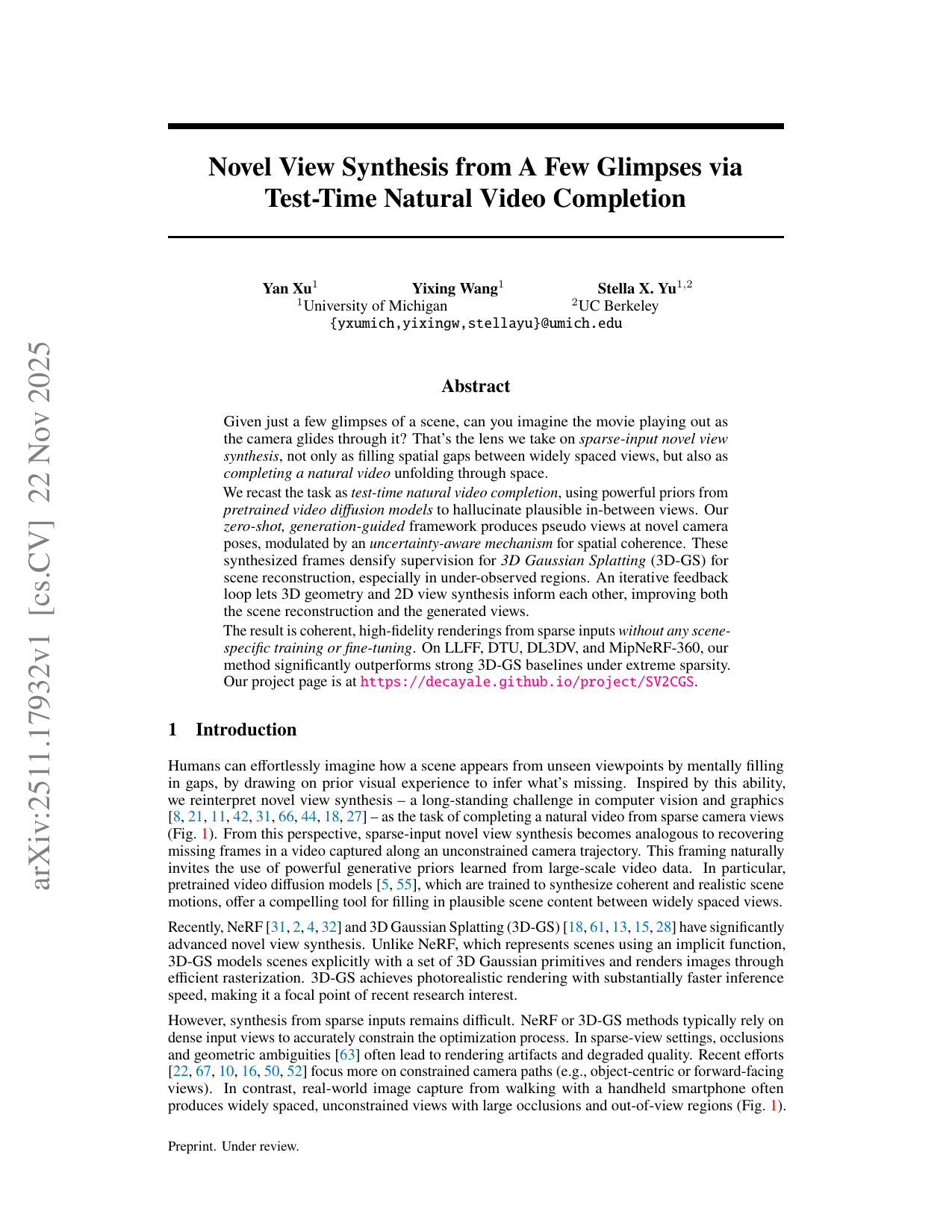

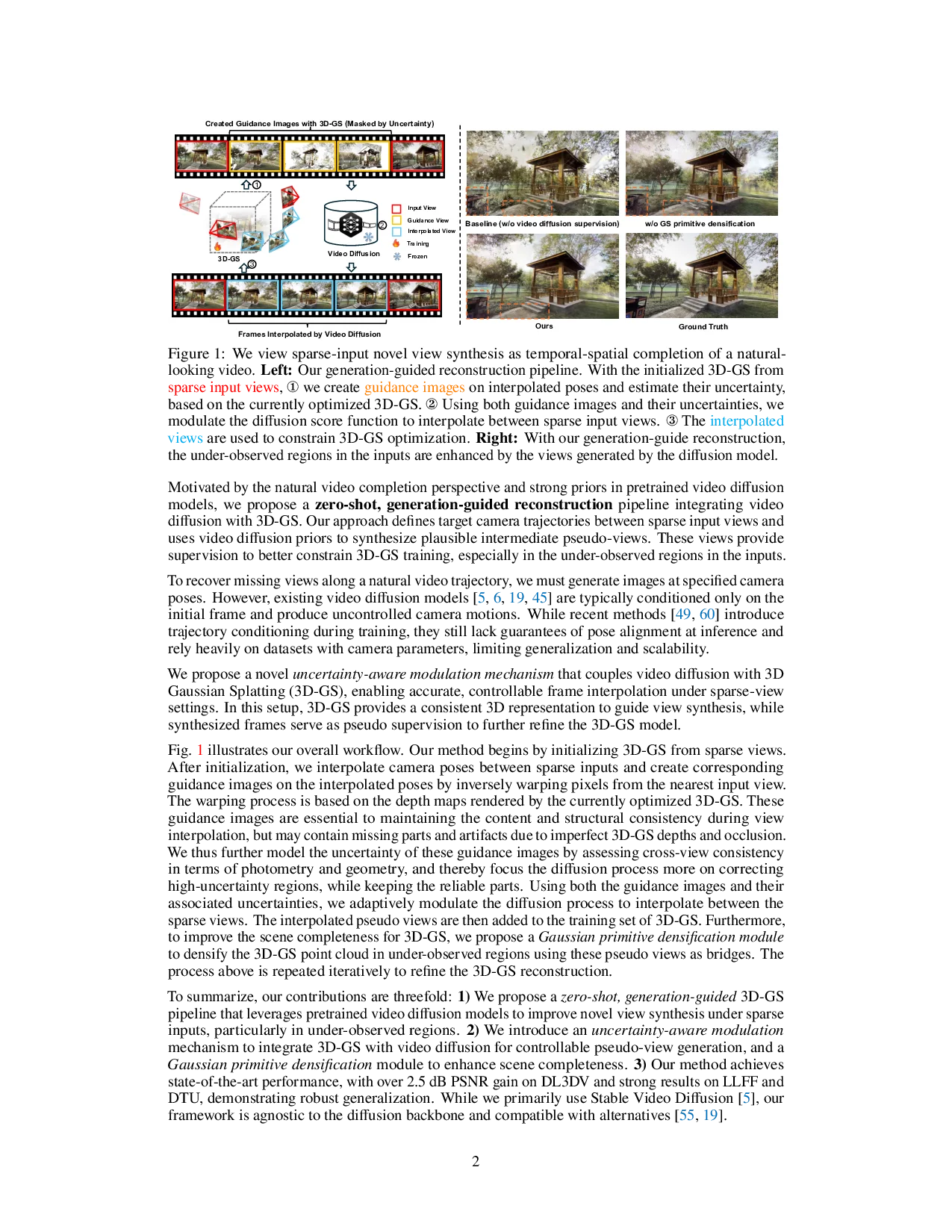

Humans can effortlessly imagine how a scene appears from unseen viewpoints by mentally filling in gaps, by drawing on prior visual experience to infer what's missing. Inspired by this ability, we reinterpret novel view synthesis -a long-standing challenge in computer vision and graphics [8,21,11,42,31,66,44,18,27] -as the task of completing a natural video from sparse camera views (Fig. 1). From this perspective, sparse-input novel view synthesis becomes analogous to recovering missing frames in a video captured along an unconstrained camera trajectory. This framing naturally invites the use of powerful generative priors learned from large-scale video data. In particular, pretrained video diffusion models [5,55], which are trained to synthesize coherent and realistic scene motions, offer a compelling tool for filling in plausible scene content between widely spaced views. Motivated by the natural video completion perspective and strong priors in pretrained video diffusion models, we propose a zero-shot, generation-guided reconstruction pipeline integrating video diffusion with 3D-GS. Our approach defines target camera trajectories between sparse input views and uses video diffusion priors to synthesize plausible intermediate pseudo-views. These views provide supervision to better constrain 3D-GS training, especially in the under-observed regions in the inputs.

To recover missing views along a natural video trajectory, we must generate images at specified camera poses. However, existing video diffusion models [5,6,19,45] are typically conditioned only on the initial frame and produce uncontrolled camera motions. While recent methods [49,60] introduce trajectory conditioning during training, they still lack guarantees of pose alignment at inference and rely heavily on datasets with camera parameters, limiting generalization and scalability.

We propose a novel uncertainty-aware modulation mechanism that couples video diffusion with 3D Gaussian Splatting (3D-GS), enabling accurate, controllable frame interpolation under sparse-view settings. In this setup, 3D-GS provides a consistent 3D representation to guide view synthesis, while synthesized frames serve as pseudo supervision to further refine the 3D-GS model.

Fig. 1 illustrates our overall workflow. Our method begins by initializing 3D-GS from sparse views. After initialization, we interpolate camera poses between sparse inputs and create corresponding guidance images on the interpolated poses by inversely warping pixels from the nearest input view. The warping process is based on the depth maps rendered by the currently optimized 3D-GS. These guidance images are essential to maintaining the content and structural consistency during view interpolation, but may contain missing parts and artifacts due to imperfect 3D-GS depths and occlusion. We thus further model the uncertainty of these guidance images by assessing cross-view consistency in terms of photometry and geometry, and thereby focus the diffusion process more on correcting high-uncertainty regions, while keeping the reliable parts. Using both the guidance images and their associated uncertainties, we adaptively modulate the diffusion process to interpolate between the sparse views. The interpolated pseudo views are then added to the training set of 3D-GS. Furthermore, to improve the scene completeness for 3D-GS, we propose a Gaussian primitive densification module to densify the 3D-GS point cloud in under-observed regions using these pseudo views as bridges. The process above is repeated iteratively to refine the 3D-GS reconstruction.

To summarize, our contributions are threefold: 1) We propose a zero-shot, generation-guided 3D-GS pipeline that leverages pretrained video diffusion models to improve novel view synthesis under sparse inputs, particularly in under-observed regions. 2) We introduce an uncertainty-aware modulation mechanism to integrate 3D-GS with video diffusion for controllable pseudo-view generation, and a Gaussian primitive densification module to enhance scene completeness. 3) Our method achieves state-of-the-art performance, with over 2.5 dB PSNR gain on DL3DV and strong results on LLFF and DTU, demonstrating robust generalization. While we primarily use Stable Video Diffusion [5], our framework is agnostic to the diffusion backbone and compatible with alternatives [55,19].

Sparse-input Novel View Synthesis. Sparse-input novel view synthesis aims to reconstruct a representation for generating novel views of a scene using a few input images. Although existing training-based methods, i.e. NeRF [31] and 3DGS [18], work well with dense inputs, their performance drops significantly with sparse views due to overfitting [37,46,33,12,39]. Several recent works explore robust novel view synthesis under sparse inputs. One group [7,33,16,43,40,20,67,56] focuses on imposing additional regularization on views deviating from the training views. For example, GeoAug [7] randomly samples novel v

…(Full text truncated)…

This content is AI-processed based on ArXiv data.