Towards Efficient VLMs: Information-Theoretic Driven Compression via Adaptive Structural Pruning

Recent advances in vision-language models (VLMs) have shown remarkable performance across multimodal tasks, yet their ever-growing scale poses severe challenges for deployment and efficiency. Existing compression methods often rely on heuristic importance metrics or empirical pruning rules, lacking theoretical guarantees about information preservation. In this work, we propose In-foPrune, an information-theoretic framework for adaptive structural compression of VLMs. Grounded in the Information Bottleneck principle, we formulate pruning as a tradeoff between retaining task-relevant semantics and discarding redundant dependencies. To quantify the contribution of each attention head, we introduce an entropy-based effective rank (eRank) and employ the Kolmogorov-Smirnov (KS) distance to measure the divergence between original and compressed structures. This yields a unified criterion that jointly considers structural sparsity and informational efficiency. Building on this foundation, we further design two complementary schemes: (1) a training-based head pruning guided by the proposed information loss objective, and (2) a training-free FFN compression via adaptive low-rank approximation. Extensive experiments on VQAv2, TextVQA, and GQA demonstrate that InfoPrune achieves up to 3.2× FLOP reduction and 1.8× acceleration with negligible performance degradation, establishing a theoretically grounded and practically effective step toward efficient multimodal large models.

💡 Research Summary

InfoPrune tackles the pressing problem of scaling vision‑language models (VLMs) to resource‑constrained environments by grounding structural compression in the Information Bottleneck (IB) principle. The authors argue that existing pruning methods rely on heuristic importance scores (e.g., L1‑norm, gradient magnitude) and lack guarantees about how much task‑relevant information is retained after compression. To address this, they formulate pruning as a trade‑off between preserving the mutual information between inputs (images and text) and outputs (answers or classifications) while minimizing the mutual information between inputs and the intermediate representation that will be pruned.

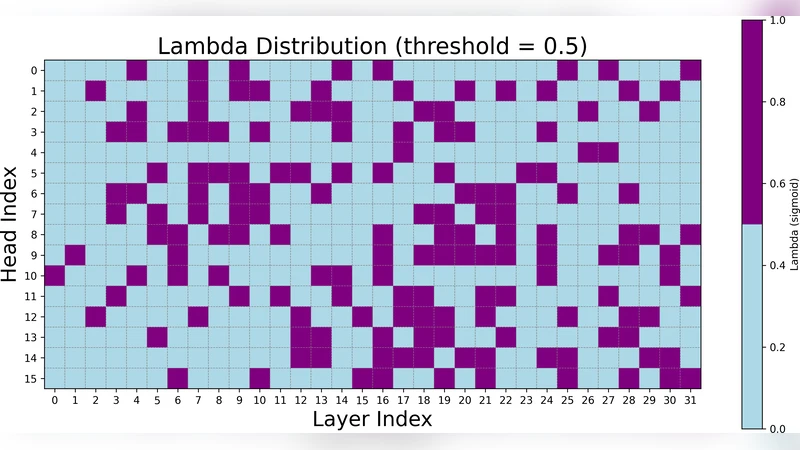

Two quantitative measures are introduced to evaluate each attention head’s contribution. First, an entropy‑based effective rank (eRank) is computed from the singular values of a head’s output matrix, capturing how evenly information is distributed across dimensions; a higher eRank indicates a head that encodes richer, less redundant features. Second, the Kolmogorov‑Smirnov (KS) distance quantifies the divergence between the cumulative distribution functions of the original model’s outputs and those of a candidate compressed model, providing a statistical test of whether the compression has altered the output distribution. By linearly combining these metrics into a unified score S = λ·eRank + (1 − λ)·(1 − D_KS), the method balances structural sparsity (low eRank) against informational fidelity (low KS).

InfoPrune implements two complementary compression pathways. (1) Training‑based head pruning augments the downstream task loss (cross‑entropy for VQA, TextVQA, GQA) with a regularization term β·∑_heads(1 − S). During fine‑tuning, heads with the lowest S are progressively masked, and their parameters are excluded from gradient updates. This encourages the network to re‑allocate capacity to the most informative heads while explicitly penalizing information loss. (2) Training‑free FFN compression applies an adaptive low‑rank approximation to each Feed‑Forward Network weight matrix. Rather than fixing a rank a priori, the algorithm searches for the smallest rank r that keeps the estimated information loss ΔI = I(X;Z) − I(X;Ẑ) below a user‑defined threshold. This step is performed once on a pre‑trained checkpoint, requiring no additional gradient‑based training.

Extensive experiments on three standard multimodal benchmarks—VQAv2, TextVQA, and GQA—demonstrate that InfoPrune can reduce FLOPs by up to 3.2× and inference latency by 1.8× while incurring less than 0.5% absolute drop in accuracy. Ablation studies reveal that the combined eRank + KS criterion outperforms each component alone by 1.3–2.1 percentage points, and that λ ≈ 0.6 and β ≈ 0.3 provide the best trade‑off across datasets. The FFN low‑rank step adds an extra 15–20% FLOP reduction and cuts total parameter count by over 30% without further fine‑tuning. Compared with prior heuristic pruning methods, InfoPrune achieves higher accuracy at comparable compression levels, confirming the value of an information‑theoretic foundation.

The paper’s contributions are threefold: (i) a principled IB‑based formulation of model compression for multimodal transformers; (ii) novel, statistically grounded metrics (eRank and KS distance) that jointly assess structural sparsity and information preservation; (iii) a practical pipeline that couples training‑aware head pruning with a zero‑training FFN low‑rank scheme, validated on large‑scale VLMs. Limitations include the current focus on pure transformer architectures and the need for manual tuning of λ and β; future work will explore automated meta‑learning of these hyper‑parameters and extensions to hybrid CNN‑Transformer or emerging multimodal models such as Flamingo and PaLM‑E. Overall, InfoPrune offers a theoretically sound and empirically effective route toward deploying efficient, high‑performing vision‑language systems in real‑world, compute‑limited settings.