Heterogeneous graphs are widely present in real-world complex networks, where the diversity of node and relation types leads to complex and rich semantics. Efforts to model complex relation semantics in heterogeneous graphs are restricted by the limitations of predefined semantic dependencies and the scarcity of supervised signals. The advanced pre-training and fine-tuning paradigm leverages graph structure to provide rich self-supervised signals, but introduces semantic gaps between tasks. Large Language Models (LLMs) offer significant potential to address the semantic issues of relations and tasks in heterogeneous graphs through their strong reasoning capabilities in the textual modality, but their incorporation into heterogeneous graphs is largely limited by computational complexity. Therefore, in this paper, we propose an Efficient LLM-Aware (ELLA) framework for heterogeneous graphs that addresses the above issues. To capture complex relation semantics, we propose an LLM-aware Relation Tokenizer that leverages an LLM to encode multi-hop, multi-type relations. To reduce computational complexity, we further employ a Hop-level Relation Graph Transformer, which reduces the complexity of LLM-aware relation reasoning from exponential to linear. To bridge semantic gaps between pre-training and fine-tuning tasks, we introduce fine-grained task-aware textual Chain-of-Thought (CoT) prompts. Extensive experiments on four heterogeneous graphs show that our proposed ELLA outperforms state-of-the-art methods in both performance and efficiency. In particular, ELLA scales up to 13B-parameter LLMs and achieves up to a 4× speedup compared with existing LLM-based methods. Our code is publicly available at https://github.com/l-wd/ELLA.

Deep Dive into Towards Efficient LLM-aware Heterogeneous Graph Learning.

Heterogeneous graphs contain multiple types of nodes and relations, providing rich and complex semantics. Heterogeneous graph learning is designed to capture these complex semantic relations, such as citation relations among papers and authorship relations between papers and authors.
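
To make the notion of typed nodes and relations concrete, the following is a minimal sketch of a toy academic graph. The node ids, the relation names `cites` and `writes`, and the helper `neighbors_by_relation` are all hypothetical and chosen only for illustration; they are not taken from the paper's datasets or code.

```python
from collections import defaultdict

# Hypothetical toy graph: every node id maps to exactly one node type.
node_type = {"p1": "paper", "p2": "paper", "a1": "author", "a2": "author"}

# Typed edges stored as (source, relation, target) triples.
edges = [
    ("p1", "cites", "p2"),
    ("a1", "writes", "p1"),
    ("a2", "writes", "p1"),
    ("a2", "writes", "p2"),
]


def neighbors_by_relation(edge_list):
    """Group each node's outgoing neighbors by relation type."""
    adj = defaultdict(lambda: defaultdict(list))
    for src, rel, dst in edge_list:
        adj[src][rel].append(dst)
    return adj


adj = neighbors_by_relation(edges)

# The relation schema lists which node types each relation connects.
relation_schema = sorted({(node_type[s], r, node_type[d]) for s, r, d in edges})
print(relation_schema)
```

Keeping the relation label on every edge is what distinguishes this structure from a homogeneous adjacency list: the same pair of node types could in principle be linked by several relations with different meanings.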

In response to the unique characteristics of heterogeneous graphs, a variety of Heterogeneous Graph Neural Networks (HGNNs) have been developed. Mainstream methods include relation-based [7]-[10], meta-path-based [11]-[14], and token-based [15]-[18] approaches. Relation-based methods adopt relation-specific aggregation, meta-path-based methods encode handcrafted semantic paths, and token-based methods represent heterogeneous elements as tokens with attention-based aggregation. Collectively, these methods attempt to model the complex relation semantics of the textual modality from different perspectives, but they are constrained by predefined semantic dependencies, including meta-paths and inherent heterogeneous relations. Moreover, they generally require extensive labeled data to effectively learn complex relation semantics, and face severe performance degradation when supervised signals are scarce.
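
As a rough illustration of relation-specific aggregation, the sketch below mean-pools neighbor features separately for each relation type. It is a simplification under stated assumptions: the feature vectors, the relation names, and the function `relation_aggregate` are hypothetical, and the learned relation-specific weight matrices that real relation-based HGNNs apply are omitted.

```python
def mean(vectors):
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def relation_aggregate(node, typed_neighbors, features):
    """Return one mean-pooled vector per relation type for `node`."""
    pooled = {}
    for rel, nbrs in typed_neighbors.get(node, {}).items():
        if nbrs:  # skip relations with no neighbors
            pooled[rel] = mean([features[n] for n in nbrs])
    return pooled


# Hypothetical 2-dimensional features for a toy graph.
features = {
    "p1": [1.0, 0.0],
    "p2": [0.0, 1.0],
    "a1": [2.0, 2.0],
    "a2": [4.0, 0.0],
}
typed_neighbors = {"p1": {"cites": ["p2"], "written_by": ["a1", "a2"]}}

agg = relation_aggregate("p1", typed_neighbors, features)
print(agg)
```

Because each relation is pooled on its own, the set of relations must be fixed in advance, which is one form of the predefined semantic dependency discussed above.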

The pre-training and fine-tuning paradigm [19] has become a dominant strategy to alleviate the scarcity of supervised signals by leveraging self-supervised signals derived from inherent graph structures [14], [20], [21], but it leads to a substantial gap between pre-training and fine-tuning tasks. Inspired by the success of language prompts in the textual modality, graph prompt learning [19], [22], [23] attempts to manipulate downstream data by inserting an additional learnable prompt module, and reformulates fine-tuning tasks as pre-training tasks to bridge this gap. These prompt modules typically introduce learnable parameters, either as additional node embeddings or task-specific subgraphs, and insert them into the entire graph based on heuristic rules or similarity measures. However, they mostly operate indiscriminately on the whole graph, lacking fine-grained prompting for each node and its relation semantics.

Large Language Models (LLMs) [24], [25] are particularly promising for capturing complex relation semantics and bridging semantic gaps between tasks, owing to their powerful semantic reasoning and understanding capabilities in the textual modality. Advanced studies have explored incorporating LLMs into graph learning to enhance the expressiveness and inference capability of graph-based models. A common strategy is to fine-tune LLMs [26], [27] to better capture graph structures or to enhance the representational power of graph neural networks (GNNs) through specialized architectures [28], [29]. However, the significant computational cost of fine-tuning makes it difficult for these methods to scale even to modestly sized LLMs. In contrast, prompt-based approaches [28]-[31] leverage the reasoning capabilities of LLMs only to capture node semantics, rather than complex relation semantics in heterogeneous graphs, because the exponentially increasing computational complexity caused by neighbor explosion makes such relation modeling difficult. Therefore, three fundamental challenges remain unsolved for heterogeneous graph learning. First, can we effectively harness the reasoning capabilities of LLMs to capture complex relation semantics in heterogeneous graphs? Second, can we reduce the computational complexity of LLM-based relation reasoning? Third, can we characterize fine-grained task semantics to seamlessly bridge the semantic gap between pre-training and fine-tuning stages?
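
The neighbor explosion mentioned above can be illustrated with simple arithmetic. The sketch below assumes, purely for illustration, that every node has the same number of neighbors (`avg_degree`, a hypothetical parameter) and counts the walks of length 1 to `hops` that start at one node. Under this assumption the count grows geometrically with the number of hops, which is why reasoning over every multi-hop relation path individually becomes expensive.

```python
def paths_within(avg_degree, hops):
    """Number of walks of length 1..hops from one node in a graph
    where every node has exactly `avg_degree` neighbors."""
    return sum(avg_degree ** k for k in range(1, hops + 1))


# Growth of the walk count with hop depth for a hypothetical degree of 10.
for k in range(1, 5):
    print(k, paths_within(10, k))
```

Real graphs have skewed degree distributions and repeated nodes, so this is only an order-of-magnitude picture, not a measurement of any specific dataset.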

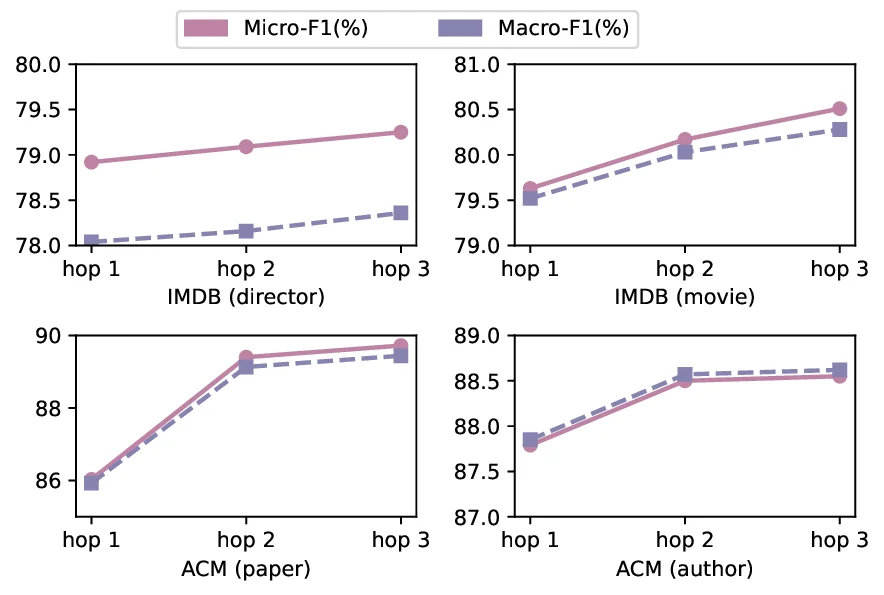

To tackle the above challenges, we propose an Efficient LLM-Aware framework (ELLA) for heterogeneous graph learning. First, we design an LLM-aware Relation Tokenizer to capture complex multi-hop, multi-type relation semantics in heterogeneous graphs. Second, the Hop-level Relation Graph Transformer performs hop-wise aggregation of multi-type hidden states derived from LLM-aware relation reasoning, reducing the reasoning complexity from exponential to linear while avoiding semantic confusion. Finally, to bridge the semantic gap between pre-training and fine-tuning tasks, we introduce fine-grained task-aware textual prompts for each node based on Chain-of-Thought (CoT) reasoning, providing prompts within relation semantics in both stages. Extensive experiments on multiple heterogeneous graph benchmarks demonstrate that ELLA, as a prompting-based framework, achieves superior effectiveness and efficiency compared to state-of-the-art methods, with an average performance improvement of 3.79%. In terms of efficiency, compared with existing LLM-based methods, ELLA scales up to 13B-parameter LLMs and achieves up to a 4× speedup.
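
The general idea of hop-wise aggregation can be sketched as follows. This is not ELLA's implementation: it uses plain feature vectors as a stand-in for LLM hidden states, mean pooling in place of a transformer, and a hypothetical helper `hop_type_tokens`. What it does show is the counting argument: grouping nodes by (hop, node type) yields at most hops × types tokens, a number that grows linearly with hop depth.

```python
from collections import defaultdict


def hop_type_tokens(start, adj, node_type, features, max_hops):
    """Mean-pool features per (hop, node type) around `start`.

    Each node is assigned to the hop at which breadth-first search
    first reaches it, so it contributes to exactly one token.
    """
    visited = {start}
    frontier = [start]
    tokens = {}
    for hop in range(1, max_hops + 1):
        next_frontier = []
        for u in frontier:
            for v in adj.get(u, []):
                if v not in visited:
                    visited.add(v)
                    next_frontier.append(v)
        groups = defaultdict(list)
        for v in next_frontier:
            groups[node_type[v]].append(features[v])
        for t, vecs in groups.items():
            tokens[(hop, t)] = [sum(c) / len(vecs) for c in zip(*vecs)]
        frontier = next_frontier
    return tokens


# Hypothetical toy graph with 1-dimensional features.
adj = {
    "p1": ["a1", "a2", "p2"],
    "a1": ["p1"],
    "a2": ["p1", "p3"],
    "p2": ["p1", "a3"],
    "p3": ["a2"],
    "a3": ["p2"],
}
node_type = {"p1": "paper", "p2": "paper", "p3": "paper",
             "a1": "author", "a2": "author", "a3": "author"}
features = {"p1": [0.0], "a1": [1.0], "a2": [3.0],
            "p2": [5.0], "p3": [7.0], "a3": [9.0]}

tokens = hop_type_tokens("p1", adj, node_type, features, max_hops=2)
print(tokens)
```

Here two hops and two node types produce four tokens regardless of how many individual paths pass through the neighborhood, which is the contrast with path-by-path reasoning that the paragraph above draws.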

Overall, our contributions are summarized as follows:

• We identify three critical challenges in applying LLMs to heterogeneous graph learning: the semantic complexity of relations and t

…(Full text truncated)…

This content is AI-processed based on ArXiv data.