Efficient Feature Aggregation and Scale-Aware Regression for Monocular 3D Object Detection

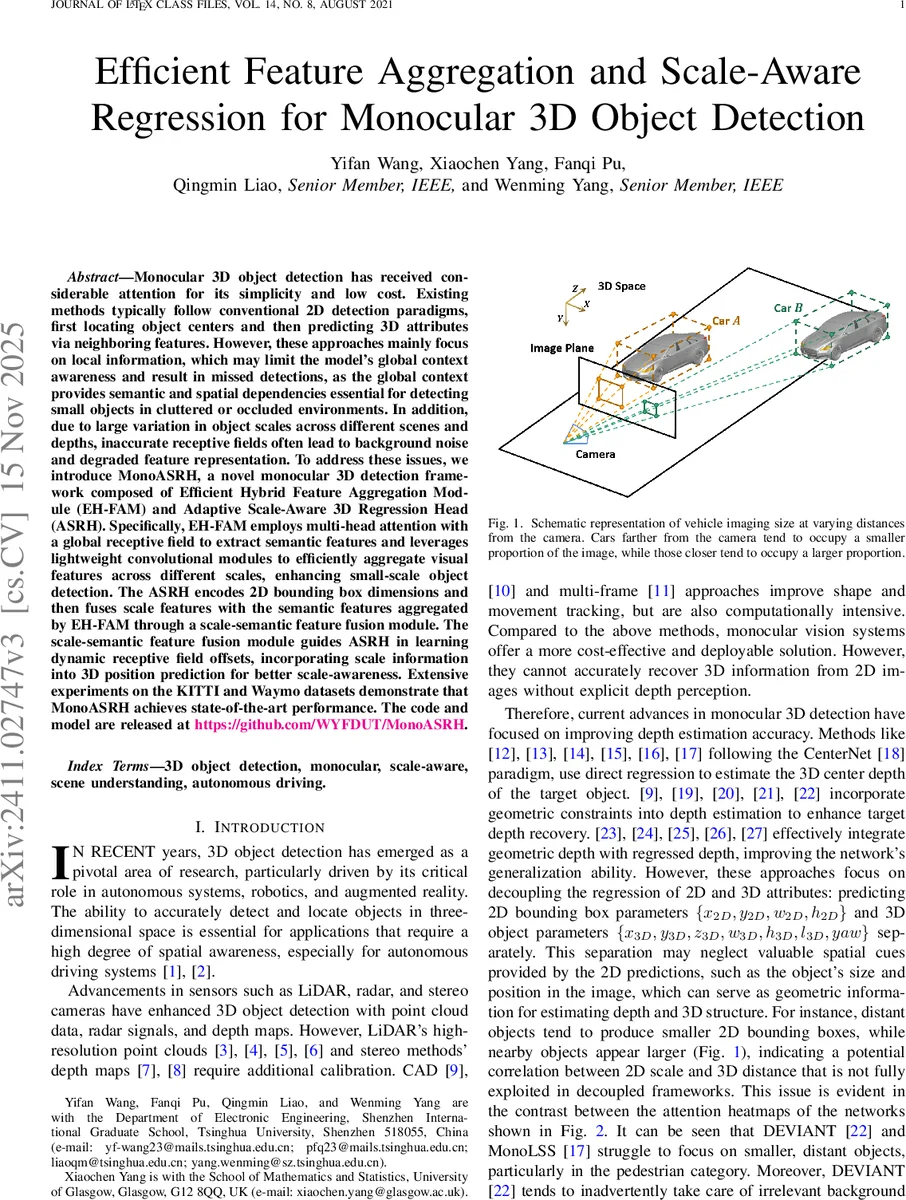

Monocular 3D object detection has attracted great attention due to simplicity and low cost. Existing methods typically follow conventional 2D detection paradigms, first locating object centers and then predicting 3D attributes via neighboring features. However, these methods predominantly rely on progressive cross-scale feature aggregation and focus solely on local information, which may result in a lack of global awareness and the omission of small-scale objects. In addition, due to large variation in object scales across different scenes and depths, inaccurate receptive fields often lead to background noise and degraded feature representation. To address these issues, we introduces MonoASRH, a novel monocular 3D detection framework composed of Efficient Hybrid Feature Aggregation Module (EH-FAM) and Adaptive Scale-Aware 3D Regression Head (ASRH). Specifically, EH-FAM employs multi-head attention with a global receptive field to extract semantic features for small-scale objects and leverages lightweight convolutional modules to efficiently aggregate visual features across different scales. The ASRH encodes 2D bounding box dimensions and then fuses scale features with the semantic features aggregated by EH-FAM through a scale-semantic feature fusion module. The scale-semantic feature fusion module guides ASRH in learning dynamic receptive field offsets, incorporating scale priors into 3D position prediction for better scale-awareness. Extensive experiments on the KITTI and Waymo datasets demonstrate that MonoASRH achieves state-of-the-art performance.

💡 Research Summary

Monocular 3D object detection offers a low‑cost alternative to LiDAR‑based systems, but existing approaches suffer from two major drawbacks: (1) they rely heavily on local features and lack global context, which leads to missed detections of small or distant objects, and (2) they use a fixed receptive field that does not adapt to the large scale variations caused by depth changes, resulting in background noise contaminating the feature representation.

To address these issues, the authors propose MonoASRH, a novel monocular 3D detection framework composed of two key components: the Efficient Hybrid Feature Aggregation Module (EH‑FAM) and the Adaptive Scale‑Aware 3D Regression Head (ASRH).

EH‑FAM fuses the strengths of Vision Transformers and lightweight CNNs. It applies an 8‑head multi‑head self‑attention block only to the highest‑level feature map (S₄, 1/32 resolution) to capture long‑range dependencies and global semantics. Positional embeddings are added before linear projections to Q, K, V, and the standard scaled‑dot‑product attention is computed, followed by a feed‑forward network and reshaping back to spatial dimensions. For cross‑scale aggregation, the module replaces the heavy transposed convolutions of DLA‑Up with a 7×7 convolution (decomposed into 1×7 and 7×1 for efficiency) and bilinear upsampling, which preserves fine details crucial for small objects. A Fusion Block based on RepVGGplus further refines the fused features; during training it uses a multi‑branch architecture (3×3, 1×1, identity) and is re‑parameterized into a single 3×3 convolution for fast inference. This design dramatically reduces parameters and FLOPs while retaining strong representational power.

ASRH leverages the 2D bounding‑box dimensions (width and height) predicted by a CenterNet‑style 2D head. These dimensions are encoded into a scale feature vector via a small MLP. Simultaneously, RoI‑Align extracts local semantic features from the aggregated feature map for each detected region. The scale‑semantic fusion module concatenates the scale vector with the semantic feature and passes them through a 1×1 convolution with Mish activation, producing a fused representation that carries both global context and explicit scale priors. This fused feature drives a dynamic receptive‑field offset predictor that generates offsets for deformable convolutions. By adjusting the receptive field according to object scale, the network can focus on a narrow region for distant, tiny objects and a broader region for nearby, large objects, thereby mitigating scale‑related bias. Additionally, a spatial‑variance‑based attention map (depth attention) suppresses irrelevant background information, and a Selective Confidence‑Guided Heatmap Loss emphasizes high‑confidence detections while down‑weighting hard negatives, improving training stability.

The overall pipeline consists of a DLA‑34 backbone that extracts multi‑scale features {S₁,…,S₄}, the EH‑FAM that produces a unified feature tensor F, a 2D detection head (heatmap, offset, size) for object centers, and the ASRH that outputs 3D box dimensions, 3D center offsets, yaw angle, depth map, depth uncertainty, and a depth attention map.

Experiments on the KITTI 3D detection benchmark and the Waymo Open Dataset demonstrate that MonoASRH outperforms previous state‑of‑the‑art methods such as DEVIANT, MonoLSS, and MonoDETR. Notably, it achieves higher AP for small and distant objects, confirming the benefit of global attention and scale‑aware receptive field adaptation. Ablation studies show that removing the self‑attention block reduces mAP by 2.1 %, and disabling the scale‑aware offset mechanism leads to a pronounced drop in performance on far‑range objects. The model also achieves a ~30 % reduction in parameters and FLOPs compared to DLA‑Up‑based baselines, enabling real‑time inference (>30 FPS) on a single GPU.

Contributions are threefold: (1) a plug‑and‑play EH‑FAM that efficiently aggregates multi‑scale features with global context, (2) an ASRH that encodes 2D scale cues to generate dynamic receptive‑field offsets for scale‑aware 3D regression, and (3) a selective confidence‑guided loss that stabilizes training. The authors release code and pretrained models, facilitating reproducibility and further research.

In summary, MonoASRH presents a compelling solution to the long‑standing challenges of monocular 3D detection by unifying transformer‑style global reasoning with lightweight CNN efficiency and by explicitly incorporating scale information into the regression head. This advances the feasibility of deploying accurate, real‑time 3D perception systems using only a single camera, a critical step toward cost‑effective autonomous driving and robotics applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment