InstantRetouch: Personalized Image Retouching without Test-time Fine-tuning Using an Asymmetric Auto-Encoder

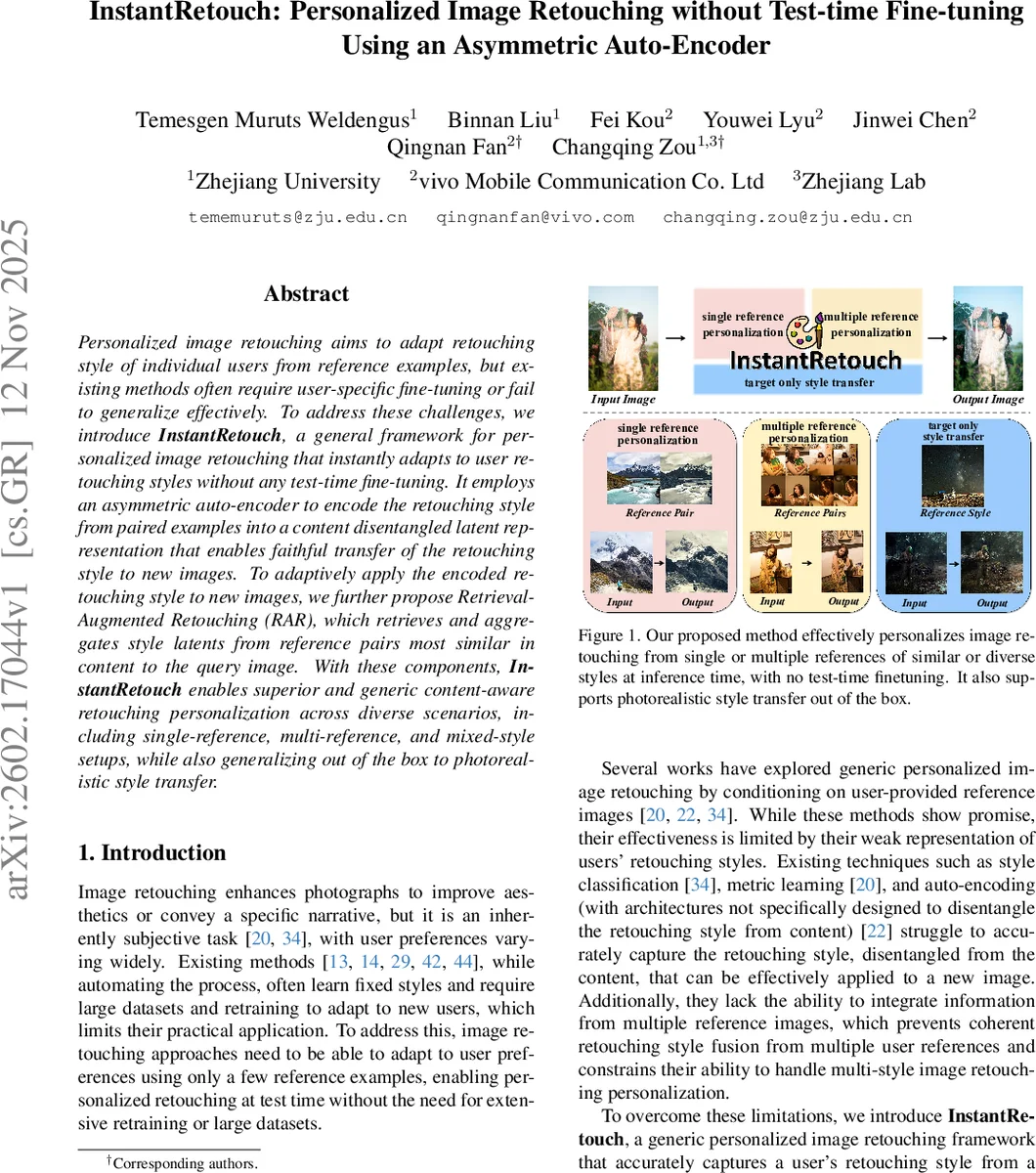

Personalized image retouching aims to adapt retouching style of individual users from reference examples, but existing methods often require user-specific fine-tuning or fail to generalize effectively. To address these challenges, we introduce $\textbf{InstantRetouch}$, a general framework for personalized image retouching that instantly adapts to user retouching styles without any test-time fine-tuning. It employs an $\textit{asymmetric auto-encoder}$ to encode the retouching style from paired examples into a content disentangled latent representation that enables faithful transfer of the retouching style to new images. To adaptively apply the encoded retouching style to new images, we further propose $\textit{retrieval-augmented retouching}$ (RAR), which retrieves and aggregates style latents from reference pairs most similar in content to the query image. With these components, $\textbf{InstantRetouch}$ enables superior and generic content-aware retouching personalization across diverse scenarios, including single-reference, multi-reference, and mixed-style setups, while also generalizing out of the box to photorealistic style transfer.

💡 Research Summary

**

InstantRetouch tackles the long‑standing problem of personalized image retouching without the need for test‑time fine‑tuning. Existing approaches either learn a fixed retouching style that cannot adapt to individual user preferences, or they require per‑user fine‑tuning on a few reference images, which is impractical for real‑world deployment. The proposed framework consists of two novel components: (1) an asymmetric auto‑encoder that learns a content‑disentangled latent representation of the retouching style, and (2) a Retrieval‑Augmented Retouching (RAR) module that adaptively combines style latents from the most content‑similar reference pairs at inference time.

The asymmetric auto‑encoder is built around a Siamese encoder and a lightweight conditional MLP decoder. The encoder uses a SigLIP‑v2 backbone augmented with LoRA adapters; it processes the original image x and its retouched counterpart y in parallel, pools features from both branches, concatenates them, and projects the result into a 2048‑dimensional style latent z. Because the encoder sees both the input and the target, it can isolate color and tone transformations while discarding semantic content. The decoder operates directly in the RGB color space: each pixel is mapped to a hidden vector, passed through three conditional MLP blocks, and finally projected back to RGB. The style latent z is injected into every block via a simple additive projection, forcing the decoder to rely on z for the transformation. Training minimizes an L1 reconstruction loss on a large paired dataset, encouraging the encoder to capture only the retouching-specific changes.

Once a user provides a small set of reference pairs {(x_i, y_i)}, the encoder converts each pair into a style latent z_i. For a new query image x_q, RAR first extracts a content embedding c_q using the same SigLIP‑v2 backbone (with LoRA disabled). It then computes cosine similarity between c_q and the content embeddings c_i of all reference inputs, retrieves the top‑K most similar pairs (K=3 in the paper), and aggregates their style latents with softmax‑weighted averaging:

w_i = exp(s_i/τ) / Σ_j exp(s_j/τ)

z_q = Σ_i w_i z_i

The aggregated latent z_q is fed together with x_q to the conditional decoder, producing the final retouched output ŷ_q. This retrieval‑based conditioning makes the retouching style content‑aware: the same user can have different adjustments for bright outdoor scenes versus dim indoor shots, without having to define multiple explicit styles.

Training data are generated by applying 800 publicly available Adobe Lightroom presets to 95 000 images sampled from LAION, yielding over 760 000 paired examples. The presets are real‑world user‑created styles, ensuring diverse color and tone transformations. Importantly, benchmark images and preset types used for evaluation are excluded from training, guaranteeing a fair comparison.

The authors evaluate InstantRetouch on three increasingly challenging settings: (1) single‑style retouching using the Visually Consistent Image Retouching Benchmark (VCIRB), where each preset is applied to a semantically coherent cluster of images; (2) multi‑style retouching with consistent edits, where multiple reference pairs share the same style; and (3) multi‑style retouching with inconsistent edits, where references exhibit different styles that must be fused. Baselines include StarEnhancer, MSM, VisualCloze, PhotoArtAgent, Seedream 4.0, and NanoBanana. InstantRetouch consistently outperforms these methods in PSNR, SSIM, and LPIPS, especially when the RAR module is used. The method also generalizes to photorealistic style transfer: by treating a single unpaired reference image as a pseudo‑input, the same pipeline can transfer its color palette to new images without any retraining.

Key advantages of InstantRetouch are:

- Zero test‑time fine‑tuning – user preferences are encoded once and instantly reusable.

- Content‑aware style fusion – RAR selects and blends style latents based on visual similarity, enabling per‑image adaptation.

- Support for multiple and mixed styles – the weighted aggregation naturally handles heterogeneous reference sets.

- Resolution‑agnostic, lightweight decoder – operating in color space keeps computation low and preserves high‑frequency details.

Limitations include reliance on paired training data (the asymmetric auto‑encoder cannot be trained on unpaired images), the relatively high dimensionality of the style latent (2048 D) which may affect memory usage, and dependence on the quality of the content encoder for retrieval accuracy. Hyper‑parameters such as K and the temperature τ also influence performance and may need tuning for specific deployments.

In summary, InstantRetouch introduces a practical, scalable solution for personalized image retouching: a disentangled style encoder, a color‑space conditional decoder, and a retrieval‑based style aggregation mechanism that together enable immediate, content‑sensitive personalization without any test‑time model updates. Future work could explore self‑supervised training on unpaired data, further compression of the style latent, and interactive user feedback loops to refine style representations on the fly.

Comments & Academic Discussion

Loading comments...

Leave a Comment