Fuse3D: Generating 3D Assets Controlled by Multi-Image Fusion

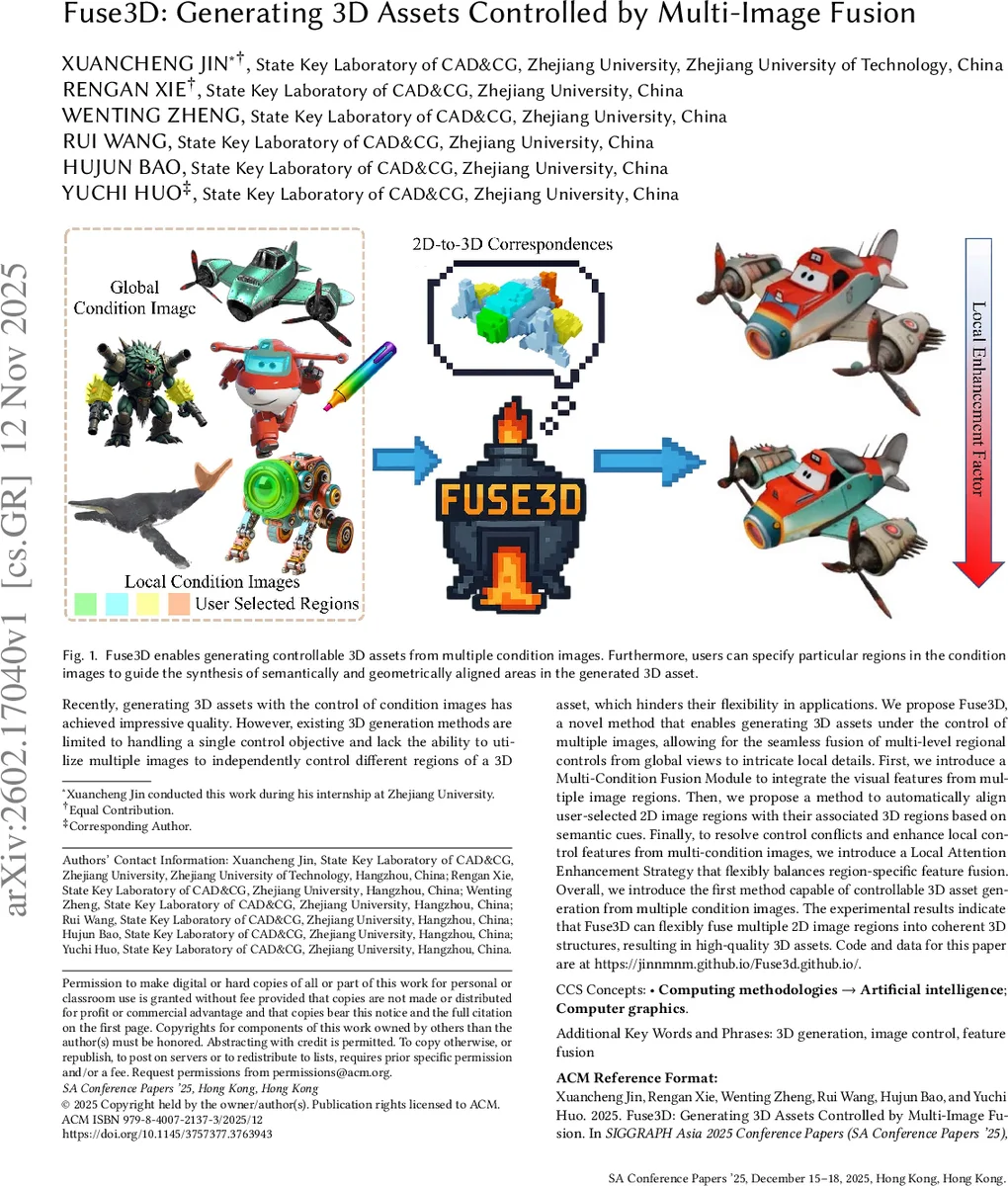

Recently, generating 3D assets with the control of condition images has achieved impressive quality. However, existing 3D generation methods are limited to handling a single control objective and lack the ability to utilize multiple images to independently control different regions of a 3D asset, which hinders their flexibility in applications. We propose Fuse3D, a novel method that enables generating 3D assets under the control of multiple images, allowing for the seamless fusion of multi-level regional controls from global views to intricate local details. First, we introduce a Multi-Condition Fusion Module to integrate the visual features from multiple image regions. Then, we propose a method to automatically align user-selected 2D image regions with their associated 3D regions based on semantic cues. Finally, to resolve control conflicts and enhance local control features from multi-condition images, we introduce a Local Attention Enhancement Strategy that flexibly balances region-specific feature fusion. Overall, we introduce the first method capable of controllable 3D asset generation from multiple condition images. The experimental results indicate that Fuse3D can flexibly fuse multiple 2D image regions into coherent 3D structures, resulting in high-quality 3D assets. Code and data for this paper are at https://jinnmnm.github.io/Fuse3d.github.io/.

💡 Research Summary

Fuse3D introduces a novel framework for controllable 3D asset generation that leverages multiple condition images, each possibly annotated with user‑selected regions. The method addresses three core challenges: (1) fusing heterogeneous visual cues while preserving spatial and semantic integrity, (2) establishing accurate correspondences between 2D image regions and 3D voxels, and (3) resolving conflicts when different conditions overlap in the same 3D space.

The pipeline begins by extracting dense feature tokens from each condition image using a pretrained DINOv2 vision transformer. Masks supplied by the user guide the extraction so that only the selected regions contribute tokens. These tokens are then combined in the Multi‑Condition Fusion Module (MCFM). MCFM aligns tokens according to their 2D masks, aggregates them with mask‑weighted averaging, and produces a unified set of condition tokens that encode global shape cues as well as fine‑grained local details.

Fuse3D adopts TRELLIS as its 3D generation backbone. TRELLIS operates on a sparse latent representation called SLaT, where each active voxel carries a local latent vector. To inject the fused condition tokens into this latent space, the authors exploit the cross‑attention layers of TRELLIS. They first obtain a coarse voxel grid from the global condition image via TRELLIS’s Flow Transformer. Then, a 3D Semantic‑Aware Alignment Strategy establishes bidirectional correspondences: forward alignment maps local condition tokens to the most relevant voxels, while reverse alignment assigns any unmapped voxels to global tokens. This ensures that every voxel receives an appropriate conditioning signal, enabling precise region‑level control.

Because multiple conditions can compete for the same voxels, a Local Attention Enhancement strategy is applied. Using the 2D‑to‑3D mappings derived earlier, the attention scores of region‑specific tokens are dynamically scaled: regions of interest receive amplified attention, whereas overlapping areas are attenuated to avoid destructive blending. This mechanism balances the strength of each control signal, preserving the overall coherence of the generated shape while highlighting the desired local features.

After conditioning the SLaT latent, TRELLIS’s diffusion process generates the structured latent representation, which is finally decoded by a pretrained VAE decoder into a full 3D mesh with texture. The authors report that the entire generation takes roughly 20 seconds on a single GPU, producing assets that simultaneously satisfy global geometry and intricate local details. Quantitative evaluations show improvements over single‑condition baselines in structural consistency (measured by Chamfer distance and IoU) and visual fidelity (measured by FID on rendered views). User studies confirm that participants can more accurately dictate specific parts of the output using Fuse3D’s multi‑image interface.

Limitations include reliance on manual mask creation, dependence on the pretrained DINOv2 and TRELLIS models (which may limit domain transfer), and a lack of extensive experiments on multi‑object scenes or highly cluttered environments. Future work could explore automatic region proposal, lightweight vision backbones, and extensions to handle multiple objects or dynamic scenes.

In summary, Fuse3D is the first method that brings the flexibility of multi‑image, region‑level control—well‑established in 2D diffusion models—into the realm of 3D asset generation. By integrating a robust token‑fusion mechanism, a bidirectional semantic alignment, and attention‑based conflict resolution, it enables artists and developers to create high‑quality, precisely controlled 3D content efficiently, opening new possibilities for interactive design, AR/VR production, and rapid prototyping.

Comments & Academic Discussion

Loading comments...

Leave a Comment