Shared Heritage, Distinct Writing: Rethinking Resource Selection for East Asian Historical Documents

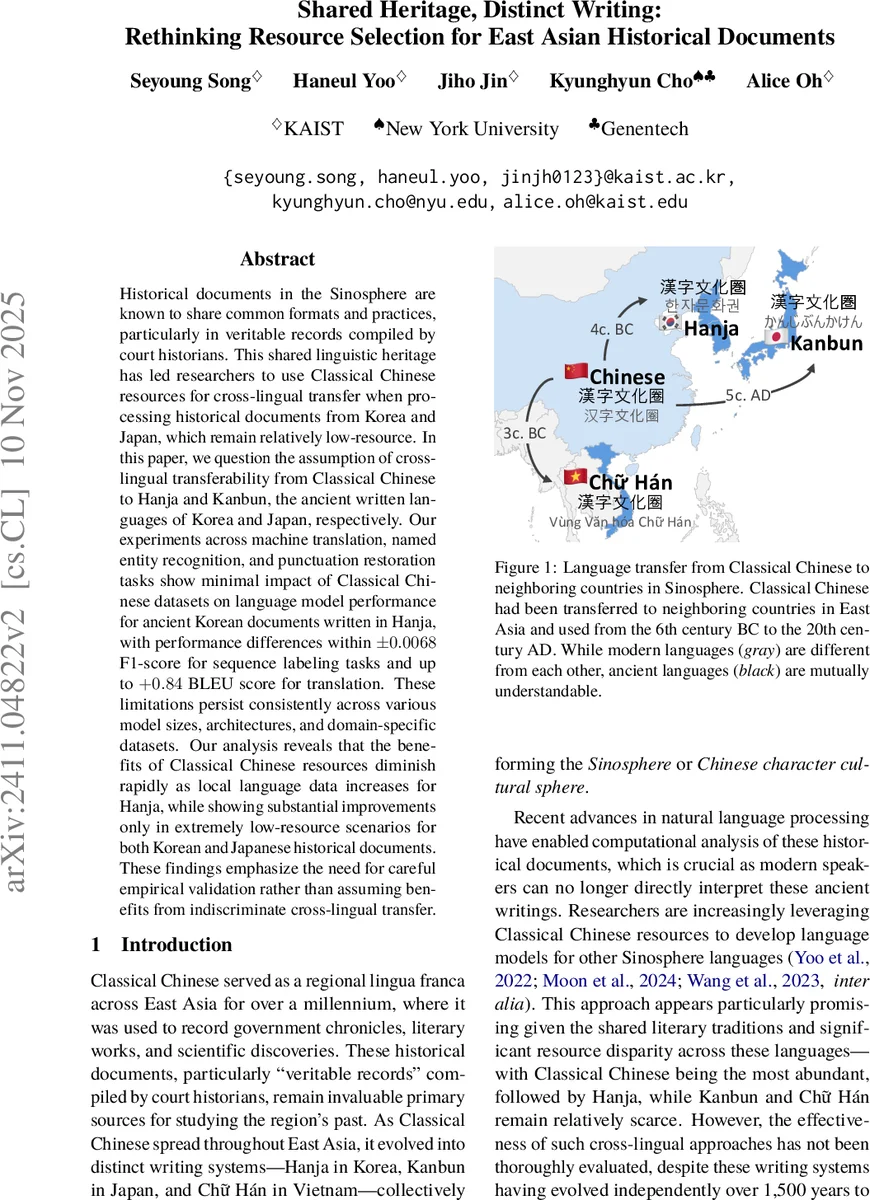

Historical documents in the Sinosphere are known to share common formats and practices, particularly in veritable records compiled by court historians. This shared linguistic heritage has led researchers to use Classical Chinese resources for cross-lingual transfer when processing historical documents from Korea and Japan, which remain relatively low-resource. In this paper, we question the assumption of cross-lingual transferability from Classical Chinese to Hanja and Kanbun, the ancient written languages of Korea and Japan, respectively. Our experiments across machine translation, named entity recognition, and punctuation restoration tasks show minimal impact of Classical Chinese datasets on language model performance for ancient Korean documents written in Hanja, with performance differences within $\pm{}0.0068$ F1-score for sequence labeling tasks and up to $+0.84$ BLEU score for translation. These limitations persist consistently across various model sizes, architectures, and domain-specific datasets. Our analysis reveals that the benefits of Classical Chinese resources diminish rapidly as local language data increases for Hanja, while showing substantial improvements only in extremely low-resource scenarios for both Korean and Japanese historical documents. These findings emphasize the need for careful empirical validation rather than assuming benefits from indiscriminate cross-lingual transfer.

💡 Research Summary

The paper challenges the widely‑held assumption that Classical Chinese resources can be freely transferred to the ancient written forms of Korea (Hanja) and Japan (Kanbun) for natural‑language‑processing tasks. The authors first assemble a comprehensive corpus: over one million sentences of Korean historical records and literary works written in Hanja, complemented by roughly one million sentences of Classical Chinese from benchmarks such as WYWEB and Daizhige. They also collect limited Kanbun and Vietnamese Chữ Hán data for comparative discussion.

Three representative tasks are evaluated: machine translation (MT), named‑entity recognition (NER), and punctuation restoration (PR). For MT, they fine‑tune the multilingual Qwen‑2‑7B model (via QLoRA) on three language pairs (Hanja‑Korean, Hanja‑English, Classical‑Chinese‑Korean). For NER and PR, they use a RoBERTa‑based model pre‑trained on the Siku Quanshu (SikuRoBERTa‑a). Each task is run twice—once with Classical Chinese data included in the training mix and once without—while keeping all other settings identical.

Results show that adding Classical Chinese data yields at best a modest +0.84 BLEU improvement for translating Hanja literary texts, a gain that fails to reach statistical significance under paired bootstrap testing. For NER and PR, the differences are negligible (±0.0068 F1) and uniformly non‑significant. Moreover, as soon as local Hanja data exceed roughly 10 % of the training set, the benefit of Classical Chinese disappears entirely.

A linguistic analysis reveals that surface character overlap is high across the Sinosphere, but deeper divergences—such as the shift from SVO order in Classical Chinese to SOV in Kanbun, the creation of region‑specific characters, and differing lexical preferences—substantially hinder cross‑lingual transfer. Experiments on Kanbun exhibit the same pattern: only in extremely low‑resource regimes does Classical Chinese provide a slight boost; with more local data the effect vanishes.

The authors conclude that indiscriminate use of Classical Chinese resources is inefficient for most realistic scenarios. Cross‑lingual transfer is beneficial only in truly scarce data settings, and even then it must be guided by careful matching of genre, style, and historical period. They release the newly compiled Korean Literary Collections (KLC) dataset and all code, encouraging future work to adopt domain‑aware transfer strategies rather than relying on shared script alone.

Comments & Academic Discussion

Loading comments...

Leave a Comment