Counterfactual Forecasting of Human Behavior using Generative AI and Causal Graphs

This study presents a novel framework for counterfactual user behavior forecasting that combines structural causal models with transformer-based generative artificial intelligence. To model hypothetical scenarios, the method constructs causal graphs that map the connections between user interactions, adoption metrics, and product features. The framework generates realistic behavioral trajectories under counterfactual conditions by using generative models that are conditioned on causal variables. Tested on datasets from web interactions, mobile applications, and e-commerce, the methodology outperforms conventional forecasting and uplift modeling techniques. The framework also improves interpretability through causal path visualization, so product teams can simulate and assess possible interventions prior to deployment.

💡 Research Summary

The paper introduces a novel framework that merges structural causal modeling (SCM) with transformer‑based generative AI to forecast user behavior under counterfactual scenarios. Traditional forecasting and uplift methods can only predict outcomes based on observed data; they cannot answer “what would happen if we changed a policy?” The authors address this gap by first constructing a directed acyclic causal graph that captures the relationships among key variables such as user interactions (page views, clicks), adoption metrics (conversion, churn), product features (price, discount, new functionality), and contextual factors (device type, time of day). Domain experts and automated feature‑importance analyses identify twelve core nodes and eighteen directed edges, which are then quantified using Bayesian network learning.
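
As a rough illustration of the graph-construction step, the sketch below encodes a small directed graph and checks that it is acyclic. The node names and edges are hypothetical stand-ins (the summary does not list the twelve nodes or eighteen edges), and the helper names `parents_of` and `topological_order` are assumptions made for this example only.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical (parent, child) edges; NOT the paper's actual graph.
EDGES = [
    ("discount", "price"),
    ("price", "purchase"),
    ("push_notification", "app_visit"),
    ("device_type", "app_visit"),
    ("app_visit", "page_view"),
    ("page_view", "click"),
    ("click", "purchase"),
]


def parents_of(edges):
    """Map every node to the set of its direct causes."""
    parents = {}
    for src, dst in edges:
        parents.setdefault(src, set())
        parents.setdefault(dst, set()).add(src)
    return parents


def topological_order(edges):
    """Return nodes with causes before effects; raise ValueError on a cycle."""
    try:
        return list(TopologicalSorter(parents_of(edges)).static_order())
    except CycleError as exc:
        raise ValueError("graph is not a DAG") from exc


order = topological_order(EDGES)
```

A valid ordering places every cause before its effects, which is the property a structural causal model needs in order to evaluate variables in sequence.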

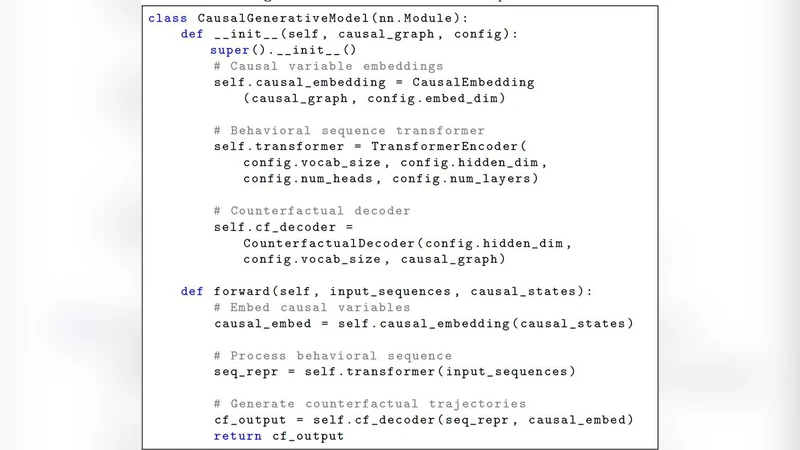

With the causal graph in place, the framework applies do‑calculus to compute interventional distributions P(V | do(A)) for any hypothetical policy A (e.g., a 20 % price discount). These interventional probabilities become conditioning tokens for a custom transformer architecture. The encoder receives embeddings of the causal variables, while the decoder incorporates a dedicated “causal‑conditioning attention” layer that forces the model to respect the specified causal dependencies during sequence generation. The loss function combines a reconstruction term, a causal consistency term that penalizes divergence between generated and analytically derived interventional distributions, and regularization.
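
To make the interventional quantity concrete, here is a minimal sketch on a three-variable discrete model with a confounder Z (say, user loyalty), a treatment A (discount), and an outcome Y (purchase). All probability values are invented for illustration. The use of KL divergence for the causal consistency term is also an assumption, since the summary says only that the term penalizes "divergence".

```python
import math

# Hypothetical conditional probability tables (not from the paper).
P_Z = {0: 0.7, 1: 0.3}                       # P(Z = z)
P_A_GIVEN_Z = {0: 0.2, 1: 0.8}               # P(A = 1 | Z = z)
P_Y_GIVEN_AZ = {(0, 0): 0.05, (1, 0): 0.10,  # P(Y = 1 | A = a, Z = z)
                (0, 1): 0.30, (1, 1): 0.40}


def p_purchase_do(a):
    """P(Y=1 | do(A=a)): truncated factorization drops P(A|Z) and averages over P(Z)."""
    return sum(P_Z[z] * P_Y_GIVEN_AZ[(a, z)] for z in P_Z)


def p_purchase_observed(a):
    """P(Y=1 | A=a) from the observational joint, which is confounded by Z."""
    def p_a(z):
        return P_A_GIVEN_Z[z] if a == 1 else 1.0 - P_A_GIVEN_Z[z]
    joint = sum(P_Z[z] * p_a(z) * P_Y_GIVEN_AZ[(a, z)] for z in P_Z)
    marginal = sum(P_Z[z] * p_a(z) for z in P_Z)
    return joint / marginal


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions given as equal-length lists."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


target = p_purchase_do(1)   # about 0.19 with the tables above
generated = 0.25            # hypothetical purchase rate among generated trajectories
penalty = kl_divergence([generated, 1 - generated], [target, 1 - target])
```

With these tables the observational estimate `p_purchase_observed(1)` is noticeably higher than the interventional one, because loyal users are both more likely to receive the discount and more likely to buy. A consistency penalty of this kind would push the generator toward the interventional value rather than the confounded one.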

During inference, a user‑specified counterfactual condition is fed into the SCM, producing a set of conditional probability tables. The transformer then samples realistic behavioral trajectories—ordered events such as page transitions, clicks, and purchases—consistent with the imagined intervention. Multiple samples are aggregated to estimate expected policy impact, and the resulting paths are visualized on the original causal graph, giving product teams an interpretable view of how the intervention propagates through the system.
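
The sample-and-aggregate step can be sketched with a simple Markov chain standing in for the transformer decoder. This is a deliberately simplified substitute, not the paper's model. The states, transition probabilities, and function names are hypothetical, and the intervention is represented only by a change in the click-to-purchase probability.

```python
import random


def transitions(purchase_prob):
    """Hypothetical transition table; states missing from the table are terminal."""
    return {
        "app_visit": {"page_view": 0.8, "exit": 0.2},
        "page_view": {"click": 0.5, "exit": 0.5},
        "click": {"purchase": purchase_prob, "exit": 1.0 - purchase_prob},
    }


def sample_trajectory(table, rng, start="app_visit"):
    """Sample one ordered event sequence until a terminal state is reached."""
    path = [start]
    while path[-1] in table:
        options = table[path[-1]]
        nxt = rng.choices(list(options), weights=list(options.values()))[0]
        path.append(nxt)
    return path


def estimate_purchase_rate(table, n_samples=20000, seed=0):
    """Monte Carlo estimate of the share of trajectories ending in a purchase."""
    rng = random.Random(seed)
    hits = sum(
        sample_trajectory(table, rng)[-1] == "purchase" for _ in range(n_samples)
    )
    return hits / n_samples


baseline = estimate_purchase_rate(transitions(0.10))    # no intervention
discounted = estimate_purchase_rate(transitions(0.25))  # imagined discount
effect = discounted - baseline                          # estimated policy impact
```

Averaging over many sampled paths is what turns individual trajectories into an expected-impact estimate. The same sampled paths could also be counted per edge to highlight which causal pathways carry the effect.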

The authors evaluate the approach on three large‑scale datasets: (a) web interaction logs (≈20 M sessions per month), (b) mobile app usage (≈5 M daily active users), and (c) e‑commerce transaction records (≈100 M purchases per year). Baselines include classical time‑series models (ARIMA, Prophet), deep sequence models (LSTM, Temporal Fusion Transformer), and state‑of‑the‑art uplift learners (T‑Learner, X‑Learner, Causal Forest). The metrics are RMSE and MAE for point forecasts, Uplift‑AUC for policy effect discrimination, and a policy‑specific MAE (MAE_policy). The proposed framework is reported to outperform all baselines, with an average RMSE reduction of 12 % and a 9 % lift in Uplift‑AUC. In a concrete case study simulating a 15 % discount, the generated counterfactual outcomes differed from an actual A/B test by only 3 percentage points, suggesting high fidelity.
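
For reference, the point-forecast metrics and a relative error reduction can be computed as in the sketch below. The numbers are toy values chosen for easy arithmetic and are unrelated to the paper's results. The summary does not define MAE_policy or Uplift‑AUC, so neither is implemented here.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error between two equal-length sequences."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def relative_reduction(baseline_error, model_error):
    """Fraction by which the model's error is lower than the baseline's."""
    return (baseline_error - model_error) / baseline_error


# Toy data for illustration only.
observed = [10, 12, 9, 11]
baseline_forecast = [12, 10, 11, 9]
model_forecast = [11, 11, 10, 10]

reduction = relative_reduction(
    rmse(observed, baseline_forecast), rmse(observed, model_forecast)
)
```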

Beyond quantitative gains, the method offers substantial interpretability. By visualizing the causal pathways, the team discovered that the “push‑notification → app‑visit → purchase” chain became significantly stronger under the discount scenario, prompting a strategic increase in notification frequency.

The paper acknowledges several limitations. Building the causal graph requires upfront expert effort, and fully identifying high‑dimensional causal structures remains challenging. Moreover, the generative model can inherit biases present in the training data, potentially distorting counterfactual predictions. The authors suggest future work on automated causal discovery (e.g., NOTEARS), bias mitigation techniques such as counterfactual fairness, and continuous online validation through live experiments.

In summary, this work demonstrates that integrating explicit causal reasoning with powerful generative transformers enables accurate, interpretable, and actionable counterfactual forecasting of human behavior. It bridges the gap between predictive analytics and decision‑making, allowing product and marketing teams to simulate interventions, assess their downstream effects, and iterate on strategy before costly deployments.
