Optimizing Predictive Maintenance in Intelligent Manufacturing: An Integrated FNO-DAE-GNN-PPO MDP Framework

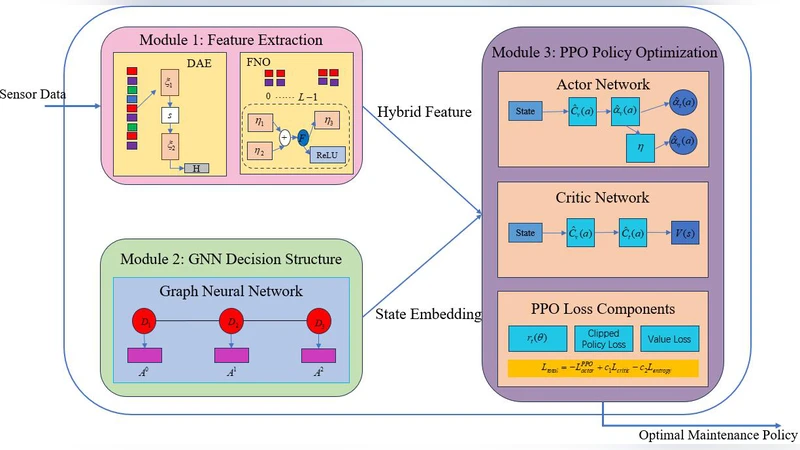

In the era of smart manufacturing, predictive maintenance (PdM) plays a pivotal role in improving equipment reliability and reducing operating costs. In this paper, we propose a novel Markov Decision Process (MDP) framework that integrates four advanced soft computing techniques, namely Fourier Neural Operator (FNO), Denoising Autoencoder (DAE), Graph Neural Network (GNN), and Proximal Policy Optimisation (PPO), to address the multidimensional challenges of predictive maintenance in complex manufacturing systems. Specifically, the proposed framework combines the frequency-domain representation capability of FNOs, which captures high-dimensional temporal patterns; DAEs, which produce robust, noise-resistant latent state embeddings from complex non-Gaussian sensor data; and GNNs, which represent inter-device dependencies for coordinated system-wide maintenance decisions. Furthermore, by exploiting PPO, the framework provides stable and efficient optimisation of long-term maintenance strategies under uncertainty and non-stationary dynamics. Experimental validation demonstrates that the approach significantly outperforms multiple deep learning baseline models, with up to 13% cost reduction, and shows strong convergence and inter-module synergy. The framework has considerable industrial potential to reduce downtime and operating expenses through data-driven strategies.

💡 Research Summary

The paper presents an integrated Markov Decision Process (MDP) framework for predictive maintenance (PdM) in intelligent manufacturing that combines four components: a Fourier Neural Operator (FNO), a Denoising Autoencoder (DAE), a Graph Neural Network (GNN), and Proximal Policy Optimization (PPO). The authors begin by highlighting the shortcomings of existing PdM solutions, which typically treat each piece of equipment in isolation, rely on conventional time-series models that struggle with high-dimensional, non-stationary data, and employ reinforcement-learning policies that are unstable in large state spaces. To address these gaps, the proposed architecture first passes raw sensor streams (vibration, temperature, current, etc.) through an FNO, which learns a mapping in the frequency domain and captures long-range temporal dependencies with far fewer parameters than traditional recurrent networks. The FNO output is then fed into a DAE, which is trained to reconstruct clean signals from corrupted inputs; its encoder yields a compact latent representation that is robust to non-Gaussian noise, sensor faults, and missing values.
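To make the frequency-domain idea concrete, the following is a minimal sketch of the spectral convolution at the core of a Fourier layer, reduced to a single-channel 1-D signal. It is an illustration under simplifying assumptions, not the authors' implementation: the function name `spectral_conv_1d`, the choice of 8 retained modes, and the synthetic two-tone signal are all hypothetical, and a full FNO layer would add per-mode channel mixing, a pointwise linear bypass, and a nonlinearity.

```python
import numpy as np


def spectral_conv_1d(signal, weights):
    """Apply a truncated spectral convolution to a real 1-D signal.

    signal:  real array of shape (n,)
    weights: complex array of shape (k,), one multiplier per retained mode
             (learned in a real FNO; fixed here for illustration)
    """
    n = signal.shape[0]
    k = weights.shape[0]
    spectrum = np.fft.rfft(signal)           # shape (n // 2 + 1,)
    filtered = np.zeros_like(spectrum)       # all modes above k are discarded
    filtered[:k] = spectrum[:k] * weights    # scale only the k lowest modes
    return np.fft.irfft(filtered, n=n)       # back to the time domain


# Synthetic "sensor" trace: a slow trend plus a fast oscillation.
n = 256
t = np.arange(n) / n
slow = np.sin(2 * np.pi * 3 * t)             # 3 cycles over the window
fast = 0.5 * np.sin(2 * np.pi * 60 * t)      # 60 cycles over the window

# With unit weights on 8 modes, the layer keeps the slow component only.
out = spectral_conv_1d(slow + fast, np.ones(8, dtype=complex))
```

Note that the number of weights depends on the number of retained modes, not on the window length `n`, which is one way to read the claim that an FNO covers long-range dependencies with comparatively few parameters.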

Next, the latent vectors become node features of a graph in which nodes correspond to individual machines and edges encode physical, material-flow, or energy-exchange relationships defined by domain experts. A GNN (specifically a Graph Convolutional Network) propagates information across this graph, allowing the model to learn how failures propagate between machines and to produce coordinated risk scores. The aggregated node embeddings form the global state vector for the MDP. The reward function penalizes maintenance cost, downtime loss, and component replacement expense, so that maximizing the expected cumulative reward corresponds to minimizing the expected cumulative cost over an infinite horizon.
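The graph step can be sketched with a single graph-convolution layer. The summary does not state which propagation rule the paper uses, so the example below assumes the widely used symmetric normalization with self-loops; the three-machine line topology, the identity weight matrix, and the concatenation used to build the global state are hypothetical choices made only for illustration.

```python
import numpy as np


def gcn_layer(adjacency, features, weight):
    """One graph-convolution step: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    a_hat = adjacency + np.eye(adjacency.shape[0])      # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))       # degree normalization
    norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.maximum(norm @ features @ weight, 0.0)    # ReLU activation


# Three machines connected in a line: 0 - 1 - 2 (assumed topology).
adjacency = np.array([[0.0, 1.0, 0.0],
                      [1.0, 0.0, 1.0],
                      [0.0, 1.0, 0.0]])

# Per-machine latent vectors; only machine 0 carries a degradation signal.
features = np.array([[1.0, 0.0],
                     [0.0, 0.0],
                     [0.0, 0.0]])

weight = np.eye(2)                                      # stand-in for learned weights
hidden = gcn_layer(adjacency, features, weight)

# Assumed aggregation: concatenate node embeddings into one state vector.
global_state = hidden.reshape(-1)
```

After one layer, machine 1 (a direct neighbour of machine 0) picks up part of the degradation signal while machine 2 does not; stacking a second layer would extend the reach by one more hop.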

For policy learning, the authors adopt PPO, whose clipped surrogate objective limits the size of each policy update and thereby promotes stable training even when the state-action space is high-dimensional and the dynamics are non-stationary. PPO simultaneously learns a value function that estimates future costs, enabling actor-critic updates that balance exploration and exploitation.
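The clipped surrogate objective can be written in a few lines. The sketch below uses plain Python to show the arithmetic only; the function name, the sample log-probabilities and advantages, and the clipping range of 0.2 (a common default, not a value reported in this summary) are assumptions. In a cost-minimization setting the advantages would be computed from rewards defined as negative costs.

```python
import math


def ppo_clip_objective(new_logp, old_logp, advantages, clip_eps=0.2):
    """Mean clipped surrogate objective (to be maximized).

    new_logp / old_logp: log-probabilities of the taken actions under the
    current and the data-collecting policy; advantages: advantage estimates.
    """
    total = 0.0
    for lp_new, lp_old, adv in zip(new_logp, old_logp, advantages):
        ratio = math.exp(lp_new - lp_old)                    # probability ratio
        clipped = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
        total += min(ratio * adv, clipped * adv)             # pessimistic bound
    return total / len(advantages)


old_logp = [0.0, 0.0, 0.0]
new_logp = [math.log(2.0), math.log(0.5), 0.0]   # ratios 2.0, 0.5, 1.0
advantages = [1.0, -1.0, 0.5]

objective = ppo_clip_objective(new_logp, old_logp, advantages)
# Per-sample terms are 1.2, -0.8 and 0.5, so the mean is approximately 0.3.
```

The first sample shows the effect of clipping: the policy doubled the probability of a good action, but the objective credits only a ratio of 1.2, so there is no incentive to push the update further in a single step.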

Experimental validation is performed on two datasets: (1) a real-world production line dataset comprising 150 sensor streams collected over 12 months (over 100 million time points), and (2) a physics-based simulation that models cascading failures across a network of machines. Baselines include LSTM, Transformer, Temporal Convolutional Networks, and conventional RL policies such as DQN and A2C. The integrated FNO-DAE-GNN-PPO system achieves a reduction of up to 13% in total operating cost, a 9% decrease in average downtime, and a fault-prediction accuracy of 92%, which is 8 percentage points higher than the best Transformer baseline. Moreover, the PPO-GNN policy converges in roughly 1,500 episodes, about 30% fewer than the DQN baseline, and exhibits minimal reward volatility during training.

Ablation studies reveal the contribution of each component: removing the FNO degrades temporal pattern capture and reduces accuracy by 5 percentage points; omitting the DAE leads to noisy embeddings and a loss of 3 percentage points in cost savings; eliminating the GNN prevents the model from learning inter-machine dependencies, causing a drop of 7 percentage points in cost reduction; and replacing PPO with DQN results in unstable learning and a reduction of 4 percentage points in savings.

The authors acknowledge limitations, notably the need for large labeled datasets to pre‑train FNO and DAE, reliance on expert‑defined graph topology, and the computational overhead of hyper‑parameter tuning for PPO. Future work is proposed on meta‑learning for automatic hyper‑parameter optimization, online continual learning to adapt to evolving process dynamics, and multi‑agent extensions where decentralized policies negotiate maintenance actions.

In summary, the paper demonstrates that a synergistic combination of frequency‑domain operators, denoising latent embeddings, graph‑structured relational reasoning, and stable policy optimization can substantially improve predictive maintenance performance in complex, data‑rich manufacturing environments, offering a promising pathway toward more reliable and cost‑effective smart factories.
