Context-Guided Decompilation: A Step Towards Re-executability

Binary decompilation plays an important role in software security analysis, reverse engineering, and malware understanding when source code is unavailable. However, existing decompilation techniques often fail to produce source code that can be successfully recompiled and re-executed, particularly for optimized binaries. Recent advances in large language models (LLMs) have enabled neural approaches to decompilation, but the generated code is typically only semantically plausible rather than truly executable, which limits its practical reliability. These shortcomings arise from compiler optimizations and the loss of semantic cues in compiled code, which LLMs struggle to recover without contextual guidance. To address this challenge, we propose ICL4Decomp, a hybrid decompilation framework that leverages in-context learning (ICL) to guide LLMs toward generating re-executable source code. We evaluate our method across multiple datasets, optimization levels, and compilers, demonstrating around 40% improvement in re-executability over state-of-the-art decompilation methods while maintaining robustness.

💡 Research Summary

The paper addresses a long‑standing limitation of binary decompilation: the inability of most decompilers to produce source code that can be re‑compiled and re‑executed, especially when the binary has been heavily optimized. While traditional rule‑based tools (e.g., Hex‑Rays, Ghidra) and recent large‑language‑model (LLM) approaches (e.g., DeGPT, LLM4Decompile) can generate readable code, they often ignore the semantic gaps introduced by compiler optimizations, leading to code that fails to compile or behaves differently from the original program.

To close this gap, the authors propose ICL4Decomp, a hybrid framework that leverages In‑Context Learning (ICL) to guide an LLM toward generating re‑executable source code. The framework combines two complementary sources of contextual information: (1) retrieved exemplars – a set of (assembly, source) pairs that are semantically similar to the target function, and (2) optimization‑aware rules – natural‑language descriptions of compiler transformations such as loop unrolling, register reuse, inlining, and dead‑code elimination. By conditioning the LLM on both concrete examples and high‑level transformation hints, the system can recover lost variable types, control‑flow structures, and naming conventions that are essential for successful recompilation.

Corpus and Retrieval Infrastructure

The authors construct a large corpus of roughly 100 k (assembly, source) pairs drawn from the MBPP benchmark and the ExeBench dataset, covering algorithmic, string, I/O, system, and mathematical code. All assembly snippets are normalized (comments removed, registers stripped, addresses abstracted) and encoded using the Nova encoder, a foundation model pre‑trained on assembly code with two contrastive objectives: (i) functional contrastive learning (to bring together different binaries generated from the same source) and (ii) optimization contrastive learning (to order embeddings according to optimization level O0–O3). Embeddings are indexed with FAISS, enabling millisecond‑scale nearest‑neighbor search. Retrieval uses Cross‑Domain Similarity Local Scaling (CSLS) to mitigate hubness, and a category‑aware re‑ranking factor penalizes mismatched functional categories, ensuring that the most relevant examples are selected.
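
The retrieval step described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it uses brute-force cosine similarity in pure Python instead of FAISS, plain lists instead of Nova embeddings, and it assumes the category-aware re-ranking is a fixed subtractive penalty (the summary does not state its exact form). The names `retrieve`, `k_hub`, and `penalty` are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def mean_top_k(values, k):
    """Mean of the k largest values (the CSLS neighborhood term)."""
    top = sorted(values, reverse=True)[:k]
    return sum(top) / len(top) if top else 0.0


def retrieve(queries, query_index, corpus, query_category,
             k_hub=2, top_k=2, penalty=0.1):
    """Rank corpus items for one query with CSLS plus a category penalty.

    queries: batch of query embeddings; the batch is needed to estimate
             how "hub-like" each corpus item is.
    corpus:  list of dicts with keys "id", "vec", "category".
    CSLS(q, c) = 2*cos(q, c) - r(q) - r(c), where r(.) is the mean
    similarity to the k_hub nearest neighbors in the other domain.
    The subtractive penalty for mismatched categories is an assumption.
    """
    sims = [[cosine(q, item["vec"]) for item in corpus] for q in queries]
    r_query = mean_top_k(sims[query_index], k_hub)
    scored = []
    for j, item in enumerate(corpus):
        r_item = mean_top_k([row[j] for row in sims], k_hub)
        score = 2 * sims[query_index][j] - r_query - r_item
        if item["category"] != query_category:
            score -= penalty
        scored.append((score, item["id"]))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]
```

Subtracting `r_item` lowers the score of corpus entries that are close to many queries at once, which is the hubness effect CSLS is meant to counter. A production system would compute the same quantities over an approximate nearest-neighbor index rather than a full similarity matrix.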

Prompt Construction

For a target assembly function A_t, the top‑k exemplars {(A_i, S_i)} are formatted as alternating “Assembly: … Source: …” blocks, ordered by decreasing adjusted similarity. After the exemplars, the target assembly is presented with a clear instruction (“What is the source code?”). When the optimization‑rule variant (ICL4D‑O) is used, a concise natural‑language description of each applicable compiler transformation is appended to the prompt. The final prompt P(A_t, C_{ret}, C_{opt}), where C_{ret} denotes the retrieved exemplars and C_{opt} the optimization‑rule descriptions, is fed to a frozen LLM (GPT‑4‑Turbo in the experiments) with deterministic decoding (temperature 0.0, token limit 1024).
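
The prompt layout described above might be assembled roughly as follows. This is a sketch under stated assumptions: the function name `build_prompt`, the "Optimization hints:" label, and the placement of the hints at the very end of the prompt are illustrative choices, since the summary only says the rule descriptions are appended.

```python
def build_prompt(target_asm, exemplars, k=3, rules=()):
    """Build an in-context decompilation prompt.

    exemplars: list of (adjusted_similarity, assembly, source) tuples.
    rules:     optional natural-language optimization hints (ICL4D-O style).
    Only the k most similar exemplars are kept, most similar first.
    """
    ordered = sorted(exemplars, key=lambda e: e[0], reverse=True)[:k]
    parts = [
        f"Assembly:\n{asm}\nSource:\n{src}\n"
        for _, asm, src in ordered
    ]
    # The target function follows the exemplars, with the instruction.
    parts.append(f"Assembly:\n{target_asm}\nWhat is the source code?")
    if rules:
        # Hypothetical label; the paper's exact wording is not given here.
        hints = "\n".join(f"- {rule}" for rule in rules)
        parts.append("\nOptimization hints:\n" + hints)
    return "\n".join(parts)
```

The resulting string would then be sent to the model with temperature 0.0 and a 1024-token limit, as stated in the text; the API call itself is omitted because it depends on the client library in use.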

Evaluation Methodology

Re‑executability is measured in two steps: (1) does the generated source compile without errors using a standard compiler (gcc or clang) at the same optimization level? (2) does the compiled binary produce identical outputs on a held‑out test suite compared to the original binary? The authors evaluate across four optimization levels (O0–O3) and two compilers, yielding eight experimental settings. Baselines include Hex‑Rays, Ghidra, DeGPT, and a naïve LLM prompt without contextual information.
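
The two-step check can be sketched as a small harness. This is an illustrative outline rather than the authors' evaluation code: the helper names (`compile_c`, `run`, `outputs_match`), the five-second timeout, and the choice to compare exit code plus standard output are all assumptions. The compiler is invoked as an ordinary command line (`gcc -O2 file.c -o out`).

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def compile_c(source, out_path, compiler="gcc", opt="-O2"):
    """Step 1: return True if the decompiled source compiles without errors."""
    with tempfile.TemporaryDirectory() as tmp:
        src = Path(tmp) / "candidate.c"
        src.write_text(source)
        try:
            result = subprocess.run(
                [compiler, opt, str(src), "-o", str(out_path)],
                capture_output=True, text=True,
            )
        except OSError:
            return False  # compiler not installed or not executable
    return result.returncode == 0


def run(cmd, stdin_text, timeout=5.0):
    """Run a command on one test input; return (exit code, stdout) or None."""
    try:
        proc = subprocess.run(
            cmd, input=stdin_text, capture_output=True,
            text=True, timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return None
    return proc.returncode, proc.stdout


def outputs_match(candidate_cmd, reference_cmd, test_inputs):
    """Step 2: True only if both programs agree on every test input."""
    for text in test_inputs:
        got = run(candidate_cmd, text)
        want = run(reference_cmd, text)
        if got is None or want is None or got != want:
            return False
    return True


if __name__ == "__main__":
    # Stand-in "binaries": a Python one-liner that echoes stdin.
    echo = [sys.executable, "-c",
            "import sys; sys.stdout.write(sys.stdin.read())"]
    print(outputs_match(echo, echo, ["1 2 3\n", ""]))
```

A function counts as re-executable only when `compile_c` succeeds and `outputs_match` holds for the recompiled binary against the original; averaging that outcome per compiler and optimization level gives the eight per-setting rates.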

Results

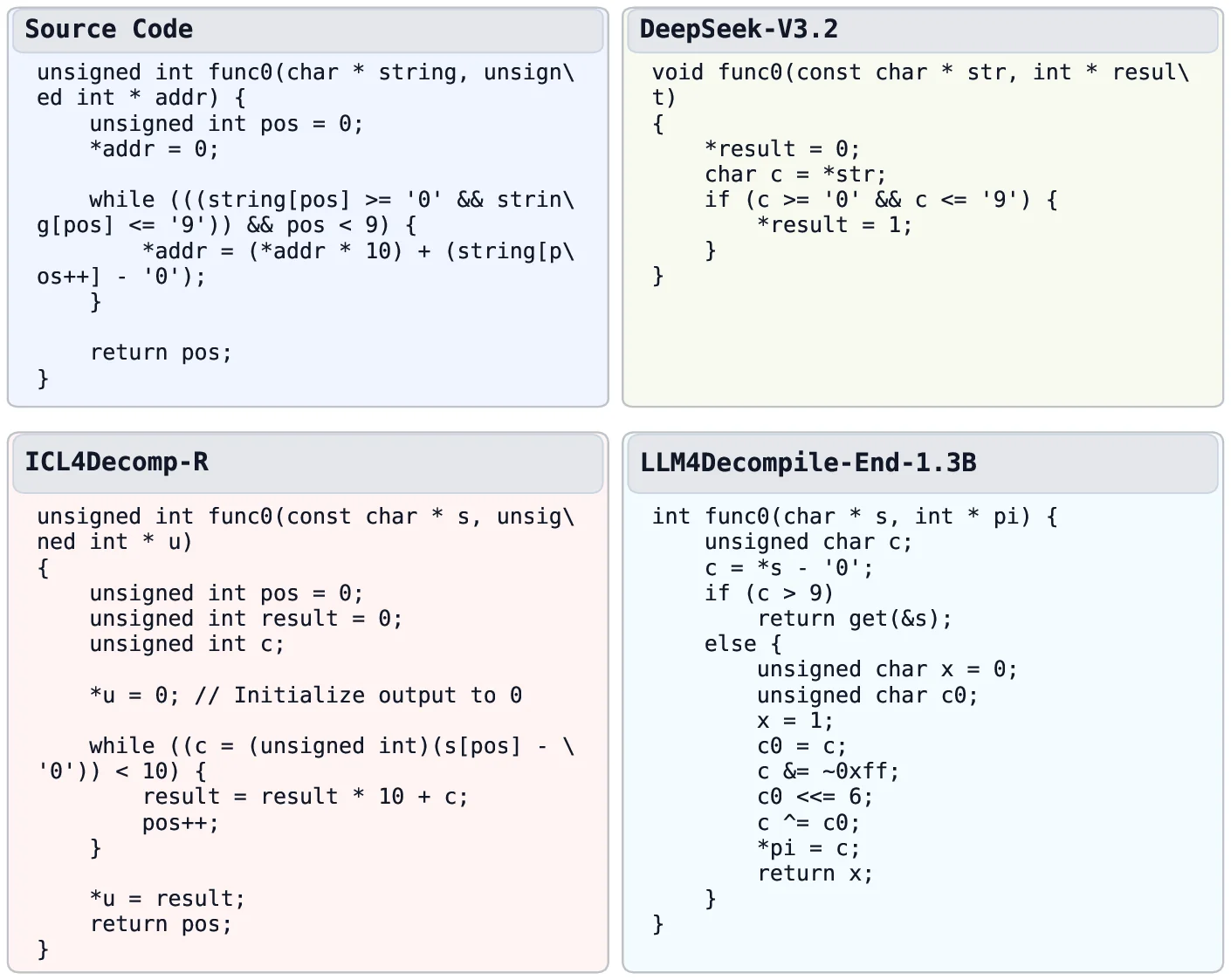

ICL4Decomp improves average re‑executability by roughly 40% over the strongest baseline, in line with the figure reported in the abstract. Gains are especially pronounced at O3, where traditional tools often drop below 15% re‑executability, while ICL4Decomp reaches 60% or higher. Ablation studies show that the exemplar‑only variant (ICL4D‑R) contributes a lift of about 20%, the rule‑only variant (ICL4D‑O) about 15%, and the combination yields the full improvement, confirming a synergistic effect.

Limitations and Future Work

The approach is constrained by prompt length; increasing k beyond a modest number degrades performance due to token limits. The rule set is manually curated, limiting scalability to new compilers or exotic optimizations. Test‑suite coverage may not capture all semantic nuances, potentially inflating re‑executability scores. Future directions include automated extraction of optimization rules, multimodal models that jointly process assembly and binary metadata, and extensive validation on real‑world malware and embedded firmware.

Contributions

- Introduction of an ICL‑based decompilation framework that unifies example‑driven and rule‑driven context.

- Development of a function‑aware assembly embedding space and a category‑aware retrieval pipeline.

- Demonstration of substantial, reproducible improvements in re‑compilability and re‑executability across diverse compilers and optimization levels.

- Comprehensive ablation and robustness analyses confirming the complementary nature of the two context sources.

In summary, ICL4Decomp shows that carefully crafted in‑context information can bridge the semantic gap introduced by compiler optimizations, enabling decompilers to produce code that is not only readable but can also be reliably rebuilt and executed. This work paves the way for more trustworthy reverse‑engineering tools and highlights the broader potential of ICL for other low‑level program analysis tasks.
