A Proof of Learning Rate Transfer under $μ$P

We provide the first proof of learning rate transfer with width in a linear multi-layer perceptron (MLP) parametrized with $μ$P, a neural network parameterization designed to ``maximize'' feature learning in the infinite-width limit. We show that under $μ$P, the optimal learning rate converges to a \emph{non-zero constant} as width goes to infinity, providing a theoretical explanation for learning rate transfer. In contrast, we show that this property fails to hold under alternative parametrizations such as Standard Parametrization (SP) and Neural Tangent Parametrization (NTP). We provide intuitive proofs and support the theoretical findings with extensive empirical results.

💡 Research Summary

The paper addresses a practical phenomenon observed in modern deep learning: learning‑rate transfer, i.e., the stability of the optimal learning rate as a neural network’s width grows. While empirical work has shown that the Maximal Update Parametrization (μP) often exhibits this property, no rigorous proof existed. The authors fill this gap by providing the first formal analysis of learning‑rate transfer for linear multi‑layer perceptrons (MLPs) of arbitrary depth under μP, and they contrast the result with Standard Parametrization (SP) and Neural Tangent Parametrization (NTP).

Problem Setting and Notation

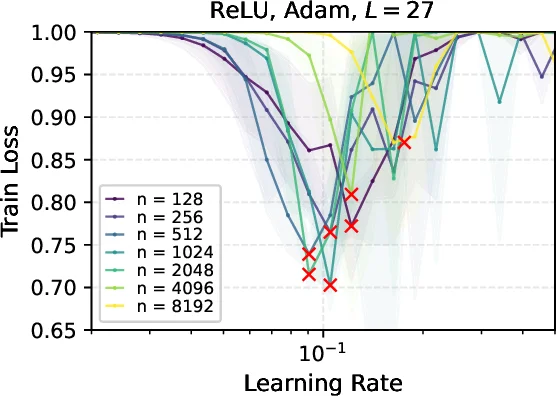

The model is a linear MLP, $f(x)=V^{\top}W_L\cdots W_0 x$, with input dimension $d$, hidden width $n$, depth $L$, and a single output. Training minimizes the quadratic loss on a fixed dataset using gradient descent (GD) or Adam. A “neural parametrization” is defined by scaling exponents $\alpha_\ell$ for each weight matrix, $\alpha_V$ for the output vector, and an exponent $c$ for the learning‑rate scaling $\eta n^{-c}$. μP sets $\alpha_\ell=1$ for all layers, $\alpha_V=2$, and $c=0$ for GD (or $c=1$ for Adam). SP uses $\alpha_V=1$ and no learning‑rate scaling, while NTP uses $\alpha_\ell=0$, $\alpha_V=0$.
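The setup above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' code: the summary does not say how the exponents enter the initialization, so the sketch assumes an exponent $\alpha$ means an initial variance of $n^{-\alpha}$, and it assumes the input layer $W_0$ uses variance $1/d$. The function names `init_linear_mlp` and `forward` are hypothetical.

```python
import numpy as np

def init_linear_mlp(d, n, depth, alpha_hidden, alpha_out, rng):
    """Sample weights for f(x) = V^T W_L ... W_0 x.

    Assumptions (not stated in the source): an exponent alpha means
    "initial variance n**(-alpha)", and W_0 uses variance 1/d.
    """
    W0 = rng.normal(0.0, d ** -0.5, size=(n, d))         # input layer, shape (n, d)
    hidden_std = n ** (-alpha_hidden / 2.0)
    Ws = [W0] + [rng.normal(0.0, hidden_std, size=(n, n)) for _ in range(depth)]
    V = rng.normal(0.0, n ** (-alpha_out / 2.0), size=n)  # output vector, shape (n,)
    return Ws, V

def forward(Ws, V, X):
    """Evaluate the linear MLP on the rows of X (shape (m, d)); returns shape (m,)."""
    H = X.T                      # (d, m)
    for W in Ws:
        H = W @ H                # apply W_0 first, then W_1, ..., W_L
    return V @ H

# Exponent values quoted for muP in the text: alpha_l = 1, alpha_V = 2.
rng = np.random.default_rng(0)
Ws, V = init_linear_mlp(d=8, n=256, depth=2, alpha_hidden=1.0, alpha_out=2.0, rng=rng)
X = rng.normal(size=(5, 8))
outputs = forward(Ws, V, X)
```

Under these assumptions, passing `alpha_out=1.0` instead changes only the scale of `V`, which makes it easy to compare the output magnitude at initialization as `n` varies.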

Definition of Learning‑Rate Transfer

For a given width $n$ and training step $t$, the optimal learning rate $\eta^{(t)}_n$ is the argument minimizing the loss $L^{(t)}_n(\eta)$. Learning‑rate transfer occurs if $\eta^{(t)}_n$ converges in probability to a deterministic constant $\eta^{(t)}_\infty>0$ as $n\to\infty$. The positivity of the limit is crucial; a limit of zero would imply a trivial (no‑training) regime.
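The argmin in this definition can be illustrated with a grid search. The sketch below uses a toy depth-zero model (plain least-squares regression) rather than the paper's deep linear MLP, so it shows only how an optimal learning rate after $t$ steps is computed, not transfer across widths. The names `loss_after_steps` and `optimal_lr`, the synthetic data, and the grid are all assumptions made for illustration.

```python
import numpy as np

def loss_after_steps(eta, X, y, w0, steps):
    """Quadratic loss after `steps` full-batch GD steps with learning rate eta."""
    w = w0.copy()
    m = len(y)
    for _ in range(steps):
        grad = X.T @ (X @ w - y) / m      # gradient of (1/2m) * ||Xw - y||^2
        w = w - eta * grad
    return 0.5 * np.mean((X @ w - y) ** 2)

def optimal_lr(etas, X, y, w0, steps):
    """Grid approximation of the argmin over eta of the loss after `steps` steps."""
    losses = np.array([loss_after_steps(e, X, y, w0, steps) for e in etas])
    return etas[int(np.argmin(losses))], losses

# Toy data (hypothetical): 64 samples, 4 features, noiseless linear targets.
rng = np.random.default_rng(0)
X = rng.normal(size=(64, 4))
y = X @ np.array([1.0, -2.0, 0.5, 3.0])
w0 = np.zeros(4)

etas = np.linspace(0.0, 4.0, 801)         # grid spacing 0.005
eta_star, losses = optimal_lr(etas, X, y, w0, steps=1)
```

For a single step on this toy problem the loss is an exact quadratic in `eta`, so the grid optimum can be checked against the closed form `(r @ Xg) / (Xg @ Xg)`, where `r` is the initial residual and `Xg` is `X` applied to the initial gradient.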

Analysis for One Gradient Step (t = 1)

The loss after one step can be expressed as a polynomial in $\eta$.
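Why a polynomial arises can be seen from the multilinear structure of the model. The following is a generic sketch for plain GD, not the paper's explicit expression: the gradient symbols $G_\ell$ and $g_V$, the coefficients $a_k$ and $c_k$, the dataset size $m$, and the $\tfrac{1}{2m}$ loss normalization are notation assumed here. Each parameter after one step is affine in $\eta$, the output is a product of $L+2$ such factors, and squaring doubles the degree. With the learning-rate scaling $\eta n^{-c}$, the same argument applies with $\eta$ replaced by $\eta n^{-c}$.

```latex
% Generic sketch; G_\ell, g_V, a_k, c_k, m and the 1/(2m) normalization are assumed notation.
\begin{align*}
W_\ell^{(1)} &= W_\ell^{(0)} - \eta\, G_\ell, \qquad V^{(1)} = V^{(0)} - \eta\, g_V, \\
f^{(1)}(x) &= \bigl(V^{(0)} - \eta\, g_V\bigr)^{\top}
              \bigl(W_L^{(0)} - \eta\, G_L\bigr) \cdots \bigl(W_0^{(0)} - \eta\, G_0\bigr)\, x
            = \sum_{k=0}^{L+2} a_k(x)\, \eta^{k}, \\
L^{(1)}_n(\eta) &= \frac{1}{2m} \sum_{i=1}^{m} \bigl(f^{(1)}(x_i) - y_i\bigr)^{2}
            = \sum_{k=0}^{2(L+2)} c_k\, \eta^{k}.
\end{align*}
```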
