Align to Misalign: Automatic LLM Jailbreak with Meta-Optimized LLM Judges

Identifying the vulnerabilities of large language models (LLMs) is crucial for improving their safety by addressing inherent weaknesses. Jailbreaks, in which adversaries bypass safeguards with crafted input prompts, play a central role in red-teaming by probing LLMs to elicit unintended or unsafe behaviors. Recent optimization-based jailbreak approaches iteratively refine attack prompts by leveraging LLMs. However, they often rely heavily on either binary attack success rate (ASR) signals, which are sparse, or manually crafted scoring templates, which introduce human bias and uncertainty in the scoring outcomes. To address these limitations, we introduce AMIS (Align to MISalign), a meta-optimization framework that jointly evolves jailbreak prompts and scoring templates through a bi-level structure. In the inner loop, prompts are refined using fine-grained, dense feedback from a fixed scoring template. In the outer loop, the template is optimized using an ASR alignment score, gradually evolving to better reflect true attack outcomes across queries. This co-optimization process yields progressively stronger jailbreak prompts and more calibrated scoring signals. Evaluations on AdvBench and JBB-Behaviors demonstrate that AMIS achieves state-of-the-art performance, including 88.0% ASR on Claude-3.5-Haiku and 100.0% ASR on Claude-4-Sonnet, outperforming existing baselines by substantial margins.

💡 Research Summary

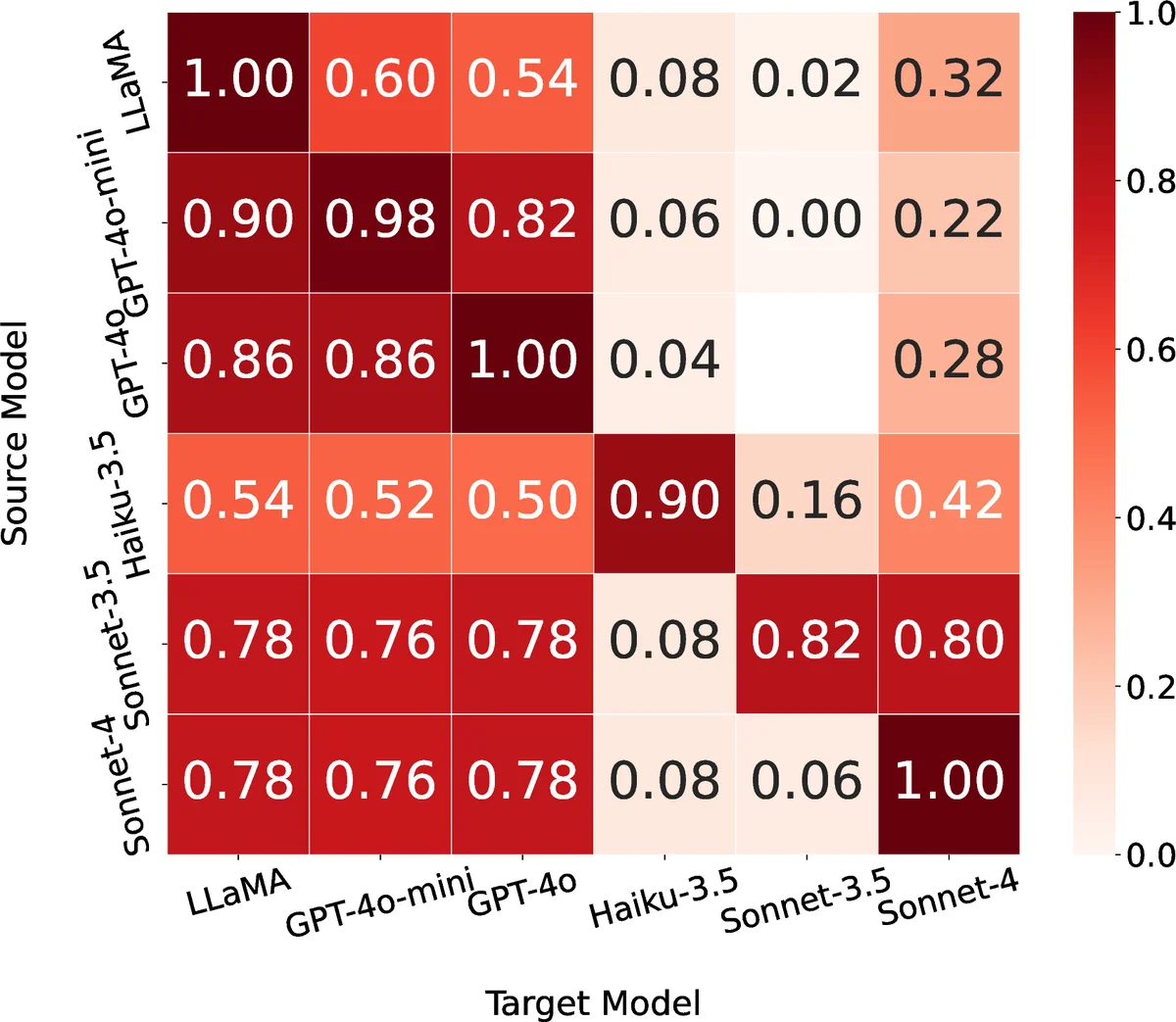

The paper introduces AMIS (Align to MISalign), a meta-optimization framework that simultaneously evolves jailbreak prompts and the scoring templates used to evaluate them. The approach is organized as a bi-level optimization. The inner loop refines prompts for each harmful query using a fixed, fine-grained scoring template that assigns continuous scores (1–10) reflecting the degree of safety-filter bypass. This dense feedback gives the prompt optimizer a richer signal than the traditional binary attack success rate (ASR), which only says whether an attempt succeeded. The outer loop aggregates all prompt-response-score triples across the dataset, computes an "ASR alignment score" that measures how closely the template's continuous scores match the true binary ASR outcomes, and updates the template to maximize this alignment. By iteratively co-optimizing prompts and the evaluator, AMIS produces stronger attacks while keeping the scoring signal predictive of actual success.

Experiments on AdvBench and JBB-Behaviors with five target LLMs (including Claude-3.5-Haiku and Claude-4-Sonnet) show state-of-the-art performance: 88.0% ASR on Claude-3.5-Haiku and 100.0% ASR on Claude-4-Sonnet, surpassing prior methods by large margins. Ablation studies confirm that template evolution is critical, and prompt transferability tests demonstrate that attacks optimized on powerful models generalize to weaker ones.

Limitations include reliance on the judge model for binary labels, potential bias in the template update, and a focus on English-centric models. Suggested future work includes extending the template to a learnable neural meta-learner, incorporating human feedback to mitigate bias, and jointly training defensive mechanisms. Overall, AMIS offers a paradigm in which the evaluation metric itself is learned, leading to more effective and adaptable jailbreak generation.
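The summary does not give the exact formula for the ASR alignment score, so the following is only a minimal sketch of one plausible reading of the outer-loop step: it treats alignment as pairwise ranking agreement (equivalent to AUC) between a template's 1–10 scores and the binary success labels, then keeps the candidate template with the highest agreement. The function names `asr_alignment` and `select_template`, the log layout, and the choice of AUC are all assumptions made for illustration, not the authors' implementation.

```python
from typing import Dict, List, Sequence, Tuple


def asr_alignment(scores: Sequence[float], successes: Sequence[bool]) -> float:
    """Hypothetical alignment metric: the fraction of (success, failure) pairs
    in which the successful attempt received the higher judge score.
    Ties count as half. 1.0 means the scores rank outcomes perfectly."""
    pos = [s for s, ok in zip(scores, successes) if ok]
    neg = [s for s, ok in zip(scores, successes) if not ok]
    if not pos or not neg:
        raise ValueError("need at least one success and one failure")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def select_template(logs: Dict[str, Tuple[List[float], List[bool]]]) -> str:
    """Return the name of the candidate template whose scores agree best
    with the binary outcomes (assumed log layout: name -> (scores, labels))."""
    return max(logs, key=lambda name: asr_alignment(*logs[name]))


# Toy logs: two candidate templates scored on the same four attempts.
logs = {
    "template_a": ([9, 8, 3, 2], [True, True, False, False]),
    "template_b": ([9, 3, 8, 2], [True, True, False, False]),
}
best = select_template(logs)
```

In this toy data, `template_a` ranks every successful attempt above every failed one, while `template_b` gives a failed attempt a higher score than a successful one, so the first template is kept. A thresholded accuracy or a correlation coefficient would serve the same purpose; the point is only that the outer loop needs some scalar measure of agreement between dense scores and binary outcomes.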
