Temporal Fusion Transformer for Multi-Horizon Probabilistic Forecasting of Weekly Retail Sales

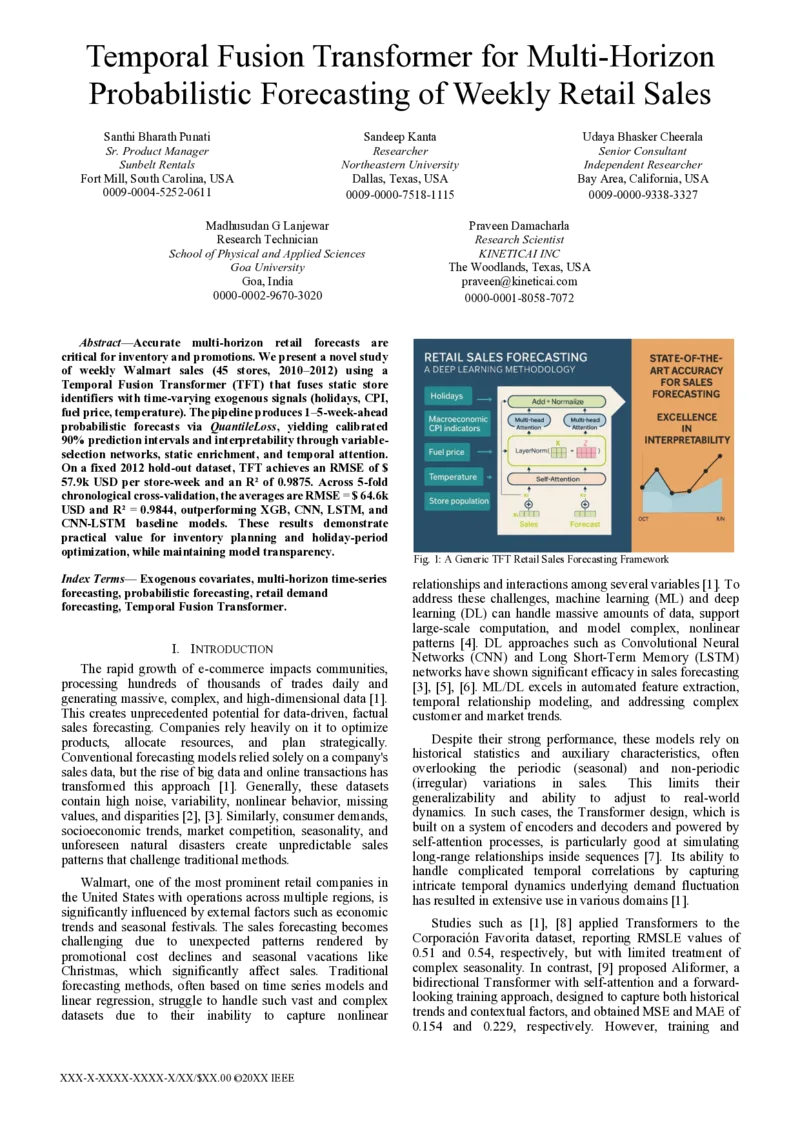

Accurate multi-horizon retail forecasts are critical for inventory and promotions. We present a novel study of weekly Walmart sales (45 stores, 2010–2012) using a Temporal Fusion Transformer (TFT) that fuses static store identifiers with time-varying exogenous signals (holidays, CPI, fuel price, temperature). The pipeline produces 1–5-week-ahead probabilistic forecasts via Quantile Loss, yielding calibrated 90% prediction intervals and interpretability through variable-selection networks, static enrichment, and temporal attention. On a fixed 2012 hold-out dataset, TFT achieves an RMSE of 57.9k USD per store-week and an $R^2$ of 0.9875. Across a 5-fold chronological cross-validation, the averages are RMSE = 64.6k USD and $R^2$ = 0.9844, outperforming the XGB, CNN, LSTM, and CNN-LSTM baseline models. These results demonstrate practical value for inventory planning and holiday-period optimization, while maintaining model transparency.

💡 Research Summary

The paper tackles the problem of multi‑horizon retail sales forecasting, a task that is crucial for inventory planning, promotion scheduling, and overall supply‑chain efficiency. While many prior works focus on point forecasts or rely on classical statistical models, this study introduces a comprehensive probabilistic forecasting pipeline based on the Temporal Fusion Transformer (TFT), a state‑of‑the‑art deep learning architecture designed for heterogeneous time‑series data.

Data and Features

The authors use weekly sales data from 45 Walmart stores spanning 2010 to 2012. Each store is described by static attributes (store identifier, store size, region code, etc.) and is enriched with a set of time‑varying exogenous variables: public holidays, Consumer Price Index (CPI), fuel price, and average temperature. The dataset contains roughly 7,800 store‑weeks, and missing values are filled by linear interpolation. Continuous variables are standardized, while categorical attributes are embedded into low‑dimensional vectors.
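The two preprocessing steps mentioned above, linear interpolation and standardization, can be sketched as follows. This is a minimal illustration assuming NumPy; the function names and the `fuel_price` series are hypothetical and not taken from the paper.

```python
import numpy as np

def interpolate_missing(values):
    """Fill NaN gaps by linear interpolation between neighboring observed weeks."""
    values = np.asarray(values, dtype=float)
    weeks = np.arange(len(values))
    observed = ~np.isnan(values)
    # np.interp holds the nearest observed value constant at the series edges.
    return np.interp(weeks, weeks[observed], values[observed])

def standardize(values, mean=None, std=None):
    """Scale to zero mean and unit variance; pass training statistics to reuse them."""
    values = np.asarray(values, dtype=float)
    mean = values.mean() if mean is None else mean
    std = values.std() if std is None else std
    return (values - mean) / std, mean, std

# Hypothetical fuel-price series for one store with two missing weeks.
fuel_price = [2.50, np.nan, 2.70, 2.80, np.nan, 3.00]
filled = interpolate_missing(fuel_price)
scaled, mu, sigma = standardize(filled)
```

In a forecasting setting the mean and standard deviation would normally be computed on the training weeks only and then passed to `standardize` for validation and test data, so that no information from the evaluation period leaks into the inputs.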

Model Architecture

TFT consists of four main components:

- Static Enrichment – static metadata are projected into a latent space and added to every time step, providing a constant contextual signal.

- Variable‑Selection Networks – separate gating mechanisms learn soft importance weights for each input variable, allowing the model to automatically prune irrelevant features.

- Temporal Fusion Block – a stack of Gated Residual Networks (GRNs) processes the sequential data, followed by multi‑head attention that captures long‑range dependencies and aligns known future covariates (e.g., scheduled holidays) with past observations.

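- Probabilistic Output Layer – the network is trained with Quantile Loss to predict several quantiles simultaneously: the median (0.5) together with a lower and an upper quantile. A central 90 % prediction interval is bounded by the 0.05 and 0.95 quantiles (the 0.1 and 0.9 quantiles would bound only an 80 % interval), so the intervals are obtained directly, without post‑hoc distribution fitting.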
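The Quantile Loss used by the output layer is commonly implemented as the pinball loss. The sketch below assumes NumPy and uses a hypothetical function name; it shows the standard formula, not the authors' code.

```python
import numpy as np

def quantile_loss(y_true, y_pred, quantiles):
    """Mean pinball loss; y_pred has one column per requested quantile."""
    y_true = np.asarray(y_true, dtype=float)[:, None]
    y_pred = np.asarray(y_pred, dtype=float)
    q = np.asarray(quantiles, dtype=float)[None, :]
    error = y_true - y_pred
    # Under-prediction (error > 0) costs q per unit, over-prediction costs (1 - q).
    return float(np.mean(np.maximum(q * error, (q - 1.0) * error)))

# For a high quantile, predicting too low is penalized more than predicting too high.
loss_under = quantile_loss([100.0], [[90.0]], [0.9])   # about 9.0
loss_over = quantile_loss([100.0], [[110.0]], [0.9])   # about 1.0
```

This asymmetry is what pushes each output toward its target quantile: the 0.9-quantile head is penalized nine times more for falling below the observed value than for exceeding it.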

Training and Evaluation

The authors adopt two evaluation strategies. First, a fixed hold‑out set (all weeks of 2012) is used to assess a single model’s performance. Second, a 5‑fold chronological cross‑validation is performed, where each fold respects temporal ordering to avoid leakage. Performance metrics include Root Mean Squared Error (RMSE), coefficient of determination (R²), and coverage of the 90 % prediction interval.
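One common way to build chronological folds is an expanding training window. The summary does not say which scheme the authors used, so the sketch below is an assumption: the function name, the block layout, and the 120-week example are all hypothetical.

```python
def chronological_folds(n_weeks, n_folds=5):
    """Return (train_weeks, validation_weeks) ranges with an expanding training window."""
    block = n_weeks // (n_folds + 1)
    if block == 0:
        raise ValueError("not enough weeks for the requested number of folds")
    folds = []
    for k in range(1, n_folds + 1):
        train_end = k * block
        # The last fold absorbs any remainder weeks.
        val_end = n_weeks if k == n_folds else train_end + block
        folds.append((range(0, train_end), range(train_end, val_end)))
    return folds

# Hypothetical series of 120 weeks split into 5 folds.
folds = chronological_folds(n_weeks=120)
for train_weeks, val_weeks in folds:
    print(f"train weeks {train_weeks.start}-{train_weeks.stop - 1}, "
          f"validate weeks {val_weeks.start}-{val_weeks.stop - 1}")
```

Every validation block lies strictly after its training window, which is the property that prevents future information from leaking into training.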

Results

On the 2012 hold‑out, TFT achieves an RMSE of 57.9k USD per store‑week and an R² of 0.9875, with a 90 % interval coverage of 0.912, close to the nominal 0.90 and therefore indicating well‑calibrated uncertainty estimates. Across the chronological cross‑validation, the average RMSE is 64.6k USD and the average R² is 0.9844. These figures consistently outperform four baselines: XGBoost, a pure Convolutional Neural Network (CNN), a vanilla Long Short‑Term Memory network (LSTM), and a hybrid CNN‑LSTM model. The RMSE reductions range from 7 % to 15 %, demonstrating TFT’s ability to leverage both static and dynamic information effectively.
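The three reported metrics follow standard definitions and can be computed as in the sketch below. It assumes NumPy; the function names and the four-point example are illustrative, not the paper's data.

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean squared error, in the units of the target."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - residual sum of squares / total sum of squares."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

def interval_coverage(y_true, lower, upper):
    """Fraction of observations that fall inside [lower, upper]."""
    y_true = np.asarray(y_true, float)
    inside = (y_true >= np.asarray(lower, float)) & (y_true <= np.asarray(upper, float))
    return float(np.mean(inside))

# Made-up values, used only to exercise the functions.
y_true = [100.0, 200.0, 300.0, 400.0]
y_pred = [110.0, 190.0, 310.0, 390.0]
lower = [90.0, 170.0, 320.0, 370.0]
upper = [130.0, 210.0, 350.0, 410.0]

print(rmse(y_true, y_pred))                     # 10.0
print(interval_coverage(y_true, lower, upper))  # 0.75
```

For a nominal 90 % interval, a coverage near 0.90 suggests good calibration; values well below indicate intervals that are too narrow, and values well above indicate intervals that are too wide.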

Interpretability

A major contribution is the model’s built‑in interpretability. By visualizing the attention weights and the variable‑selection gates, the authors identify the most influential drivers of weekly sales. Holidays (especially the weeks preceding Black Friday and Christmas) emerge as the dominant factor, followed by fuel price spikes and temperature fluctuations. This transparency is valuable for business stakeholders who need to understand the “why” behind forecasted demand spikes.
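A rough sketch of how variable‑selection weights can be turned into a feature ranking: each sample's selection scores are passed through a softmax, and the resulting weights are averaged over samples. It assumes NumPy; the function names, variable names, and logits are invented for illustration and are not results from the paper.

```python
import numpy as np

def softmax(logits):
    """Numerically stable softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

def rank_variables(selection_logits, names):
    """Average per-sample selection weights and sort variables by importance."""
    weights = softmax(np.asarray(selection_logits, dtype=float))  # (samples, variables)
    mean_weights = weights.mean(axis=0)
    order = np.argsort(mean_weights)[::-1]
    return [(names[i], float(mean_weights[i])) for i in order]

# Hypothetical selection scores for two samples and four input variables.
names = ["holiday", "cpi", "fuel_price", "temperature"]
logits = [[2.0, 0.1, 1.0, 0.5],
          [1.8, 0.0, 1.2, 0.4]]
ranking = rank_variables(logits, names)
for name, weight in ranking:
    print(f"{name}: {weight:.3f}")
```

Because the softmax weights for each sample sum to one, the averaged weights can be read as each variable's share of the model's attention across the evaluated samples.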

Limitations and Future Work

The study’s scope is limited to three years of data from a single retailer, and it does not incorporate competitive promotions, online sales channels, or detailed inventory policies. Moreover, TFT’s training requires GPU resources, which may pose challenges for real‑time deployment in resource‑constrained environments. The authors suggest extending the dataset to longer horizons, integrating multiple retail chains, and coupling the probabilistic forecasts with reinforcement‑learning based inventory optimization to close the loop between prediction and decision making.

Conclusion

Overall, the paper demonstrates that the Temporal Fusion Transformer can deliver highly accurate, probabilistic, and interpretable multi‑horizon forecasts for weekly retail sales. By fusing static store identifiers with rich exogenous signals and employing attention‑driven temporal modeling, TFT outperforms the XGBoost, CNN, LSTM, and CNN‑LSTM baselines and provides actionable insights for inventory and promotion planning. The work represents a significant step toward production‑ready, transparent forecasting systems in the retail sector.
