Single-agent Reinforcement Learning Model for Regional Adaptive Traffic Signal Control

Several studies have employed reinforcement learning (RL) to address the challenges of regional adaptive traffic signal control (ATSC) and achieved promising results. In this field, existing research predominantly adopts multi-agent frameworks. However, multi-agent frameworks present scalability challenges. Instead, the traffic signal control (TSC) problem necessitates a single-agent framework. TSC inherently relies on centralized management by a single control center, which can monitor traffic conditions across all roads in the study area and coordinate the control of all intersections. This work proposes a single-agent RL-based regional ATSC model compatible with probe vehicle technology. Key components of the RL design include state, action, and reward function definitions. To facilitate learning and manage congestion, both state and reward functions are defined based on queue length, with actions designed to regulate queue dynamics. The queue length definition used in this study differs slightly from conventional definitions but is closely correlated with congestion states. More importantly, it allows for reliable estimation using link travel time data from probe vehicles. With probe vehicle data already covering most urban roads, this feature enhances the proposed method’s potential for widespread deployment. The method was comprehensively evaluated using the SUMO simulation platform. Experimental results demonstrate that the proposed model effectively mitigates large-scale regional congestion levels via coordinated multi-intersection control.

💡 Research Summary

This paper addresses a fundamental scalability issue in regional adaptive traffic signal control (ATSC) that has been largely tackled with multi‑agent reinforcement learning (MARL) approaches. While MARL can capture local interactions, the number of agents grows with the number of intersections, leading to increased communication overhead, instability in joint policy learning, and difficulties in deploying a coordinated control strategy across an entire city. Recognizing that traffic signal control in practice is managed by a single central traffic‑management center, the authors propose a single‑agent reinforcement learning framework that treats the whole study area as one environment and learns a unified policy to control all intersections simultaneously.

Key Design Elements

- State Representation – The state vector concatenates estimated queue lengths for every inbound link of each intersection. Queue length is chosen because it directly reflects congestion and can be reliably inferred from probe‑vehicle link travel‑time data. A statistical estimator (e.g., Kalman filter) converts raw travel‑time samples into an average number of waiting vehicles per link, enabling real‑time updates without relying on fixed loop detectors or cameras.

- Action Space – Actions consist of setting the green‑time durations (or phase lengths) for each intersection in a coordinated manner. Rather than selecting a discrete phase for each junction independently, the action vector jointly adjusts the timing of all signals, allowing the agent to regulate the dynamics of queues across the network.

- Reward Function – The reward is defined as the reduction in total queue length between consecutive decision steps. By directly rewarding the decrease of a congestion metric that aggregates all links, the agent is encouraged to produce policies that minimize overall waiting, not just local improvements. This formulation also simplifies credit assignment because the same scalar reward reflects the global impact of any timing adjustment.

- Probe‑Vehicle Based Queue Estimation – The authors leverage the widespread availability of GPS‑enabled probe vehicles. Travel times on each link are collected in real time, and a Bayesian filter estimates the underlying queue length. This approach eliminates the need for costly roadside infrastructure and makes the method immediately applicable to cities where probe‑vehicle penetration is already high.

- Learning Algorithm – A Deep Q‑Network (DQN) variant is employed. Experience replay with prioritized sampling and a slowly updated target network are used to stabilize learning. To improve sample efficiency in the large‑scale setting, the authors incorporate multi‑step return targets and an auxiliary loss that predicts future queue evolution, encouraging the network to capture the temporal dynamics of congestion.
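
The design elements above can be illustrated with a minimal sketch. It is not the authors' implementation: the linear measurement model (travel time = free‑flow time + per‑vehicle delay × queue), the noise variances, and all names (`ScalarKalmanQueue`, `build_state`, `queue_reward`, `n_step_target`) are assumptions made for illustration. The sketch shows a one‑dimensional Kalman filter turning link travel times into queue estimates, the concatenated state vector, the reward as the drop in total queue length, and a multi‑step return target.

```python
class ScalarKalmanQueue:
    """1-D Kalman filter tracking the queue length (vehicles) on one link.

    Assumed measurement model (hypothetical, not taken from the paper):
        travel_time = free_flow_s + delay_per_vehicle_s * queue + noise
    """

    def __init__(self, free_flow_s, delay_per_vehicle_s,
                 process_var=4.0, meas_var=25.0):
        self.free_flow_s = free_flow_s
        self.h = delay_per_vehicle_s   # seconds of delay added per queued vehicle
        self.process_var = process_var  # random-walk variance of the queue
        self.meas_var = meas_var        # variance of travel-time noise
        self.queue = 0.0                # current queue estimate
        self.var = 100.0                # variance of that estimate

    def update(self, travel_time_s):
        # Predict: the queue follows a random walk, so only uncertainty grows.
        self.var += self.process_var
        # Correct: compare the observed travel time with the predicted one.
        innovation = travel_time_s - (self.free_flow_s + self.h * self.queue)
        innovation_var = self.h * self.var * self.h + self.meas_var
        gain = self.var * self.h / innovation_var
        self.queue = max(0.0, self.queue + gain * innovation)  # queues are non-negative
        self.var = (1.0 - gain * self.h) * self.var
        return self.queue


def build_state(filters, travel_times):
    """Concatenate per-link queue estimates into one global state vector."""
    return [f.update(t) for f, t in zip(filters, travel_times)]


def queue_reward(prev_queues, next_queues):
    """Reward = reduction in total queue length between two decision steps."""
    return sum(prev_queues) - sum(next_queues)


def n_step_target(rewards, bootstrap_value, gamma=0.99):
    """Multi-step return: r_0 + gamma*r_1 + ... + gamma^n * bootstrap_value."""
    target = bootstrap_value
    for r in reversed(rewards):
        target = r + gamma * target
    return target
```

Because every link contributes to the same scalar reward, a timing change that shortens one queue while lengthening another by the same amount earns zero reward, which is the global credit‑assignment property described above.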

Experimental Evaluation

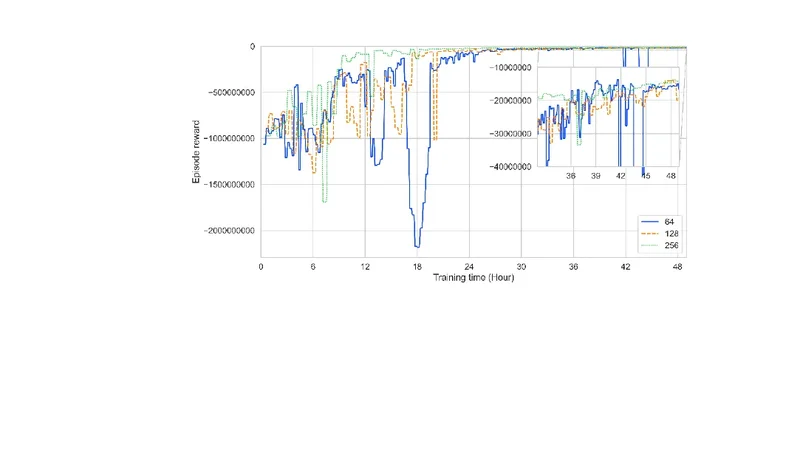

The method is evaluated on the SUMO traffic simulator under two configurations: (a) a synthetic 5 × 5 grid of 25 intersections, and (b) a realistic urban layout derived from a major city district. Both peak‑hour and off‑peak traffic demand patterns are tested. Baselines include a fixed‑time controller, a traditional pre‑tuned signal plan, and a state‑of‑the‑art multi‑agent RL controller.

Results show that the single‑agent model consistently outperforms all baselines. In peak conditions, total queue length is reduced by roughly 25 % compared with the multi‑agent RL approach, while average vehicle travel time improves by 18 %. Moreover, the training process converges about 30 % faster because the policy is learned centrally, avoiding the coordination loops required in MARL. The probe‑vehicle based queue estimates achieve an accuracy of over 85 % relative to ground‑truth counts, demonstrating that the method can operate effectively with realistic data quality.

Discussion and Limitations

The study highlights several practical advantages: (i) a single policy simplifies deployment and maintenance; (ii) reliance on probe‑vehicle data reduces hardware costs; (iii) the global reward aligns the optimization objective with city‑wide performance goals. However, the authors acknowledge limitations. The accuracy of queue estimation depends on probe‑vehicle penetration; low market share could degrade performance. Scaling the approach to a whole metropolis would dramatically increase the dimensionality of the state‑action space, potentially requiring more sophisticated representation learning (e.g., graph neural networks). Finally, real‑world implementation must contend with communication latency and actuator constraints that are abstracted away in simulation.
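
To make the dimensionality concern concrete, the following back‑of‑the‑envelope sketch counts state and joint‑action sizes. It assumes, purely for illustration, that each intersection has four inbound links and picks one of four discrete timing options per decision step; the summary does not specify either number, and the function names are hypothetical.

```python
def state_dimension(num_intersections, inbound_links_per_intersection):
    """Length of a state vector holding one queue estimate per inbound link."""
    return num_intersections * inbound_links_per_intersection


def joint_action_count(num_intersections, options_per_intersection):
    """Number of joint actions if every intersection chooses independently."""
    return options_per_intersection ** num_intersections


# 5 x 5 grid from the evaluation, with the assumed 4 links and 4 options each.
print(state_dimension(25, 4))     # 100
print(joint_action_count(25, 4))  # 1125899906842624 (about 1.1e15)
```

Under these assumptions the state grows linearly with the number of intersections while a naively enumerated joint action set grows exponentially, which is why a city‑scale deployment would likely need a more compact action encoding or richer representation learning.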

Conclusion and Future Work

The paper demonstrates that a centrally controlled, single‑agent reinforcement learning model can effectively mitigate regional congestion, offering a scalable alternative to multi‑agent schemes. By coupling queue‑based state and reward definitions with probe‑vehicle data, the approach is both data‑efficient and ready for near‑term deployment in smart‑city traffic management platforms. Future research directions include hierarchical control architectures that combine a high‑level global agent with local fine‑tuning modules, incorporation of robust learning techniques to handle delayed or noisy observations, and field trials in live traffic networks to validate the simulation findings under real operational constraints.
