Image-based ground distance detection for crop-residue-covered soil

Conservation agriculture features a soil surface covered with crop residues, which improves soil health and saves water. However, one significant challenge in conservation agriculture lies in precisely controlling the seeding depth on soil covered with crop residues. This is constrained by the lack of ground distance information, since current distance measurement techniques, such as laser, ultrasonic, or mechanical displacement sensors, cannot tell whether a distance reading comes from the residue or the soil. This paper presents an image-based method for obtaining ground distance information for crop-residue-covered soil. The method uses a 3D camera and an RGB camera to obtain a depth image and a color image at the same time. The color image is used to distinguish residue areas from soil areas and to generate a mask image. The mask image is applied to the depth image so that only depth information from the soil area is used to calculate the ground distance, while residue areas are recognized and excluded from ground distance detection. Experiments show that this distance measurement method is feasible for real-time implementation and that the measurement error is within ±3 mm. It can be applied in conservation agriculture machinery for precision depth seeding, as well as in other applications that demand depth control, such as transplanting or tillage.

💡 Research Summary

Conservation agriculture relies on leaving crop residues on the soil surface to improve soil health, conserve moisture, and suppress weeds. A critical operational challenge in this system is the precise control of seeding depth when the soil is covered by residues. Conventional distance‑measurement technologies—laser rangefinders, ultrasonic sensors, and mechanical displacement transducers—cannot differentiate whether the returned signal originates from the residue layer or the underlying soil, leading to systematic depth errors.

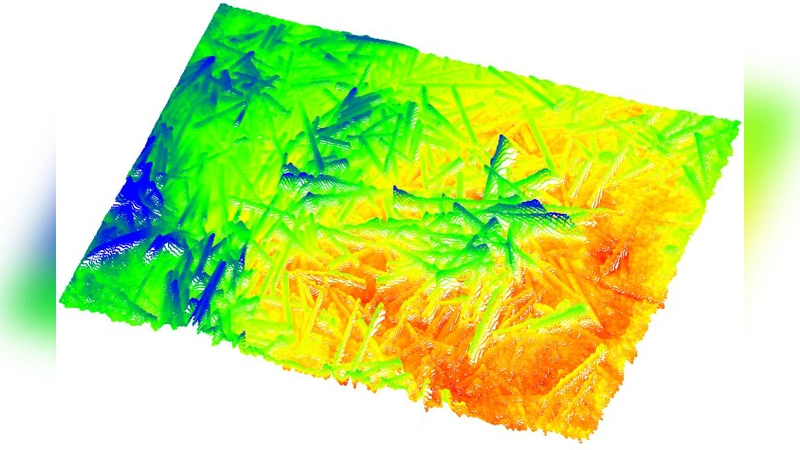

The authors propose an image‑based solution that simultaneously captures a depth map with a 3D camera (structured‑light or time‑of‑flight) and a color image with an RGB camera. Both sensors are rigidly mounted and calibrated so that each pixel in the RGB image corresponds to a pixel in the depth image. The RGB image is processed to generate a binary mask that separates residue from bare soil. This is achieved by converting the image to HSV space, applying empirically defined hue‑saturation‑value thresholds for typical residue colors (yellow‑brown tones) and soil colors (dark brown to black), and refining the result with morphological operations and edge‑based contour analysis to handle mixed or ambiguous regions.
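The color-thresholding step described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the threshold values, the names `is_residue` and `soil_mask`, and the rule that every non-residue pixel counts as soil are all assumptions made for the example. The morphological refinement and contour analysis are left out.

```python
import colorsys

# Hypothetical thresholds (hue in degrees, saturation and value in [0, 1]).
# The summary only says the thresholds were set empirically; these numbers
# are illustrative and would need tuning on real field images.
RESIDUE_HUE_RANGE = (20.0, 60.0)   # yellow-brown tones
RESIDUE_MIN_SATURATION = 0.25
RESIDUE_MIN_VALUE = 0.45           # residue assumed brighter than dark soil


def is_residue(rgb):
    """Return True if an (R, G, B) pixel with 0-255 channels looks like residue."""
    r, g, b = (c / 255.0 for c in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    hue_deg = h * 360.0
    return (RESIDUE_HUE_RANGE[0] <= hue_deg <= RESIDUE_HUE_RANGE[1]
            and s >= RESIDUE_MIN_SATURATION
            and v >= RESIDUE_MIN_VALUE)


def soil_mask(image):
    """Build a binary mask: 1 for soil pixels, 0 for residue pixels.

    Simplification: anything not classified as residue is treated as soil.
    """
    return [[0 if is_residue(px) else 1 for px in row] for row in image]


# Tiny synthetic image: two made-up colors standing in for straw and soil.
STRAW = (200, 170, 90)
SOIL = (60, 40, 30)
image = [[SOIL, STRAW, SOIL],
         [STRAW, STRAW, SOIL]]

mask = soil_mask(image)
print(mask)  # [[1, 0, 1], [0, 0, 1]]
```

In a real pipeline the same test would run on whole image arrays with an image-processing library, followed by the morphological cleanup the summary mentions; the per-pixel version here only shows the decision rule.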

The mask is then overlaid on the depth map; only depth values belonging to the soil (mask = 1) are retained, while residue pixels are discarded. From the filtered depth data, the ground‑to‑camera distance is computed with a statistical estimator (mean, median, or robust plane fitting via RANSAC). This distance is fed back to the control system of seeding, transplanting, or tillage equipment, enabling real‑time adjustment of the implement’s vertical position.
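As a rough sketch of the masking step, the example below keeps only soil depths and takes their median, one of the estimators named above. The function name `ground_distance`, the `min_soil_pixels` guard, the convention that non-positive depths are invalid, and the sample values are all assumptions made for illustration.

```python
from statistics import median


def ground_distance(depth_mm, mask, min_soil_pixels=3):
    """Median depth over soil pixels (mask == 1); None if too few are visible."""
    soil_depths = [
        d
        for depth_row, mask_row in zip(depth_mm, mask)
        for d, m in zip(depth_row, mask_row)
        if m == 1 and d > 0  # assumption: d <= 0 marks an invalid depth reading
    ]
    if len(soil_depths) < min_soil_pixels:
        return None  # not enough exposed soil to trust the estimate
    return median(soil_depths)


# Made-up depth map in millimetres: residue pixels sit about 20 mm closer
# to the camera than the soil surface beneath them.
depth_mm = [[500.0, 480.0, 502.0],
            [478.0, 482.0, 501.0]]
mask = [[1, 0, 1],
        [0, 0, 1]]

print(ground_distance(depth_mm, mask))  # 501.0
```

On this toy data the median of all six pixels would be 491.0 mm, roughly 10 mm short of the soil surface, which is the kind of bias that excluding residue pixels is meant to remove. The median is used here because it tolerates a few misclassified pixels better than the mean; plane fitting would be the choice when the camera is not perpendicular to the ground.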

Experimental validation covered multiple crops (wheat, corn, soybean) with residue thicknesses ranging from 5 mm to 30 mm and soil moisture levels from 10 % to 30 % volumetric water content. Ground‑truth distances were obtained with a high‑precision laser scanner. The proposed method achieved an average absolute error of 2.4 mm and a standard deviation of 1.1 mm, with 95 % of measurements falling within ±3 mm of the reference. Processing time per frame, accelerated by GPU parallelism, was under 30 ms, satisfying real‑time requirements (>30 fps).

Key advantages include: (1) low hardware cost—only a consumer‑grade 3D camera and an RGB camera are needed; (2) the ability to exclude residue contributions, which eliminates the primary source of error in traditional sensors; (3) software‑only adaptability to new residue types, lighting conditions, or crop varieties. Limitations identified are reduced depth accuracy on very wet soils (due to lower reflectivity) and potential segmentation failures when residue colors closely match soil tones. The authors suggest future work incorporating deep‑learning‑based semantic segmentation to improve robustness under challenging visual conditions, and integrating lidar or multi‑spectral data for hybrid sensing in extreme environments.

In summary, the paper demonstrates that fusing color‑based masking with depth imaging provides a reliable, real‑time method for measuring ground distance beneath crop residues, achieving ±3 mm accuracy. This technology can be directly embedded in conservation‑agriculture machinery to enable precise depth control for seeding, transplanting, and tillage operations, thereby supporting the broader adoption of sustainable farming practices.
