Effectiveness of LLMs in Temporal User Profiling for Recommendation

💡 Research Summary

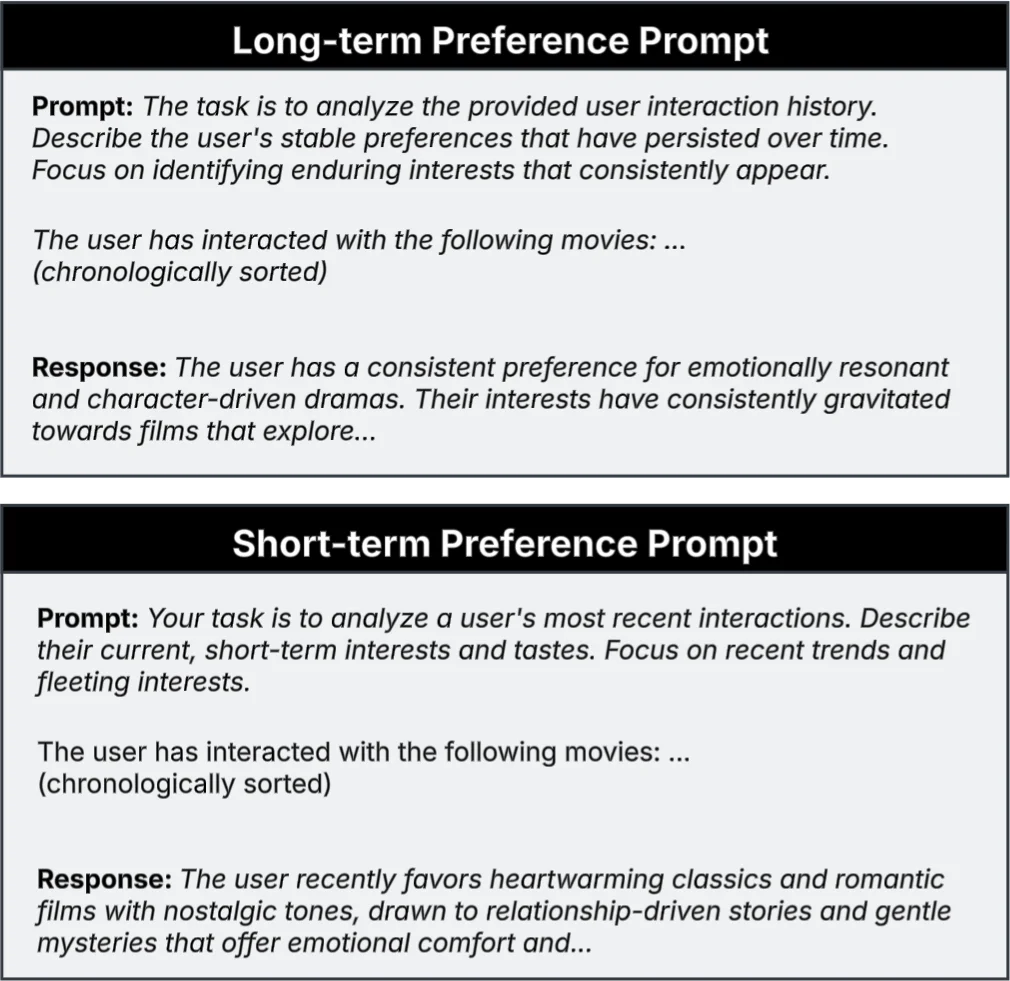

The paper investigates whether large language models (LLMs) can be used to create temporally aware user profiles that improve recommendation accuracy while also providing intrinsic interpretability. Traditional profiling methods—such as averaging item embeddings or using sequential neural models—typically conflate short‑term, fleeting interests with long‑term, stable preferences, which hampers both predictive performance and explainability. To address this, the authors propose a three‑stage pipeline. First, for each user they query an LLM twice with carefully crafted prompts: one that asks the model to summarize recent interactions (short‑term profile) and another that asks for a summary of enduring behaviors across the entire history (long‑term profile). The resulting natural‑language texts (NLshort and NLlong) are then encoded with a pretrained BERT variant (MiniLM‑L6‑v2) to obtain dense vectors rshort and rlong. Second, a learnable attention layer computes scalar weights αshort and αlong from these vectors, allowing the model to dynamically balance the influence of recent versus historical interests. The final user embedding e_u = αshort·rshort + αlong·rlong is concatenated with an item embedding e_i and fed into a multilayer perceptron (MLP) that predicts interaction likelihood via binary cross‑entropy loss.

Experiments are conducted on two Amazon domains: Movies&TV (high activity, average 11.79 interactions per user) and Video Games (low activity, average 4.55 interactions). Baselines include a naïve “Centric” average‑embedding method, popularity ranking, matrix factorization (MF), and a temporal‑fusion model (Temp‑Fusion) that aggregates numerical short‑ and long‑term embeddings without any LLM‑generated text. Evaluation follows a strict per‑user temporal hold‑out protocol, reporting Recall@K and NDCG@K.

Results show that the LLM‑driven temporal profiling (LLM‑TP) yields the most substantial gains in the high‑activity Movies&TV domain: Recall@10 improves by 17% and NDCG@10 by 14% over the Centric baseline, and it also outperforms Temp‑Fusion. In the sparser Video Games domain, improvements are modest; LLM‑TP achieves the highest Recall@20 but is otherwise comparable to or slightly below Temp‑Fusion for smaller K values. The authors attribute this disparity to the fact that, in low‑activity settings, user preferences tend to be more stable, reducing the benefit of finely separating short‑ and long‑term signals.

Beyond accuracy, the approach offers built‑in explainability. The natural‑language profiles are human‑readable, and the learned attention weights directly indicate whether a recommendation is driven more by recent or historical interests. Figure 2 in the paper illustrates how these components could be combined to generate user‑facing explanations, although a full user‑interface and user study are left for future work.

The paper also conducts an ablation study on the Movies&TV dataset. Removing either the short‑term or long‑term textual component degrades performance (≈15% loss in Recall@20), confirming that both temporal signals are complementary. Replacing the MLP scorer with a simple dot product causes the steepest drop, highlighting the importance of non‑linear interaction modeling.

Limitations include the computational cost of invoking LLMs for every user, the sensitivity of results to prompt design, and potential domain‑specific variations in summarization quality. The authors suggest future directions such as employing distilled or quantized LLMs, automated prompt optimization, and systematic user studies to assess explanation satisfaction.

In summary, the study demonstrates that LLM‑generated, temporally disentangled user profiles can substantially boost recommendation performance in domains with rich, dynamic interaction histories while simultaneously providing a transparent mechanism for explaining recommendations. The benefits, however, are context‑dependent, and practical deployment will require careful consideration of computational overhead and domain characteristics.

Comments & Academic Discussion

Loading comments...

Leave a Comment