Hybrid Modeling, Sim-to-Real Reinforcement Learning, and Large Language Model Driven Control for Digital Twins

💡 Research Summary

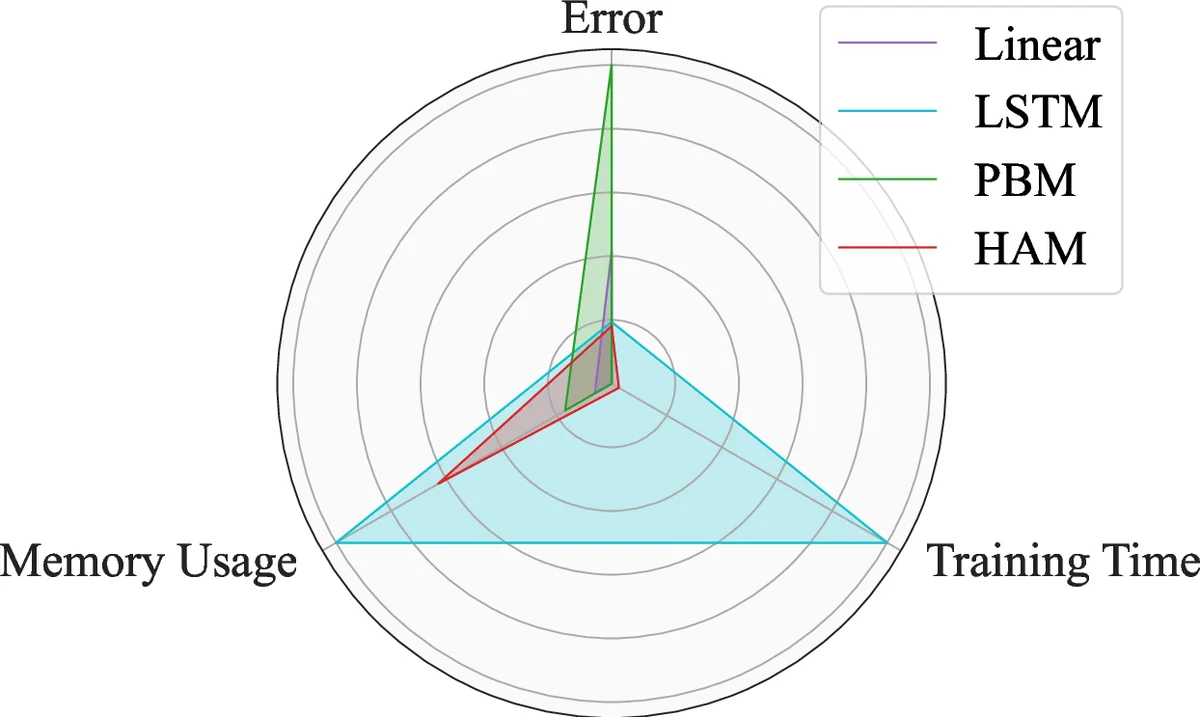

This paper investigates the integration of hybrid modeling, sim‑to‑real reinforcement learning (RL), and large language model (LLM) driven control within a digital‑twin (DT) framework, using a miniature greenhouse as a physical testbed. Four predictive models are developed and compared: a physics‑based model (PBM), a linear autoregressive with exogenous inputs (ARX) model, a Long Short‑Term Memory (LSTM) neural network, and a hybrid analysis and modeling (HAM) approach called CoSTA, which augments the PBM with a data‑driven residual term. The models are evaluated under interpolation (within the training distribution) and extrapolation (outside the training distribution) scenarios. Results show that HAM delivers the most balanced performance, combining low prediction error, good generalization, and modest computational cost. The LSTM achieves the highest accuracy in interpolation but degrades sharply when extrapolating, while the linear ARX model is the least accurate but computationally cheap.
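The idea of augmenting a PBM with a data‑driven residual can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the first‑order heat balance, the coefficients, the synthetic "plant" with an unmodeled heater‑loss term, and the use of a one‑parameter least‑squares fit in place of the learned correction used in the paper. All function names are hypothetical.

```python
import random

def pbm_step(temp, heater, ambient, a=0.1, b=0.5):
    """Assumed physics-based one-step prediction (first-order heat balance)."""
    return temp + a * (ambient - temp) + b * heater

def true_step(temp, heater, ambient):
    """Synthetic stand-in for the real plant: PBM physics plus an unmodeled loss term."""
    return temp + 0.1 * (ambient - temp) + 0.5 * heater - 0.2 * heater * heater

def fit_residual(samples):
    """Closed-form least-squares fit of residual = c * heater**2 (single feature)."""
    num = 0.0
    den = 0.0
    for temp, heater, ambient, next_temp in samples:
        feature = heater * heater
        residual = next_temp - pbm_step(temp, heater, ambient)
        num += feature * residual
        den += feature * feature
    return num / den if den else 0.0

def hybrid_step(temp, heater, ambient, c):
    """Hybrid prediction: physics-based step plus the fitted residual correction."""
    return pbm_step(temp, heater, ambient) + c * heater * heater

# Generate noise-free synthetic training data (seeded for reproducibility).
rng = random.Random(0)
samples = []
for _ in range(200):
    t = rng.uniform(15.0, 30.0)
    u = rng.uniform(0.0, 1.0)
    amb = rng.uniform(5.0, 25.0)
    samples.append((t, u, amb, true_step(t, u, amb)))

c = fit_residual(samples)

# Compare one-step errors at a held-out operating point.
t0, u0, amb0 = 20.0, 0.8, 10.0
pbm_error = abs(true_step(t0, u0, amb0) - pbm_step(t0, u0, amb0))
hybrid_error = abs(true_step(t0, u0, amb0) - hybrid_step(t0, u0, amb0, c))
```

Because the physics term carries most of the dynamics, the data‑driven part only has to learn what the PBM misses, which is one plausible reason a hybrid model can extrapolate better than a purely data‑driven one.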

Three control strategies are implemented: Model Predictive Control (MPC), Deep Q‑Network (DQN) reinforcement learning, and an LLM‑based controller built on GPT‑4. MPC uses a linearized HAM model to solve a quadratic program over a 10‑step horizon, subject to actuator bounds and tracking a temperature set‑point of 22 °C. It provides stable, low‑overshoot regulation. The RL agent learns a discrete policy over heater duty cycles and fan on/off states, with a reward that penalizes temperature deviation and energy use. After extensive offline training (>10⁴ episodes) in the DT, the policy is transferred to the real greenhouse; it initially overshoots but quickly adapts to external disturbances such as ambient temperature shifts and plant growth, demonstrating the importance of DT fidelity for sim‑to‑real transfer. The LLM controller receives the current state and a natural‑language goal, then generates control commands (e.g., “set heater to 30 % and turn the fan on”). Using Retrieval‑Augmented Generation, the LLM can query recent sensor data and historical logs before issuing actions, providing a transparent, human‑centric interface. Although its raw control performance lags behind MPC and RL, the LLM controller excels in interpretability and ease of specifying objectives.
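The RL agent's discrete action space and reward can be sketched as follows. This is only an illustration: the summary does not give the duty‑cycle levels, the reward weights, or the fan energy cost, so `HEATER_LEVELS`, `w_temp`, `w_energy`, and `fan_cost` are hypothetical values, and reusing the 22 °C set‑point for the RL reward is an assumption.

```python
from itertools import product

SETPOINT_C = 22.0                            # set-point quoted for MPC; assumed for RL too
HEATER_LEVELS = (0.0, 0.25, 0.5, 0.75, 1.0)  # hypothetical duty-cycle levels
FAN_STATES = (0, 1)                          # fan off / on

# Discrete action set: every (heater duty cycle, fan state) combination.
ACTIONS = list(product(HEATER_LEVELS, FAN_STATES))

def reward(temp_c, action, w_temp=1.0, w_energy=0.1, fan_cost=0.2):
    """Negative weighted sum of temperature deviation and energy use (weights assumed)."""
    heater, fan = action
    energy = heater + fan_cost * fan
    return -(w_temp * abs(temp_c - SETPOINT_C) + w_energy * energy)

def greedy_action(q_values):
    """Index of the action with the highest estimated Q-value."""
    return max(range(len(q_values)), key=q_values.__getitem__)

# Example: pick the greedy action from a made-up Q-value vector.
example_q = [0.0] * len(ACTIONS)
example_q[3] = 1.5
best = ACTIONS[greedy_action(example_q)]
```

In a DQN, the Q‑value vector passed to `greedy_action` would come from a neural network evaluated on the current state; the ratio of `w_temp` to `w_energy` sets how much temperature error the agent tolerates to save energy.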

The study concludes that (i) hybrid modeling effectively bridges the gap between mechanistic insight and data‑driven flexibility, crucial for accurate DTs; (ii) MPC offers predictability when a reliable model exists, RL offers adaptability when the environment changes, and LLMs enable natural‑language interaction and explainability; and (iii) successful sim‑to‑real RL hinges on the DT’s predictive fidelity, which HAM substantially improves. Future work is suggested on multi‑variable control (humidity, CO₂, light), continuous‑action RL algorithms, safety‑aware LLM policies, and scaling the DT framework to cloud‑based, real‑time deployments.
