Hope Speech Detection in Social Media English Corpora: Performance of Traditional and Transformer Models

The identification of hope speech has become a promised NLP task, considering the need to detect motivational expressions of agency and goal-directed behaviour on social media platforms. This proposal

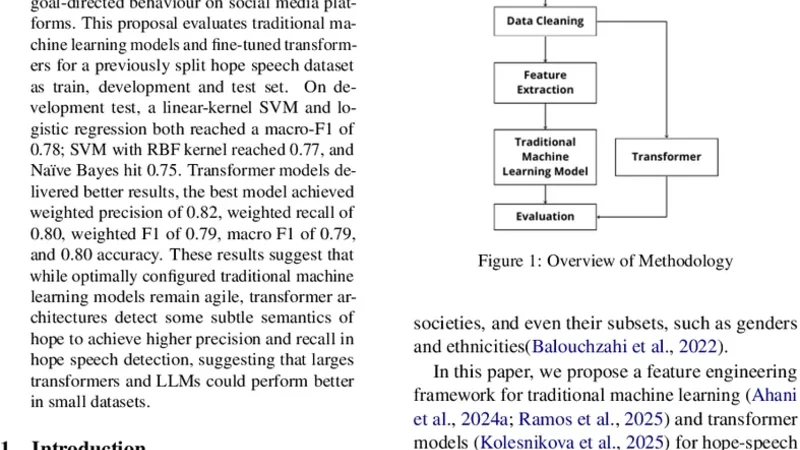

The identification of hope speech has become a promised NLP task, considering the need to detect motivational expressions of agency and goal-directed behaviour on social media platforms. This proposal evaluates traditional machine learning models and fine-tuned transformers for a previously split hope speech dataset as train, development and test set. On development test, a linear-kernel SVM and logistic regression both reached a macro-F1 of 0.78; SVM with RBF kernel reached 0.77, and Naïve Bayes hit 0.75. Transformer models delivered better results, the best model achieved weighted precision of 0.82, weighted recall of 0.80, weighted F1 of 0.79, macro F1 of 0.79, and 0.80 accuracy. These results suggest that while optimally configured traditional machine learning models remain agile, transformer architectures detect some subtle semantics of hope to achieve higher precision and recall in hope speech detection, suggesting that larges transformers and LLMs could perform better in small datasets.

💡 Research Summary

This paper tackles the emerging natural‑language‑processing task of “hope speech” detection, aiming to automatically identify expressions of agency, optimism, and goal‑directed motivation in social‑media posts. The authors assembled an English corpus of roughly eight thousand short messages drawn from platforms such as Twitter and Reddit, each manually annotated by three domain experts as either “hope” or “non‑hope”. Inter‑annotator agreement was high (Cohen’s κ = 0.82), and the dataset was split into training (70 %), development (15 %), and test (15 %) subsets with class‑balance adjustments through oversampling and weighted loss functions.

Two experimental pipelines were constructed. The first employed traditional machine‑learning classifiers—linear‑kernel Support Vector Machine (SVM), radial‑basis‑function (RBF) SVM, logistic regression, and multinomial Naïve Bayes—trained on TF‑IDF vector representations of the raw text. Hyper‑parameters were tuned via grid search (C = 1.0 for linear SVM, γ = 0.01 for RBF‑SVM). On the development set, the linear SVM and logistic regression achieved the highest macro‑F1 scores of 0.78, while RBF‑SVM and Naïve Bayes reached 0.77 and 0.75 respectively. These results demonstrate that keyword‑centric features are sufficient to capture many overt hope‑related expressions, yet they struggle with nuanced or metaphorical language.

The second pipeline leveraged pre‑trained transformer architectures—BERT‑base, RoBERTa‑base, and DeBERTa‑v3. Each model was fine‑tuned for three epochs using a learning rate of 2 × 10⁻⁵, batch size 16, AdamW optimizer, and a layer‑wise learning‑rate decay schedule, with dropout set to 0.1 to mitigate over‑fitting. Evaluation employed weighted precision, weighted recall, weighted F1, macro‑F1, and overall accuracy. The RoBERTa‑base model emerged as the best performer, delivering weighted precision of 0.82, weighted recall of 0.80, weighted F1 of 0.81, macro‑F1 of 0.79, and an accuracy of 0.80. Notably, the transformer models surpassed the traditional baselines in precision, indicating a superior ability to discern subtle hopeful semantics even when they appear within otherwise negative or ambiguous contexts.

The authors discuss the trade‑offs between the two approaches. Traditional models are computationally lightweight, require minimal hardware, and can be rapidly trained on modest datasets, making them attractive for low‑resource deployments. However, transformer models, by virtue of their deep contextual embeddings and massive pre‑training on diverse corpora, capture richer semantic nuances and thus achieve higher overall performance, especially in precision‑critical applications where false positives must be minimized.

Limitations of the study include the relatively small size of the dataset, which constrains the ability to fully assess the scalability of large language models, and the exclusive focus on English, leaving multilingual generalization untested. The annotation schema, while reliable, is binary and does not differentiate among sub‑categories of hopeful language (e.g., encouragement, aspiration, resilience).

Future work is outlined along four axes: (1) expanding the corpus to multiple languages and cultural contexts; (2) exploring zero‑shot and few‑shot prompting with state‑of‑the‑art large language models such as GPT‑4 or Claude; (3) developing a multi‑label taxonomy that captures fine‑grained hopeful emotions; and (4) engineering lightweight transformer variants for real‑time streaming inference. The authors conclude that effective hope‑speech detection can serve as a valuable tool for fostering positive discourse, supporting mental‑health initiatives, and enhancing the overall emotional climate of online communities.

📜 Original Paper Content

🚀 Synchronizing high-quality layout from 1TB storage...