UrbanVLA: A Vision-Language-Action Model for Urban Micromobility

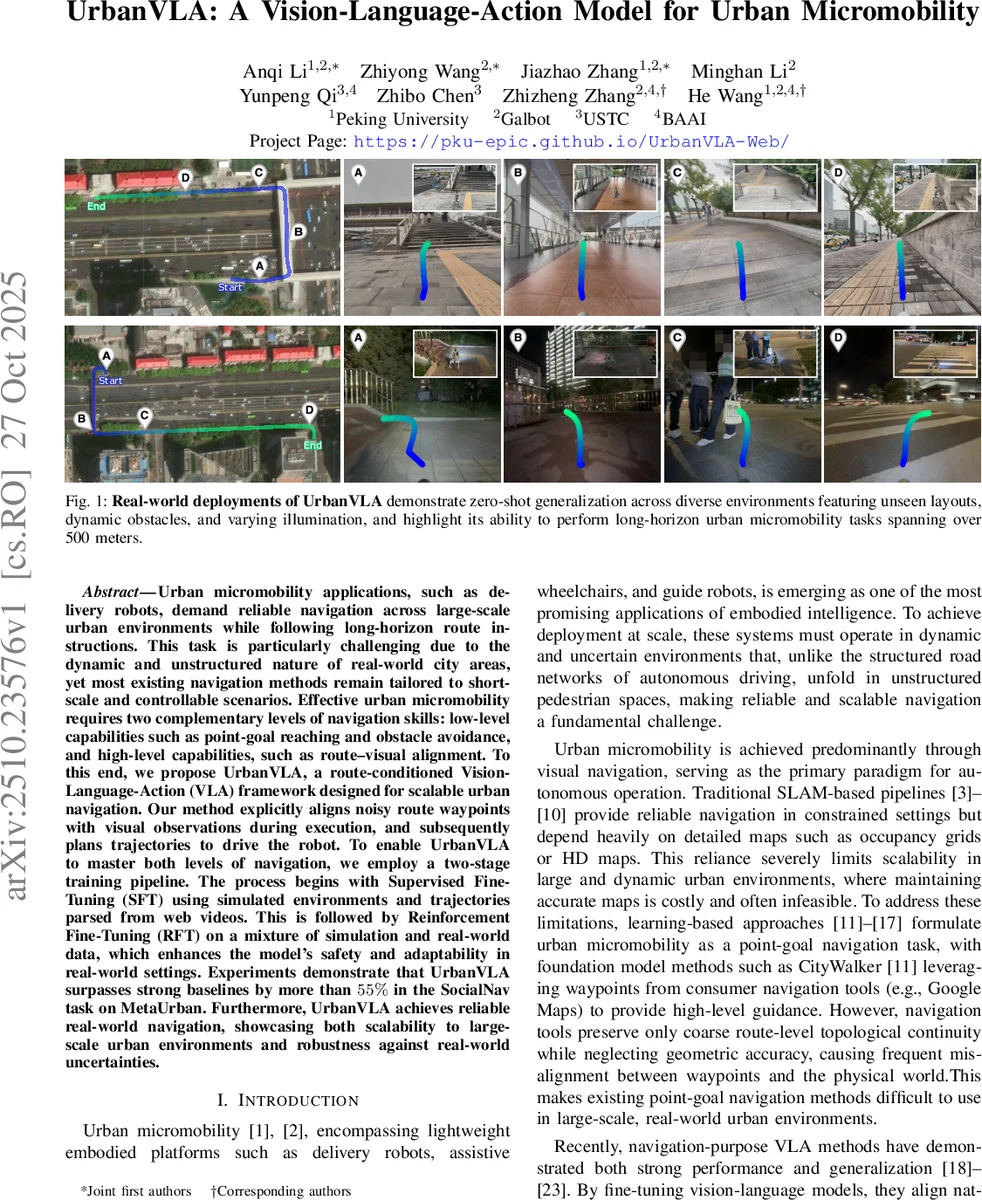

Urban micromobility applications, such as delivery robots, demand reliable navigation across large-scale urban environments while following long-horizon route instructions. This task is particularly challenging due to the dynamic and unstructured nature of real-world city areas, yet most existing navigation methods remain tailored to short-scale and controllable scenarios. Effective urban micromobility requires two complementary levels of navigation skills: low-level capabilities such as point-goal reaching and obstacle avoidance, and high-level capabilities, such as route-visual alignment. To this end, we propose UrbanVLA, a route-conditioned Vision-Language-Action (VLA) framework designed for scalable urban navigation. Our method explicitly aligns noisy route waypoints with visual observations during execution, and subsequently plans trajectories to drive the robot. To enable UrbanVLA to master both levels of navigation, we employ a two-stage training pipeline. The process begins with Supervised Fine-Tuning (SFT) using simulated environments and trajectories parsed from web videos. This is followed by Reinforcement Fine-Tuning (RFT) on a mixture of simulation and real-world data, which enhances the model’s safety and adaptability in real-world settings. Experiments demonstrate that UrbanVLA surpasses strong baselines by more than 55% in the SocialNav task on MetaUrban. Furthermore, UrbanVLA achieves reliable real-world navigation, showcasing both scalability to large-scale urban environments and robustness against real-world uncertainties.

💡 Research Summary

UrbanVLA introduces a route‑conditioned Vision‑Language‑Action framework designed to enable reliable, long‑horizon navigation for urban micromobility platforms such as delivery robots, assistive wheelchairs, and guide robots. Traditional SLAM pipelines rely heavily on high‑definition maps, which are costly to maintain and brittle in dynamic, unstructured pedestrian environments. Recent learning‑based point‑goal methods improve low‑level control but suffer from misalignment between coarse navigation waypoints (e.g., from Google Maps) and the physical world, limiting scalability. UrbanVLA addresses both gaps by (1) converting high‑level route information (“roadbooks”) into structured natural‑language instructions and explicitly aligning these with multi‑view visual observations, and (2) employing a two‑stage training pipeline that first leverages supervised fine‑tuning (SFT) on simulated trajectories and web‑scale navigation videos, then refines the policy with offline reinforcement learning (RFT) using Implicit Q‑Learning (IQL) on a hybrid simulation‑real dataset.

In the route‑conditioning step, the target route is resampled every 2 m, yielding about 20 waypoints for the next 40 m. A corner‑detection algorithm segments the route into blocks, from which distance‑and‑direction cues (e.g., “turn right in 30 m”) are extracted. These cues are embedded into a language template to form the instruction I. Visual inputs from four RGB cameras are processed by two pretrained encoders (DINOv2 and SigLIP), concatenated, down‑sampled via grid pooling, and projected into the embedding space of a large language model (Qwen2). The LLM receives both language tokens (EL) and visual tokens (E1:C1:T) and produces two streams: an action token decoded into a SE(2) trajectory τ, and, for VideoQA tasks, a sequence of answer tokens.

The SFT stage uses a novel Heuristic Trajectory Lifting (HTL) pipeline to generate realistic (roadbook, observation, trajectory) triples from both simulation and real‑world data. Raw real trajectories are denoised with a Savitzky‑Golay filter, corner points are identified, Gaussian noise is added to each segment to model corridor‑level flexibility, and the resulting noisy segments are merged and resampled. This prevents the model from over‑fitting to idealized paths and forces it to rely on visual cues. Training minimizes mean‑squared error between predicted and ground‑truth waypoints.

RFT applies IQL, an offline RL algorithm that mitigates over‑optimistic Q‑value estimates and provides conservative updates for out‑of‑distribution samples. The reward combines trajectory efficiency (shorter distance and time) with safety penalties for collisions, pedestrian violations, and traffic‑signal infractions. By fine‑tuning on a mixed dataset of expert demonstrations from MetaUrban and real‑world runs, UrbanVLA learns to respect social norms while maintaining efficient navigation.

Experimental evaluation on MetaUrban‑test and MetaUrban‑unseen benchmarks shows that UrbanVLA outperforms strong baselines (NavFoM, CityWalker) by 55 % and 56 % success rates respectively in the SocialNav task. In long‑horizon scenarios exceeding 500 m, the model achieves a 0.78 goal‑arrival rate, handling dynamic obstacles and varying illumination without collisions. Real‑world trials with a 3 kg delivery robot demonstrate zero‑shot generalization across unseen city layouts, successfully navigating intersections, sidewalks, and roadways while obeying traffic signals and yielding to pedestrians.

Key contributions are: (1) the first route‑conditioned VLA that fuses high‑level navigation tools with vision‑language policies, (2) the HTL method for realistic simulation‑to‑real data generation, and (3) IQL‑based reinforcement fine‑tuning that enhances safety‑critical behaviors. Limitations include reliance on a fixed camera rig and incomplete handling of complex traffic regulations such as one‑way streets or bike lanes. Future work will explore multimodal sensor fusion (LiDAR, ultrasonic) and rule‑based reasoning modules to further broaden the applicability of UrbanVLA to diverse urban micromobility scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment