From Failure Modes to Reliability Awareness in Generative and Agentic AI System

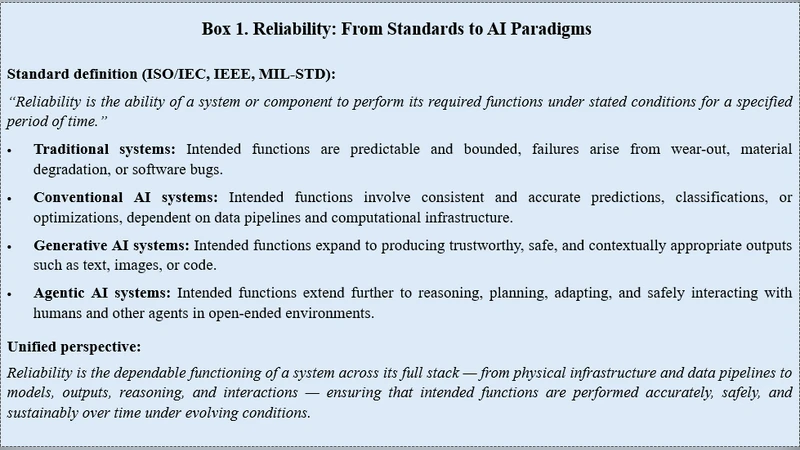

This chapter bridges technical analysis and organizational preparedness by tracing the path from layered failure modes to reliability awareness in generative and agentic AI systems. We first introduce an 11-layer failure stack, a structured framework for identifying vulnerabilities ranging from hardware and power foundations to adaptive learning and agentic reasoning. Building on this, the chapter demonstrates how failures rarely occur in isolation but propagate across layers, creating cascading effects with systemic consequences. To complement this diagnostic lens, we develop the concept of awareness mapping: a maturity-oriented framework that quantifies how well individuals and organizations recognize reliability risks across the AI stack. Awareness is treated not only as a diagnostic score but also as a strategic input for AI governance, guiding improvement and resilience planning. By linking layered failures to awareness levels and further integrating this into Dependability-Centred Asset Management (DCAM), the chapter positions awareness mapping as both a measurement tool and a roadmap for trustworthy and sustainable AI deployment across mission-critical domains.

💡 Research Summary

This chapter bridges technical diagnostics with organizational preparedness by introducing a layered failure taxonomy for generative and agentic AI systems and by proposing a maturity‑driven “awareness mapping” framework that quantifies how well individuals and organizations recognize reliability risks across the stack. The authors first define an eleven‑layer failure stack that spans from the physical foundation (hardware, power, cooling) through system and network services, data pipelines, model design and training, and inference deployment, up to agentic reasoning and autonomous decision loops. Each layer is described with concrete failure modes, for example power fluctuations causing GPU errors, misconfigured virtualization leading to latency spikes, biased or mislabeled training data injecting systematic error, over‑parameterized models that become unstable, version drift in inference services, and reward hacking or goal misalignment in autonomous agents.
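To make the taxonomy concrete, the sketch below stores the example failure modes above in a layer-indexed catalog and traces a failure back to the layer that lists it. It is a minimal illustration under stated assumptions: the summary names only these six layer groups rather than all eleven layers, so `FAILURE_CATALOG`, its keys, and `locate_layer` are hypothetical names, not the authors' notation.

```python
from typing import Dict, List, Optional

# Hypothetical, partial catalog. The summary names only these layer groups,
# not all eleven layers, so the keys are illustrative, ordered bottom-up.
FAILURE_CATALOG: Dict[str, List[str]] = {
    "physical foundation": ["power fluctuation", "GPU error"],
    "system and network services": ["misconfigured virtualization", "latency spike"],
    "data pipelines": ["biased training data", "mislabeled training data"],
    "model design and training": ["over-parameterized model instability"],
    "inference deployment": ["version drift"],
    "agentic reasoning": ["reward hacking", "goal misalignment"],
}


def locate_layer(failure_mode: str) -> Optional[str]:
    """Return the layer group that lists the given failure mode, or None."""
    for layer, modes in FAILURE_CATALOG.items():
        if failure_mode in modes:
            return layer
    return None


if __name__ == "__main__":
    print(locate_layer("version drift"))  # inference deployment
```

A flat dictionary keeps the lookup trivial; a fuller version would carry all eleven layers and attach detection signals to each failure mode.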

A central claim is that failures rarely remain isolated; instead, a disturbance in a lower layer propagates upward, creating cascading effects that amplify system‑wide risk. The authors illustrate several propagation scenarios. In one, a power outage triggers GPU throttling, which corrupts model checkpoints; the corrupted checkpoints feed erroneous parameters into downstream inference, producing misleading outputs that autonomous agents may then act upon, magnifying the original fault. Such chains are especially dangerous in mission‑critical domains, where downtime or erroneous decisions carry a high societal cost.
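The cascade described above can be modeled as a directed "triggers" graph and walked breadth-first to list every downstream effect of a root fault. This is a minimal sketch assuming the single example chain from the text; the event labels, `TRIGGERS`, and `cascade` are hypothetical, and real propagation would branch across layers rather than form one line.

```python
from collections import deque
from typing import Dict, List

# Hypothetical propagation edges taken from the example chain in the text.
TRIGGERS: Dict[str, List[str]] = {
    "power outage": ["GPU throttling"],
    "GPU throttling": ["corrupted checkpoint"],
    "corrupted checkpoint": ["erroneous parameters"],
    "erroneous parameters": ["misleading output"],
    "misleading output": ["agent acts on misleading output"],
}


def cascade(root: str) -> List[str]:
    """Breadth-first list of every effect reachable from a root fault."""
    seen = {root}
    order: List[str] = []
    queue = deque([root])
    while queue:
        event = queue.popleft()
        for effect in TRIGGERS.get(event, []):
            if effect not in seen:  # guard against cycles in larger graphs
                seen.add(effect)
                order.append(effect)
                queue.append(effect)
    return order


if __name__ == "__main__":
    for step in cascade("power outage"):
        print(step)
```

The `seen` set matters once the graph has shared or cyclic dependencies, for example two faults that both corrupt the same checkpoint store.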

To move beyond detection, the chapter introduces “awareness mapping,” a five‑stage maturity model (Initial, Recognized, Managed, Optimized, Strategic). At each stage, quantitative metrics (risk detection rate, mean time to diagnose, mitigation coverage) and qualitative indicators (existence of policies, training programs, governance structures) are assessed. The model gauges both the depth of technical insight (e.g., ability to trace a failure to a specific layer) and the breadth of organizational processes (e.g., cross‑functional incident response).
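The summary lists the inputs to the maturity model but not how they are combined, so the following is only a sketch of one possible scoring rule. It assumes equal weights across the three quantitative metrics and the qualitative indicators, a hypothetical 72-hour ceiling for normalizing mean time to diagnose, and equal-width bins over the five stages; `LayerAssessment`, `awareness_score`, and `maturity_stage` are illustrative names.

```python
from dataclasses import dataclass

STAGES = ["Initial", "Recognized", "Managed", "Optimized", "Strategic"]


@dataclass
class LayerAssessment:
    detection_rate: float       # fraction of known risks detected, 0..1
    mttd_hours: float           # mean time to diagnose, in hours
    mitigation_coverage: float  # fraction of failure modes with a mitigation, 0..1
    has_policy: bool
    has_training: bool
    has_governance: bool


def awareness_score(a: LayerAssessment, mttd_ceiling_hours: float = 72.0) -> float:
    """Hypothetical equal-weight score in [0, 1]; weights and ceiling are assumptions."""
    # Faster diagnosis scores higher; anything at or beyond the ceiling scores 0.
    mttd_score = max(0.0, 1.0 - a.mttd_hours / mttd_ceiling_hours)
    # Qualitative indicators: share of the three that are in place.
    qualitative = (a.has_policy + a.has_training + a.has_governance) / 3
    return (a.detection_rate + mttd_score + a.mitigation_coverage + qualitative) / 4


def maturity_stage(score: float) -> str:
    """Map a [0, 1] score onto the five stages using equal-width bins."""
    index = min(len(STAGES) - 1, int(score * len(STAGES)))
    return STAGES[index]


if __name__ == "__main__":
    data_layer = LayerAssessment(0.8, 18.0, 0.6, True, True, False)
    score = awareness_score(data_layer)
    print(round(score, 3), maturity_stage(score))
```

Scoring each layer separately, rather than the organization as a whole, preserves the per-layer visibility gaps that the DCAM integration relies on.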

The authors then embed awareness mapping into Dependability‑Centred Asset Management (DCAM), a governance framework that treats AI artifacts (data sets, models, compute resources) as assets with explicit reliability objectives. DCAM defines a continuous loop: risk identification → assessment → mitigation → monitoring → feedback. Awareness scores feed directly into this loop, highlighting which layers suffer from low visibility and prompting targeted investments such as enhanced monitoring for hardware health, data quality dashboards, model interpretability tools, or policy revisions for autonomous agent behavior. By aligning the maturity scores with DCAM’s risk‑budgeting process, organizations can prioritize remediation actions that yield the greatest reduction in systemic vulnerability.
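One way to picture awareness scores feeding the DCAM loop is sketched below: the five loop phases form a cycle, and layers are ranked by an "awareness gap" weighted by criticality before a remediation budget is split proportionally. The priority rule (criticality × (1 − awareness)), the proportional split, and all layer names and numbers are assumptions for illustration; the summary does not specify DCAM's actual risk-budgeting formula.

```python
from typing import Dict, List, Tuple

DCAM_LOOP = ["identification", "assessment", "mitigation", "monitoring", "feedback"]


def next_phase(phase: str) -> str:
    """Advance around the continuous loop; feedback returns to identification."""
    i = DCAM_LOOP.index(phase)
    return DCAM_LOOP[(i + 1) % len(DCAM_LOOP)]


def prioritize(awareness: Dict[str, float],
               criticality: Dict[str, float]) -> List[Tuple[str, float]]:
    """Rank layers by criticality * (1 - awareness), a hypothetical priority rule."""
    gaps = {layer: criticality[layer] * (1.0 - score) for layer, score in awareness.items()}
    return sorted(gaps.items(), key=lambda kv: kv[1], reverse=True)


def allocate_budget(ranked: List[Tuple[str, float]], total: float) -> Dict[str, float]:
    """Split a remediation budget in proportion to each layer's weighted gap."""
    total_gap = sum(gap for _, gap in ranked)
    if total_gap == 0:
        return {layer: 0.0 for layer, _ in ranked}
    return {layer: total * gap / total_gap for layer, gap in ranked}


if __name__ == "__main__":
    awareness = {"hardware": 0.3, "data pipelines": 0.7, "agentic reasoning": 0.2}
    criticality = {"hardware": 1.0, "data pipelines": 0.8, "agentic reasoning": 0.9}
    ranked = prioritize(awareness, criticality)
    print(ranked[0][0])  # layer with the largest weighted awareness gap
    print(allocate_budget(ranked, 100.0))
```

A proportional split keeps some investment flowing to every low-visibility layer; a greedy variant that funds only the top-ranked layers is an equally plausible reading of "prioritize".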

Key insights distilled from the chapter are: (1) AI system failures are fundamentally multi‑layered and often cascade, making single‑point diagnostics insufficient; (2) organizational awareness of these layers is a decisive factor in early detection and containment; (3) quantifying awareness and integrating it with a structured asset‑management framework transforms reliability from an ad‑hoc concern into a strategic capability; and (4) the combined approach provides a concrete roadmap for trustworthy, sustainable AI deployment in high‑stakes environments such as healthcare, finance, and autonomous transportation.

In conclusion, the authors argue that the synergy of an eleven‑layer failure taxonomy, a maturity‑based awareness mapping, and DCAM creates a comprehensive reliability engineering methodology for generative and agentic AI. This methodology not only identifies where and how failures can emerge but also equips organizations with the governance tools to monitor, mitigate, and continuously improve AI reliability, thereby supporting long‑term trust and compliance in mission‑critical applications.
