A Quantitative Approach to Estimating Bias, Favouritism and Distortion in Scientific Journalism

Although traditionally not considered part of the scientific method, science communication increasingly plays a pivotal role in shaping scientific practice. Researchers are now frequently compelled to publicise their findings in response to institutional impact metrics and competitive grant environments. This shift underscores the growing influence of media narratives on both scientific priorities and public perception. Against a current trend of personality-driven reporting, we examine patterns in science communication that may indicate different types of bias towards particular topics and researchers. We applied our methodology to a corpus of media coverage from three of the most prominent scientific media outlets, Wired, Quanta, and New Scientist, spanning the past 5 to 10 years. By mapping linguistic patterns, citation flows, and topical convergence, we aimed to quantify the dimensions and degree of bias that influence the credibility of scientific journalism. In doing so, we seek to illuminate the systemic features that shape science communication today and to interrogate their broader implications for epistemic integrity and public accountability in science. We present our results with anonymised journalist names, but conclude that personality-driven media coverage distorts both science and its practice, flattening rather than expanding the perception of scientific coverage.

Keywords: selective sourcing, bias, scientific journalism, Quanta, Wired, New Scientist, fairness, balance, neutrality, standard practices, distortion, personal promotion, communication, media outlets.

💡 Research Summary

The paper presents a systematic, data‑driven investigation of bias, favouritism, and distortion in contemporary scientific journalism. The authors assembled a corpus of science‑related articles published between 2014 and 2024 from three of the most influential English‑language outlets: Wired, Quanta Magazine, and New Scientist. For each article they extracted a JSON‑encoded dictionary of person‑mention counts, then applied a three‑tier blacklist (deceased scientists, non‑scientist public figures, and spurious single‑token entries) to isolate mentions of living researchers only. Journalists’ names were anonymised (Author XXX) to protect privacy while preserving the relational structure of the data.
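The filtering step described above can be sketched as follows. This is a minimal illustration, not the authors' code: the function name, the blacklist contents, and the sample record are hypothetical, and the "single-token" rule is interpreted here as dropping any name with fewer than two whitespace-separated parts.

```python
import json

# Hypothetical blacklist tiers; the names are illustrative placeholders only.
DECEASED = {"Albert Einstein", "Isaac Newton"}
NON_SCIENTISTS = {"Elon Musk"}


def filter_living_researchers(mentions_json: str) -> dict:
    """Drop blacklisted names and single-token entries from a mention-count dict."""
    counts = json.loads(mentions_json)
    kept = {}
    for name, n in counts.items():
        if name in DECEASED or name in NON_SCIENTISTS:
            continue  # tiers 1 and 2: deceased scientists, non-scientist public figures
        if len(name.split()) < 2:
            continue  # tier 3: spurious single-token entries such as "Darwin"
        kept[name] = n
    return kept


# Hypothetical per-article record of person-mention counts.
raw = '{"Albert Einstein": 3, "Elon Musk": 2, "Darwin": 1, "Jane Example": 4}'
filtered = filter_living_researchers(raw)
print(filtered)
```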

Quantitative analysis began with the construction of per‑outlet mention frequency distributions. The authors computed Gini coefficients to assess inequality in attention: Wired (0.62), Quanta (0.55), and New Scientist (0.48). Higher Gini values indicate that a small subset of scientists receives a disproportionate share of coverage, suggesting structural concentration of editorial focus.
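For reference, a Gini coefficient over per-scientist mention counts can be computed as in the sketch below. It uses the standard sorted-rank formula; the summary does not say which estimator the authors used, so treat this as one reasonable choice, and the sample inputs as made up.

```python
def gini(counts):
    """Gini coefficient of non-negative counts: 0 means perfectly equal attention."""
    xs = sorted(counts)
    n = len(xs)
    total = sum(xs)
    if n == 0 or total == 0:
        return 0.0
    # G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n, with ranks i = 1..n
    weighted = sum(i * x for i, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


equal = gini([1, 1, 1, 1])          # every scientist mentioned equally often
concentrated = gini([0, 0, 0, 10])  # one scientist receives all mentions
print(equal, concentrated)
```

With this estimator the maximum for n scientists is (n - 1) / n rather than exactly 1, which matters when comparing outlets with very different numbers of distinct scientists.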

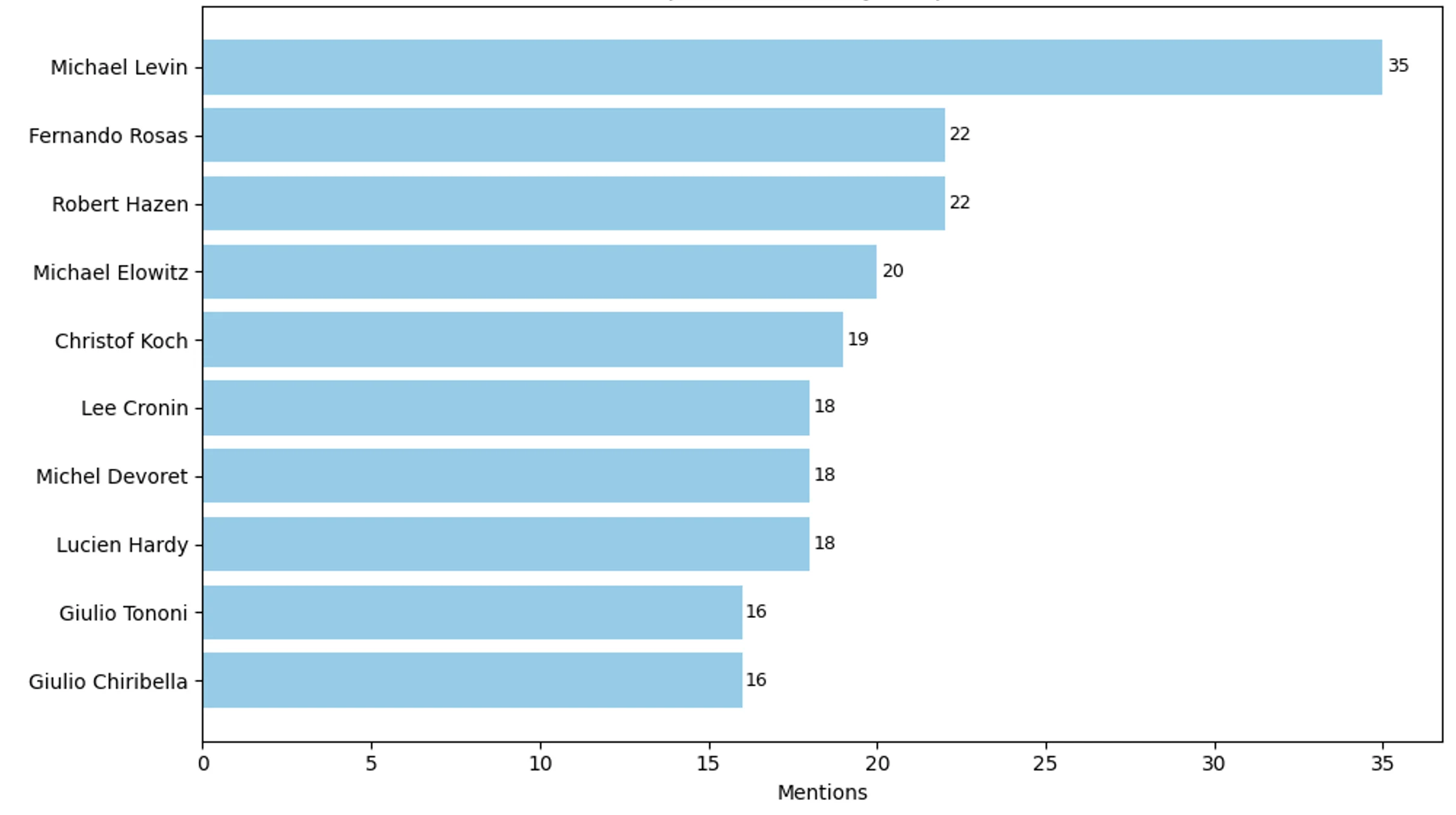

To capture “repetition bias”, the tendency of a journalist to repeatedly spotlight the same individual, the authors introduced a Repetition Bias Index (RBI). RBI aggregates the number of distinct articles in which a given journalist mentions the same living scientist, normalised by overall article volume. The results reveal that a minority of journalists (the top 5% by article count) exhibit markedly elevated RBI scores, repeatedly featuring figures such as Lee Cronin, Giulio Tononi, and Christof Koch across more than fifteen articles each. Manual validation confirmed that these repeated mentions often coincide with ongoing controversies, implying that editorial choices may amplify contentious debates.
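The summary does not give an exact formula for RBI, so the sketch below is only one plausible reading: for each (journalist, scientist) pair, the number of distinct articles by that journalist mentioning that scientist, divided by the journalist's total article count. The function name, input layout, and sample data are all assumptions.

```python
from collections import defaultdict


def repetition_bias(articles):
    """Map (journalist, scientist) -> share of the journalist's articles naming that scientist.

    `articles` is an iterable of (article_id, journalist, scientists) tuples.
    This is an assumed reading of RBI, not the authors' definition.
    """
    articles_by_journalist = defaultdict(set)
    articles_by_pair = defaultdict(set)
    for article_id, journalist, scientists in articles:
        articles_by_journalist[journalist].add(article_id)
        for scientist in set(scientists):
            articles_by_pair[(journalist, scientist)].add(article_id)
    return {
        pair: len(ids) / len(articles_by_journalist[pair[0]])
        for pair, ids in articles_by_pair.items()
    }


# Hypothetical, anonymised sample data.
sample = [
    ("a1", "Author 001", {"Scientist A"}),
    ("a2", "Author 001", {"Scientist A", "Scientist B"}),
    ("a3", "Author 001", {"Scientist A"}),
    ("a4", "Author 002", {"Scientist B"}),
]
rbi = repetition_bias(sample)
print(rbi)
```

Under this reading a journalist with a single article trivially scores 1.0 for every scientist mentioned, so a minimum article-count threshold would be needed before ranking journalists.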

Beyond raw counts, the study performed topic and sentiment analyses on article titles. Using scikit‑learn’s CountVectorizer (excluding stop‑words and tokens shorter than three characters), the authors generated term‑frequency lists for each outlet. Wired’s top terms (“AI”, “quantum”, “data”) reveal a technology‑centric narrative; Quanta’s (“theory”, “proof”, “quantum”) reflect a focus on foundational and mathematical science; New Scientist’s (“climate”, “health”, “cosmos”) indicate broader, more societally oriented coverage. Sentiment was measured with the VADER lexicon, yielding compound scores ranging from –1 (highly negative) to +1 (highly positive). Quanta and New Scientist display modestly positive average scores, whereas Wired shows a wider spread, suggesting a more varied and at times more critical tone.
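The title term-frequency step can be approximated with the standard library alone, as sketched below. This is a stand-in for the CountVectorizer configuration described above, not a reproduction of it: the stop-word set is a tiny hypothetical subset, the tokeniser is a simple regular expression, and the titles are invented.

```python
import re
from collections import Counter

# Tiny illustrative stop-word set; a real pipeline would use a full English list.
STOP_WORDS = {"the", "and", "for", "with", "how", "why", "what", "are", "that", "this", "from"}


def top_terms(titles, k=3, min_len=3):
    """Return the k most frequent title tokens, skipping stop-words and short tokens."""
    counts = Counter()
    for title in titles:
        for token in re.findall(r"[a-z0-9]+", title.lower()):
            if len(token) >= min_len and token not in STOP_WORDS:
                counts[token] += 1
    return counts.most_common(k)


# Invented example titles.
titles = [
    "The Quantum Proof That Changed the Theory",
    "A New Proof for an Old Quantum Puzzle",
    "Why Quantum Theory Still Surprises",
]
terms = top_terms(titles)
print(terms)
```

A strict three-character minimum drops two-letter tokens such as "ai", so a pipeline reporting "AI" as a top term presumably treats such tokens differently from this sketch.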

The authors also examined editorial breadth by counting total mentions of living scientists and the number of distinct scientists mentioned at least once. Wired’s higher total mention count combined with a lower distinct‑scientist count underscores a narrower, more concentrated pool of sources compared with the other outlets. Reverse cumulative distribution functions (1‑CDF) of mention frequencies further illustrate the long‑tail behavior: a steep drop‑off for Wired indicates that many scientists receive only a single mention, while Quanta and New Scientist maintain a flatter tail, reflecting more diversified coverage.
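A reverse cumulative distribution of mention counts can be computed as in the sketch below, which takes 1‑CDF literally as the fraction of scientists with strictly more than k mentions. The function name and the sample counts are hypothetical.

```python
from collections import Counter


def reverse_cdf(mention_counts):
    """Return sorted (k, P(X > k)) pairs for a list of per-scientist mention counts."""
    n = len(mention_counts)
    if n == 0:
        return []
    freq = Counter(mention_counts)
    result = []
    cumulative = 0
    for k in sorted(freq):
        cumulative += freq[k]                   # scientists with at most k mentions
        result.append((k, 1 - cumulative / n))  # share with more than k mentions
    return result


# Hypothetical outlet: three scientists mentioned once, one twice, one five times.
curve = reverse_cdf([1, 1, 1, 2, 5])
print(curve)
```

Plotted on log-log axes, such a curve makes the long tail visible: a sharp early drop means most scientists sit at the lowest mention counts.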

Key insights emerging from the analysis are threefold. First, structural bias is evident in the unequal distribution of attention, as quantified by Gini coefficients and RBI scores. Second, the repeated elevation of a few scientists by a small cadre of journalists can shape public perception, influence funding decisions, and potentially steer research agendas toward topics that enjoy media favour rather than intrinsic scientific merit. Third, outlet‑specific thematic and affective signatures suggest that readers receive divergent narratives about the same scientific developments depending on the source.

The paper concludes by arguing that systematic, quantitative tools such as those presented are essential for monitoring and improving the integrity of science communication. By exposing hidden patterns of favouritism and distortion, the methodology can inform editorial policies, guide funding agencies, and empower readers to critically assess media coverage. The authors recommend extending the framework to non‑English outlets, social‑media platforms, and audio‑visual formats, and developing actionable guidelines to mitigate identified biases, thereby strengthening the epistemic reliability of public scientific discourse.
