Directive, Metacognitive or a Blend of Both? A Comparison of AI-Generated Feedback Types on Student Engagement, Confidence, and Outcomes

Feedback is one of the most powerful influences on student learning, with extensive research examining how best to implement it in educational settings. Increasingly, feedback is being generated by artificial intelligence (AI), offering scalable and adaptive responses. Two widely studied approaches are directive feedback, which gives explicit explanations and reduces cognitive load to speed up learning, and metacognitive feedback, which prompts learners to reflect, track their progress, and develop self-regulated learning (SRL) skills. While both approaches have clear theoretical advantages, their comparative effects on engagement, confidence, and quality of work remain underexplored. This study presents a semester-long randomised controlled trial with 329 students in an introductory design and programming course using an adaptive educational platform. Participants were assigned to receive directive, metacognitive, or hybrid AI-generated feedback, with the hybrid condition blending elements of the other two. Results showed that revision behaviour differed across feedback conditions, with Hybrid prompting more revisions than either Directive or Metacognitive. Confidence ratings were uniformly high, and resource quality outcomes were comparable across conditions. These findings highlight the promise of AI in delivering feedback that balances clarity with reflection. Hybrid approaches, in particular, show potential to combine actionable guidance for immediate improvement with opportunities for self-reflection and metacognitive growth.

💡 Research Summary

This paper presents a semester‑long randomized controlled trial that directly compares three AI‑generated feedback modalities—directive, metacognitive, and a hybrid blend of the two—within an authentic university design and programming course. A total of 329 students (predominantly undergraduates, with a large proportion of international learners and many for whom English is a second language) were randomly assigned to receive one of the three feedback types while creating learning resources (multiple‑choice questions, explanatory notes, or flip‑cards) on the RiPPLE adaptive learning platform.

All feedback followed a uniform three‑part template (Summary, Strengths, Suggestions for Improvement); only the “Suggestions” section varied across conditions. Directive feedback delivered explicit error identification and concrete revision instructions, aiming to reduce cognitive load and accelerate early performance. Metacognitive feedback omitted direct solutions and instead posed reflective prompts, encouraging self‑assessment, planning, and monitoring in line with self‑regulated learning (SRL) theory. The hybrid condition combined both approaches, providing clear guidance alongside reflective questions.
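The template described above can be pictured as a small data structure in which two parts are shared and only the suggestions depend on the condition. The following is a minimal sketch under stated assumptions, not the prompts or code used in the study: the class name `Feedback`, the function `build_feedback`, and the condition strings are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feedback:
    """Three-part feedback: only `suggestions` differs across conditions."""
    summary: str
    strengths: str
    suggestions: str


def build_feedback(condition: str, summary: str, strengths: str,
                   directive_text: str, reflective_prompt: str) -> Feedback:
    """Assemble feedback for one condition (hypothetical helper).

    directive_text: an explicit instruction for revising the work.
    reflective_prompt: a question that invites self-assessment.
    """
    if condition == "directive":
        suggestions = directive_text
    elif condition == "metacognitive":
        suggestions = reflective_prompt
    elif condition == "hybrid":
        # Hybrid: clear guidance followed by a reflective question.
        suggestions = f"{directive_text} {reflective_prompt}"
    else:
        raise ValueError(f"unknown condition: {condition!r}")
    return Feedback(summary, strengths, suggestions)
```

Holding the summary and strengths fixed means any difference in student behaviour can be attributed to the suggestions section alone, which is the point of the uniform template.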

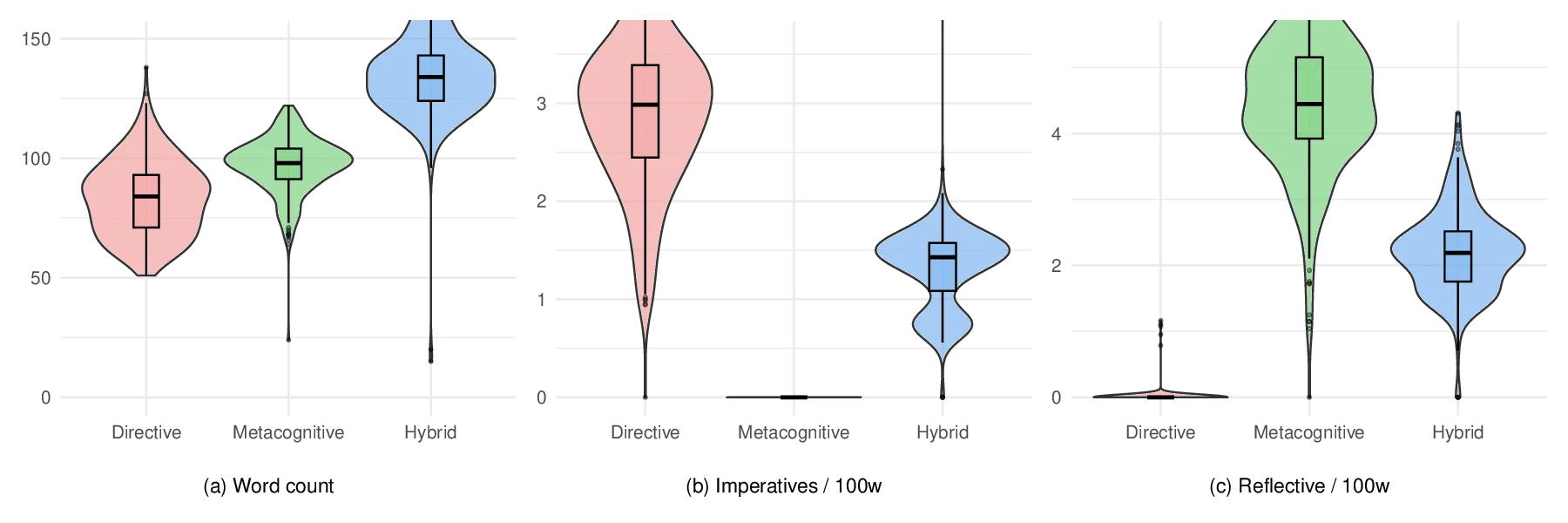

The study addressed four research questions: (1) linguistic and structural differences among feedback types; (2) impact on student engagement (interaction time, actions taken on feedback, workflow transitions); (3) effect on confidence in the quality of one’s work; and (4) influence on the quality of revised submissions. Linguistic analysis confirmed that directive feedback was shortest and most imperative, metacognitive feedback was longest with a higher proportion of interrogative and reflective language, and hybrid feedback occupied an intermediate position.

Engagement data, captured via detailed interaction logs, revealed that the hybrid group performed the greatest number of revisions, spent the most time interacting with feedback, and exhibited the highest frequency of workflow transitions. Directive feedback prompted quick, surface‑level edits, while metacognitive feedback led to deeper but fewer revisions. Confidence ratings, collected through post‑feedback surveys, were uniformly high across all groups (average >4 on a 5‑point scale), indicating that learners trusted AI feedback regardless of its style.

Quality of the final revised resources was assessed by expert raters using a standardized rubric. No statistically significant differences emerged among the three conditions; average scores ranged from 78 to 81 out of 100, suggesting that while hybrid feedback spurred more iterative work, the ultimate learning product was comparable across modalities.

The authors interpret these findings as evidence that a blended feedback approach can harness the strengths of both clarity and reflection: learners receive actionable guidance while simultaneously developing metacognitive awareness. This dual stimulus appears to motivate more extensive revision cycles without compromising confidence or final output quality.

Limitations include the heavy representation of non‑native English speakers, which may have affected comprehension of reflective prompts; the confinement to a single course and a specific type of creative assessment; and the focus on short‑term outcomes within a single semester, leaving long‑term transfer effects unexamined.

Practical implications suggest that AI tutoring systems might adopt a “progressive hybrid” model: early in a course, prioritize directive cues to scaffold novices, then gradually introduce metacognitive prompts as learners gain competence, thereby fostering self‑regulated learning without overwhelming them. Designers should also ensure linguistic simplicity for diverse language backgrounds while preserving reflective depth.
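One way to read the "progressive hybrid" idea is as a schedule that sets how much of each feedback message is reflective rather than directive. The sketch below assumes a linear ramp over the weeks of a course; the function name `metacognitive_share`, the linear shape, and the default cap of 0.5 are illustrative assumptions and do not come from the study.

```python
def metacognitive_share(week: int, total_weeks: int,
                        max_share: float = 0.5) -> float:
    """Fraction of suggestions that are reflective prompts in a given week.

    Assumed schedule: 0.0 in week 1 (fully directive), rising linearly
    to `max_share` in the final week. Weeks outside the course are clamped.
    """
    if total_weeks < 2:
        raise ValueError("total_weeks must be at least 2")
    if not 0.0 <= max_share <= 1.0:
        raise ValueError("max_share must be between 0 and 1")
    week = min(max(week, 1), total_weeks)  # clamp to [1, total_weeks]
    progress = (week - 1) / (total_weeks - 1)
    return progress * max_share
```

For a 13-week course, this gives a share of 0.0 in week 1, 0.25 in week 7, and 0.5 in week 13. A real system would more plausibly adapt the share to each learner's demonstrated competence than to the calendar alone.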

Future research directions proposed are: (a) longitudinal studies tracking retention and transfer of skills; (b) replication across varied disciplines and assessment formats; (c) personalization of the hybrid mix based on individual learner profiles and feedback literacy; and (d) exploration of synergistic human‑AI feedback collaborations.

In sum, the study provides empirical evidence that AI‑generated feedback can be systematically varied and that, in this course, a hybrid feedback model prompted the most iterative engagement while maintaining learner confidence and yielding learning products of comparable quality to those produced under purely directive or purely metacognitive feedback.
