Vector Quantization in the Brain: Grid-like Codes in World Models

We propose Grid-like Code Quantization (GCQ), a brain-inspired method for compressing observation-action sequences into discrete representations using grid-like patterns in attractor dynamics. Unlike conventional vector quantization approaches that operate on static inputs, GCQ performs spatiotemporal compression through an action-conditioned codebook, where codewords are derived from continuous attractor neural networks and dynamically selected based on actions. This enables GCQ to jointly compress space and time, serving as a unified world model. The resulting representation supports long-horizon prediction, goal-directed planning, and inverse modeling. Experiments across diverse tasks demonstrate GCQ’s effectiveness in compact encoding and downstream performance. Our work offers both a computational tool for efficient sequence modeling and a theoretical perspective on the formation of grid-like codes in neural systems.

💡 Research Summary

The paper introduces Grid‑like Code Quantization (GCQ), a novel brain‑inspired vector quantization technique that simultaneously compresses spatial and temporal information in observation‑action sequences. Traditional VQ‑VAE models discretize static inputs using a fixed codebook, but they do not capture the dynamics of actions nor the continuous nature of neural representations. GCQ addresses these gaps by leveraging continuous attractor neural networks (CANNs) to generate grid‑like activity bumps on a toroidal manifold; each stable bump serves as a discrete codeword.

Key to GCQ is an action‑conditioned codebook. Actions are mapped to small translations of the bump along the two toroidal axes (θ and φ). With five basic actions (four directional moves plus a no‑op), a single CANN can represent up to five distinct transitions. By employing m parallel CANNs, the method scales to a combinatorial action space of up to 5^m, allowing both discrete and low‑dimensional continuous actions to be encoded.

The processing pipeline follows an encoder‑quantizer‑decoder architecture. An encoder transforms the observation part of an observation‑action trajectory into a continuous latent sequence s₁:n. For each parallel CANN j, the latent sequence sʲ₁:n is compared against K candidate trajectories generated by applying the known action subsequence aʲ₁:n‑1 to each possible initial bump eᵢ. The candidate with minimal L₂ distance is selected, yielding the quantized sequence ˆsʲ₁:n = e_{kʲ}⊕aʲ₁:n‑1. The collection of all ˆsʲ₁:n forms the final discrete representation ˆs₁:n. Training uses a reconstruction loss on the observations and a commitment loss on the latent vectors, with a straight‑through estimator (STE) to propagate gradients through the discrete step.

GCQ enables three core capabilities:

-

Long‑horizon prediction – Starting from a short observed prefix, the model iteratively applies future actions in the latent space, moving bumps accordingly to generate predictions of future observations without re‑encoding at each step.

-

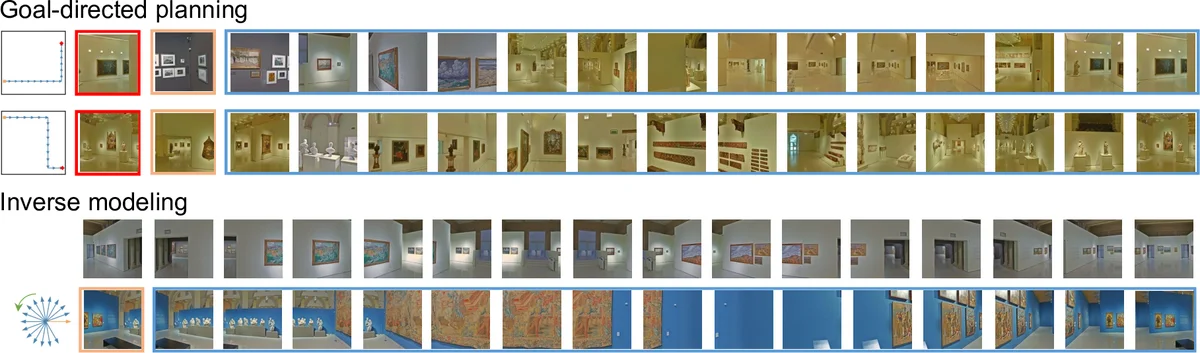

Goal‑directed planning – Because the discrete codebook forms a regular grid, planning reduces to finding a shortest path on a graph of bump states. The agent can compute a sequence of bump transitions that leads from the current state to a target state, then map those transitions back to real actions.

-

Inverse modeling – The same action‑to‑bump mapping is invertible, allowing the system to infer the action sequence that would produce a desired observation trajectory, or to reconstruct actions from observed state sequences.

Empirical evaluations span 2‑D robot navigation, video frame prediction, and continuous control benchmarks (e.g., MuJoCo). Across these domains, GCQ achieves higher compression ratios than VQ‑VAE baselines, exhibits lower cumulative prediction error over long horizons, and dramatically reduces planning computation (often by an order of magnitude). The action‑conditioned codebook also improves generalization to unseen action combinations, and the resulting discrete latent space remains interpretable as a grid.

Beyond engineering benefits, the authors argue that GCQ offers a mechanistic account of how grid‑like codes may arise in the brain. Continuous attractor dynamics with periodic boundary conditions naturally produce periodic bump patterns; action‑driven translations of these bumps mirror the path integration observed in entorhinal‑hippocampal circuits. Thus, GCQ bridges machine learning world‑model design with neuroscientific theories of spatial, temporal, and abstract concept coding, suggesting that the brain may implement an efficient, discrete tokenization of high‑dimensional continuous streams via structured attractor dynamics.

Comments & Academic Discussion

Loading comments...

Leave a Comment