Recurrent Auto-Encoder Model for Large-Scale Industrial Sensor Signal Analysis

A recurrent auto-encoder model summarises sequential data through an encoder structure into a fixed-length vector and then reconstructs the original sequence through a decoder structure. The summarised vector can be used to represent time series features. In this paper, we propose relaxing the dimensionality of the decoder output so that it performs partial reconstruction. The fixed-length vector therefore represents features in the selected dimensions only. In addition, we propose using a rolling fixed-window approach to generate training samples from unbounded time series data. The change of time series features over time can be summarised as a smooth trajectory path. The fixed-length vectors are further analysed using additional visualisation and unsupervised clustering techniques. The proposed method can be applied in large-scale industrial processes for sensor signal analysis, where clusters of the vector representations can reflect the operating states of the industrial system.

💡 Research Summary


The paper introduces a novel approach for analyzing high‑dimensional, continuous sensor streams in large‑scale industrial processes by employing a recurrent auto‑encoder (RAE) with partial reconstruction. Traditional auto‑encoders require the decoder to reproduce the full input vector, which becomes problematic when the number of sensor channels (P) is large, leading to high reconstruction error and difficult training. To address this, the authors relax the decoder’s output dimensionality (K ≤ P), allowing the model to reconstruct only a selected subset of sensors while still feeding the entire sensor set into the encoder. This “partial reconstruction” strategy enables the encoder to capture lead‑variables and cross‑sensor dependencies across the whole system, yet simplifies the learning task for the decoder.
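In practice, partial reconstruction changes only the loss. The encoder sees all P channels, but the error is computed on K of them. The following minimal NumPy sketch assumes a plain mean-squared-error objective (the summary reports MSE but does not spell out the loss). The sensor indices are hypothetical, because the summary does not say which channels were selected.

```python
import numpy as np

def partial_reconstruction_mse(x, y_hat, output_idx):
    """Mean-squared error between the decoder output and K selected input channels.

    x          : (T, P) window fed to the encoder (all sensors)
    y_hat      : (T, K) decoder output (only the selected sensors)
    output_idx : K column indices of the sensors to reconstruct (hypothetical choice)
    """
    target = x[:, output_idx]  # drop the P - K channels the decoder is not asked to reproduce
    if target.shape != y_hat.shape:
        raise ValueError(f"decoder output should have shape {target.shape}, got {y_hat.shape}")
    return float(np.mean((target - y_hat) ** 2))

# Dimensions from the summary: T = 36, P = 158, K = 6; the data is random.
rng = np.random.default_rng(0)
T, P = 36, 158
output_idx = [0, 1, 2, 3, 4, 5]  # hypothetical sensor indices
x = rng.normal(size=(T, P))
print(partial_reconstruction_mse(x, x[:, output_idx], output_idx))  # 0.0
```

In a real model, `y_hat` would come from the LSTM decoder. Compared with a full auto-encoder, the only difference is the slicing of the target.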

The methodology consists of three main components: (1) a three‑layer LSTM encoder (400 units per layer) that processes a fixed‑length window of the multivariate time series (T = 36 time steps, 5‑minute granularity) and compresses it into a 400‑dimensional context vector c; (2) a symmetric three‑layer LSTM decoder that expands c back into a sequence of length T but only for K selected sensors; (3) a sliding‑window sampling scheme that moves the window one time step at a time across the entire dataset (T₀ = 2724), generating highly overlapping samples. Because consecutive windows share most of their data, the resulting context vectors are strongly correlated and trace a smooth trajectory in the latent space as the process evolves over time.
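The following minimal NumPy sketch shows the one-step sliding-window sampling. It assumes the data is held as a (T₀, P) array and treats T₀ = 2724 as the series length, although the summary does not say whether T₀ counts time steps or samples. The random values stand in for the proprietary sensor data. The final line shows why the latent trajectory is smooth: neighbouring windows differ by only one time step at each end.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def rolling_windows(series, T):
    """Cut a (T0, P) series into overlapping length-T windows, stride one step.

    Returns a read-only view of shape (T0 - T + 1, T, P); no data is copied.
    """
    T0 = series.shape[0]
    if not 1 <= T <= T0:
        raise ValueError("window length T must satisfy 1 <= T <= T0")
    view = sliding_window_view(series, window_shape=T, axis=0)  # (T0 - T + 1, P, T)
    return np.moveaxis(view, -1, 1)                               # (T0 - T + 1, T, P)

# Placeholder data with the sizes quoted above (T0 = 2724, P = 158, T = 36).
series = np.random.default_rng(0).normal(size=(2724, 158))
windows = rolling_windows(series, 36)
print(windows.shape)                                    # (2689, 36, 158)
# Consecutive samples share T - 1 of their T time steps:
print(np.array_equal(windows[0, 1:], windows[1, :-1]))  # True
```

A strided view is used instead of stacking copies because materialising all 2689 windows as float64 would take roughly 120 MB.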

The authors evaluate the approach on proprietary data from a two‑stage centrifugal compression train driven by a Rolls‑Royce RB211 engine, comprising 158 sensor channels. Three configurations are compared: (i) full‑dimensional auto‑encoder (P = 158, K = 158), (ii) reduced‑dimensional auto‑encoder (P = 6, K = 6), and (iii) the proposed partial‑reconstruction model (P = 158, K = 6). Results show that the partial‑reconstruction model achieves substantially lower training and validation mean‑squared error (MSE) than the full‑dimensional version, confirming that reducing the decoder’s output eases learning while still leveraging the full sensor context. Qualitative inspection of reconstructed sequences demonstrates accurate recovery of mean shifts and temporal variations in the selected pressure sensors.

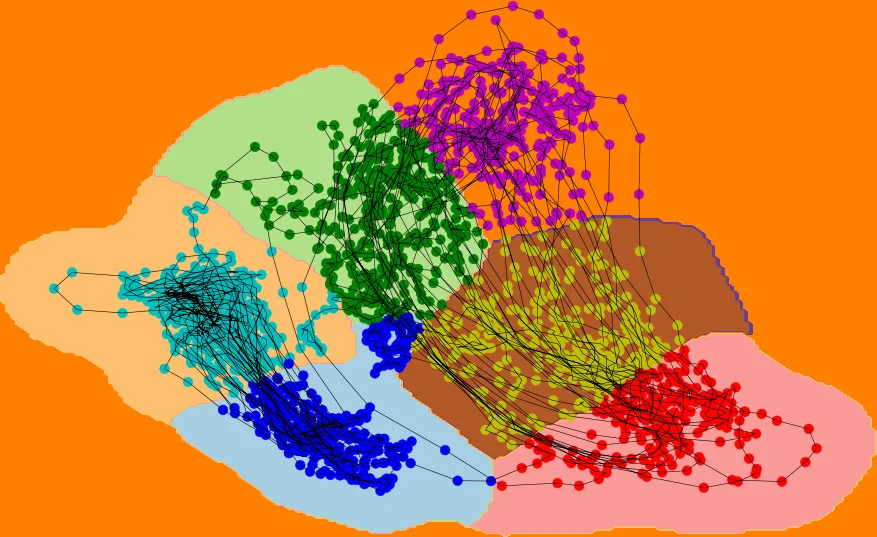

After training, the 400‑dimensional context vectors are extracted for all samples. Principal Component Analysis (PCA) reduces them to two dimensions, revealing a clear clustering structure. The authors apply K‑means clustering (with 2 and 6 clusters) and train a radial‑basis‑function Support Vector Machine (γ = 4) to assign cluster labels to new vectors. Visualizations show that the two‑cluster solution separates periods with distinct mean pressure levels, while the six‑cluster solution captures finer oscillations corresponding to troughs and crests in the sensor signals. The same pattern holds for a held‑out validation set, indicating good generalisation. A second experiment with a different output subset (P = 158, K = 2) reproduces similar behaviour, suggesting that the approach does not depend on one particular choice of output sensors.
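The following minimal NumPy-only sketch of this post-processing rests on several assumptions:
- Clustering runs on the 2-D PCA projections rather than on the full 400-dimensional vectors.
- k-means uses a deterministic farthest-first initialisation.
- New vectors are labelled by their nearest centroid. This is a simpler stand-in for the RBF SVM (γ = 4) that the authors trained.

The two Gaussian blobs are synthetic placeholders for context vectors, not the paper's data.

```python
import numpy as np

def fit_pca(C, n_components=2):
    """Mean and leading principal directions of context vectors C, shape (N, d)."""
    mean = C.mean(axis=0)
    # Rows of Vt are principal directions, sorted by decreasing singular value.
    _, _, Vt = np.linalg.svd(C - mean, full_matrices=False)
    return mean, Vt[:n_components]

def project(C, mean, components):
    """Coordinates of C in the fitted principal subspace."""
    return (C - mean) @ components.T

def nearest(Z, centroids):
    """Index of the closest centroid for each row of Z."""
    dists = np.linalg.norm(Z[:, None, :] - centroids[None, :, :], axis=2)
    return dists.argmin(axis=1)

def kmeans(Z, n_clusters, n_iter=100):
    """Lloyd's k-means with farthest-first initialisation; returns (labels, centroids)."""
    centroids = [Z[0]]
    for _ in range(1, n_clusters):
        # Next seed: the point farthest from every seed chosen so far.
        d = np.min([np.linalg.norm(Z - c, axis=1) for c in centroids], axis=0)
        centroids.append(Z[d.argmax()])
    centroids = np.array(centroids)
    for _ in range(n_iter):
        labels = nearest(Z, centroids)
        new = np.array([Z[labels == k].mean(axis=0) if np.any(labels == k) else centroids[k]
                        for k in range(n_clusters)])
        if np.allclose(new, centroids):
            break
        centroids = new
    return nearest(Z, centroids), centroids

# Synthetic stand-ins for 400-dimensional context vectors from two regimes.
rng = np.random.default_rng(0)
train = np.vstack([rng.normal(-3, 1, (100, 400)), rng.normal(3, 1, (100, 400))])
valid = np.vstack([rng.normal(-3, 1, (20, 400)), rng.normal(3, 1, (20, 400))])

mean, comps = fit_pca(train)
labels, centroids = kmeans(project(train, mean, comps), n_clusters=2)
valid_labels = nearest(project(valid, mean, comps), centroids)
```

The validation vectors are projected with the PCA mean and directions fitted on the training set, so both sets share one 2-D coordinate system. If scikit-learn is available, the labelling step would instead be `sklearn.svm.SVC(kernel='rbf', gamma=4).fit(Z_train, labels)`, followed by `.predict` on the projected validation vectors.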

Key contributions of the work are: (1) introducing partial reconstruction in recurrent auto‑encoders to handle very high‑dimensional industrial time series; (2) demonstrating that a sliding‑window sampling strategy yields a latent trajectory that can be interpreted as the temporal evolution of operating states; (3) showing that simple unsupervised clustering on the latent vectors can effectively differentiate operating regimes without any labelled data. The paper also discusses limitations: the selection of output sensors is manual, the model architecture is fixed to LSTM (potentially limiting real‑time deployment), only K‑means is explored for clustering (the SVM serves solely to label new vectors), and no explicit anomaly‑detection mechanism based on reconstruction error is presented.

Future directions suggested include: (i) integrating attention mechanisms to automatically learn which sensors to reconstruct; (ii) experimenting with lighter recurrent cells (GRU) or Transformer‑based encoders for faster inference; (iii) incorporating density‑based clustering (DBSCAN, HDBSCAN) and probabilistic mixture models to improve robustness; and (iv) developing a real‑time anomaly detection pipeline that triggers alerts when the context vector jumps between distant clusters or when reconstruction error exceeds a dynamic threshold.
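The summary lists this pipeline as future work, so the following is not the paper's method. It is a minimal sketch of the two triggers named in item (iv), with hypothetical rules and parameters:
- A step is flagged when its reconstruction error exceeds the trailing mean plus k standard deviations. The defaults (window = 288, one day of 5-minute steps, and k = 3) are arbitrary illustrative choices.
- A step is also flagged when the assigned cluster switches to one whose centroid is farther than a chosen `max_dist` away.

```python
import numpy as np

def dynamic_threshold_alerts(errors, window=288, k=3.0):
    """Flag step t if its reconstruction error exceeds mean + k * std of the
    previous `window` errors. The first `window` steps are never flagged."""
    errors = np.asarray(errors, dtype=float)
    alerts = np.zeros(len(errors), dtype=bool)
    for t in range(window, len(errors)):
        past = errors[t - window:t]
        alerts[t] = errors[t] > past.mean() + k * past.std()
    return alerts

def cluster_jump_alerts(labels, centroids, max_dist):
    """Flag step t if the cluster assigned at t has a centroid more than
    `max_dist` away from the centroid of the cluster at t - 1."""
    labels = np.asarray(labels)
    jumps = np.linalg.norm(centroids[labels[1:]] - centroids[labels[:-1]], axis=1)
    return np.concatenate([[False], jumps > max_dist])

# Synthetic error trace: an alternating baseline with one injected spike.
errors = np.tile([0.25, 0.5], 200)
errors[350] = 2.0
print(np.flatnonzero(dynamic_threshold_alerts(errors)))  # [350]
```

A trailing window lets the threshold adapt to slow drift in operating conditions. The cost is that a sustained fault is gradually absorbed into the baseline.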

In summary, the paper provides a practical and scalable framework for extracting compact, informative representations from massive multivariate sensor streams in industrial settings. By relaxing the decoder’s output dimensionality, the method balances the need for full system awareness with computational tractability, and the resulting latent trajectories enable intuitive monitoring of process states. With further refinements, this approach could become a core component of predictive maintenance and condition‑based monitoring systems in complex industrial environments.
