DISC-GAN: Disentangling Style and Content for Cluster-Specific Synthetic Underwater Image Generation

In this paper, we propose a novel framework, Disentangled Style-Content GAN (DISC-GAN), which integrates style-content disentanglement with a cluster-specific training strategy towards photorealistic underwater image synthesis. The quality of synthetic underwater images is challenged by optical phenomena such as color attenuation and turbidity. These phenomena are represented by distinct stylistic variations across different waterbodies, such as changes in tint and haze. While generative models are well-suited to capture complex patterns, they often lack the ability to model the non-uniform conditions of diverse underwater environments. To address these challenges, we employ K-means clustering to partition a dataset into style-specific domains. We use separate encoders to obtain latent spaces for style and content; we further integrate these latent representations via Adaptive Instance Normalization (AdaIN) and decode the result to produce the final synthetic image. The model is trained independently on each style cluster to preserve domain-specific characteristics. Our framework demonstrates state-of-the-art performance, obtaining a Structural Similarity Index (SSIM) of 0.9012, an average Peak Signal-to-Noise Ratio (PSNR) of 32.5118 dB, and a Fréchet Inception Distance (FID) of 13.3728.

💡 Research Summary

The paper introduces DISC‑GAN, a novel framework for generating photorealistic underwater images by explicitly disentangling style and content and by training separate generative models for distinct underwater style domains. The authors start from the RSUIGM dataset, which provides synthetically degraded underwater images together with clean references and depth maps. To capture the non‑uniform optical conditions of different water bodies, each image is represented by a feature vector that concatenates its RGB histogram and normalized mean depth. K‑means clustering is then applied to these vectors, and the elbow method determines that four clusters (blue, light‑blue, dark‑blue, black) best reflect the underlying Jerlov water types. This physics‑informed partitioning ensures that each cluster corresponds to a coherent set of attenuation coefficients and background light parameters.
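The clustering input described above can be sketched as follows. This is a minimal illustration, not the authors' code: the bin count (`bins=8`), the normalization of depth by a `max_depth` constant, and the function names are all assumptions, since the summary does not state them. The resulting vectors would then be passed to any K-means implementation.

```python
def rgb_histogram(pixels, bins=8):
    """Per-channel histogram of 8-bit RGB pixels; each channel sums to 1."""
    hist = [[0] * bins for _ in range(3)]
    width = 256 / bins  # intensity range covered by one bin
    for pixel in pixels:
        for c, value in enumerate(pixel):
            hist[c][min(int(value // width), bins - 1)] += 1
    n = len(pixels)
    # Flatten to [R bins..., G bins..., B bins...]
    return [count / n for channel in hist for count in channel]


def style_feature(pixels, depths, max_depth, bins=8):
    """Concatenate the RGB histogram with the normalized mean depth.

    max_depth is a hypothetical normalization constant (assumption).
    """
    mean_depth = sum(depths) / len(depths)
    return rgb_histogram(pixels, bins) + [mean_depth / max_depth]


if __name__ == "__main__":
    # Two bluish pixels with depths of 2 m and 4 m, normalized by 10 m.
    feature = style_feature([(0, 0, 255), (10, 20, 250)], [2.0, 4.0], max_depth=10.0)
    print(len(feature), feature[-1])
```

With 8 bins per channel the vector has 3 × 8 + 1 = 25 entries; a different bin count changes only the histogram part.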

For synthesis, DISC‑GAN employs two parallel encoders based on pretrained VGG‑19. The content encoder extracts high‑level structural features from a clean terrestrial image using the relu4_2 layer, producing a latent tensor z_c. The style encoder processes an underwater reference from a chosen cluster, computes Gram matrices from shallow layers (relu1_1, relu2_1, relu3_1), and yields a style vector z_s that encodes color tint and texture statistics. These two latent representations are fused inside the generator via Adaptive Instance Normalization (AdaIN), which aligns the mean and variance of the content feature maps to those of the style vector, thereby injecting the desired underwater appearance without disturbing spatial layout.
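The AdaIN fusion step follows the standard formula AdaIN(x, y) = σ(y) · (x − μ(x)) / σ(x) + μ(y), applied per channel. The sketch below shows that operation on plain Python lists. It assumes the style vector z_s supplies one target mean and one target standard deviation per channel, which the summary implies but does not spell out; the names `adain` and `channel_stats` and the `eps` value are illustrative.

```python
import math


def channel_stats(values, eps=1e-5):
    """Mean and standard deviation of one flattened feature channel."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return mean, math.sqrt(var + eps)  # eps avoids division by zero


def adain(content, style_mean, style_std, eps=1e-5):
    """Re-normalize each content channel to the style's mean and std.

    content: list of channels, each a flat list of activations.
    style_mean, style_std: one target value per channel (assumed layout).
    """
    out = []
    for channel, mu_s, sigma_s in zip(content, style_mean, style_std):
        mu_c, sigma_c = channel_stats(channel, eps)
        out.append([sigma_s * (v - mu_c) / sigma_c + mu_s for v in channel])
    return out


if __name__ == "__main__":
    content = [[1.0, 2.0, 3.0, 4.0], [10.0, 10.0, 20.0, 20.0]]
    stylized = adain(content, style_mean=[0.5, -1.0], style_std=[2.0, 0.1])
    print(stylized)
```

Because each channel is only shifted and rescaled, the relative ordering of activations within a channel is unchanged, which is why the spatial layout of the content survives the style injection.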

Training proceeds in two phases. First, the entire RSUIGM collection is partitioned into the four style clusters. Second, for each cluster a dedicated GAN (generator + PatchGAN discriminator) is trained independently. In each iteration a clean content image from the SUID dataset and a random style image from the current cluster are fed through the encoders, the generator produces a synthetic image Î, and a composite loss is back‑propagated. The loss combines an L1 reconstruction term (encouraging pixel‑wise fidelity to the style reference) and an L2 adversarial term (promoting realism as judged by the discriminator). Hyper‑parameters λ_rec and λ_adv balance these objectives. The models are optimized with Adam (lr = 2 × 10⁻⁴, β₁ = 0.5, β₂ = 0.999) for 100 epochs per cluster on an NVIDIA Tesla V100.
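A rough sketch of the composite generator objective, L = λ_rec · L1 + λ_adv · L_adv, is given below. Several details are assumptions rather than facts from the summary: the L2 adversarial term is written in least-squares form with a target score of 1 for generated patches, and the default weights `lambda_rec=10.0` and `lambda_adv=1.0` are placeholders, since the actual values are not reported here.

```python
def l1_reconstruction(generated, reference):
    """Mean absolute pixel difference between two flattened images."""
    return sum(abs(g - r) for g, r in zip(generated, reference)) / len(generated)


def l2_adversarial(patch_scores, target=1.0):
    """Mean squared distance of discriminator patch scores from the target.

    Least-squares form with target 1.0 is an assumption.
    """
    return sum((s - target) ** 2 for s in patch_scores) / len(patch_scores)


def generator_loss(generated, reference, patch_scores,
                   lambda_rec=10.0, lambda_adv=1.0):
    """Weighted sum of both terms; the weights are hypothetical defaults."""
    return (lambda_rec * l1_reconstruction(generated, reference)
            + lambda_adv * l2_adversarial(patch_scores))


if __name__ == "__main__":
    loss = generator_loss(
        generated=[0.2, 0.4],      # flattened generated image
        reference=[0.0, 0.5],      # flattened reference image
        patch_scores=[1.0, 0.5],   # PatchGAN outputs, one per patch
    )
    print(loss)
```

In a real training loop these terms would be computed on tensors by an autodiff framework so that gradients can flow back to the generator; the list-based version only shows the arithmetic.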

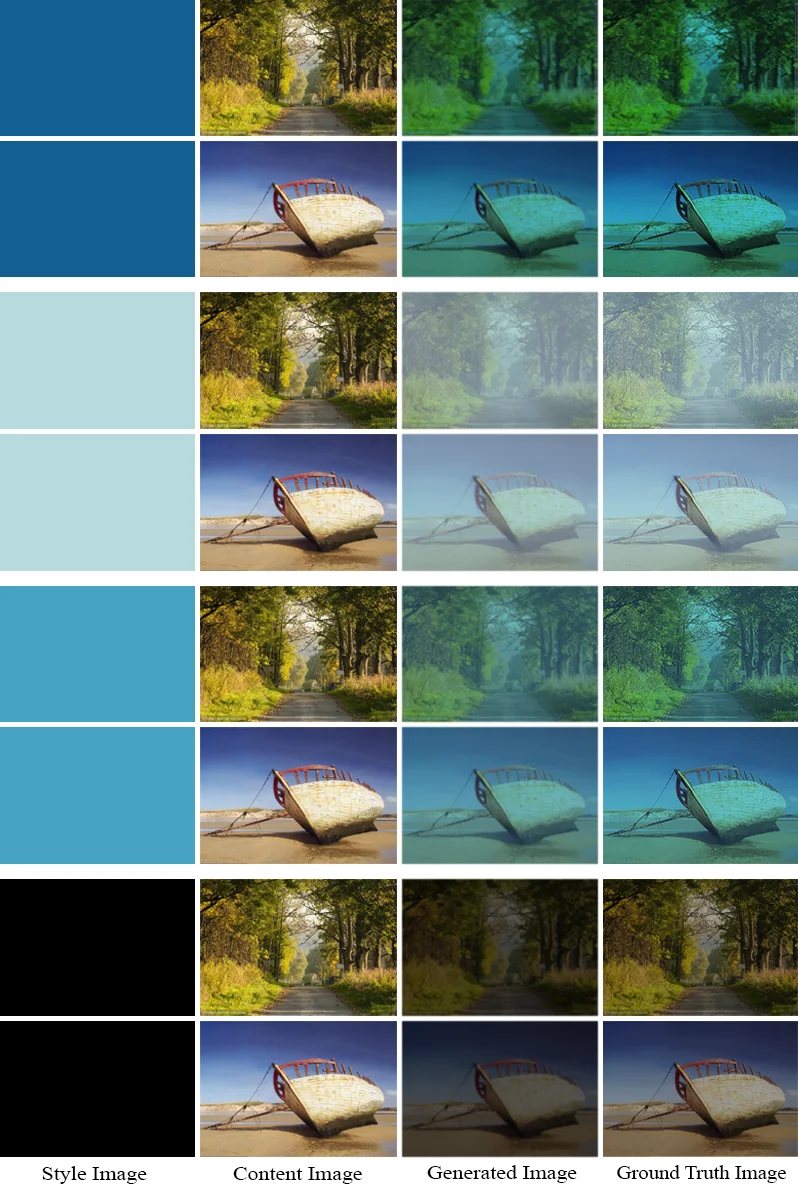

Quantitative evaluation on a held‑out test set yields SSIM = 0.9012, PSNR = 32.5118 dB, and FID = 13.3728, outperforming prior approaches such as WaterGAN and CycleGAN by a substantial margin. Visual inspection confirms that each cluster’s characteristic color shift and haze level are faithfully reproduced while preserving scene geometry. The cluster‑specific training prevents “style leakage,” enabling precise control over the generated underwater condition, which is an advantage for downstream tasks like data augmentation for marine robotics or underwater object detection.
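For readers unfamiliar with the PSNR figure, the metric is defined as PSNR = 10 · log10(MAX² / MSE), where MAX is the largest possible pixel value and MSE is the mean squared error between the two images. The sketch below computes it for flattened 8-bit images; it illustrates the standard definition and is not the paper's evaluation script.

```python
import math


def psnr(reference, test, max_value=255.0):
    """Peak Signal-to-Noise Ratio in dB between two flattened images."""
    mse = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    if mse == 0:
        return math.inf  # identical images: no noise at all
    return 10.0 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    reference = [0, 0, 0, 0]
    degraded = [0, 0, 0, 16]  # one pixel off by 16, so MSE = 64
    print(round(psnr(reference, degraded), 2))
```

Higher values mean the compared images are closer; because the scale is logarithmic, halving the MSE raises PSNR by about 3 dB.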

The authors acknowledge several limitations. The number of clusters is fixed to four, which may be insufficient for finer granularity in real‑world deployments. The clustering relies solely on RGB histograms and mean depth, ignoring more subtle scattering properties such as particle size distribution. Training four separate GANs also multiplies computational cost. Future work is suggested to explore multi‑scale or unsupervised clustering, incorporate physics‑based loss terms directly derived from the Beer‑Lambert model, and develop lightweight decoder architectures for real‑time applications.

In summary, DISC‑GAN demonstrates that a physics‑guided data partitioning combined with modern style‑content disentanglement can dramatically improve both the realism and controllability of synthetic underwater imagery, opening new possibilities for training robust vision systems in challenging marine environments.
