HYPERDOA: Robust and Efficient DoA Estimation using Hyperdimensional Computing

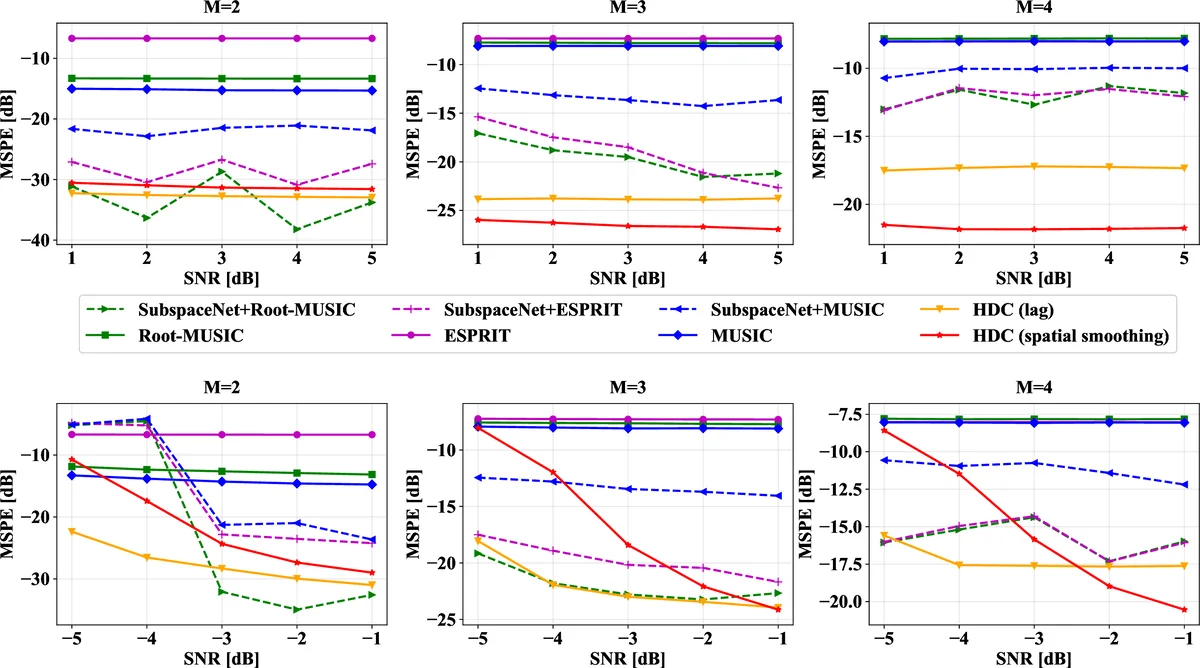

Direction of Arrival (DoA) estimation techniques face a critical trade-off, as classical methods often lack accuracy in challenging, low signal-to-noise ratio (SNR) conditions, while modern deep learning approaches are too energy-intensive and opaque for resource-constrained, safety-critical systems. We introduce HYPERDOA, a novel estimator leveraging Hyperdimensional Computing (HDC). The framework introduces two distinct feature extraction strategies – Mean Spatial-Lag Autocorrelation and Spatial Smoothing – for its HDC pipeline, and then reframes DoA estimation as a pattern recognition problem. This approach leverages HDC’s inherent robustness to noise and its transparent algebraic operations to bypass the expensive matrix decompositions and “black-box” nature of classical and deep learning methods, respectively. Our evaluation demonstrates that HYPERDOA achieves ~35.39% higher accuracy than state-of-the-art methods in low-SNR, coherent-source scenarios. Crucially, it also consumes ~93% less energy than competing neural baselines on an embedded NVIDIA Jetson Xavier NX platform. This dual advantage in accuracy and efficiency establishes HYPERDOA as a robust and viable solution for mission-critical applications on edge devices.

💡 Research Summary

The paper introduces HYPERDOA, a novel direction‑of‑arrival (DoA) estimator that leverages Hyperdimensional Computing (HDC), also known as Vector Symbolic Architectures. Classical high‑resolution methods such as MUSIC, Root‑MUSIC, and ESPRIT rely on subspace decomposition (eigen‑ or singular‑value decomposition). They are therefore computationally expensive, and they lose robustness when the SNR is low or when the sources are closely spaced or coherent. Recent deep‑learning‑based approaches (e.g., DeepMUSIC, SubspaceNet) improve accuracy but bring two major drawbacks: they are black‑box models that are difficult to verify for safety‑critical applications, and they require many floating‑point operations (FLOPs) and significant power, making them unsuitable for edge devices.

HYPERDOA reframes DoA estimation as a pattern‑recognition problem. The raw snapshot matrix (X\in\mathbb{C}^{N\times T}) is first processed by a feature‑extraction module that produces a low‑dimensional real‑valued vector (f). Two alternative feature extractors are proposed:

- Mean Spatial‑Lag Autocorrelation (Lag) – computes the sample covariance (\hat R_X = \frac{1}{T}XX^H), then averages each diagonal (one per spatial lag) to obtain a complex lag vector (r). The vector is normalized by the magnitude of its zero‑lag element and split into real and imaginary parts, giving a 2N‑dimensional feature vector that is then z‑score normalized.

- Spatial Smoothing – divides the uniform linear array into overlapping sub‑arrays, computes a covariance matrix for each sub‑array, and averages them to obtain a smoothed covariance (\hat R_{SS}). The upper‑triangular part of (\hat R_{SS}) is vectorized, split into real and imaginary components, and z‑score normalized. Averaging over sub‑arrays restores the covariance rank that is lost when sources are coherent.
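The two feature extractors can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the `sub_len` parameter (sub‑array length), the use of upper diagonals for the lags, forward‑only smoothing, and including the main diagonal in the upper triangle are all choices made here for illustration. The demo signal assumes a half‑wavelength uniform linear array with two noise‑free coherent sources.

```python
import numpy as np

def zscore(v, eps=1e-12):
    """Standardize a real vector to zero mean and unit variance."""
    return (v - v.mean()) / (v.std() + eps)

def sample_covariance(X):
    """Sample covariance (1/T) X X^H of an N x T snapshot matrix."""
    return (X @ X.conj().T) / X.shape[1]

def lag_features(X):
    """Mean spatial-lag autocorrelation features (length 2N)."""
    N = X.shape[0]
    R = sample_covariance(X)
    # Average the k-th upper diagonal of R for each lag k = 0..N-1 (assumed convention).
    r = np.array([np.diagonal(R, offset=k).mean() for k in range(N)])
    r = r / (np.abs(r[0]) + 1e-12)           # normalize by zero-lag magnitude
    return zscore(np.concatenate([r.real, r.imag]))

def smoothed_covariance(X, sub_len):
    """Forward spatial smoothing over overlapping sub-arrays of length sub_len."""
    N = X.shape[0]
    num_sub = N - sub_len + 1                 # number of overlapping sub-arrays
    R_ss = np.zeros((sub_len, sub_len), dtype=complex)
    for p in range(num_sub):
        R_ss += sample_covariance(X[p:p + sub_len, :])
    return R_ss / num_sub

def smoothing_features(X, sub_len):
    """Vectorized upper triangle of the smoothed covariance, real and imaginary parts."""
    R_ss = smoothed_covariance(X, sub_len)
    v = R_ss[np.triu_indices(sub_len)]        # includes the main diagonal (assumption)
    return zscore(np.concatenate([v.real, v.imag]))

def ula_steering(n, theta_deg):
    """Steering vector of a half-wavelength ULA (demo assumption)."""
    return np.exp(1j * np.pi * np.arange(n) * np.sin(np.deg2rad(theta_deg)))

# Demo: two fully coherent, noise-free sources share the same waveform s.
rng = np.random.default_rng(0)
N, T = 8, 200
s = rng.standard_normal(T) + 1j * rng.standard_normal(T)
X = np.outer(ula_steering(N, -20.0) + ula_steering(N, 30.0), s)

print(lag_features(X).shape)            # (16,)
print(smoothing_features(X, 5).shape)   # (30,)
rank_full = np.linalg.matrix_rank(sample_covariance(X), tol=1e-8)
rank_smoothed = np.linalg.matrix_rank(smoothed_covariance(X, 5), tol=1e-8)
print(rank_full, rank_smoothed)         # rank before and after smoothing
```

In the demo, the unsmoothed covariance of two coherent sources collapses to a single dominant component, while the smoothed covariance recovers one component per source, which is the rank restoration the Spatial Smoothing extractor relies on.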

The feature vector (f) is then encoded into a high‑dimensional hypervector (H_q) using a Fractional Power Encoder based on Fourier Holographic Reduced Representations (FHRR). Each feature dimension (i) is assigned a random complex base hypervector (B_i) (unit‑magnitude random phase). The feature value (f_i) is interpreted as a phase rotation (\rho_{f_i}) applied to (B_i). All rotated base vectors are bound together via element‑wise multiplication:

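\[ H_q = \bigodot_{i} \rho_{f_i}(B_i) \]

where (\odot) denotes element‑wise multiplication and the product runs over all feature dimensions (i).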
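A minimal NumPy sketch of this encoding step follows. It assumes the phase rotation is realized as fractional exponentiation of the base hypervector, so that a base vector with phases (\phi_i) rotated by (f_i) has phases (f_i \phi_i). The function names, the hypervector dimension of 4096, and the similarity measure are hypothetical choices for illustration and are not taken from the paper.

```python
import numpy as np

def make_base_phases(num_features, dim, seed=0):
    """Random phases phi_i in [-pi, pi); base hypervector B_i = exp(j * phi_i)."""
    rng = np.random.default_rng(seed)
    return rng.uniform(-np.pi, np.pi, size=(num_features, dim))

def fpe_encode(f, phases):
    """Encode a real feature vector f into one unit-magnitude complex hypervector."""
    # Rotating B_i by f_i multiplies its phases by f_i (assumed form of the rotation).
    rotated = np.exp(1j * f[:, None] * phases)    # shape (num_features, dim)
    # Binding: element-wise product across all feature dimensions.
    return np.prod(rotated, axis=0)

def similarity(h1, h2):
    """Real part of the normalized inner product; equals 1 for identical hypervectors."""
    return float(np.real(np.vdot(h2, h1)) / h1.size)

# Demo: nearby feature vectors map to similar hypervectors, distant ones do not.
rng = np.random.default_rng(1)
phases = make_base_phases(num_features=16, dim=4096)
f = rng.standard_normal(16)
h = fpe_encode(f, phases)
h_near = fpe_encode(f + 0.05, phases)
h_far = fpe_encode(rng.standard_normal(16), phases)

print(similarity(h, h))
print(similarity(h, h_near))
print(similarity(h, h_far))
```

Because binding multiplies unit‑magnitude entries, the encoded hypervector keeps unit magnitude in every component, and similarity decays smoothly as the feature vectors move apart. That smooth decay is what lets a nearest‑prototype comparison treat DoA estimation as pattern recognition.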
