GL-DT: Multi-UAV Detection and Tracking with Global-Local Integration

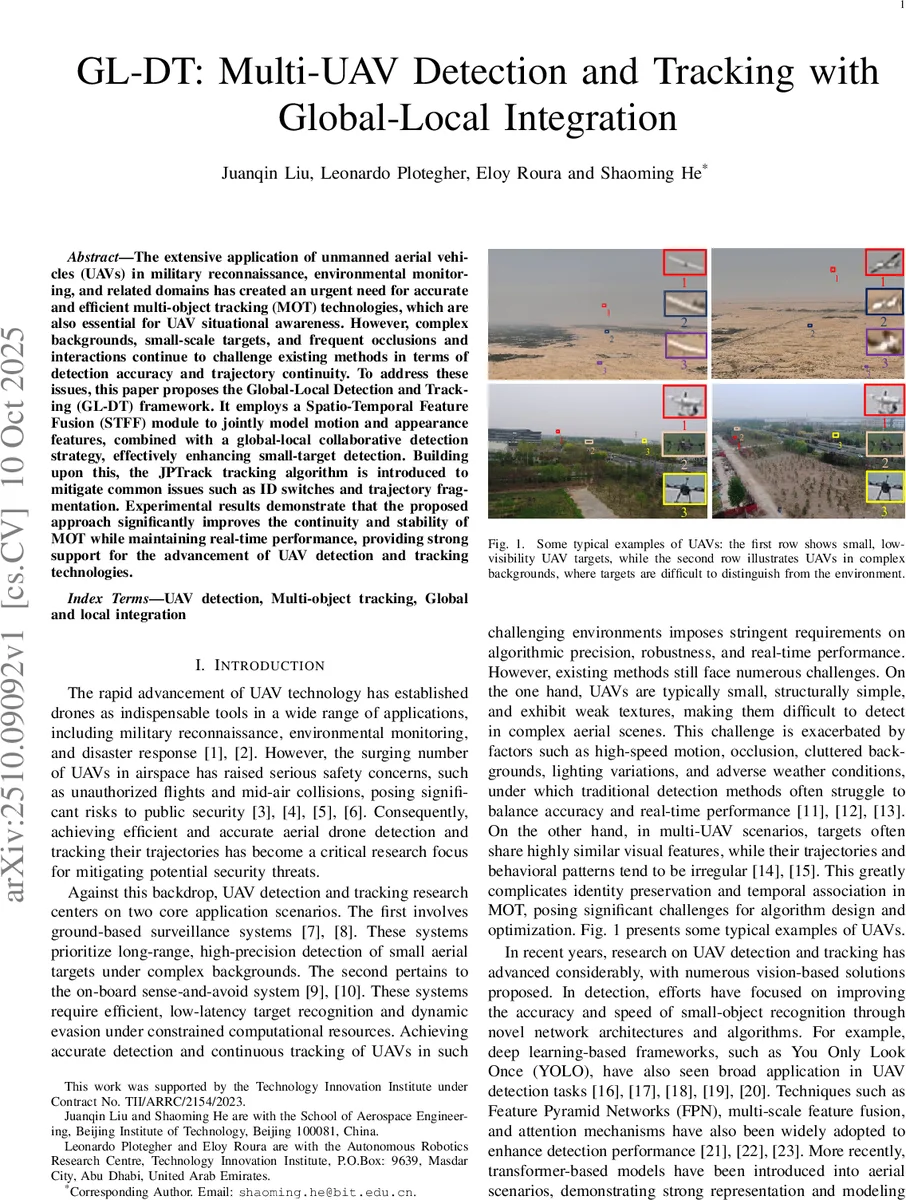

The extensive application of unmanned aerial vehicles (UAVs) in military reconnaissance, environmental monitoring, and related domains has created an urgent need for accurate and efficient multi-object tracking (MOT) technologies, which are also essential for UAV situational awareness. However, complex backgrounds, small-scale targets, and frequent occlusions and interactions continue to challenge existing methods in terms of detection accuracy and trajectory continuity. To address these issues, this paper proposes the Global-Local Detection and Tracking (GL-DT) framework. It employs a Spatio-Temporal Feature Fusion (STFF) module to jointly model motion and appearance features, combined with a global-local collaborative detection strategy, effectively enhancing small-target detection. Building upon this, the JPTrack tracking algorithm is introduced to mitigate common issues such as ID switches and trajectory fragmentation. Experimental results demonstrate that the proposed approach significantly improves the continuity and stability of MOT while maintaining real-time performance, providing strong support for the advancement of UAV detection and tracking technologies.

💡 Research Summary

The paper addresses the pressing need for robust multi‑UAV detection and tracking in increasingly crowded airspaces, where small, low‑visibility drones often appear against cluttered backgrounds and undergo frequent occlusions. To overcome these challenges, the authors propose the Global‑Local Detection and Tracking (GL‑DT) framework, which integrates a spatio‑temporal feature fusion (STFF) module, a dynamic global‑local collaborative detection strategy, and a novel JPTrack tracking algorithm.

In the global detection (GD) stage, an enhanced AM‑YOLO model processes two consecutive frames (Ft and Ft‑1) through a weight‑shared backbone. Central to this stage is the STFF module, which separates appearance (Xapp) and motion (Xmotion) features using a Motion‑aware Attention mechanism. Xmotion is transformed into gating weights (G) via a 1×1 convolution and a sigmoid activation; these weights gate the previous‑frame features to produce the aligned features Xalign‑t‑1. The current‑frame features (Xt), Xapp, and Xalign‑t‑1 are concatenated, passed through a 1×1 convolution, and normalized with a softmax to produce the fusion weights (W1, W2, W3). A weighted sum yields the fused feature Xf, which is combined with the original Xt through a learnable residual coefficient α to produce the final output Xout. This design captures dynamic object patterns while preserving spatial semantics, which the authors credit for improved detection of ultra‑small UAVs.
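The STFF computation described in this paragraph can be written compactly as the following sketch. It is reconstructed from the summary's wording only, not taken from the paper: the element-wise product $\odot$, the symbol $X_{t-1}$ for the raw previous-frame features, and the additive form of the residual in the last line are assumptions.

$$
\begin{aligned}
G &= \sigma\big(\mathrm{Conv}_{1\times 1}(X_{\text{motion}})\big) \\
X_{\text{align}}^{t-1} &= G \odot X_{t-1} \\
[W_1, W_2, W_3] &= \mathrm{softmax}\Big(\mathrm{Conv}_{1\times 1}\big(\mathrm{Concat}(X_t,\, X_{\text{app}},\, X_{\text{align}}^{t-1})\big)\Big) \\
X_f &= W_1 \odot X_t + W_2 \odot X_{\text{app}} + W_3 \odot X_{\text{align}}^{t-1} \\
X_{\text{out}} &= X_t + \alpha\, X_f \quad \text{(assumed residual form)}
\end{aligned}
$$

Because the softmax is taken across the three branches, $W_1 + W_2 + W_3 = 1$ at every spatial location, so $X_f$ is a per-location convex combination of the three feature maps.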

The framework dynamically switches between GD and a local detection (LD) mode based on frame index N. After GD establishes stable tracks for Ng frames, the system enters LD, generating Regions of Interest (ROIs) from previously confirmed target locations. A lightweight YOLO11s‑P2 model then performs parallel inference on these ROIs, enabling fast, high‑resolution detection of the targets inside them. If LD persists beyond Nl frames, or if no target is detected within an ROI for Nm consecutive frames, the system automatically resets to GD, so that newly appearing drones and drones that have left the local field are promptly reacquired.
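The switching rule can be illustrated with a small state machine. This is a minimal sketch under stated assumptions, not the authors' implementation: the class name `ModeScheduler`, the boolean inputs `tracks_stable` and `roi_missed`, and the reading of "beyond Nl frames" as "after Nl frames in LD" are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ModeScheduler:
    """Hypothetical GD/LD scheduler; thresholds mirror Ng, Nl, Nm in the text."""
    n_g: int           # stable GD frames required before entering LD
    n_l: int           # maximum consecutive frames spent in LD (assumed reading)
    n_m: int           # consecutive ROI misses that force a reset to GD
    mode: str = "GD"
    count: int = 0     # frames counted in the current mode
    misses: int = 0    # consecutive ROI misses while in LD

    def _switch(self, mode: str) -> None:
        # Changing mode clears both counters.
        self.mode, self.count, self.misses = mode, 0, 0

    def step(self, tracks_stable: bool, roi_missed: bool = False) -> str:
        """Update after one processed frame; return the mode for the next frame."""
        if self.mode == "GD":
            # Only uninterrupted runs of stable frames count toward Ng.
            self.count = self.count + 1 if tracks_stable else 0
            if self.count >= self.n_g:
                self._switch("LD")
        else:
            self.count += 1
            # A successful ROI detection resets the consecutive-miss counter.
            self.misses = self.misses + 1 if roi_missed else 0
            if self.count >= self.n_l or self.misses >= self.n_m:
                self._switch("GD")
        return self.mode
```

In a per-frame loop, the caller would run the global or local detector according to `scheduler.mode`, then call `scheduler.step(...)` with the outcome of that frame.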

JPTrack, the tracking component, performs hierarchical association of high‑confidence and low‑confidence detections. High‑confidence detections are first linked across frames using a Kalman filter and a Joint Cost‑Matrix Association (JCMA) strategy that fuses distance, appearance similarity, and motion consistency into a unified cost matrix solved by the Hungarian algorithm. Low‑confidence detections serve as auxiliary candidates to recover tracks during short‑term occlusions. The Prediction‑Mask‑Recovery (PMR) module further refines these recovered trajectories by combining Kalman predictions with mask‑based adjustments. According to the authors, this two‑tiered approach reduces ID switches and trajectory fragmentation.
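The idea of fusing several cues into one cost matrix can be sketched as follows. This is an illustration under assumptions, not JPTrack itself: the weights, the distance scale, the gating threshold, the dictionary layout of tracks and detections, and the specific form of each cost term are hypothetical. The assignment step here is a brute-force search over permutations, which finds the same minimum-cost matching that the Hungarian algorithm would but is only practical for a handful of targets (the FT dataset has 1 to 3 drones per frame).

```python
import math
from itertools import permutations


def _cosine(a, b):
    # Cosine similarity of two vectors; 0.0 if either has zero length.
    na, nb = math.hypot(*a), math.hypot(*b)
    if na == 0.0 or nb == 0.0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (na * nb)


def joint_cost(track, det, w_dist=0.5, w_app=0.3, w_motion=0.2, scale=50.0):
    """Weighted sum of three cost terms, each in [0, 1] (weights are assumed)."""
    # Distance: detection vs. the track's predicted position, clipped at `scale` pixels.
    dx = det["pos"][0] - track["pred"][0]
    dy = det["pos"][1] - track["pred"][1]
    dist = min(math.hypot(dx, dy) / scale, 1.0)
    # Appearance: 1 - cosine similarity of embeddings, clipped to [0, 1].
    app = min(max(1.0 - _cosine(track["feat"], det["feat"]), 0.0), 1.0)
    # Motion consistency: angle between track velocity and implied displacement.
    disp = (det["pos"][0] - track["last"][0], det["pos"][1] - track["last"][1])
    motion = (1.0 - _cosine(track["vel"], disp)) / 2.0
    return w_dist * dist + w_app * app + w_motion * motion


def associate(tracks, dets, max_cost=0.7):
    """Return (track_index, detection_index) pairs minimizing total joint cost."""
    if not tracks or not dets:
        return []
    cost = [[joint_cost(t, d) for d in dets] for t in tracks]
    n_t, n_d = len(tracks), len(dets)
    best_total, best_pairs = math.inf, []
    if n_t <= n_d:
        candidates = (list(enumerate(p)) for p in permutations(range(n_d), n_t))
    else:
        candidates = ([(t, d) for d, t in enumerate(p)]
                      for p in permutations(range(n_t), n_d))
    for pairs in candidates:
        total = sum(cost[t][d] for t, d in pairs)
        if total < best_total:
            best_total, best_pairs = total, pairs
    # Gate out matches whose individual cost is too high to be plausible.
    return [(t, d) for t, d in best_pairs if cost[t][d] <= max_cost]
```

For larger numbers of targets, an assignment solver such as SciPy's `scipy.optimize.linear_sum_assignment` would replace the permutation search while leaving the cost construction unchanged.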

The authors introduce the FT dataset, comprising 25,855 frames captured from long‑range fixed‑wing UAV scenarios, with 1–3 drones per frame and an average pixel occupancy below 0.1 %. Experiments on this benchmark demonstrate that GL‑DT outperforms state‑of‑the‑art baselines such as YOLO‑based detection with DeepSORT, ByteTrack, and recent transformer‑based models. Gains of 8–12 % are reported across MOTA, IDF1, and FP/FN metrics, while detection recall for tiny UAVs improves by over 15 %. Importantly, the full pipeline runs at approximately 30 FPS on a single GPU, satisfying real‑time constraints.

In summary, GL‑DT advances multi‑UAV detection and tracking by (1) fusing motion‑aware attention with spatial features through the STFF module, (2) employing an adaptive global‑local detection schedule that balances scene‑wide awareness with fine‑grained local focus, and (3) introducing JPTrack’s hierarchical association and recovery mechanisms to maintain trajectory continuity. The framework delivers superior accuracy and stability for small UAVs in challenging environments without sacrificing computational efficiency, paving the way for practical deployment in surveillance, air‑traffic management, and autonomous drone‑avoidance systems.
