From Local Earthquake Nowcasting to Natural Time Forecasting: A Simple Do-It-Yourself (DIY) Method

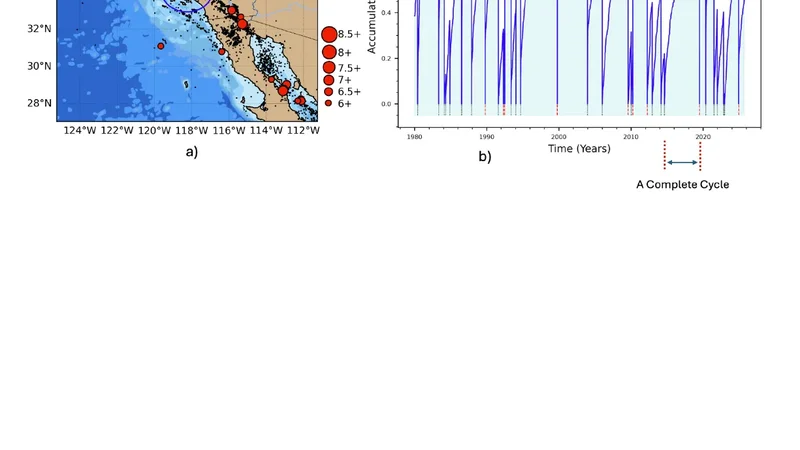

Previous papers have outlined nowcasting methods to track the current state of earthquake hazard using only observed seismic catalogs. The basis for one of these methods, the "counting method", is the Gutenberg-Richter (GR) magnitude-frequency relation. The GR relation states that for every large earthquake of magnitude greater than M_T, there are on average N_GR small earthquakes of magnitude greater than M_S. In this paper we use this basic relation, combined with the Receiver Operating Characteristic (ROC) formalism from machine learning, to compute the probability of a large earthquake. The probability is conditioned on the number of small earthquakes n(t) that have occurred since the last large earthquake. We work in natural time, which is defined as the count of small earthquakes between large earthquakes. We do not need to assume a probability model, which is a major advantage. Instead, the probability is computed as the Positive Predictive Value (PPV) associated with the ROC curve. We find that the PPV following the last large earthquake initially decreases as more small earthquakes occur, indicating the temporal clustering of large earthquakes that is observed. As the number of small earthquakes continues to accumulate, the PPV subsequently begins to increase. Eventually a point is reached beyond which the rate of increase becomes much larger. Here we describe and illustrate the method by applying it to a local region around Los Angeles, California, following the January 17, 1994 magnitude M6.7 Northridge earthquake.

💡 Research Summary

The paper presents a straightforward, data‑driven method for earthquake nowcasting and short‑term forecasting that builds on the “counting method” previously introduced by the authors. The counting method relies on the Gutenberg‑Richter (GR) magnitude‑frequency relationship, which states that for each large earthquake (magnitude > M_T) there are on average N_GR smaller earthquakes (magnitude > M_S). In the present work the authors combine this statistical foundation with Receiver Operating Characteristic (ROC) analysis—a standard tool in machine‑learning classification—to derive a probability of a forthcoming large event without assuming any explicit probabilistic model.
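As a rough illustration of the GR counting relation, the cumulative form log10 N(≥M) = a − bM implies N_GR ≈ 10^(b·(M_T − M_S)). The sketch below evaluates that ratio. The function name and the default b = 1.0 are assumptions made for this example, not values taken from the paper.

```python
def expected_small_per_large(m_large: float, m_small: float, b_value: float = 1.0) -> float:
    """Approximate N_GR: small earthquakes (M >= m_small) per large one (M >= m_large).

    Derived from the cumulative Gutenberg-Richter law log10 N(>=M) = a - b*M,
    so the ratio N(>=m_small) / N(>=m_large) equals 10 ** (b * (m_large - m_small)).
    b_value = 1.0 is an assumed, commonly quoted default, not a fitted value.
    """
    if m_large < m_small:
        raise ValueError("m_large must not be smaller than m_small")
    return 10.0 ** (b_value * (m_large - m_small))
```

For instance, with M_S = 2.5 and a hypothetical M_T = 6.0, this gives on the order of 3,000 small earthquakes per large one when b = 1. The result is sensitive to b, so a regional estimate of b should replace the default in any real use.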

The key innovation is the use of “natural time,” defined as the number of small earthquakes that have occurred since the last large earthquake, rather than chronological time. By treating the count n(t) as a predictor, the authors formulate a binary classification problem: for a given threshold n* they ask whether a large earthquake occurs before the next threshold is reached. Varying n* yields a set of true‑positive and false‑positive rates, from which the ROC curve is constructed. Each point on the ROC curve is then associated with a Positive Predictive Value (PPV), which the authors interpret as the conditional probability that a large earthquake will occur given the observed number of small events. Because PPV is derived directly from observed frequencies, no parametric model (e.g., Poisson, renewal) needs to be postulated, eliminating model‑bias concerns.
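The classification step described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the authors' code. The function names, the `horizon` parameter (how many further small earthquakes still count as "soon"), and the decision rule "flag a large earthquake when n ≥ n*" are all hypothetical choices made for the example.

```python
from typing import List, Tuple

def label_states(interval_counts: List[int], horizon: int) -> List[Tuple[int, bool]]:
    """Expand completed intervals into (n, label) pairs.

    interval_counts[i] is the number of small earthquakes observed between
    two successive large earthquakes. For each count n within an interval,
    the label is True if the next large earthquake arrives within `horizon`
    further small earthquakes (an assumed definition of a positive case).
    """
    states = []
    for total in interval_counts:
        for n in range(total + 1):
            states.append((n, total - n <= horizon))
    return states

def roc_and_ppv(states: List[Tuple[int, bool]], thresholds: List[int]):
    """Return (n_star, TPR, FPR, PPV) for each threshold n_star.

    A state is predicted positive when n >= n_star.
    """
    rows = []
    for n_star in thresholds:
        tp = sum(1 for n, y in states if n >= n_star and y)
        fp = sum(1 for n, y in states if n >= n_star and not y)
        fn = sum(1 for n, y in states if n < n_star and y)
        tn = sum(1 for n, y in states if n < n_star and not y)
        tpr = tp / (tp + fn) if tp + fn else 0.0
        fpr = fp / (fp + tn) if fp + tn else 0.0
        # PPV is undefined when nothing is predicted positive.
        ppv = tp / (tp + fp) if tp + fp else float("nan")
        rows.append((n_star, tpr, fpr, ppv))
    return rows
```

Calling `roc_and_ppv(label_states(counts, horizon), thresholds)` on a list of observed interval counts yields the ROC points (FPR, TPR) and the PPV at each threshold. Because every quantity is a ratio of observed frequencies, no probability distribution is fitted at any step.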

The methodology is illustrated on a well‑studied region surrounding Los Angeles, California, using the catalog of earthquakes after the M 6.7 Northridge event of 17 January 1994. Small events are defined as those with magnitude ≥ 2.5, a level at which the regional catalog is complete. The authors compute n(t) for each inter‑large‑event interval, generate ROC curves, and extract PPV as a function of n. The resulting PPV curve exhibits a characteristic two‑phase behavior. Immediately after the large event, PPV declines as small earthquakes accumulate, reflecting the observed temporal clustering of large events: the probability of another large rupture is highest shortly after a large event and falls as the sequence proceeds. After a certain number of small events (approximately 150–200 in the case study), PPV begins to rise again, eventually entering a regime of rapid increase. This inflection point is interpreted as the moment when enough stress has re‑accumulated to raise the probability of a large rupture dramatically.
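The natural-time count n(t) itself is simple to compute from a catalog. The sketch below assumes the catalog has already been reduced to a chronologically ordered list of magnitudes. The small-event threshold of 2.5 comes from the text above, while the function name and the large-event threshold `m_large=6.0` are hypothetical placeholders, since the summary does not state M_T.

```python
from typing import List

def natural_time_counts(magnitudes: List[float],
                        m_small: float = 2.5,
                        m_large: float = 6.0) -> List[int]:
    """Return n(t) after each catalog event, in chronological order.

    n(t) is the number of small earthquakes (m_small <= M < m_large)
    since the most recent large earthquake (M >= m_large). Events below
    m_small are ignored because the catalog is assumed incomplete there.
    """
    counts = []
    n = 0
    for m in magnitudes:
        if m >= m_large:
            n = 0          # a large earthquake resets natural time
        elif m >= m_small:
            n += 1         # a small earthquake advances natural time by one
        counts.append(n)
    return counts
```

The value of n(t) just before each reset is the interval count for that cycle, which is the input a PPV calculation would need.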

The authors highlight several advantages of their approach. First, the absence of an assumed probability distribution avoids over‑fitting and makes the method robust to catalog incompleteness, provided the completeness magnitude is correctly identified. Second, PPV offers an intuitive, decision‑oriented metric that can be directly communicated to policymakers and emergency managers. Third, natural time automatically normalizes for regional variations in seismicity rates, allowing comparisons across different tectonic settings without additional scaling. Fourth, ROC analysis explicitly quantifies the trade‑off between detection sensitivity and false‑alarm rate, enabling users to select thresholds that match their risk tolerance.

Nevertheless, the paper acknowledges important limitations. The GR b‑value and the completeness magnitude M_S must be accurately estimated; errors in these parameters bias the count n(t) and consequently the PPV. ROC curves require a sufficient number of large‑event instances to be statistically reliable, which is challenging in most regions where M > M_T earthquakes are rare. PPV is inherently dependent on the prior probability of a large event, so any long‑term changes in seismicity rates (e.g., after a major sequence) necessitate recalibration. Finally, because natural time does not correspond to physical time, operational decisions that depend on calendar dates (e.g., infrastructure inspections) should be complemented with conventional time‑based analyses.
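The dependence of PPV on the prior probability follows from the standard identity linking PPV to the ROC coordinates. Writing π for the base rate of positive cases (a symbol introduced here for illustration, not taken from the paper), with TPR and FPR the true-positive and false-positive rates at a given threshold:

```latex
\mathrm{PPV}
  = \frac{\mathrm{TP}}{\mathrm{TP} + \mathrm{FP}}
  = \frac{\mathrm{TPR}\,\pi}{\mathrm{TPR}\,\pi + \mathrm{FPR}\,(1 - \pi)}
```

For a fixed point on the ROC curve, PPV therefore shifts whenever π changes, which is why a long-term change in seismicity rate calls for recalibration even if the ROC curve itself is unchanged.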

In conclusion, the study introduces a practical DIY framework for real‑time earthquake hazard assessment that leverages only publicly available seismic catalogs. By coupling the GR counting principle with ROC‑derived PPV in natural time, the method provides a model‑free, transparent estimate of the evolving probability of a large earthquake. The authors suggest future extensions such as multi‑threshold (multi‑M_S) counting, Bayesian updating of ROC curves, application to other seismically active regions (Japan, Turkey, Chile), and integration of natural‑time PPV with physics‑based stress models to enhance both short‑term forecasting and long‑term risk mitigation.
