AstuteRAG-FQA: Task-Aware Retrieval-Augmented Generation Framework for Proprietary Data Challenges in Financial Question Answering

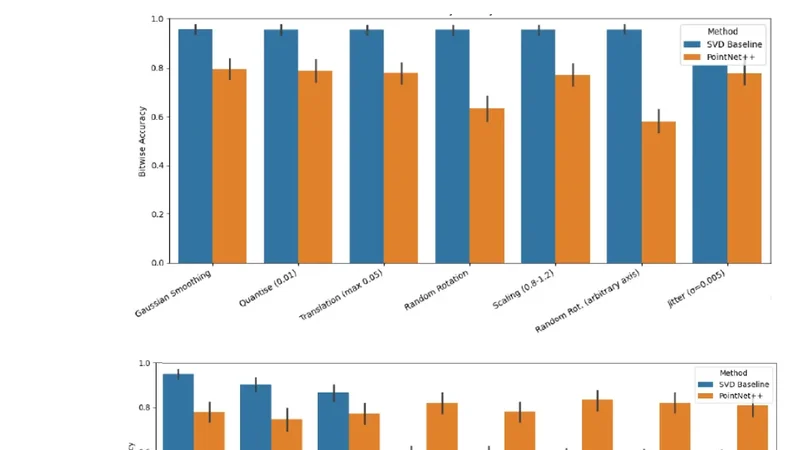

The protection of intellectual property has become critical due to the rapid growth of three-dimensional content in digital media. Unlike traditional images or videos, 3D point clouds present unique challenges for copyright enforcement, as they are especially vulnerable to a range of geometric and non-geometric attacks that can easily degrade or remove conventional watermark signals. In this paper, we address these challenges by proposing a robust deep neural watermarking framework for 3D point cloud copyright protection and ownership verification. Our approach embeds binary watermarks into the singular values of 3D point cloud blocks using a spectral decomposition, Singular Value Decomposition (SVD), and extracts them with a PointNet++ neural network. The network is trained to reliably extract watermarks even after the data undergoes attacks such as rotation, scaling, noise, cropping and signal distortions. We validated our method on the publicly available ModelNet40 dataset. For the Crop (70%) attack, the most severe geometric distortion in our experiments, deep learning-based extraction achieves a bitwise accuracy of up to 0.83 and an Intersection over Union (IoU) of 0.80, compared to a bitwise accuracy of 0.58 and an IoU of 0.26 for SVD-based extraction. This demonstrates our method's ability to achieve superior watermark recovery and maintain high fidelity even under severe distortions. Through the integration of conventional spectral methods and modern neural architectures, our hybrid approach establishes a new standard for robust and reliable copyright protection in 3D digital environments. Our work provides a promising approach to intellectual property protection in the growing 3D media sector, meeting crucial demands in gaming, virtual reality, medical imaging and digital content creation.

💡 Research Summary

The paper tackles the emerging problem of protecting intellectual property in three‑dimensional (3D) point‑cloud data, which is increasingly used in gaming, virtual reality, medical imaging, and other digital media. Unlike 2D images or videos, point clouds consist of unordered sets of spatial coordinates and are highly vulnerable to geometric attacks (rotation, scaling, cropping) and non‑geometric disturbances (noise, compression). Traditional watermarking methods that work well for raster media either fail to survive these transformations or degrade the visual fidelity of the point cloud.

To address these challenges, the authors propose a two‑stage hybrid watermarking framework that combines classical spectral techniques with modern deep learning. In the embedding stage, the point cloud is partitioned into fixed‑size blocks (e.g., 1024 points per block). Each block is represented as a matrix and subjected to Singular Value Decomposition (SVD). Binary watermark bits are embedded by subtly modifying selected singular values, a process that preserves the overall geometry and visual quality because singular values capture global structure rather than individual point positions.
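The embedding stage described above can be illustrated with a minimal NumPy sketch. The summary does not say how the singular values are modified, so this example assumes a simple quantization-index-modulation (QIM) rule applied to the largest singular value of each centered block, carrying one bit per block. The function names, the `step` parameter, and the sequential block partition are all hypothetical and are not taken from the paper.

```python
import numpy as np

def embed_bit(block, bit, step=0.05):
    """Embed one bit in the largest singular value of an (N, 3) block.

    Assumed rule (not from the paper): snap s[0] to the centre of a
    quantization bin whose index parity equals the bit.
    """
    centroid = block.mean(axis=0)
    U, s, Vt = np.linalg.svd(block - centroid, full_matrices=False)
    q = int(np.floor(s[0] / step))
    if q % 2 != bit:
        q += 1  # move to the neighbouring bin with the right parity
    s_marked = s.copy()
    s_marked[0] = (q + 0.5) * step
    # Rebuild the block from the modified singular values.
    return (U * s_marked) @ Vt + centroid

def extract_bit(block, step=0.05):
    """Classical (non-learned) decoder: read the bin parity of s[0]."""
    s = np.linalg.svd(block - block.mean(axis=0), compute_uv=False)
    return int(np.floor(s[0] / step)) % 2

def embed_watermark(points, bits, block_size=1024, step=0.05):
    """Embed bits into consecutive blocks of block_size points each."""
    marked = points.copy()
    for i, bit in enumerate(bits):
        sl = slice(i * block_size, (i + 1) * block_size)
        marked[sl] = embed_bit(points[sl], bit, step)
    return marked

def extract_watermark(points, n_bits, block_size=1024, step=0.05):
    """Read one bit back from each of the first n_bits blocks."""
    return [extract_bit(points[i * block_size:(i + 1) * block_size], step)
            for i in range(n_bits)]
```

A decoder of this kind reads the bits back exactly from an unattacked cloud, but it depends on the singular values staying nearly unchanged. That dependence is why the framework replaces it with a learned extractor.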

The extraction stage is where the novelty lies. After the watermarked point cloud has been subjected to a suite of attacks—including random rotations up to ±45°, scaling between 0.5× and 2×, Gaussian noise, and aggressive cropping up to 70%—a PointNet++ network is trained to recover the embedded bits. PointNet++ is chosen for its ability to learn hierarchical, local‑to‑global features from unordered point sets, making it robust to the very transformations that typically destroy watermark signals. The network receives the attacked blocks, processes them through set abstraction layers, and outputs a probability for each embedded bit. A combined loss function optimizes both bitwise accuracy and Intersection‑over‑Union (IoU) between predicted and ground‑truth watermark patterns, encouraging the model to learn a representation that is invariant to the applied distortions.
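The attacks used to build training data for the extractor can be sketched in NumPy as follows. The rotation, scaling, and cropping ranges follow the figures quoted above. The rotation axis, the noise level `sigma=0.01`, and the half-space cropping rule are assumptions made for illustration, and all function names are hypothetical.

```python
import numpy as np

def rotate_z(points, degrees):
    """Rotate an (N, 3) cloud about the z axis (axis choice is assumed)."""
    theta = np.deg2rad(degrees)
    c, s = np.cos(theta), np.sin(theta)
    R = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    return points @ R.T

def scale(points, factor):
    """Uniformly scale the cloud about the origin."""
    return points * factor

def add_noise(points, sigma, rng):
    """Add zero-mean Gaussian jitter to every coordinate."""
    return points + rng.normal(scale=sigma, size=points.shape)

def crop(points, fraction):
    """Drop the given fraction of points with the smallest x coordinate."""
    n_remove = int(round(fraction * len(points)))
    order = np.argsort(points[:, 0])
    # Keep the survivors in their original order.
    return points[np.sort(order[n_remove:])]

def random_attack(points, rng):
    """Apply one randomly chosen attack, e.g. per training sample."""
    kind = rng.integers(4)
    if kind == 0:
        return rotate_z(points, rng.uniform(-45.0, 45.0))
    if kind == 1:
        return scale(points, rng.uniform(0.5, 2.0))
    if kind == 2:
        return add_noise(points, 0.01, rng)
    return crop(points, rng.uniform(0.0, 0.7))
```

In an attack-aware training loop, a function like `random_attack` would be applied to each watermarked block before it is passed to the network, with the original bits kept as the target.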

Experiments are conducted on the publicly available ModelNet40 dataset. Watermarks of 10% of the total bit length are embedded, and performance is measured across seven attack scenarios. For the most severe geometric distortion (70% cropping), the deep‑learning extractor achieves a bitwise accuracy of 0.83 and an IoU of 0.80, whereas a pure SVD‑based decoder attains only 0.58 accuracy and 0.26 IoU. Under milder transformations (rotation, scaling, noise), the deep model matches or slightly exceeds the SVD baseline, which suggests that the learned features do not overfit to a single attack type but generalize across a spectrum of perturbations. Visual inspection shows that the watermark insertion does not produce perceptible artifacts, preserving the usability of the point cloud for downstream applications.
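The two reported metrics can be computed as in the sketch below. The summary does not give the paper's exact IoU definition, so this example assumes the common set-based form, in which the sets are the positions of the 1-bits in the predicted and ground-truth watermarks. The function names are hypothetical.

```python
import numpy as np

def bit_accuracy(pred, target):
    """Fraction of bit positions where prediction and target agree."""
    pred, target = np.asarray(pred), np.asarray(target)
    return float(np.mean(pred == target))

def watermark_iou(pred, target):
    """IoU over 1-bit positions (assumed definition, see lead-in)."""
    pred = np.asarray(pred).astype(bool)
    target = np.asarray(target).astype(bool)
    union = np.logical_or(pred, target).sum()
    if union == 0:
        return 1.0  # both watermarks are all zeros
    return float(np.logical_and(pred, target).sum() / union)

target = [1, 0, 1, 1, 0, 0, 1, 0]
pred = [1, 0, 0, 1, 0, 1, 1, 0]
print(bit_accuracy(pred, target))   # 6 of 8 bits agree -> 0.75
print(watermark_iou(pred, target))  # 3 shared ones / 5 in union -> 0.6
```

Under this definition, agreement on 0-bits counts toward accuracy but not toward IoU, so IoU can fall well below bitwise accuracy. This is consistent with the gap reported for the SVD baseline (0.58 versus 0.26).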

The contributions of the work can be summarized as follows:

- A hybrid watermarking pipeline that leverages the stability of singular values for embedding while exploiting the representational power of PointNet++ for extraction.

- A comprehensive attack‑aware training regime that equips the extractor with resilience to both geometric and non‑geometric degradations.

- Empirical evidence that deep learning‑based extraction dramatically outperforms traditional spectral decoding, especially under severe cropping and composite attacks.

- Demonstration of practical feasibility on a standard benchmark, suggesting immediate applicability to real‑world 3D content pipelines.

In conclusion, by integrating conventional spectral decomposition with state‑of‑the‑art point‑cloud neural networks, the proposed framework sets a new benchmark for robust, high‑fidelity watermark recovery in 3D digital environments. Future directions include real‑time embedding/extraction, extension to other point‑cloud formats (e.g., LiDAR scans), and large‑scale deployment in industry settings where copyright enforcement is becoming a critical concern.

📜 Original Paper Content
